Mark Frazier

5.7K posts

@openworld

President, Openworld. Coauthor of Founding Startup Societies https://t.co/cbOSibJYk0. Helping to seed and spread voluntary communities.

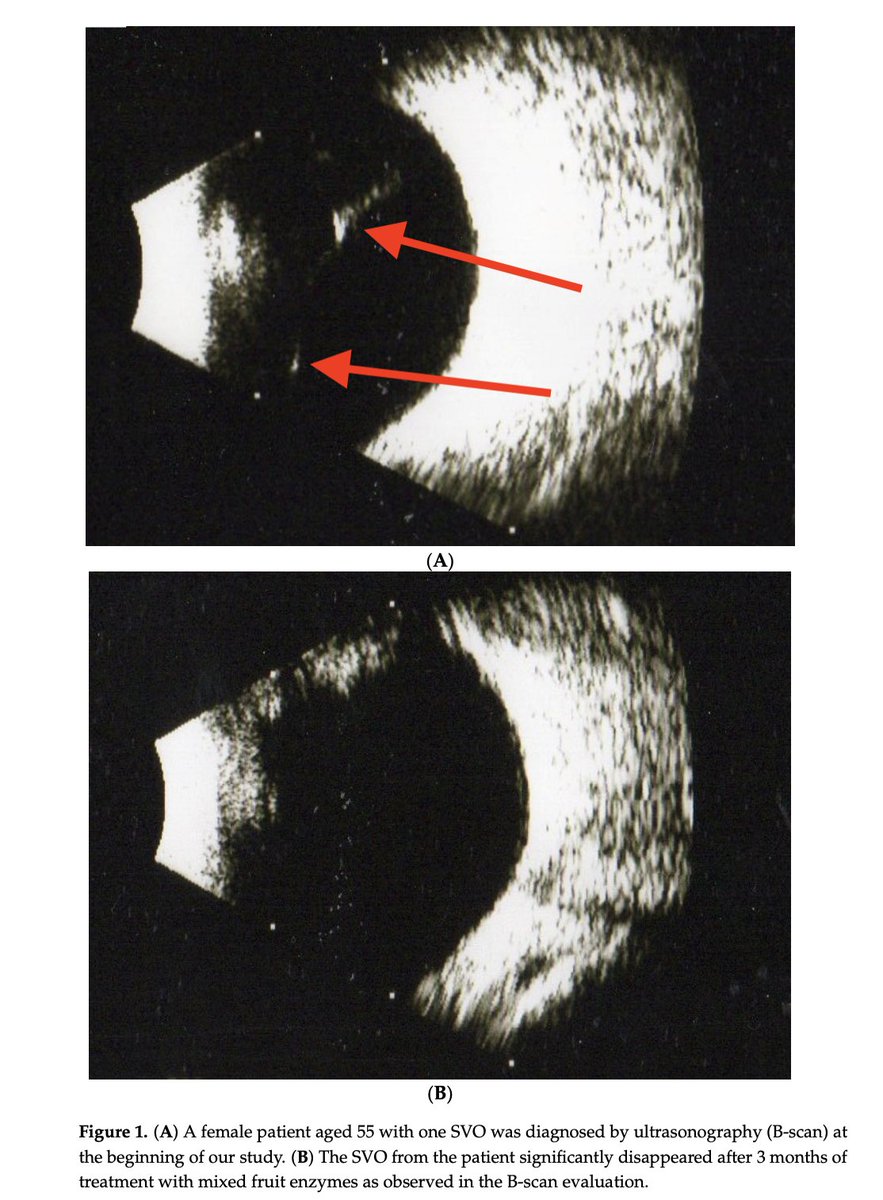

Eye floaters disappear within 3 months of taking fruit enzymes. The enzymes contained in pineapple, papaya and fig:

⬩ 190 mg bromelain
⬩ 95 mg papain
⬩ 95 mg ficin

Literally dissolve the floaters away.

your gentle reminder… there are like zero economists or ppl in general who know how to reason about what happens when near zero cost >human level intelligence gets woven into the fabric of the economy at scale this fast. this scenario has never remotely been in the possibility space of econ textbooks or any theory. when cognition starts behaving like a commodity & the environment turns structurally deflationary no one actually knows what happens. kinda like no “expert” really understood a novel virus like covid.

Remember the freakout when a supercomputer beat the best chess player in the world and everyone declared the game over, and then everyone forgot about it (and chess-playing computers) and chess became more popular than ever and inspired a hit Netflix show? Man vs. machine is fleetingly interesting but machine vs. machine is boring and pointless: nobody cares about interacting machines because there’s no human soul in the mix with emotions and moral agency. It’s just slop squared. The only machine chatter anyone will care about (and then only indirectly) will be that between agents booking your flights, buying your groceries, etc. And it’ll be about as interesting as TCP/IP to most people. AI actually puts the focus on the human more, not less.