Pinned Tweet

Prophit Crypto

246 posts

@prophit_crypto

Debunking the bubble

Blockchain · Joined January 2018

1.5K Following · 88 Followers

@TateTheTalisman At least you believe South Africa shouldn't have any white leadership

@blknoiz06 Industry on HBO Max

Tokyo Vice on HBO Max

@Counterpart_STZ on Starz

@angeloinchina @RealAlexJones He's right. He means capitalism for those in power and communism for the rest

Alex, there is no more capitalist than Soros. Stop calling him communist, it is an insult to the intelligence of your followers and you know it.

And by the way, try to study communism a bit rather than regurgitating mainstream talking points. Calling whatever you dislike communist looks like a Pavlovian reaction. It is childish.

The communist funded by George Soros are looking for a confrontation. People should not instigate or initiate any violence towards them. I have been warning for over a year that the deep state is openly planning to instigate civil unrest nationwide once President Trump is reelected. What we are witnessing now is the slow burn leading to the big events to come in November. The globalist agenda is failing badly. The establishment is betting everything on triggering a Civil War in America.

I would like to thank everyone for your care and support, be it writing letters, showing support on X, or in any other form. They all mean a lot to me and keep me strong. I will do my time, conclude this phase and focus on the next chapter of my life (education).

I will remain a passive investor (and holder) in crypto. Our industry has entered a new phase. Compliance is super important.

A silver lining of this whole process is that Binance has been under the microscope. And funds are SAFU.

Protect users!

Prophit Crypto retweeted

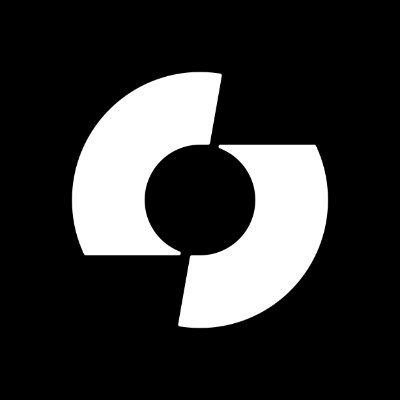

Long Live Points, Long Live Snapshots.

The INTENTional Campaign (7%) is LIVE.

$APTR #INTENTS

@KIPprotocol @Ins_Penguin @CryptoJune777 @fartconthird @ItsAverageJO @btcliverpool @whalepoon EZAY2

BCO1Z

@lisa_k_h @Cancelcloco The why is simple... money, power, control

@Cancelcloco Most people can’t get their minds around the “why” part of all of this. Can’t wait for your next video.

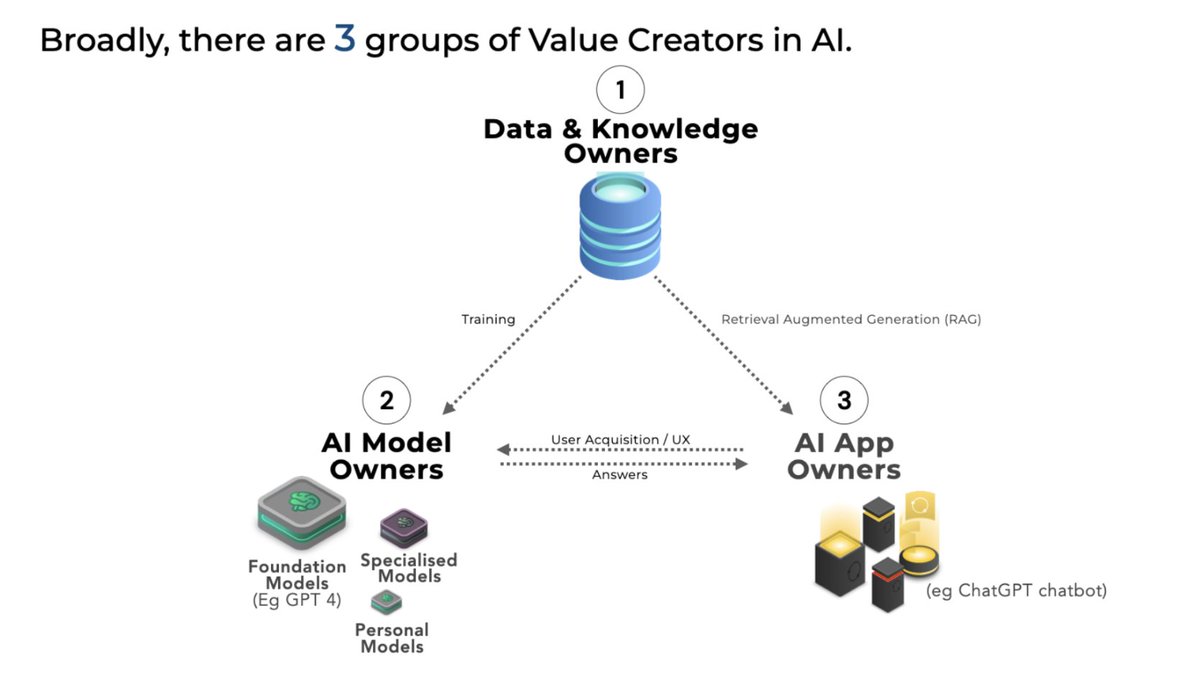

KIP Explainer Series: #2

What is RAG, and Why is KIP decentralizing it?

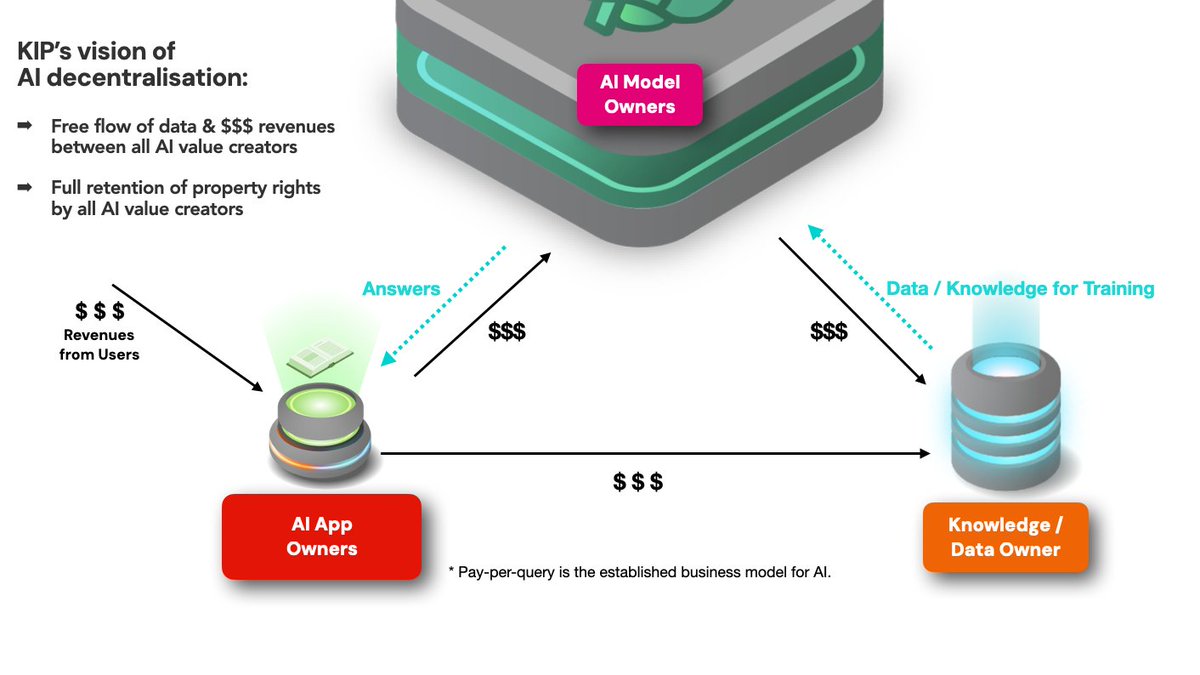

TLDR: RAG is an innovative technique used in Generative AI, involving 3 key Value Creators in AI (app owners 📱, model owners 🤖, data owners 📚).

KIP's successful decentralisation of RAG essentially gives a framework for the decentralisation of all of AI, and is a necessary first step to fight encroaching AI Monopolies.

1⃣ RAG IN A NUTSHELL

AI models are trained by feeding in data. They learn from the data, adjusting their internal weights to recognize patterns, enabling them to make predictions or decisions based on new data. The model can then answer user queries from its newly-gained "native" knowledge.

But this training process requires the entire dataset to be exposed to the model, and essentially results in the data being 'absorbed' into the model. If the data includes confidential or copyrighted information, there's a risk that the model will spit that information out verbatim at some point in the future.

So what if you don't wish to put your data at risk?

That's where Retrieval-Augmented Generation, or RAG comes in.

RAG is a technique that enables an AI model to generate answers it does not natively know, by retrieving data and information from the external knowledge bases and databases it is given access to.

It's like an intelligent assistant who does not know the answer to your question, but is able to expertly research to find the answer from external data sources.

1. User Query Input:

The process begins with a user posing a question or query to a chatbot running a RAG system.

For example, "What are the symptoms of COVID-19?"

2. Retrieval from External Databases:

The model initiates the retrieval phase by searching through linked external knowledge bases & databases, such as medical journals, health websites, and clinical databases, to retrieve only relevant chunks of data and info related to the user query.

3. Data Processing, Filtering and Generation:

Retrieved data undergoes processing and filtering to extract key information and eliminate irrelevant data points. The AI model synthesizes the retrieved data with contextual cues from the user query to generate a response.

In the case of the COVID-19 symptoms query, RAG might generate a response listing common symptoms such as fever, cough, and shortness of breath, but also potentially including information from the latest medical research papers that was not available when the model was trained, resulting in a higher-quality response.

4. Response Delivery:

The generated response is presented to the user via the chatbot interface.

Thus, RAG allows external data to be used to answer AI queries without needing that data to be "absorbed" first by a model through the training process.
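The four steps above can be sketched in a few lines of Python. This is only a minimal illustration, not KIP's implementation: the knowledge base contents, the bag-of-words cosine similarity used for retrieval, and the `generate` stub standing in for a real model call are all assumptions made for the example.

```python
import math
import re
from collections import Counter

# Hypothetical external knowledge base (placeholder snippets, not real sources).
KNOWLEDGE_BASE = [
    "Common COVID-19 symptoms include fever, cough, and shortness of breath.",
    "Retrieval-Augmented Generation retrieves external documents at query time.",
    "ERC-3525 defines a semi-fungible token standard.",
]

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, docs, k=1):
    """Step 2: return the k chunks most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(docs, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]

def build_prompt(query, chunks):
    """Step 3a: combine the retrieved chunks with the user query."""
    context = "\n".join(f"- {c}" for c in chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

def generate(prompt):
    """Step 3b: stand-in for a real model call (assumption for this sketch)."""
    return f"[model answer based on a prompt of {len(prompt)} characters]"

def answer(query):
    """Steps 1 to 4: take a query, retrieve, generate, and return the response."""
    chunks = retrieve(query, KNOWLEDGE_BASE)
    return generate(build_prompt(query, chunks))
```

Calling `answer("What are the symptoms of COVID-19?")` retrieves the symptoms snippet and passes it to the model stub. The knowledge base is only read at query time and is never used to adjust model weights.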

RAG techniques are getting more sophisticated all the time, and in our research paper here, we show that the quality of answers under RAG can outperform that of trained models. arxiv.org/pdf/2311.05903…

2⃣ IMPORTANCE OF RAG

RAG is going to become increasingly important because:

1. Model training is a highly technical and specialised activity, and often very expensive to do - not everyone will have the necessary skillsets or resources to be able to train models.

2. There is a lot of data (confidential, proprietary etc.) whose owners may never feel comfortable exposing it fully to models they don't fully own or control.

One important point you may also have noticed is:

Under a RAG framework, app owners 📱, model owners 🤖 and data owners 📚 work together and each contribute to the answering of user queries.

Thus, in an equitable state of affairs, each party should be fairly compensated for their contributions.

But there is currently no easy way to do this without compromising each party's independence or ownership rights. (Incidentally, this problem is exactly what prompted us to start building KIP, more than a year ago.)

This is the "money problem".

3⃣ "THE MONEY PROBLEM" WITH RAG & CENTRALISED AI

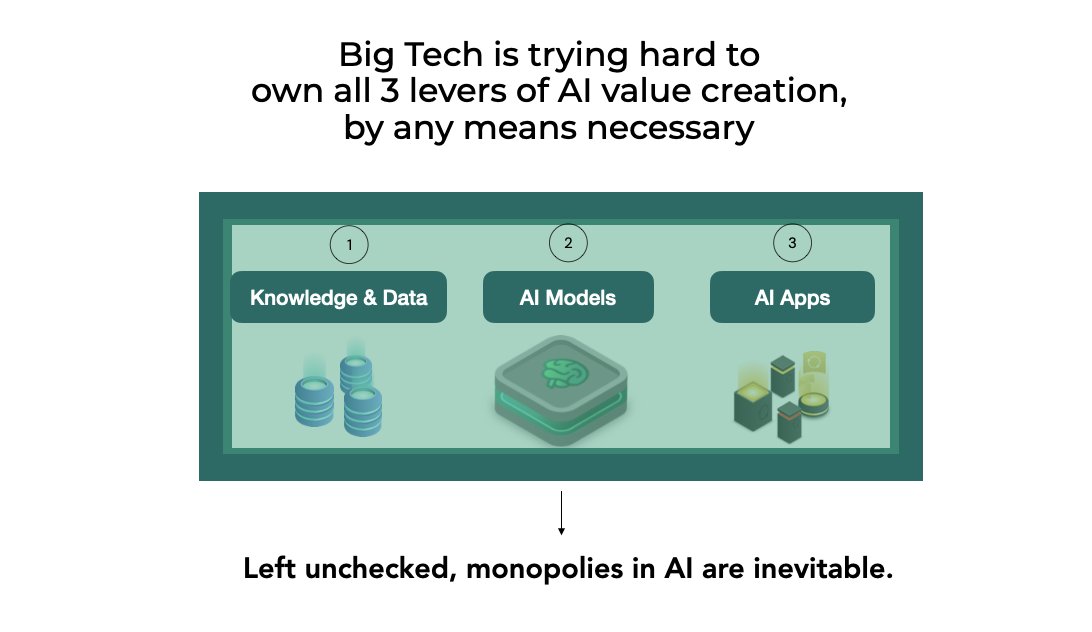

Let's imagine a situation where one entity owns all three levers of AI value creation: there's no need to split payments collected from the users between the parties, as it's just internal accounting.

But the flipside of that is: if we are not ok with ONE ENTITY OWNING ALL 3 LEVERS OF AI VALUE CREATION ( 📱, 🤖 , 📚 ), we must solve the issue of how to split money between the different industries of AI Value Creators.

Without solving "The Money Problem", ( 📱, 🤖 , 📚 ) cannot each maintain their independence and freedom to trade.

And a monopoly is already forming right now.

Here's our opinion on how the monopolistic battleplan of OpenAI will work:

- OpenAI obviously has some of the most powerful models - closed-source models like GPT-4, which were trained using our collective knowledge as published and scraped from the open internet over many years. That powers their apps like ChatGPT, and the user-made GPTs.

- Via their Copyright Shield - that is, their commitment to pay the legal fees of anyone found to be uploading copyrighted data to their platform - they embolden and encourage their users to upload data to their closed platform without fearing legal consequences.

- Given that OpenAI is a centralised, closed-source web2 platform, we should ask ourselves: does the data uploaded by users - whether to ChatGPT or the GPTs apps - still belong to the uploaders?

- So with their existing models, unapologetic scraping of any and all data, Copyright Shield, and their huge war-chest, you have probably the most voracious data vacuum cleaner ever created, sucking in data and resources to feed their models.

Put all the above together (and their 7 tttttrillion dollar raise for hardware) and it's not difficult to see that total monopolisation of AI development by one or a few companies will be inevitable, unless something is done.

For reasons we've already shared, we passionately believe that AI monopolisation is bad for humanity, and are actively fighting against it.

4⃣ THE SIGNIFICANCE OF DECENTRALISING RAG

RAG involves all 3 core levers of AI value creation ( 📱, 🤖 , 📚 ).

By building a framework for decentralising RAG, KIP essentially builds a framework for decentralising control over value creation in AI, giving all value creators a level playing field on which to fight AI monopolies.

We allow AI to function efficiently as a collaborative effort involving millions of small- and large-scale creators, without the need for one huge company to coordinate each of the core functions.

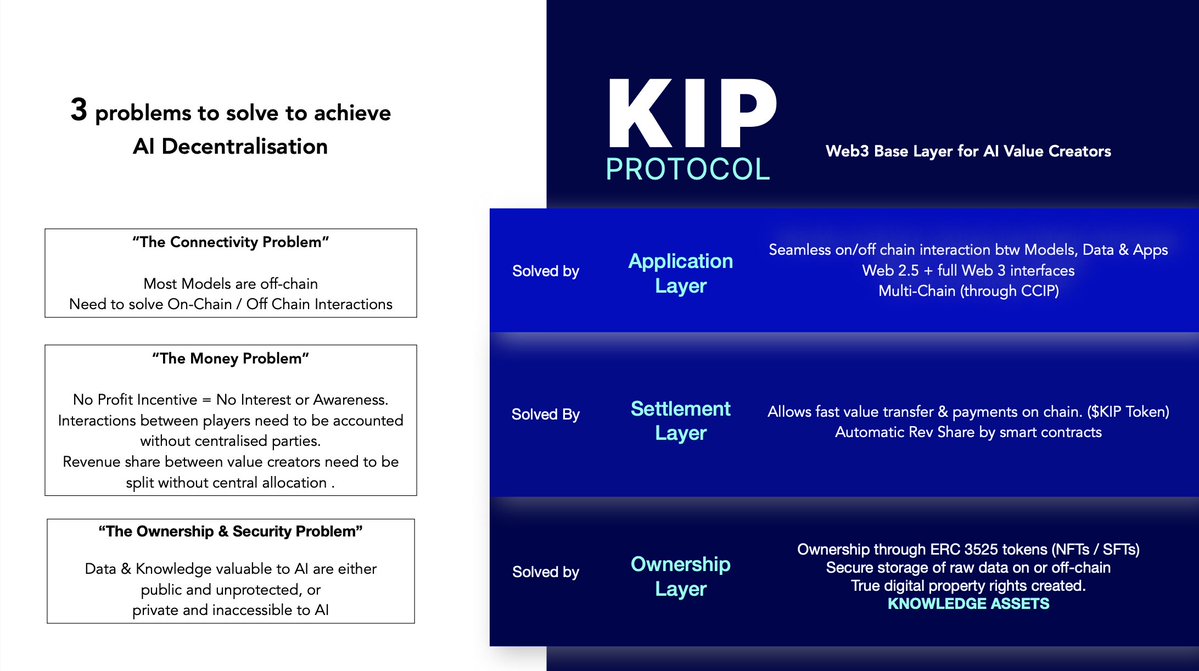

We will do that by first solving 3 base level problems that have been a barrier to the decentralisation of RAG:

1. Ownership: Ensuring that ( 📱, 🤖 , 📚 ) can publish easily and securely to web3, creating their web3 "trading entity" in the form of ERC-3525 Semi-Fungible Tokens, thus enabling them to prove their digital property rights on chain.

2. Connectivity: Ensuring smooth off-chain and on-chain interactions, providing an open environment for 📱, 🤖 , 📚 to connect to each other easily and freely.

3. Monetisation: Providing a common framework for recording & accounting for the contributions of each AI Value Creator, as well as automated revenue sharing and withdrawals.

By bringing about decentralised RAG (d/RAG), KIP is crafting the first crucial blueprint for fighting AI Monopolies.

Unlocking digital property rights for each AI value creator, and empowering each to transact while remaining independent, is the exact opposite of what Big Tech is trying to achieve.

KIP Protocol arms AI Value Creators with the weapons ⚔️ necessary to fight the monopolists in AI.

KIP Explainer Series: #1

Why is KIP the Web3 Base Layer for AI?

First off: KIP is not a single AI app, nor a large language model, nor a database / knowledge base.

KIP is a decentralized protocol that AI app owners 📱, model owners🤖 and knowledge base / data owners📚 will find essential to decentralise their work & monetise in Web3.

(For brevity, we call these 3 categories 📱, 🤖, 📚 : AI Value Creators)

Decentralisation of AI is an extremely large & important topic, and there are multiple groundbreaking projects taking different approaches at the problem.

For us at KIP, we are focused on solving the base level problems that AI Value Creators will face when trying to deploy and monetise their work in Web3.

AI MODELS 🤖 NEED APPS 📱& DATA 📚 TO CREATE ECONOMIC VALUE

While there are easily over 20 categories of companies creating solutions in AI, most of the attention in Generative AI over the past year has been on the AI Models (and there is a huge variety of different categories and approaches here, from transformers to GANs to diffusion models, to name a few).

Indeed, these models represent the true breakthrough of this new era of computing—the true Brains behind it all.

But to build a business ecosystem within AI, models need to rely on at least 2 other essential Value Creators.

1) AI Apps📱: 'The Face of AI'

It's easy to overlook the importance of apps amidst all the excitement over models.

AI Apps are essential for getting users into AI. Apps can take many forms, like chatbots, image generators, search bots, analysis bots or, in their most elegant form, simply prompts.

They craft the user experience, acquire the users, and perhaps most importantly, collect the fees from the users.

Many forget that ChatGPT is OpenAI’s app, powered by OpenAI’s various models (GPT 3.5, GPT 4). The then ground-breaking human-like responses of the OpenAI chatbot were largely coded on the app-end, not the model end. (Connecting to the models directly via API, and comparing the answers, will tell you this.)

TLDR: without Apps, models would just be sets of code and weights sitting in a metal box somewhere with no way to utilise them.

2) Data📚: 'The Lifeblood of AI'

Data is needed for:

a) Training & Fine Tuning Models, &

b) Retrieval-Augmented Generation (RAG)

All models are trained and fine-tuned on data. Without fine-tuning, models don't get stronger or smarter.

But using data to fine-tune or train models causes the data to be essentially 'assimilated' or 'absorbed' into the models, manifested in the adjustment of the model weights.

So in situations where it is impossible, impractical, or illegal to just use data to directly train the models, the innovative technique called Retrieval-Augmented Generation (RAG) steps up to deliver.

RAG combines the power of retrieving information from external databases with the capability of generating responses via an AI Model. It's like having a super-smart assistant who understands your questions but also knows where to find the answers even if it doesn't know the answer itself.

While RAG is still relatively new, it’s our firm belief that given increased sensitivity and protectionism over data, RAG techniques could emerge as a leading approach, driving significant business value through real-world applications, and making it a dominant framework under which most people access AI in the future.

TLDR: Regardless of which approach, continued AI innovation is simply not possible without data.

A VIBRANT AI ECOSYSTEM REQUIRES MULTIPLE INDEPENDENT INDUSTRIES OF VALUE CREATORS

Individuals and companies skilled at training and fine-tuning models may not be the same people who are great at designing and marketing customer-facing apps.

Similarly, the researchers and domain experts with valuable data sets and knowledge bases may not have the right skill sets to train AI models or design applications.

But in a vibrant and diverse ecosystem, they don’t have to. Separate industries of companies and individuals can work together to create use cases and economic value for users.

An app designer can choose the AI model most suitable for his product plans, and pre-select the external knowledge bases most helpful for his users.

But what if all 3 potentially vibrant and independent industries are being slowly absorbed into one closed ecosystem?

Because that’s exactly what’s happening right now. We will cover this in detail in future articles, but for now: do a web-search for "openai copyright shield" and consider the implications for data ownership in the AI future.

WHY KIP WANTS TO CATALYSE AI DECENTRALISATION

Monopolies in AI are uniquely dangerous, and decentralisation of AI is an urgent and necessary response to the subjugation of our collective interests to that of a narrow set of corporate interests.

We are 100% for AI accelerationism (e/acc), and we do not deny the significant contributions of Big Tech in advancing AI innovation.

But large companies will act only in the best interests of their shareholders, and they will do whatever they can get away with. It’s the nature of capitalism; to expect them to change their nature and ignore their driving motivations is to deny reality.

We need a state of adversarial equilibrium in AI, with a multitude of different actors participating and competing in the market, fostering an environment where innovation can thrive. The future of AI must not become subjugated to any megacorp’s corporate interests.

And decentralization of AI is, in our opinion, THE ONLY WAY to bring about that desired state.

HOW KIP CATALYSES DECENTRALISATION OF AI

KIP solves three base level problems that AI apps, models, and data owners will face when trying to decentralize.

1) On-chain / Off-Chain Connectivity

2) Monetisation & Accounting

3) Ownership & Security

The “Connectivity” Problem

There are > 400,000 models on Hugging Face, which is indicative of how vibrant, but also how nascent the entire AI industry really is.

Current blockchain technology cannot deliver the core inference function of models (i.e. totally decentralised models) at a cost or speed that most ordinary users would find acceptable (although advances in edge computing may get us there soon).

Thus most, if not all, of these models are off-chain, and we can expect more innovation and tinkering to be done in off-chain models.

In order to unleash all those ideas and innovation in web3, KIP makes it easy to account for off-chain inference, on-chain.

KIP facilitates this through a framework that allows the heavy computational tasks associated with machine learning inference to be processed outside the blockchain, while still maintaining the integrity and principles of a decentralized system.

The “Money” Problem

The best technology in the world would not see adoption if adopters do not enjoy increased economic benefits.

The basic revenue model for AI can be described as “pay-per-query”: every single query from a user expends GPU compute, and thus has to be paid for by someone. And to answer a single user query, multiple AI value creators each contribute.

We are not advocating decentralization merely for decentralization’s sake, but rather decentralization as an alternative to monopolization.

Thus, for AI decentralisation to succeed, we need to ensure that the parties who are decentralizing their AI work are able to earn revenues.

This sounds obvious enough, but in the case of AI, this is not as straightforward as it sounds.

Let’s give an example of a query run via RAG:

1. A user makes a query to an AI Chatbot.

2. The AI Chatbot passes the query over to its brain, the AI model.

3. The model retrieves only relevant chunks of data from the Knowledge Base it requires to answer the question, formulates the answer and sends it back to the App.

4. The App packages the answer and delivers it back to the user.

In this simplified example, you will see how all 3 actors each contribute towards the answering of the user query.

If one platform owns and controls all three (🤖,📱,📚) under a centralized ecosystem (like what OpenAI is trying to do in the 2nd diagram above), then you just have to pay that one centralised platform, as the rest is internal accounting.

But if we want decentralization rather than monopolization, then each party needs to be paid, thus requiring solutions for:

1. Recording (on-chain) the contributions of each,

2. Splitting / allocating the revenues from the users

3. Enabling each to withdraw their revenues

This is the “money problem” in decentralizing AI that KIP solves.

We do this through a low gas, high efficiency Web3 infrastructure that provides for connectivity between AI value creators, a way to collect payments from the user, and a way to withdraw earnings. (We will cover this in an upcoming KIP Explainer)

Without solving the money problem first, decentralization of AI will be much more difficult, and will be far less likely to gain widespread adoption beyond a few true believers.
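The three requirements listed above (recording contributions, splitting revenues, enabling withdrawals) can be illustrated with a small in-memory ledger. This is a hedged sketch only: the `Ledger` class, the basis-point split in `SPLIT_BPS`, and the rule that rounding remainders go to the app owner are all hypothetical choices for the example, not KIP's actual on-chain logic.

```python
from collections import defaultdict

# Hypothetical revenue split in basis points (1/100 of a percent); must sum to 10_000.
SPLIT_BPS = {"app": 3000, "model": 4000, "data": 3000}

class Ledger:
    """In-memory stand-in for on-chain accounting (assumption for this sketch)."""

    def __init__(self):
        self.log = []                     # 1. record of each query's contributions
        self.balances = defaultdict(int)  # withdrawable balance per owner

    def record_query(self, fee, contributors):
        """Split `fee` (integer, smallest currency unit) across role -> owner."""
        if set(contributors) != set(SPLIT_BPS):
            raise ValueError("contributors must name an app, a model and a data owner")
        shares = defaultdict(int)
        paid = 0
        for role, owner in contributors.items():
            share = fee * SPLIT_BPS[role] // 10_000  # 2. integer split, rounds down
            shares[owner] += share
            paid += share
        # Any rounding remainder goes to the app owner (arbitrary choice here).
        shares[contributors["app"]] += fee - paid
        self.log.append({"fee": fee, "shares": dict(shares)})
        for owner, amount in shares.items():
            self.balances[owner] += amount

    def withdraw(self, owner):
        """3. Pay out and reset the owner's accumulated balance."""
        amount = self.balances[owner]
        self.balances[owner] = 0
        return amount
```

For example, after `ledger.record_query(101, {"app": "alice", "model": "bob", "data": "carol"})`, each owner holds a share of the fee and can call `ledger.withdraw(...)` independently. Integer arithmetic is used so that the shares always add up exactly to the fee collected.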

The “Ownership” Problem

Monetisation is merely a weak privilege, if it is not tied to true ownership.

We have all seen how accounts on centralized platforms can be shut down, banned, or shadowbanned at a moment’s notice.

KIP solves this through using blockchain tokens, specifically the ERC-3525 token (SFTs), to “wrap” the work of the AI Value Creator.

1. For data owners: the SFTs wrap vectorised knowledge bases, or a link to an encrypted raw data file to be used for model training.

2. For model makers: the SFTs could wrap an API to an off-chain model, or a set of model weights ready for sale.

3. For app devs: the SFTs could wrap the front end APIs, or the prompt itself.

These SFTs serve as 'accounting entities' that can interact with each other on-chain, and record the amounts each SFT earned from a particular transaction.
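To make the idea of an "accounting entity" concrete, here is a minimal off-chain sketch of what such a wrapper might track. It does not reproduce the ERC-3525 interface or KIP's contracts; the `WrappedAsset` class, its fields, and the placeholder `payload_ref` values are assumptions introduced purely for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum

class Kind(Enum):
    """The three categories of AI Value Creator described above."""
    DATA = "data"
    MODEL = "model"
    APP = "app"

@dataclass
class WrappedAsset:
    """Hypothetical stand-in for an SFT that wraps a creator's work."""
    token_id: int
    kind: Kind
    owner: str
    payload_ref: str  # e.g. link to an encrypted file, a model API, or a prompt
    earnings: dict = field(default_factory=dict)  # transaction id -> amount earned

    def credit(self, tx_id, amount):
        """Record the amount this asset earned from one transaction."""
        if amount < 0:
            raise ValueError("amount must be non-negative")
        self.earnings[tx_id] = self.earnings.get(tx_id, 0) + amount

    def total_earned(self):
        """Sum of everything recorded against this asset."""
        return sum(self.earnings.values())
```

A knowledge base could then be represented as `WrappedAsset(1, Kind.DATA, "carol", "example://encrypted-file")` and credited once per query it helps answer, which keeps a per-transaction record tied to the asset rather than to a platform account.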

By solving these problems, KIP makes it possible and easy for AI Value Creators to decentralize their work, creating the starting conditions for a vibrant and much larger decentralised AI ecosystem.

KIP is the necessary Web3 Base Layer for AI.

@VonIvanov @DreadBong0 C'mon mate, the game is the game. Someone makes a call, we decide to follow or not. It's completely in our hands. If someone makes terrible calls, stop following. This year Dread has hit winners, they may not stay winners for long but like I said, it's the game.

@prophit_crypto @DreadBong0 We have “crypto boys” that had 2 million followers for a “good reason” and they have been arrested. That’s not the point. The real argument is about responsibility and accountability.

When I first invested in $TAO under $10 there were not even 1,000 holders..

There was a smart core group of people who truly understood #Bittensor and what they were investing in

Now we have over 45,000 holders in $TAO..

But.. I'm not sure the number of educated holders has grown much

Those OGs understand this is a long, arduous road with many hurdles to overcome

They also comprehend that the reward lies in holding a tokenized piece of the neural internet

A priceless commitment, akin to its weight in gold..

Contributing to the restoration of power and control to the people

$TAO

@DreadBong0 @VonIvanov Ignore him @DreadBong0, you hit a 100k for good reason. Keep posting, we're reading!

@VonIvanov Dude.. just unfollow

I also haven't forgot my shitty investments.. I'm always honest about them

I ain't making no one invest in anything.. I talk about shit I like because its MY twitter

I can talk about what the hell I want

So either unfollow or just shut the fuck up 👍

@Watch_LFC If they had nothing to hide then they would release it straight away.

Prophit Crypto retweeted

@NFT_GOD Thanks Alex, my bookmarks are full of your posts!

When writing long form posts does it matter if you cut and paste from a doc or is it better to write directly on X?

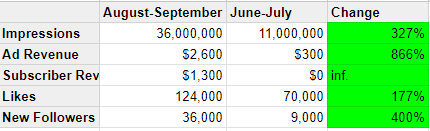

I've been running an experiment on the X algo the last 2 months

It has resulted in:

• 36 million impressions

• $2,600 of ad revenue

• $1,300 of subscriber revenue (just 1 week)

• 124,000 likes

• 36,000 new followers

And all I had to change was a few small things:

1. All of my posts (minus 2) were long form posts

2. I included multimedia (specifically short videos) in as many posts as possible

3. I spent a majority of my time replying to an X List of accounts I want to network with

4. I ran/talked on 2 Spaces a week

That's it. Those were the changes.

All those metrics I listed are at least triple the metrics from the previous 2 month period

Here's why they worked:

1. Long form posts get people reading longer. Longer time on content = algo pushes your content more

2. Multimedia not only makes your content better, but keeps them on the content even longer. Once again, more time on content = more impressions

3. Hammering a List of accounts I wanted to network with made me a lot of new friends. Friends I now mutually engage with daily

4. Spaces are the biggest growth event you can have. Every Space I ran got me 1000+ new followers.

BONUS: I ran a subscriber only space every week, which built me incredibly strong connections with my core audience. I'm now engaging with a lot of them every day.

These aren't major changes honestly.

These are minor tweaks that got people spending WAY more time on my content

There's no excuse for you not implementing these either

And this isn't just about "boosting metrics"

This is about expanding your network, building new relationships, and unlocking opportunities that didn't exist for you before.

Try these out and let me know what you think.
