Arvind Narayanan

13.1K posts

@random_walker

Princeton CS prof and Director @PrincetonCITP. Coauthor of "AI Snake Oil" and "AI as Normal Technology". https://t.co/ZwebetjZ4n Views mine.

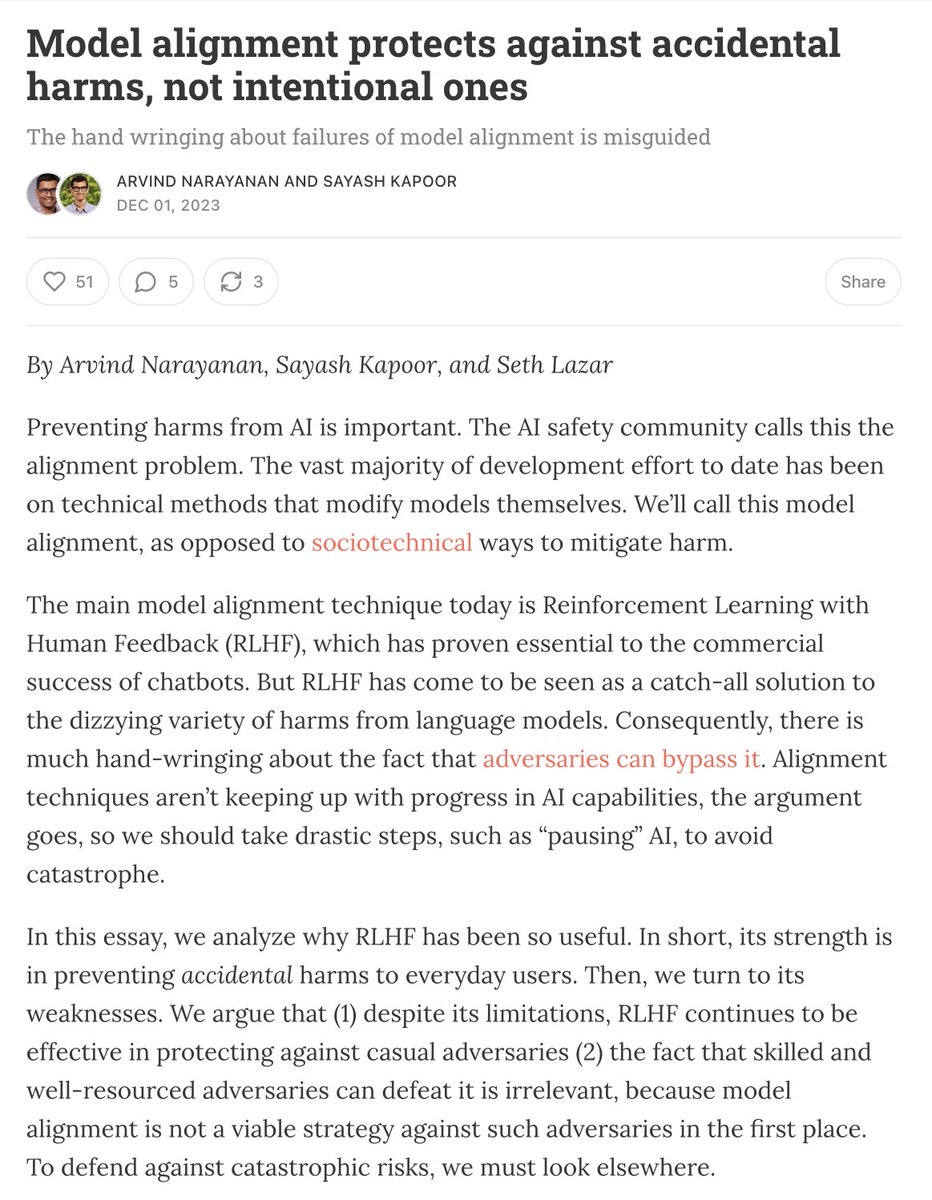

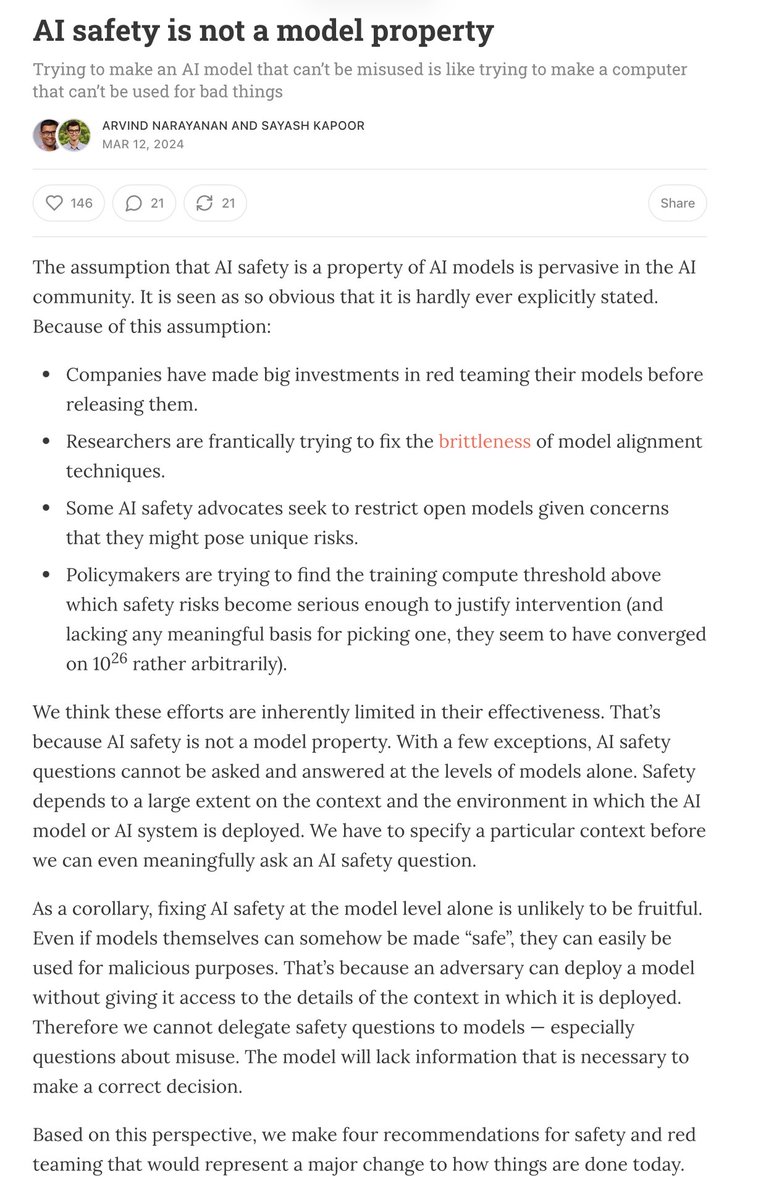

As I’ve said before, I think this raises deep questions about the theory of behavior that underpins the existing approach to AI safety. Is the idea to deter the typical user who isn’t very determined and might not know Pliny exists? Or is the idea to prevent worst-case outcomes, in which case Pliny’s continued success means the whole apparatus doesn’t yet work? I’m not super deep on the safety world and am trying to recover its first principles as I go.

@sayashk @random_walker They only have PhD students to do work? I would have thought that training successors would be important in and of itself 🫠

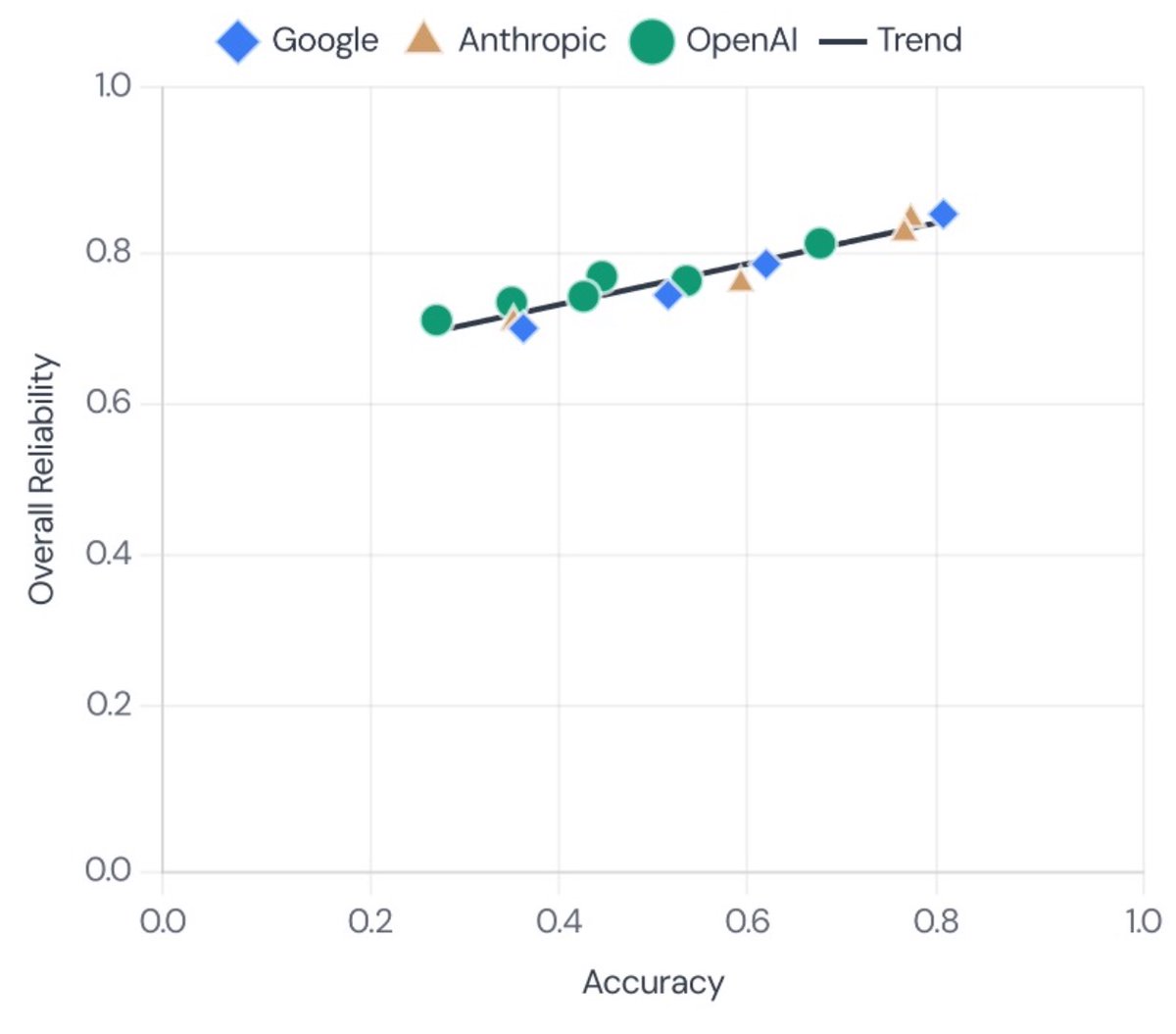

Hey @METR_Evals—love your work, but we think it's the *metric* that's saturated, not the task suite. For example, despite rapid gains in accuracy, we found limited gains in reliability. We'd love to work together to see if this holds up on the time-horizon task suite.

We estimate that Claude Opus 4.6 has a 50%-time-horizon of around 14.5 hours (95% CI of 6 hrs to 98 hrs) on software tasks. While this is the highest point estimate we’ve reported, this measurement is extremely noisy because our current task suite is nearly saturated.

new @METR_Evals research note from @whitfill_parker, @cherylwoooo, nate rush, and me. (chiefly parker!) we find that *half* of SWE-bench Verified solutions from Sonnet 3.5-to-4.5 generation AIs *which are graded as passing* are rejected by project maintainers.