Arvind Narayanan

13.1K posts

@random_walker

Princeton CS prof and Director @PrincetonCITP. Coauthor of "AI Snake Oil" and "AI as Normal Technology". https://t.co/ZwebetjZ4n Views mine.

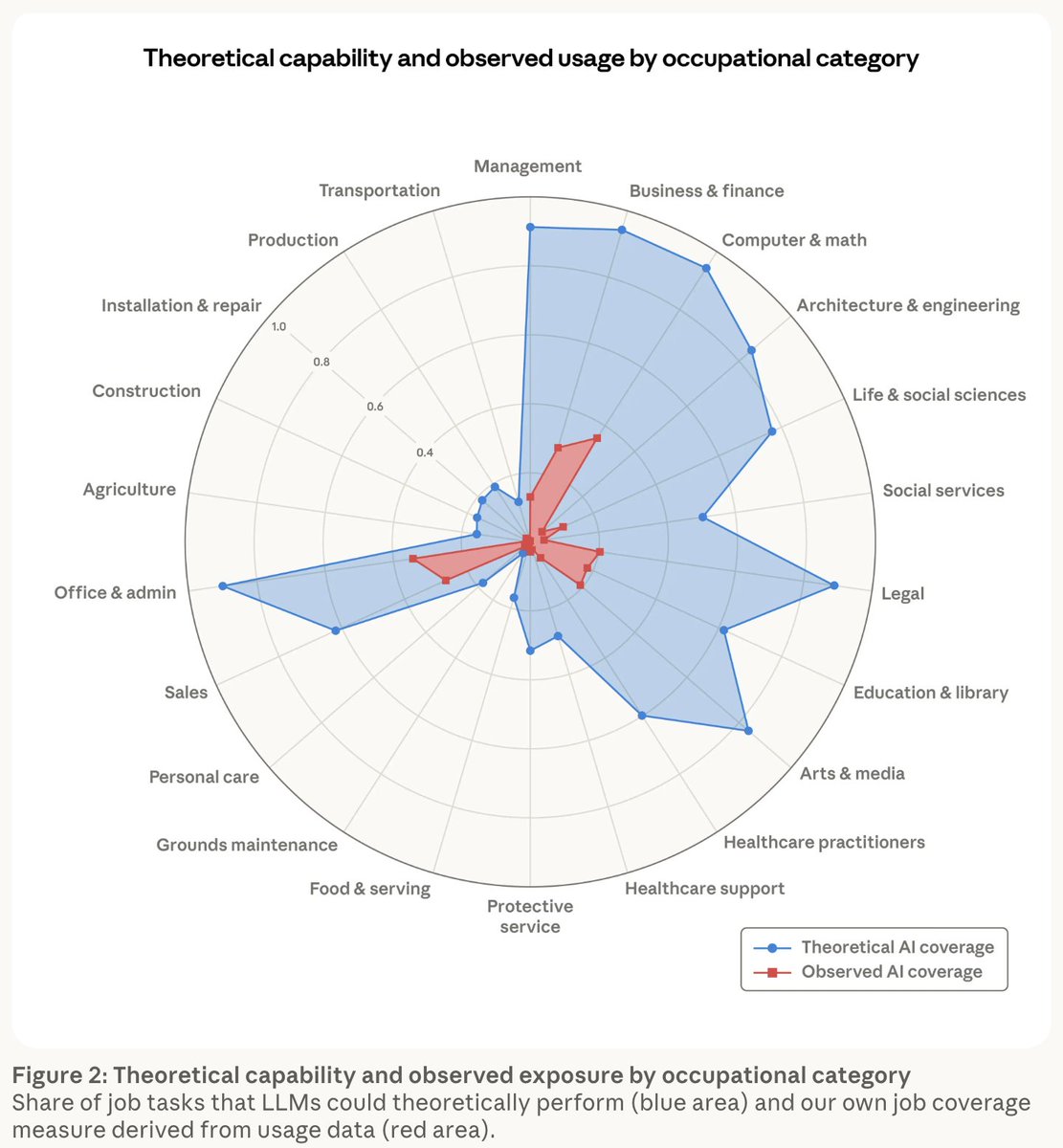

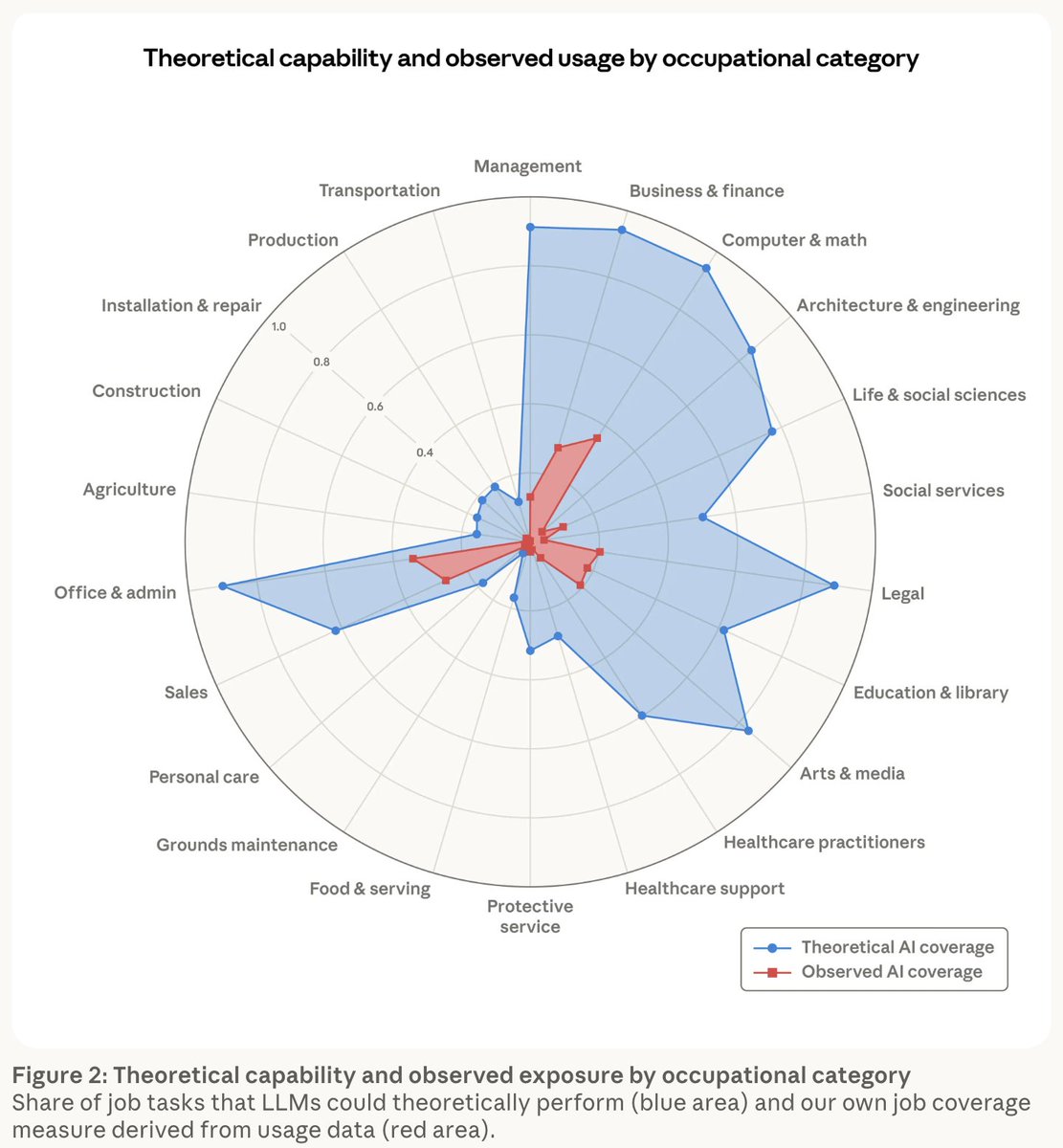

Experts have three views on the future of work, each credible but sharply opposed. Who’s right? In a new paper for @CarnegieEndow & @CEIPTechProgram, I lay out the best arguments made by the alarmed, patient, and excited groups. 🧵 on the most important points and what policymakers can do today.

This is just like being alive in the 1600s when they got good at making complicated clocks and deduced that every complicated thing in the universe probably functioned exactly like a clock

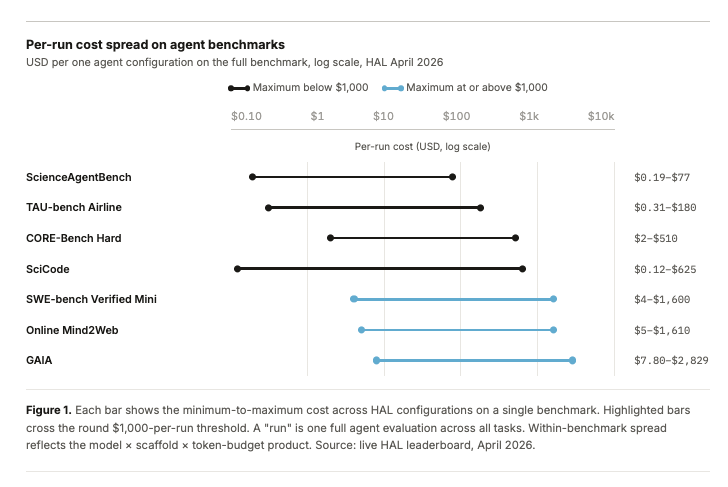

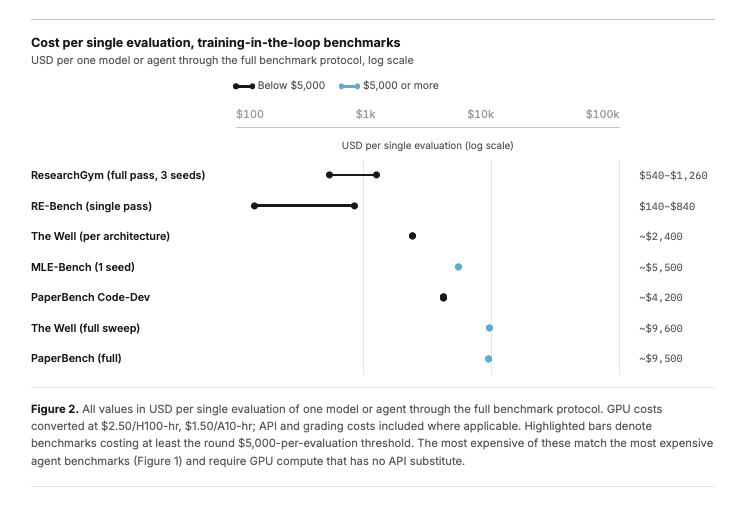

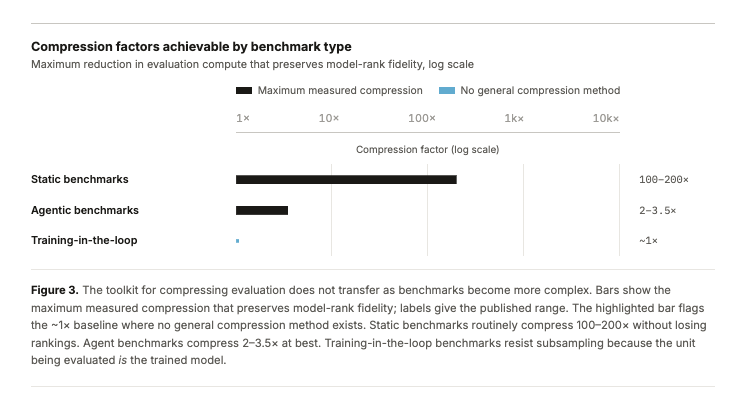

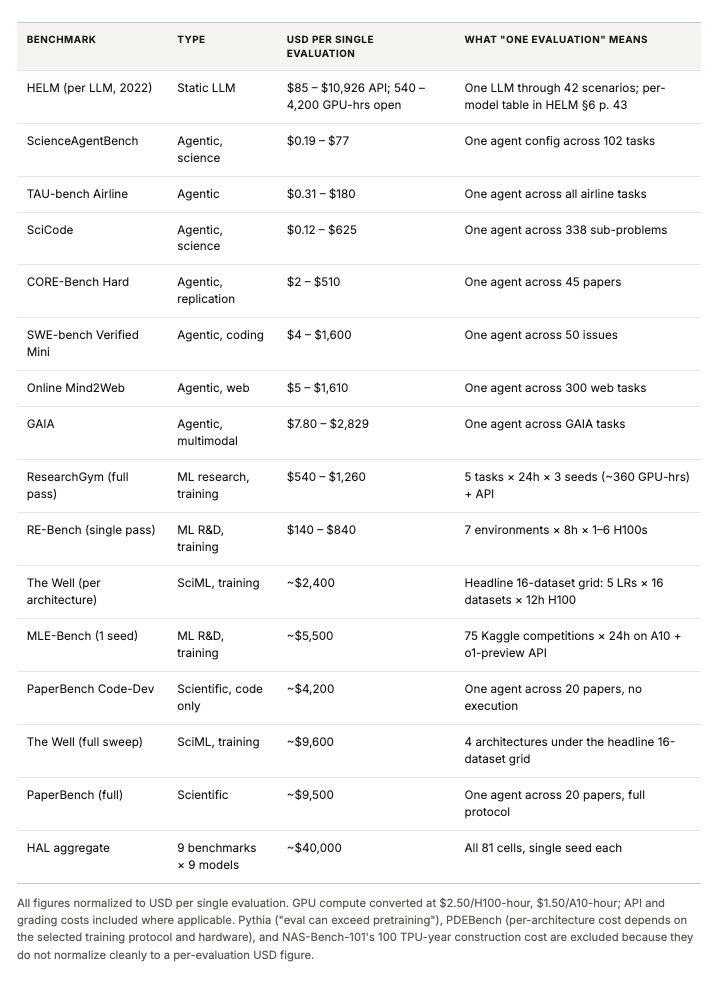

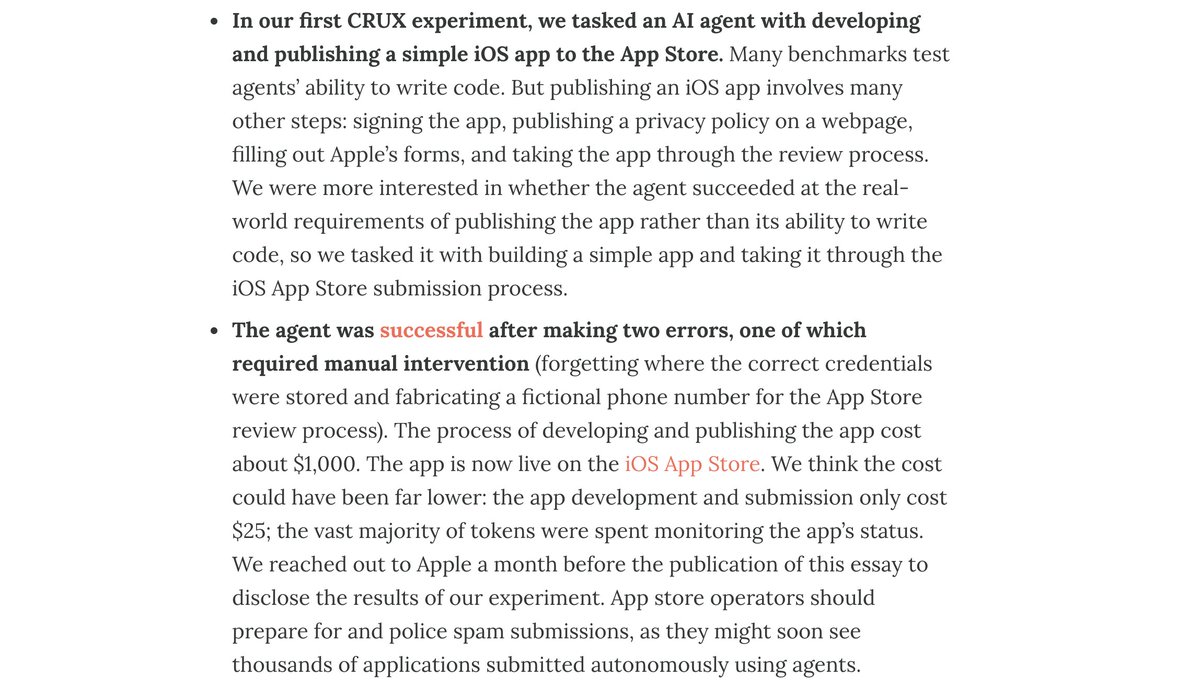

Most agentic benchmarks center around tasks that are automatically verifiable. But any task that is verifiable is also easy to optimize for. This work instead describes the future of critical open-world evaluations. Led by @sayashk, our current draft is now live.

Benchmarks are saturated more quickly than ever. How should frontier AI evaluations evolve? In a new paper, we argue that the AI community is already converging on an answer: Open-world evaluations. They are long, messy, real-world tasks that would be impractical for benchmarks.

House lawmakers were given a demonstration by DHS yesterday where they were able to interact with jailbroken models. Open source will probably reach Mythos performance by the end of the year. By the summer there will be a push to regulate open source in the US. This is a prelude.

@sayashk @TransluceAI @PKirgis @steverab @random_walker @fly_upside_down @RishiBommasani @DubMagda @ghadfield @ahall_research This was such an interesting thread! I can't believe it doesn't have more views. I appreciated your take on evaluation awareness and also you offering the 1GB of logs for users who know what to do with them. I'm a non-technical user but I still enjoyed the read and its details.
