Sebastian Raschka

19.5K posts

@rasbt

ML/AI research engineer. Ex stats professor. Author of "Build a Large Language Model From Scratch" (https://t.co/O8LAAMRzzW) & reasoning (https://t.co/5TueQKx2Fk)

🙌 Andrej Karpathy’s lab has received the first DGX Station GB300 -- a Dell Pro Max with GB300. 💚 We can't wait to see what you’ll create @karpathy! 🔗 blogs.nvidia.com/blog/gtc-2026-…

@DellTech

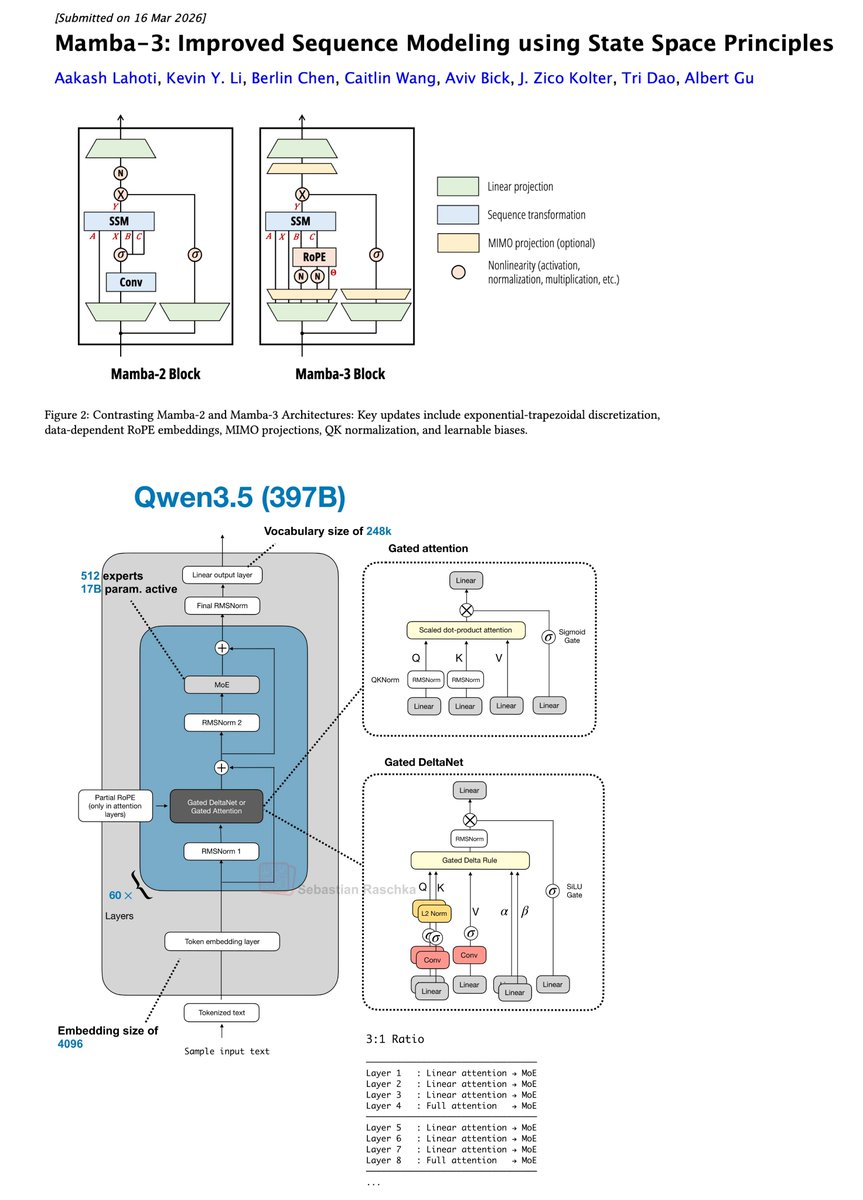

The newest model in the Mamba series is finally here 🐍 Hybrid models have become increasingly popular, raising the importance of designing the next generation of linear models. We've introduced several SSM-centric ideas to significantly increase Mamba-2's modeling capabilities without compromising on speed. The resulting Mamba-3 model has noticeable performance gains over the most popular previous linear models (such as Mamba-2 and Gated DeltaNet) at all sizes. This is the first Mamba that was student led: all credit to @aakash_lahoti @kevinyli_ @_berlinchen @caitWW9, and of course @tri_dao!

@sachindetrax @mattturck Was just giving it a try on a Mac (following the instructions on github.com/microsoft/BitN…)... results look wild. My guess is something in the compilation went wrong or Mac is not supported:

Holy shit... Microsoft open sourced an inference framework that runs a 100B parameter LLM on a single CPU.

It's called BitNet. And it does what was supposed to be impossible. No GPU. No cloud. No $10K hardware setup. Just your laptop running a 100-billion parameter model at human reading speed.

Here's how it works: Every other LLM stores weights in 32-bit or 16-bit floats. BitNet uses 1.58 bits. Weights are ternary: just -1, 0, or +1. That's it. No floats. No expensive matrix math. Pure integer operations your CPU was already built for.

The result:
- 100B model runs on a single CPU at 5-7 tokens/second
- 2.37x to 6.17x faster than llama.cpp on x86
- 82% lower energy consumption on x86 CPUs
- 1.37x to 5.07x speedup on ARM (your MacBook)
- Memory drops by 16-32x vs full-precision models

The wildest part: Accuracy barely moves. BitNet b1.58 2B4T, their flagship model, was trained on 4 trillion tokens and benchmarks competitively against full-precision models of the same size. The quantization isn't destroying quality. It's just removing the bloat.

What this actually means:
- Run AI completely offline. Your data never leaves your machine
- Deploy LLMs on phones, IoT devices, edge hardware
- No more cloud API bills for inference
- AI in regions with no reliable internet

The model supports ARM and x86. Works on your MacBook, your Linux box, your Windows machine.

27.4K GitHub stars. 2.2K forks. Built by Microsoft Research. 100% Open Source. MIT License
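
The ternary-weight idea described in the quoted post can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not BitNet's actual kernels: the helper names `ternary_quantize` and `ternary_dot` are hypothetical, and scaling by the mean absolute value is assumed here as one simple way to map floats onto -1, 0, or +1. The "1.58 bits" figure matches log2(3), the information content of a three-valued weight.

```python
import math

def ternary_quantize(weights, eps=1e-8):
    """Map float weights to {-1, 0, +1} plus one shared scale.

    Assumption: scale by the mean absolute value, then round and clip.
    """
    scale = sum(abs(w) for w in weights) / len(weights) + eps
    quantized = [max(-1, min(1, round(w / scale))) for w in weights]
    return quantized, scale

def ternary_dot(quantized, scale, activations):
    """Dot product that needs only adds and subtracts, then one rescale."""
    acc = 0.0
    for q, x in zip(quantized, activations):
        if q == 1:
            acc += x      # weight +1: add the activation
        elif q == -1:
            acc -= x      # weight -1: subtract the activation
        # weight 0: skip the activation entirely
    return acc * scale

# Three possible values per weight carry log2(3) bits of information.
bits_per_weight = math.log2(3)

# Toy values chosen for illustration only.
weights = [0.8, -0.1, -0.7, 0.9]
activations = [1.0, 2.0, 3.0, 4.0]

q, s = ternary_quantize(weights)
approx = ternary_dot(q, s, activations)

print(f"bits per weight: {bits_per_weight:.3f}")
print(f"ternary weights: {q}")
print(f"ternary dot product: {approx:.4f}")
```

The inner loop contains no multiplications by weights, which is the property the post refers to as "pure integer operations"; a real implementation would also pack the ternary values compactly and quantize the activations, both of which this sketch leaves out.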