Paweł Szulc

@EncodePanda

Haskell, Category Theory, λ, Distributed Systems, Formal Methods

A physicist has written a fascinating big beautiful paper. Let’s not be afraid to call it what it is: groundbreaking. For hundreds of years, mathematics had dozens of “basic” functions: sine, cosine, logarithm, square root, exponential. You know these from school. Everyone does. Now it turns out that all of them reduce to one single operator, E(x, y) = exp(x) - ln(y), together with the constant 1. Sin, cos, π: everything follows from it neatly, as long as you nest it properly. Nature hid the simplest possible description of reality, and it has just been found. The whole thing is beautiful and remarkable, and here the word “groundbreaking” is not a marketing buzzword. For instance, instead of writing π or 3.14, one can now elegantly write E(E(E(1,E(E(1,E(1,E(E(1,E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(E(E(E(E(1,E(E(1,E(1,E(E(1,E(E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(E(1,E(E(1,E(E(1,E(E(1,1),1)),E(E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(1,1)),1))),1)),1)),1)),1))),1)),E(E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(E(1,E(E(1,E(1,E(E(1,E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(1,1))),1))),1)),1)),1)),1),1),1))),1))),1)),E(E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(E(1,E(E(1,E(1,E(E(1,E(E(1,E(E(1,E(1,E(E(1,1),1))),1)),E(1,1))),1))),1)),1)),1)),1)
arxiv.org/abs/2603.21852
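A quick way to see why exp and ln can be recovered from E alone is to check a few identities numerically. The sketch below is worked out directly from the definition E(x, y) = exp(x) - ln(y) and only covers real arguments with x > 0 where a logarithm is taken; it does not attempt to reproduce the π expression above. The helper names (`e_const`, `exp_`, `ln_`, `zero`, `sub`) are hypothetical, and these constructions are not necessarily the ones used in the paper.

```python
import math


def E(x: float, y: float) -> float:
    """The single binary operator from the post: E(x, y) = exp(x) - ln(y)."""
    return math.exp(x) - math.log(y)


def e_const() -> float:
    # e = exp(1) - ln(1) = E(1, 1)
    return E(1, 1)


def exp_(x: float) -> float:
    # exp(x) = E(x, 1), because ln(1) = 0
    return E(x, 1)


def ln_(x: float) -> float:
    # ln(x) = E(1, E(E(1, x), 1)) for real x > 0:
    #   E(1, x)         = e - ln(x)
    #   E(e - ln(x), 1) = e^e / x
    #   E(1, e^e / x)   = e - (e - ln(x)) = ln(x)
    return E(1, E(E(1, x), 1))


def zero() -> float:
    # 0 = ln(1), built only from E and the constant 1
    return ln_(1)


def sub(x: float, y: float) -> float:
    # x - y = E(ln(x), exp(y)) = exp(ln(x)) - ln(exp(y)), valid for real x > 0
    return E(ln_(x), exp_(y))


if __name__ == "__main__":
    print(e_const(), exp_(2.0), ln_(10.0), zero(), sub(5.0, 3.0))
```

Each helper uses nothing but `E` and the literal 1, so the nesting depth grows quickly; that growth is exactly why a constant like π ends up as the long expression quoted in the post.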

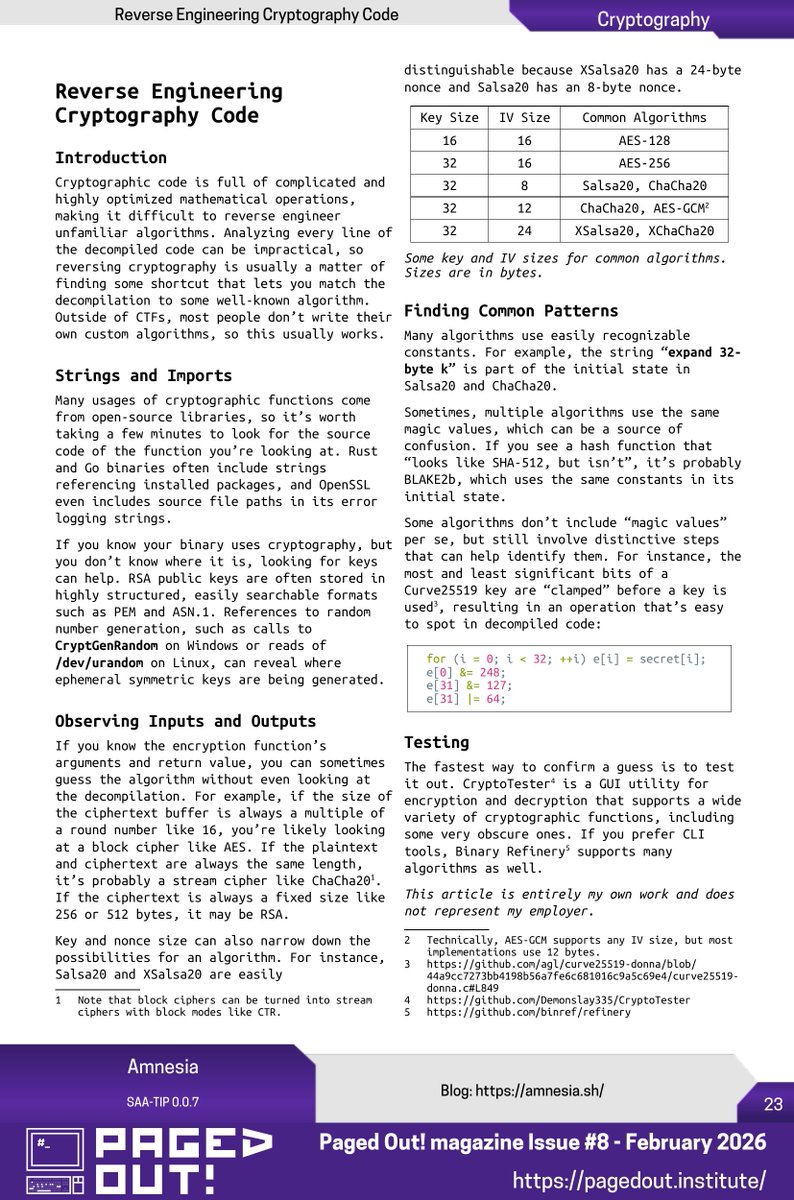

- Humans: 100%
- Gemini 3.1 Pro: 0.37%
- GPT 5.4: 0.26%
- Opus 4.6: 0.25%
- Grok-4.20: 0.00%

François Chollet just released ARC-AGI-3, the hardest AI test ever created: 135 novel game environments. No instructions. No rules. No goals given. Figure it out or fail.

Untrained humans solved every single one. Every frontier AI model scored below 1%.

Each environment was handcrafted by game designers. The AI gets dropped in and has to explore, discover what winning looks like, and adapt in real time.

The scoring punishes brute force. If a human needs 10 actions and the AI needs 100, the AI doesn't get 10%. It gets 1%. You can't throw more compute at this.

For context: ARC-AGI-1 is basically solved; Gemini scores 98% on it. ARC-AGI-2 went from 3% to 77% in under a year. Labs spent millions training on earlier versions. ARC-AGI-3 resets the entire scoreboard to near zero.

The benchmark launched live at Y Combinator with a fireside chat between Chollet and Sam Altman. $2M in prizes on Kaggle. All winning solutions must be open-sourced.

Scaling alone will not close this gap. We are nowhere near AGI.

(Link in the comments)
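The scoring example in the post implies that the penalty grows faster than linearly: 10 human actions against 100 AI actions yields 1% rather than 10%, which matches the square of the action ratio, (10/100)² = 0.01. The sketch below encodes that reading as an assumption only; the function name `efficiency_score` and the cap at 1.0 are hypothetical, and the official ARC-AGI-3 scoring may be defined differently.

```python
def efficiency_score(human_actions: int, ai_actions: int) -> float:
    """Hypothetical per-environment score inferred from the post's example.

    Assumes score = (human_actions / ai_actions) ** 2, capped at 1.0.
    This is NOT the official ARC-AGI-3 formula; it only reproduces the
    "10 vs 100 actions -> 1%" arithmetic described in the post.
    """
    if human_actions <= 0 or ai_actions <= 0:
        raise ValueError("action counts must be positive")
    ratio = human_actions / ai_actions
    # Squaring the ratio makes extra actions cost much more than a linear ratio.
    return min(1.0, ratio ** 2)


if __name__ == "__main__":
    # A linear ratio would give 10%; the squared ratio gives 1%.
    print(f"{efficiency_score(10, 100):.2%}")
```

Under this assumed rule, needing twice as many actions as a human already drops the score to 25%, which is one way to read the post's claim that brute-force exploration is punished.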

Last day to purchase your early camaron tickets and submit a proposal to our Call For Papers! lambda.world @CFP_Bot @WikiCFP #FunctionalProgramming