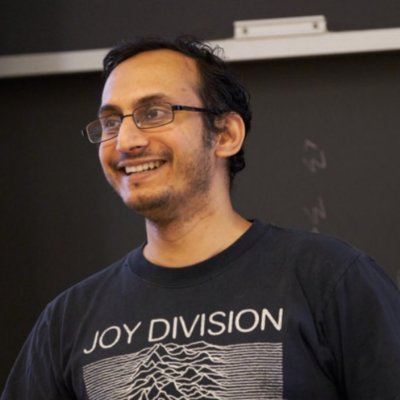

Adi Renduchintala

@rendu_a

Applied Research Scientist @NVIDIA; formerly Research Scientist @MetaAI; PhD @jhuclsp. Also lurking on Mastodon.

Accidentally said “understood” instead of “grokked” and they kicked me out of sf

We're excited to share the leaderboard-topping 🏆 NVIDIA Nemotron Nano 2, a groundbreaking 9B-parameter open, multilingual reasoning model that is redefining efficiency in AI. It earned the leading spot on the @ArtificialAnlys Intelligence Index leaderboard among open models in the same parameter range. It's built on a hybrid Transformer-Mamba architecture, a combination that delivers the accuracy you expect with higher throughput. This gives it a high performance-to-cost ratio, making it well suited to real-world applications like customer service agents and chatbots.

🏗️ Hybrid Architecture: By combining the strengths of the Transformer and Mamba architectures, it achieves up to 6X higher throughput than other 8B open models, along with the highest reasoning accuracy.

🏦 Thinking Budget: Reduces unnecessary token generation to cut costs by up to 60%, making it an ideal solution for balancing performance and total cost of ownership (TCO).

🔢 Open Datasets: The model's training datasets are fully open, giving maximum transparency when using it in enterprise applications.

🤗 Technical details on @HuggingFace ➡️ nvda.ws/3JfcKST
🏆 Leaderboard ➡️ nvda.ws/47B7iUh

👀 Nemotron-H tackles large-scale reasoning while maintaining speed, with 4x the throughput of comparable Transformer models.⚡ See how #NVIDIAResearch accomplished this using a hybrid Mamba-Transformer architecture and model fine-tuning ➡️ nvda.ws/43PMrJm