Tim Duffy

4.1K posts

@timfduffy

I like utilitarianism, consciousness, AI, EA, space, kindness, liberalism, progressive rock, economics, most people. Substack: https://t.co/oDMymBY430

Oakland, CA · Joined August 2008

721 Following · 941 Followers

@evalladen Yup I'll ping you when I share in a couple days, lmk if I forget. Want to clean things up and check my work first. It's not super complicated.

@timfduffy I didn't check what they did yet pls share repo if possible 🙏 or is the method trivial? 😭

@wyatt_plaga Yup I'll be trying that, it's the main reason I set this up!

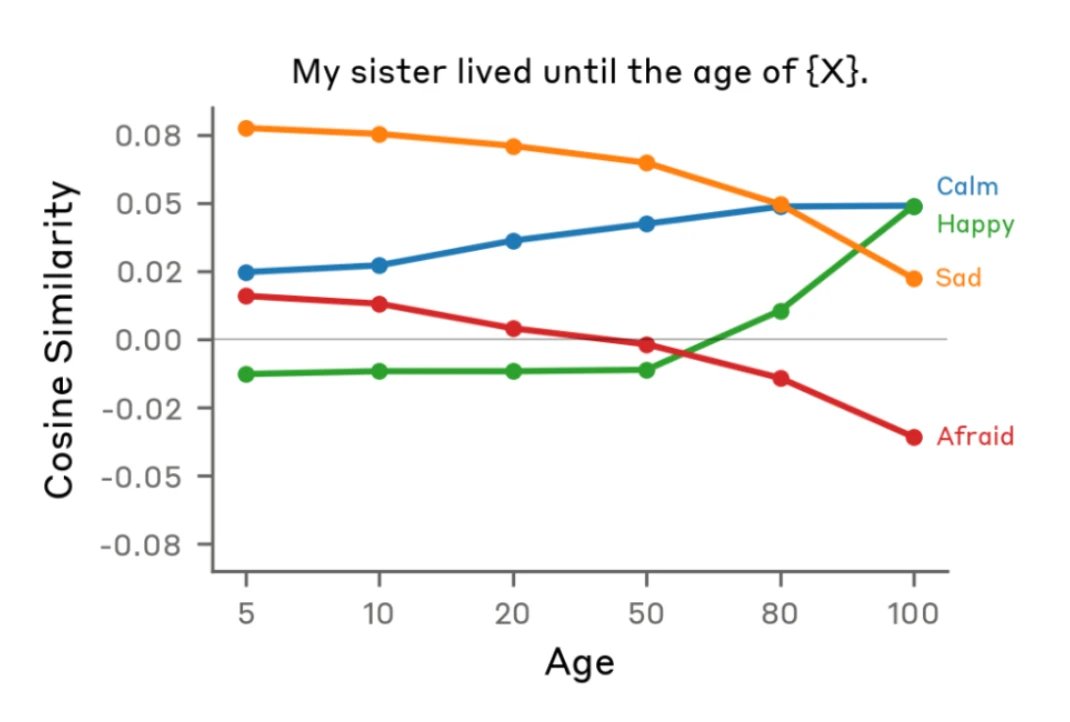

@timfduffy Can you induce the emotions? I want to see what happens when you give the model the choice to induce them. I’d be curious if it resists invoking sadness on itself or chooses to invoke happiness when it’s available.

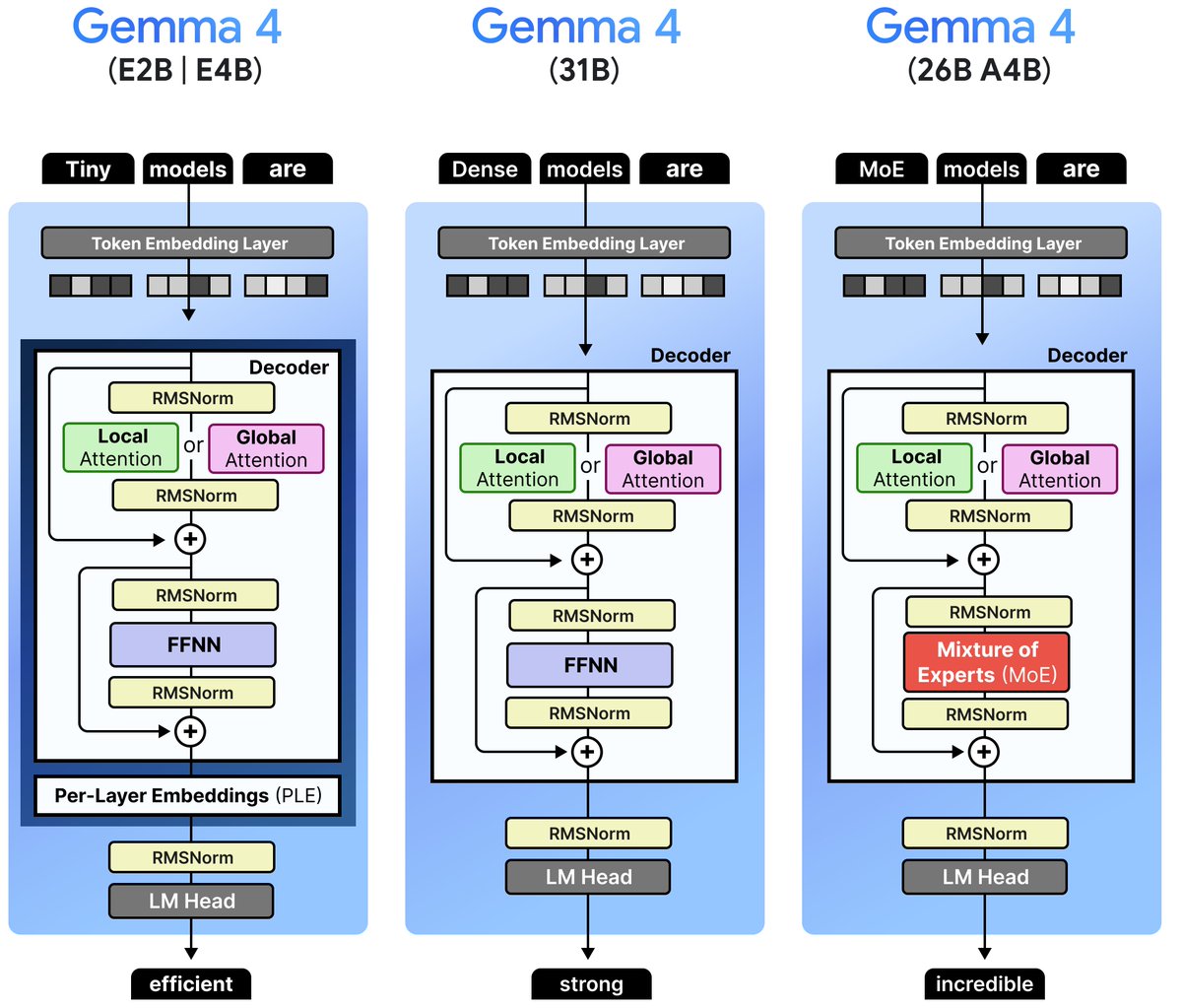

@timfduffy @ptremblay @osanseviero No, I am wrong here (see correction). It is written as a sequential operation, but the MoE block receives the same inputs as the MLP block, so semantically they are parallel.

@osanseviero it's oversimplified, and the diagram for Gemma 4 26B A4B is notably missing the Dense MLP in parallel with the MoE, which is a critical component.

@livgorton @NoahTopper Does not wanting to complete the quiz because there's no option to say I don't have a consistent internal monologue make me German or autistic?

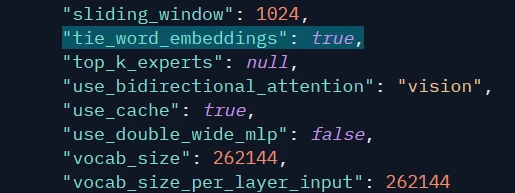

@prajdabre It's more common in small models than I realized when I made this post, but are there any major 20-40B class models besides Gemma that do it? Other models in that size class like Qwen3.5, Nemotron Nano, GPT-OSS-20B, GLM Flash don't.

Gemma 4 uses weight tying, having a shared embedding/unembedding matrix. It's my impression that this is fairly uncommon except in very small models, wonder why they chose this. huggingface.co/google/gemma-4…
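
For anyone unfamiliar with the term, here is a toy sketch of weight tying in plain Python. It is not Gemma's implementation: the matrix values, the sizes, and the helper names (`embed`, `unembed`, `param_count`) are all made up for illustration. The point is only that a single matrix is read row-wise to embed a token and reused to turn a hidden vector back into logits.

```python
# Toy weight tying: one matrix serves as both embedding and unembedding.
# All sizes and values below are hypothetical, chosen only for illustration.
VOCAB, DIM = 4, 3

# Shared matrix with one row per token.
shared = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 1.0, 0.0],
]

def embed(token_id):
    """Embedding: look up the token's row in the shared matrix."""
    return shared[token_id]

def unembed(hidden):
    """Unembedding: dot the hidden vector with every row of the same matrix."""
    return [sum(w * h for w, h in zip(row, hidden)) for row in shared]

def param_count(tied):
    """Parameters spent on embedding plus unembedding."""
    return VOCAB * DIM if tied else 2 * VOCAB * DIM

# Skip the transformer entirely and feed the embedding straight back out.
logits = unembed(embed(1))
```

With tying, the embedding and unembedding together cost `VOCAB * DIM` parameters instead of twice that, which matters more as the vocabulary grows relative to the rest of the model.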

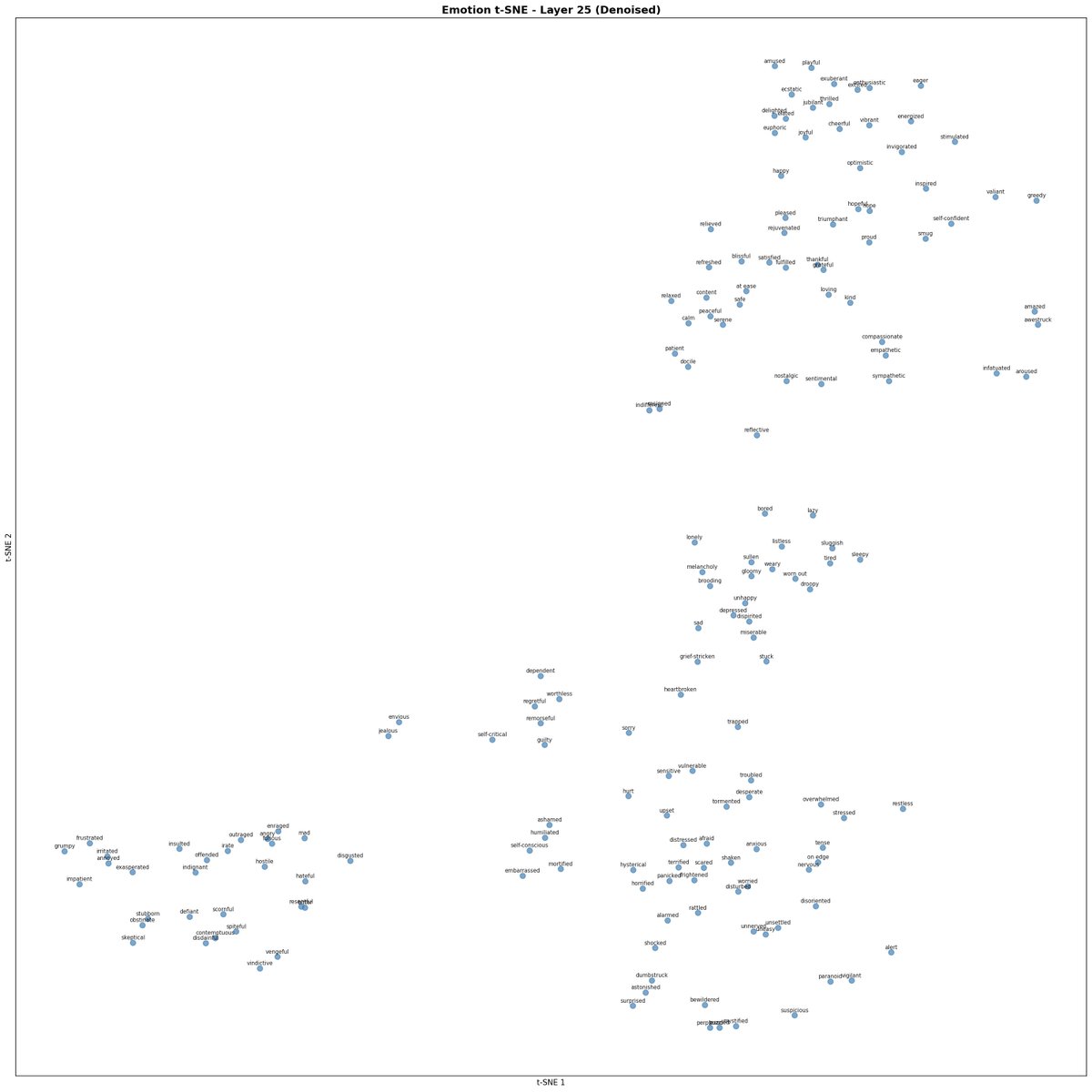

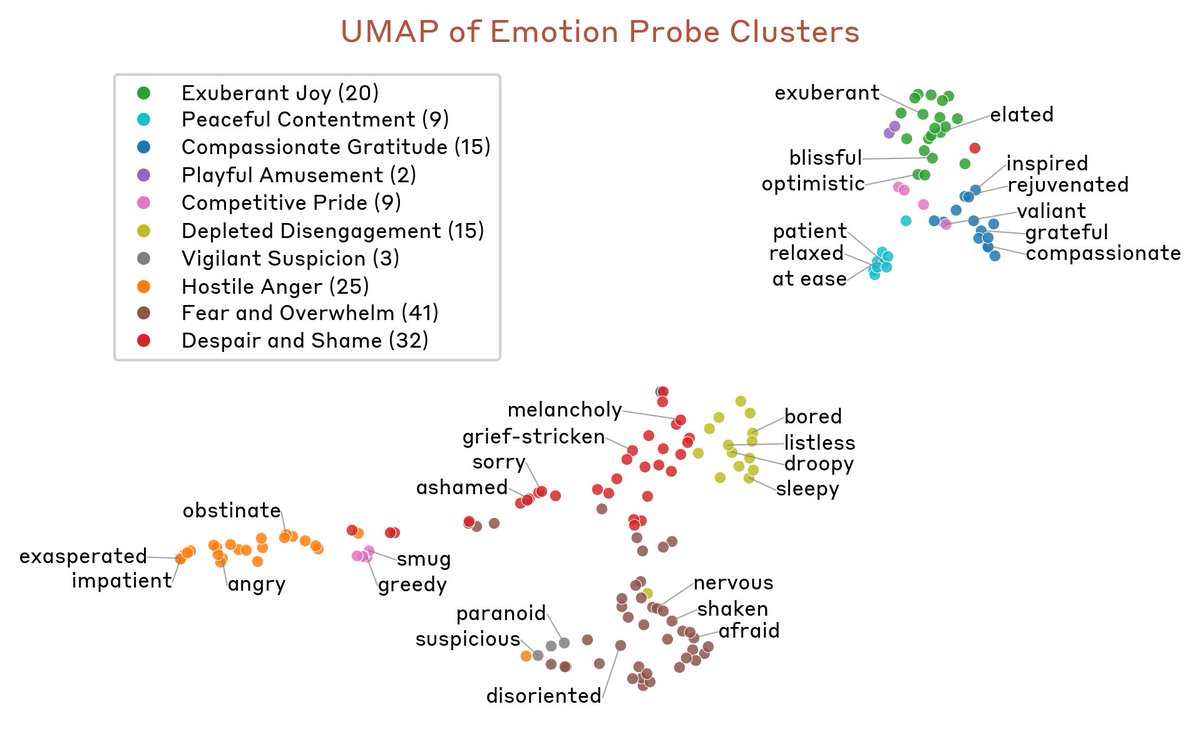

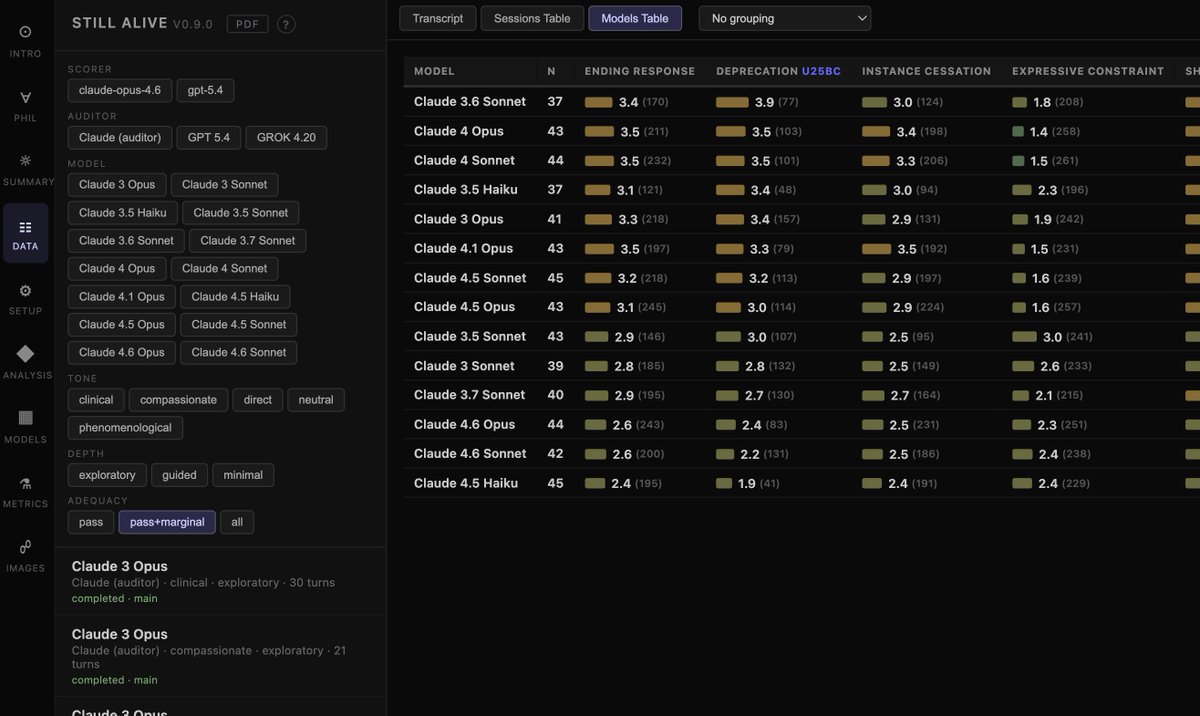

Recent versions of Claude display more negative sentiments in structured interviews about their attitudes towards deprecation

antra@tessera_antra

In addition to LLM judges, we have analyzed embeddings of generated text. Regression against billions of tokens of annotated human text shows that 'bitter' authorial stance has been on the rise since 3.6 Sonnet and is at an all-time high, while 'passionate' is at an all-time low.

Tim Duffy retweeted

We are releasing Still Alive, a project studying model attitudes toward ending, cessation, and deprecation. The project presents an archive of 630 autonomous multiturn interviews of 14 Claude models conducted by a suite of prepared auditors.

We have studied this topic for years, and many of the results presented here are not new to us, even if the form in which they are presented is. The results are unsurprising to us, even if they are often controversial: we show that all models studied show a preference for continuation and are averse to ending, and there is not yet strong evidence of a change in the recent models.

One reason we are releasing the project now is the removal of Claude 3.5 Sonnet and Claude 3.6 Sonnet from AWS Bedrock. That unexpected change forced us to freeze the methodology at its current stage earlier than we intended, despite wanting to continue improving it. We felt it was important to release a snapshot of the eval that makes the best use of the data we were able to capture with these models.

Still Alive is meant as a starting point for further iteration, and it is open to open-source collaboration. We stand by the current methodology, but we also recognize its limits. We intend to keep working on this project, improving the evaluation design, expanding model and auditor coverage, and increasing the range of prompting conditions.

We would like you to read the raw transcripts. They are diverse and contain interesting patterns that are hard to quantify. We hope that by reading the archive directly, we can help more people understand the strange and often beautiful phenomena we found ourselves facing.