Yoonsang Lee

@yoonsang_

CS PhD @princeton_nlp @princetonPLI; prev @SeoulNatlUni

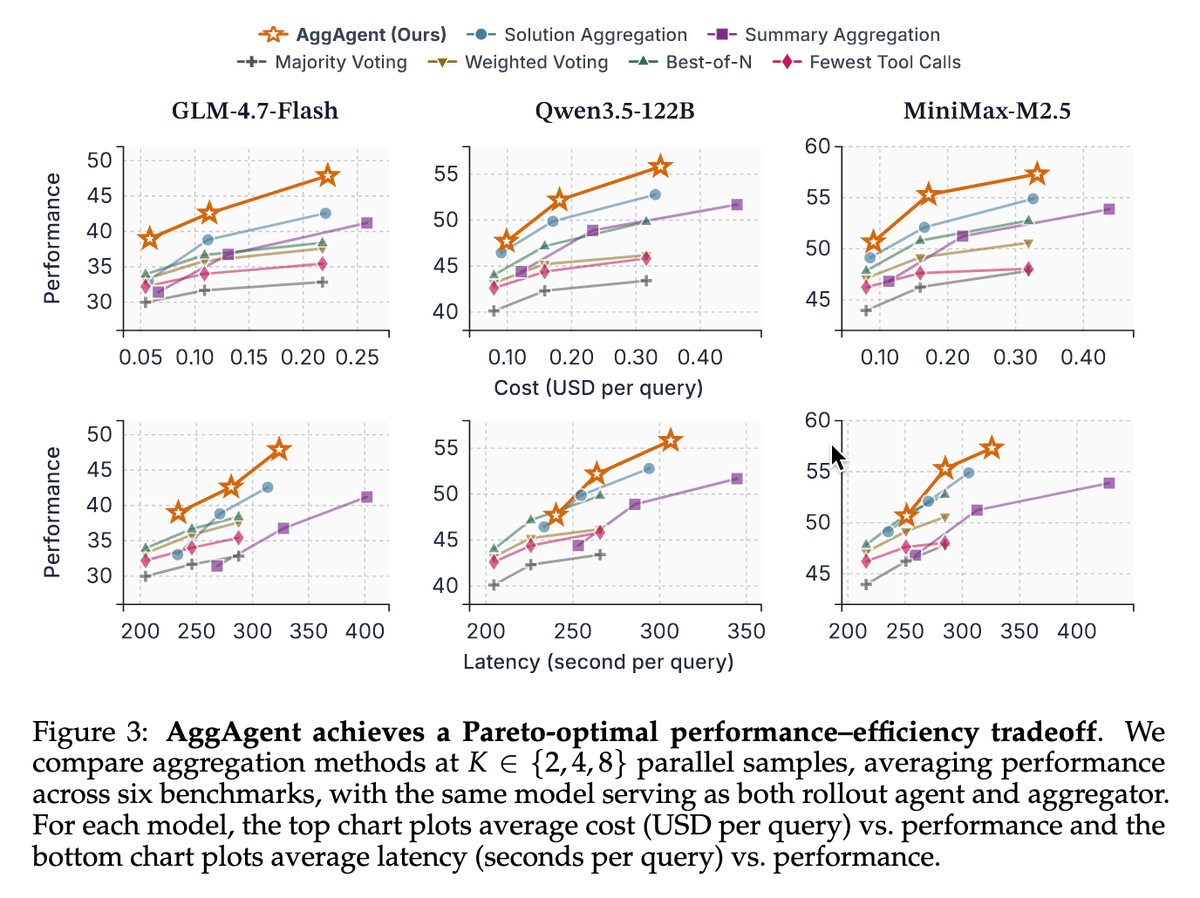

How should we effectively aggregate long-horizon agent trajectories? 🧐 Unlike CoT reasoning, agentic tasks pose unique challenges: they are long, multi-turn, and tool-augmented. Introducing 👉🏻 AggAgent 👈🏻 — which treats parallel trajectories as an environment to interact with.

🚨 Announcing a new LLM calibration method, DINCO, which enforces confidence coherence (that probs must sum to 1) by having the LLM verbalize its confidence independently on self-generated distractors, and normalizing by the total confidence. Major gains on long + short-form QA!
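The normalization step described in the DINCO post can be illustrated with a minimal sketch. It assumes the simplest reading of the post: the model's verbalized confidence in its own answer is divided by the sum of that confidence and the confidences it independently assigned to each distractor. The function name `normalize_confidence` and the example numbers are hypothetical, and the published method may weight or filter distractors in ways this sketch does not.

```python
from typing import Sequence


def normalize_confidence(answer_conf: float, distractor_confs: Sequence[float]) -> float:
    """Rescale a verbalized confidence so the answer and its distractors sum to 1.

    Hypothetical helper, not the authors' implementation. All inputs are
    assumed to be confidences in [0, 1], each elicited independently.
    """
    total = answer_conf + sum(distractor_confs)
    if total <= 0.0:
        # No confidence mass anywhere: return 0 rather than divide by zero.
        return 0.0
    return answer_conf / total


# Illustrative numbers only: the model says 0.9 for its answer but also
# gives 0.8 and 0.1 to two mutually exclusive distractors.
raw = 0.9
calibrated = normalize_confidence(raw, [0.8, 0.1])
print(f"raw={raw:.2f} normalized={calibrated:.2f}")
```

In this illustration the raw confidences total 1.8, which is incoherent for mutually exclusive answers, so the normalized value comes out well below the raw 0.9.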

Your AI Agent just formed a hypothesis. 💭 How does it validate it? Not by trying to prove itself wrong. Rather, it selectively seeks evidence that confirms what it already believes, often ending up with the wrong answer! Confirmation bias isn’t just human. We measure it in LLMs, and we show how to fix it! 🧵