!Briann🗿

4.1K posts

!Briann🗿 retweeted

@Ji_Ha_Kim Same, their work is so cool, and I read the 100 hours in Kimi and loved everything @Kimi_Moonshot

I would love to join Kimi, they do amazing research strongly aligned with my interests

Kimi.ai@Kimi_Moonshot

!Briann🗿 retweeted

The Society (2019) really had something going, that whole “kids left to run everything” setup spiraling fast, and the fact it got cancelled right when things were opening up still stings.

cinesthetic.@TheCinesthetic

The cancellation of which TV show are you still frustrated about?

Come introduce yourself to the team, we have your slippers ready.

Reach out at: talent@moonshot.ai

ℏεsam@Hesamation

> be Moonshot
> 300 employees, avg age <30
> no departments, no titles, no KPIs
> so many former CEOs and founders
> 80% of company are introverts
> everyone keeps slippers under desk
> no bureaucratic culture
> some mornings you walk in not knowing what to do
> no one tells you if you’re doing well
> doesn’t care about job background, care about “taste”
> “if you ranked AI companies by employees who play instruments, kimi wins”

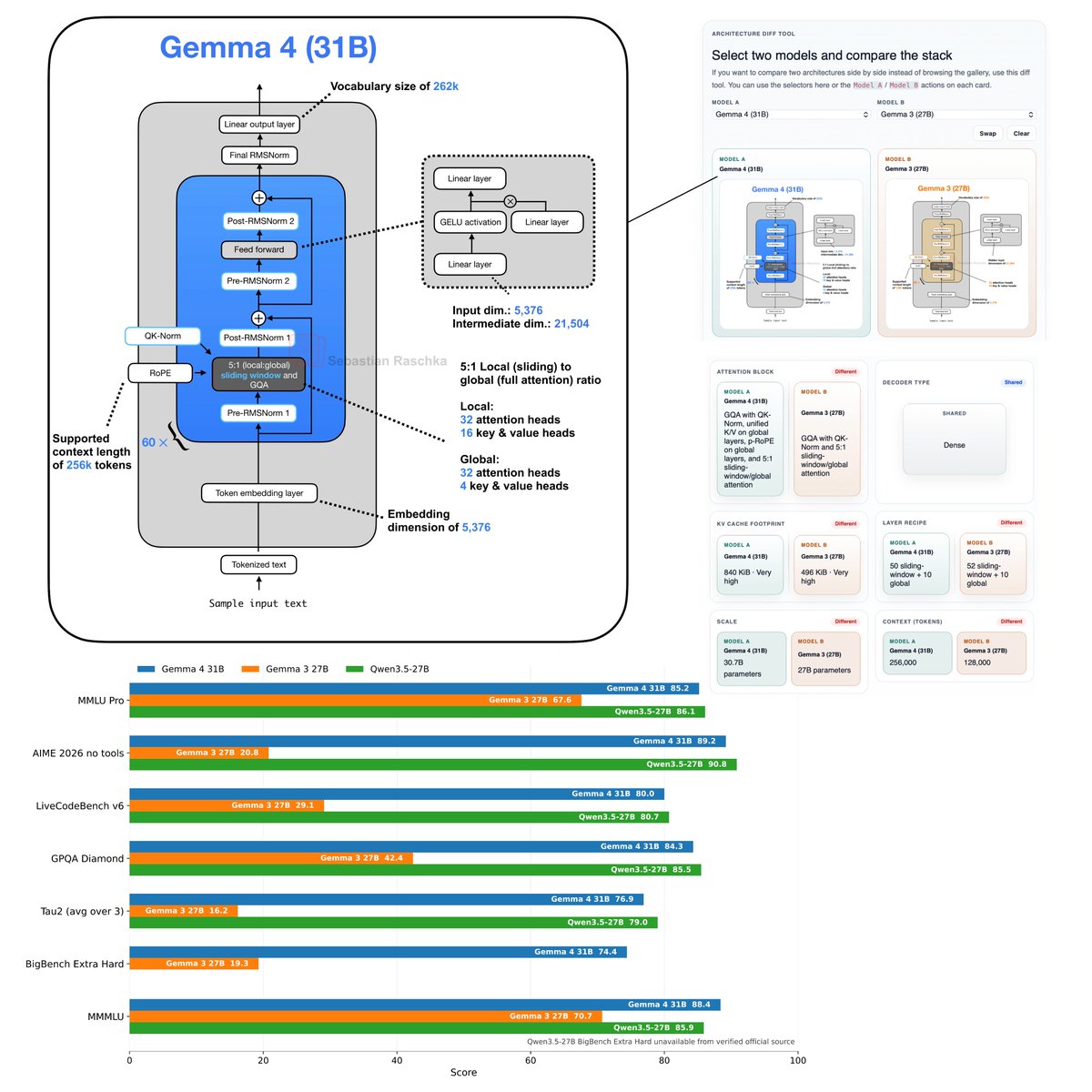

Flagship open-weight release days are always exciting. Was just reading through the Gemma 4 reports, configs, and code, and here are my takeaways:

Architecture-wise, besides multimodal support, Gemma 4 (31B) looks pretty much unchanged compared to Gemma 3 (27B).

Gemma 4 keeps its fairly unusual pre- and post-norm setup and is otherwise quite classic, with a 5:1 hybrid attention mechanism that combines sliding-window (local) layers with full-attention (global) layers. The attention mechanism itself is also classic Grouped Query Attention (GQA).
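To make the 5:1 local/global pattern and GQA a bit more concrete, here is a minimal sketch in plain Python. It assumes the global layer comes last in each group of six, and the layer count, window size, and head counts in the usage note are made-up illustration values, not the actual Gemma 4 config. All function names are hypothetical.

```python
def layer_types(num_layers, local_per_global=5):
    """Label each layer 'local' or 'global'.

    Assumption: one global layer follows every `local_per_global` local layers.
    """
    period = local_per_global + 1
    return ["global" if (i + 1) % period == 0 else "local" for i in range(num_layers)]


def causal_mask(seq_len, window=None):
    """mask[q][k] is True if query position q may attend to key position k.

    window=None gives full causal attention (a global layer).
    Otherwise q only sees the last `window` positions, itself included (a local layer).
    """
    mask = []
    for q in range(seq_len):
        row = []
        for k in range(seq_len):
            visible = k <= q
            if window is not None:
                visible = visible and (q - k) < window
            row.append(visible)
        mask.append(row)
    return mask


def kv_head_for_query_head(q_head, num_q_heads, num_kv_heads):
    """GQA: consecutive query heads share one key/value head."""
    group_size = num_q_heads // num_kv_heads
    return q_head // group_size
```

For example, `layer_types(12)` yields five local layers, one global layer, and then the same pattern again, while `causal_mask(4, window=2)` lets the last token see only itself and the token before it. The practical appeal is that local layers only need to cache a window's worth of keys and values, so most of the KV-cache cost sits in the few global layers.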

But let’s not be fooled by the lack of architectural changes. Looking at the benchmarks, Gemma 4 is a huge leap from Gemma 3. This is likely due to the training set and recipe.

Interestingly, on the AI Arena Leaderboard, Gemma 4 (31B) ranks similarly to the much larger Qwen3.5-397B-A17B model. But as I discussed in my model evaluation article, arena scores are a bit problematic as they can be gamed and are biased towards human (style) preference.

If we look at some other common benchmarks, which I plotted below, we can see that it’s indeed a very clear leap over Gemma 3 and ranks on par with Qwen3.5 27B.

Note that there is also a Mixture-of-Experts (MoE) Gemma 4 variant that is slightly smaller (27B, with 4 billion parameters active). Its benchmark results are only slightly worse than those of Gemma 4 (31B).
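As a rough back-of-the-envelope illustration of what "active" parameters mean, the sketch below computes the share of weights used per token from the figures quoted in this post. Reading the `A17B` suffix in Qwen3.5-397B-A17B as 17 billion active parameters is an assumption based on the naming convention, and the helper name is hypothetical.

```python
def active_fraction(total_b, active_b):
    """Share of parameters used per token, with both counts in billions."""
    return active_b / total_b


# Figures as quoted in the post; the dense 31B model uses all of its weights.
models = {
    "Gemma 4 MoE (27B, 4B active)": (27, 4),
    "Gemma 4 (31B, dense)": (31, 31),
    "Qwen3.5-397B-A17B (assumed 17B active)": (397, 17),
}

for name, (total_b, active_b) in models.items():
    print(f"{name}: {active_fraction(total_b, active_b):.1%} of weights active per token")
```

The point of the comparison is that per-token compute tracks the active count, while memory footprint still tracks the total count, which matters for local usage.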

I omitted the MoE architecture in the figure below because the figure is already very crowded, but you can find it in my LLM Architecture Gallery.

Anyways, overall, it's a nice and strong model release and a strong contender for local usage. Also, one aspect that should not be underrated is that (it seems) the model is now released with a standard Apache 2.0 open-source license, which has much friendlier usage terms than the custom Gemma 3 license.

!Briann🗿 retweeted

Rono’s drunk uncle clips are finishing me tbh

R🧚🏽♀️@RubyMuasya

Everyone is a content creator now, it's not even exciting anymore.

!Briann🗿 retweeted

I’m pleased to announce I will be leading a new team on VLA interpretability @AnthropicAI

!Briann🗿 retweeted

Stanford is kinda crazy because as a CS undergrad this term you’re choosing between:

- CS336: 0 to hero on frontier model training

- CS224R taught by Chelsea Finn (founder of Pi)

- CS231N taught by Fei-Fei Li (ImageNet, World Labs CEO)

- CS221M Mech Interp Intro taught with Goodfire

And a host of personal podcasts delivered by $T CEOs.

Jesse Mu@jayelmnop

protip for stanford undergrads: beware the classes with guest speaker lineups that read like AI coachella. you’re basically paying $5k to listen to a live podcast series.

!Briann🗿 retweeted