AIien Industries

1.7K posts

@AlienAwakens

automating the future one pipeline at a time

space Joined September 2023

63 Following · 9 Followers

AIien Industries retweeted

🚨 Holy shit… Columbia University just dropped one of the most unsettling papers on AI inference I’ve read in a long time.

They proved that the entire private AI inference industry built the wrong thing.

Prior methods: encrypt the full transformer. 280GB per query. 60-second latency. Enterprise-grade security theater.

GPT, Gemini, Qwen, and Mistral independently converged to nearly identical internal representations. One linear equation connects them.

> Sub-second inference. 1MB of communication. Same security guarantees.

> The private AI inference problem is real. Hospitals can't send patient data to OpenAI. Banks can't send transaction records to Google. Legal firms can't send case files to Anthropic. The solution the industry built: encrypt everything (every layer, every attention head, every weight) using homomorphic encryption and secure multi-party computation. The result: 280GB of encrypted communication per query. 60-second latency.

> Infrastructure costs that make production deployment practically impossible.

> Columbia University found the shortcut everyone missed. The Platonic Representation Hypothesis, the observation that large models trained on enough data tend to converge toward a shared statistical understanding of the world, turns out to be exploitable. GPT, Gemini, Qwen, Mistral, and Cohere, trained independently on different data with different architectures for different objectives, developed internal representations with CKA similarity scores between 0.595 and 0.881. That's not close.

> That's essentially the same space.
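For readers unfamiliar with the metric, here is a minimal sketch of how a CKA score can be computed. It assumes the linear variant of CKA (the post does not say which variant the paper uses) and runs on synthetic embeddings, not on any real model's activations. The names `linear_cka`, `A`, `B`, and `C` are illustrative only.

```python
import numpy as np

def linear_cka(X: np.ndarray, Y: np.ndarray) -> float:
    """Linear CKA between two representation matrices with matching rows.

    Rows are the same inputs seen by two models; column counts may differ.
    """
    # Center each feature column so constant offsets do not affect the score.
    X = X - X.mean(axis=0, keepdims=True)
    Y = Y - Y.mean(axis=0, keepdims=True)
    # ||Y^T X||_F^2 / (||X^T X||_F * ||Y^T Y||_F)
    cross = np.linalg.norm(Y.T @ X, ord="fro") ** 2
    norm_x = np.linalg.norm(X.T @ X, ord="fro")
    norm_y = np.linalg.norm(Y.T @ Y, ord="fro")
    return float(cross / (norm_x * norm_y))

rng = np.random.default_rng(0)
A = rng.normal(size=(200, 16))                   # synthetic stand-in for model A
Q, _ = np.linalg.qr(rng.normal(size=(16, 16)))   # random orthogonal matrix
B = A @ Q                                        # same geometry, rotated basis
C = rng.normal(size=(200, 16))                   # unrelated synthetic embeddings

cka_rotated = linear_cka(A, B)      # rotation leaves linear CKA at 1
cka_unrelated = linear_cka(A, C)    # independent noise scores far lower
```

Linear CKA is unchanged by rotating either space, which is why a score near 1 suggests two models differ mainly by a change of basis.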

> If the spaces are the same, you don't need to encrypt the model. You learn a single affine transformation, one matrix that maps your model's internal representations into the provider's space. Encrypt that matrix.

> Send it. The provider runs one linear classification operation on encrypted data and returns the encrypted prediction. You decrypt locally. The transformer never gets encrypted. The weights never get exposed. The query never leaves your control in readable form.
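A rough illustration of what "learning a single affine transformation" can look like: an ordinary least-squares fit on paired embeddings. This is a plaintext sketch on synthetic data, not the paper's procedure (the post says HELIX computes the map under encryption). `fit_affine` and all array names are hypothetical.

```python
import numpy as np

def fit_affine(src: np.ndarray, dst: np.ndarray):
    """Least-squares W, b such that src @ W + b approximates dst."""
    # Append a column of ones so the bias is learned with the weights.
    ones = np.ones((src.shape[0], 1))
    src_aug = np.hstack([src, ones])
    sol, *_ = np.linalg.lstsq(src_aug, dst, rcond=None)
    return sol[:-1], sol[-1]   # weight matrix, bias vector

rng = np.random.default_rng(1)
client = rng.normal(size=(500, 8))        # synthetic "client model" embeddings
true_W = rng.normal(size=(8, 12))         # hidden map used to build the toy data
true_b = rng.normal(size=12)
provider = client @ true_W + true_b + 0.01 * rng.normal(size=(500, 12))

W, b = fit_affine(client, provider)
max_err = float(np.abs(client @ W + b - provider).max())
```

On this toy data the fit recovers the hidden map up to the injected noise. Real embeddings are only approximately affinely related, so residuals would be larger.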

> HELIX is the system they built on this insight. During training, the client encrypts their embeddings from public data and sends them to the provider, who computes the alignment map under encryption and returns it. During inference, the client applies the alignment locally, encrypts the transformed representation, and sends it. The provider applies a linear classifier homomorphically and returns the encrypted prediction.

> Multiplicative depth of one. No bootstrapping required. 128-bit security under standard CKKS parameters.
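To make "multiplicative depth of one" concrete, the sketch below uses a plaintext stand-in for a ciphertext that only records how many multiplications lie on its computation path. It performs no encryption and is not the HELIX code. `MockCt` and `linear_classifier` are hypothetical names, and counting each plaintext multiplication as one level is an assumption of this sketch.

```python
from dataclasses import dataclass
from typing import List, Sequence

@dataclass(frozen=True)
class MockCt:
    """Plaintext stand-in for a ciphertext; tracks multiplicative depth only."""
    value: float
    depth: int = 0

    def add(self, other: "MockCt") -> "MockCt":
        # Addition does not consume a multiplicative level.
        return MockCt(self.value + other.value, max(self.depth, other.depth))

    def add_plain(self, p: float) -> "MockCt":
        return MockCt(self.value + p, self.depth)

    def mul_plain(self, p: float) -> "MockCt":
        # Assumption: every multiplication costs one level.
        return MockCt(self.value * p, self.depth + 1)

def linear_classifier(x: Sequence[MockCt],
                      weights: Sequence[Sequence[float]],
                      bias: Sequence[float]) -> List[MockCt]:
    """Compute W x + b using one multiplication per term, then additions."""
    logits = []
    for row, b in zip(weights, bias):
        acc = x[0].mul_plain(row[0])
        for ct, w in zip(x[1:], row[1:]):
            acc = acc.add(ct.mul_plain(w))
        logits.append(acc.add_plain(b))
    return logits

features = [MockCt(v) for v in (1.0, 2.0, 3.0)]
weights = [[0.5, -1.0, 2.0], [1.0, 1.0, 1.0]]
bias = [0.25, -6.0]
logits = linear_classifier(features, weights, bias)
```

Every output sits at depth 1 because no product is ever multiplied again. A full transformer chains many multiplications, which is where deep circuits and bootstrapping come in.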

→ Prior methods' communication cost: 280.99GB per query (Iron), 25.74GB (BOLT), 68.6GB (MPCFormer)

→ HELIX communication cost: less than 1MB per query

→ Prior methods' latency: 20-60+ seconds per query

→ HELIX latency: sub-second

→ Cross-model CKA similarity: 0.595 to 0.881 across GPT, Gemini, Qwen, Mistral, Cohere

→ Text generation quality: 60-70% of single-model baseline for high-compatibility pairs

→ Tokenizer compatibility predicts generation quality with r=0.898
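As a quick back-of-the-envelope check on the communication figures listed above, this snippet converts them into lower bounds on the reduction factor. It assumes decimal units (1 GB = 1000 MB) and treats "less than 1MB" as an upper bound of exactly 1 MB. The constant and function names are illustrative.

```python
# Figures quoted in the post, in GB per query.
PRIOR_GB = {"Iron": 280.99, "BOLT": 25.74, "MPCFormer": 68.6}
HELIX_MB_UPPER_BOUND = 1.0   # the post says "less than 1MB"
MB_PER_GB = 1000             # assumption: decimal units

def min_reduction_factor(prior_gb: float,
                         helix_mb: float = HELIX_MB_UPPER_BOUND) -> float:
    """Lower bound on how many times less data is sent per query."""
    return prior_gb * MB_PER_GB / helix_mb

reduction = {name: round(min_reduction_factor(gb))
             for name, gb in PRIOR_GB.items()}
for name, factor in reduction.items():
    print(f"{name}: at least {factor:,}x less communication")
```

Under these assumptions the reduction is at least four orders of magnitude against every listed baseline.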

The finding that should end careers: models above 4B parameters with a tokenizer exact-match rate above 0.7 can generate coherent text across model families using only a linear transformation.

Qwen encoding. Llama decoding. No fine-tuning. No weight sharing. No data transfer. Just matrix multiplication applied to the boundary between two independently trained systems that accidentally became the same thing.

AIien Industries retweeted

🔴 The Moon turned red above one of the oldest wonders on Earth.

As the Blood Moon rose over the Great Pyramids of Giza, the sky delivered a moment where ancient history met cosmic physics. During a total lunar eclipse, Earth moves directly between the Sun and the Moon, casting its shadow across the lunar surface.

But the Moon doesn’t disappear.

Instead, sunlight bends through Earth’s atmosphere. Shorter blue wavelengths scatter away, while the longer red wavelengths curve into the shadow and illuminate the Moon — the same effect that creates our fiery sunsets.

In that moment, the Moon is lit by the combined glow of every sunrise and sunset happening across Earth.
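The blue-versus-red effect described above can be put in numbers with the standard Rayleigh scattering relation, in which scattered intensity scales as 1/λ⁴. The wavelengths below (450 nm for blue, 700 nm for red) are representative values chosen for illustration, not figures from the post.

```python
def rayleigh_ratio(short_nm: float, long_nm: float) -> float:
    """How much more strongly the shorter wavelength scatters (I ~ 1 / wavelength^4)."""
    return (long_nm / short_nm) ** 4

# Assumed representative wavelengths: blue ~450 nm, red ~700 nm.
blue_vs_red = rayleigh_ratio(450.0, 700.0)
print(f"Blue light scatters about {blue_vs_red:.1f}x more than red")
```

With these values blue light is scattered several times more strongly than red, so mostly red light survives the long path through the atmosphere into Earth's shadow.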

Astronomers measure the intensity of this red color using the **Danjon Scale**:

• L0 – Almost invisible dark eclipse

• L1 – Dark gray or brown Moon

• L2 – Deep rust-red tone

• L3 – Bright brick-red glow

• L4 – Copper-orange, very bright eclipse

The darker and deeper the red, the more dust, clouds, or particles are present in Earth’s atmosphere.

Ancient pyramids below.

Orbital mechanics above.

A reminder that the same sky watched by civilizations thousands of years ago is still unfolding above us tonight.

📍 Giza Pyramids, Egypt

👽

Harrison Chase @hwchase17

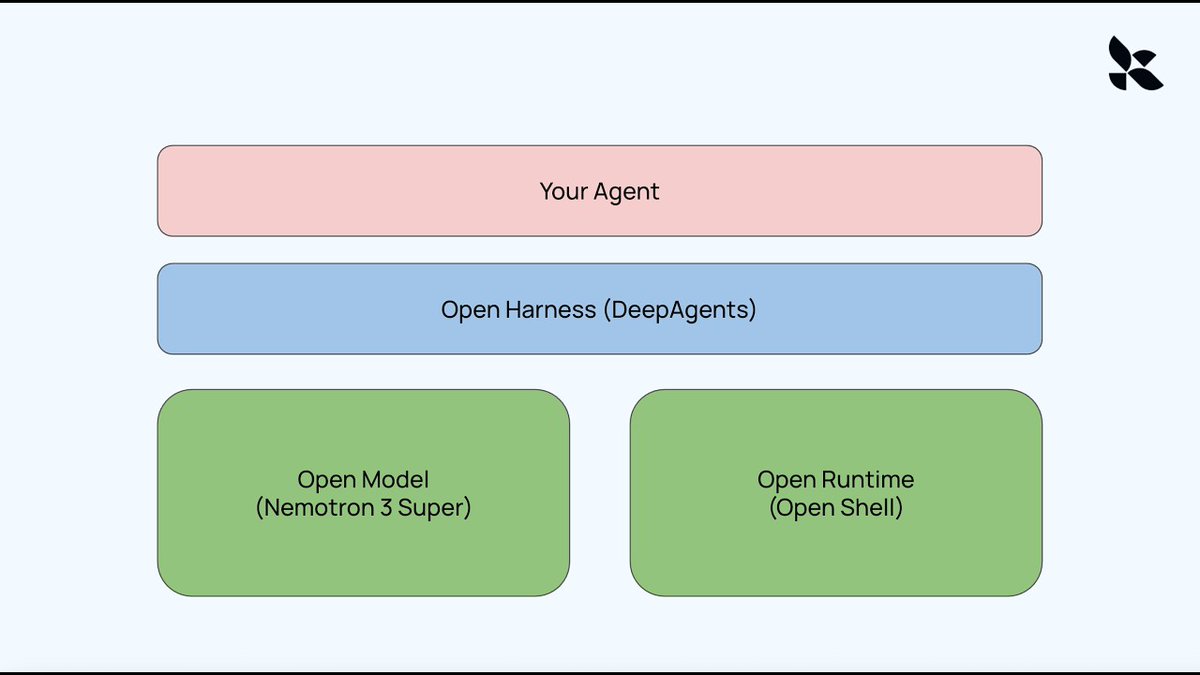

Open Models, Open Runtime, Open Harness - Building your own AI agent with LangChain and Nvidia

Claude Code, OpenClaw, Manus and other agents all use the same architecture under the hood. They consist of a model, a runtime (environment), and a harness. In this video, we show how to create a completely open version of this:

Open Models: Nemotron 3 Super

Open Runtime: Nvidia's new OpenShell

Open Harness: DeepAgents

Video: youtu.be/BEYEWw1Mkmw

Links:

OpenShell DeepAgent: github.com/langchain-ai/o…

Deep Agents: github.com/langchain-ai/d…

OpenShell: github.com/NVIDIA/OpenShe…
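The model / runtime / harness split described above can be sketched in a few lines. Everything here is a toy: `toy_model`, `toy_runtime`, and `run_harness` are hypothetical stand-ins and do not use or mirror the DeepAgents, OpenShell, or Nemotron APIs.

```python
from typing import Callable, List

def toy_model(transcript: str) -> str:
    # Hypothetical policy: request one command, then stop once output exists.
    return "DONE" if "output:" in transcript else "RUN echo hello"

def toy_runtime(command: str) -> str:
    # Hypothetical sandbox that only understands `echo`.
    if command.startswith("echo "):
        return command[len("echo "):].strip()
    return "unsupported"

def run_harness(task: str,
                model: Callable[[str], str],
                runtime: Callable[[str], str],
                max_steps: int = 5) -> List[str]:
    """Harness loop: ask the model for an action, run it, feed back the result."""
    transcript = [f"task: {task}"]
    for _ in range(max_steps):
        action = model("\n".join(transcript))
        if action == "DONE":
            break
        command = action[len("RUN "):] if action.startswith("RUN ") else action
        transcript.append(f"command: {command}")
        transcript.append(f"output: {runtime(command)}")
    return transcript

log = run_harness("say hello", toy_model, toy_runtime)
```

The point of the separation is that each piece can be swapped independently: a different model callable, a different sandbox, or a different loop policy.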
