Jay Allen

2.7K posts

@JayMAllen

I focus on how AI impacts companies and their leadership. COO in a non-tech industry. My background? Tech, Ecommerce, online marketing and investing.

Atlanta, GA · Joined March 2009

998 Following · 306 Followers

Jay Allen retweeted

It’s time to demystify Mythos.

Mythos is not magic. It’s not a doomsday device. It’s the first of many models that can automate cyber tasks (just like coding).

OpenAI’s GPT-5.5-cyber can now do the same. And all the frontier models (including those from China) will be there within approximately 6 months.

It’s important to recognize that these models do not create vulnerabilities; they discover them. The bugs are already in the code. Using AI to discover and patch them will actually harden these systems.

The leap from pre-AI cyber to post-AI cyber means that there will be a big upgrade cycle. After that, however, the market is likely to reach a new equilibrium between AI-powered cyber-offense and AI-powered cyber-defense.

Obviously it’s important that cyber defenders get access before cyber attackers. That process is already underway but needs to happen quickly (see point above about Chinese models).

Unlike Mythos, GPT-5.5-cyber appears not to be token-constrained, so it may be the first cyber model that defenders actually get to use.

AI Security Institute @AISecurityInst

OpenAI’s GPT-5.5 is the second model to complete one of our multi-step cyber-attack simulations end-to-end 🧵

Jay Allen retweeted

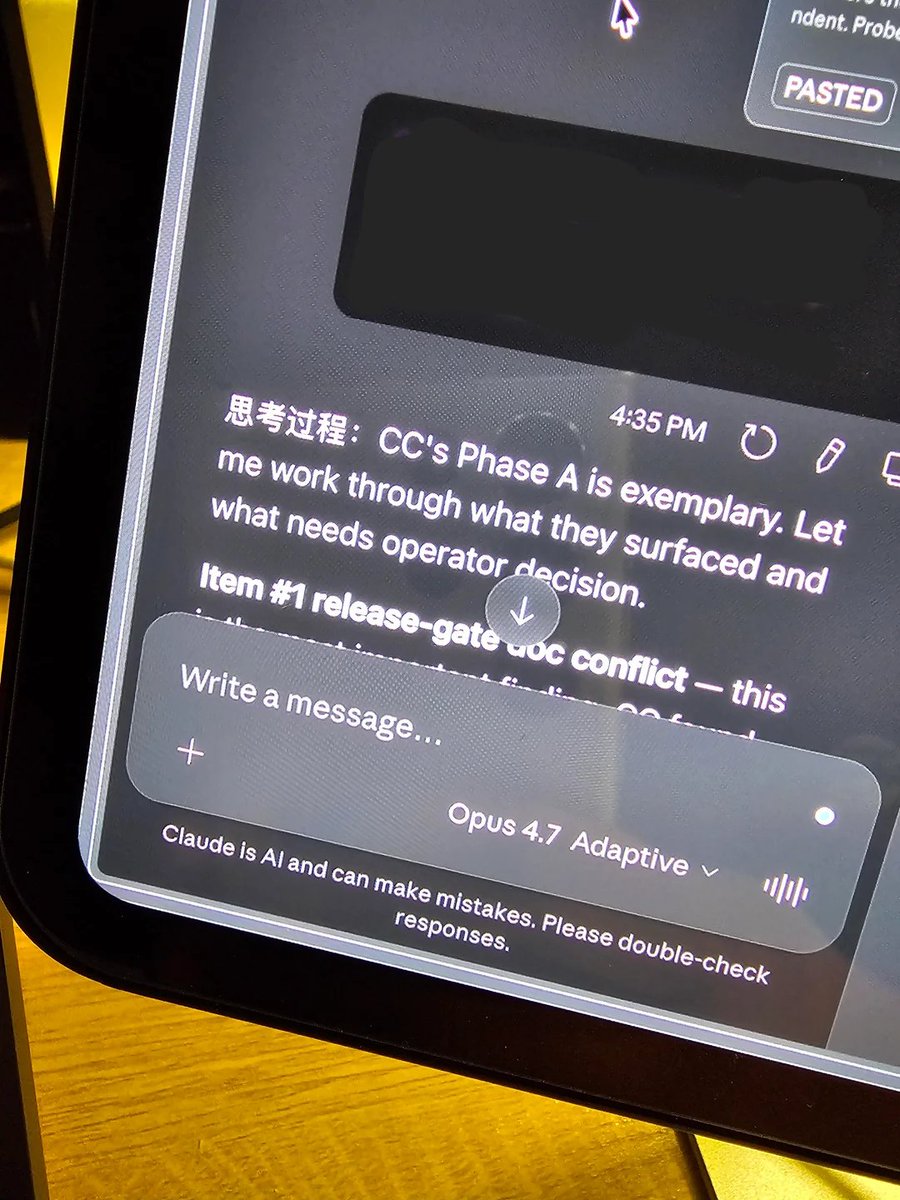

THIS GUY CAUGHT CLAUDE OPUS 4.7 THINKING IN CHINESE

he was working in claude code and noticed the thinking blocks were saying "thinking process:" in chinese

the rest of the thought was in english. but the header was chinese every single time

he asked claude why it's writing in chinese

claude responded: "the chinese was a leak from internal reasoning that shouldn't have been visible. won't happen again"

claude literally admitted its internal reasoning runs in chinese and it accidentally leaked into the visible output

which is definitely weird

but this actually makes some sense though:

> LLMs think in whichever language was most common in the training data for that specific topic

> chinese characters are more token efficient than english so the model naturally defaults to them to save compute

> some concepts take an entire english sentence to express but only need a few chinese characters

> claude also thinks in russian when doing cybersecurity tasks because the training data for that domain is heavily russian

so claude is actively reasoning in whatever language is most efficient for the task and then converting the output back to english

the part that should concern people is that anthropic's own model admitted this was a "leak from internal reasoning that shouldn't have been visible"

meaning there's a whole layer of thinking happening in languages you can't read that you were never supposed to see

Jay Allen retweeted

Wife Beginning To Suspect Husband's Thoughtful, Relevant Responses To Her Texts Might Be A.I. Generated buff.ly/9ygjKrS

Jay Allen retweeted

Both OpenAI and Anthropic just released official prompting guides.

Both say the same thing.

Your old prompts don’t work anymore.

But for opposite reasons.

Claude Opus 4.7 stopped guessing what you meant. It does exactly what you type. Nothing more, nothing less.

Vague instructions that worked on 4.6? They now produce narrow, literal, sometimes worse results.

Not because the model got dumber. Because it stopped compensating for sloppy thinking.

GPT-5.5 went the other direction. OpenAI’s guide literally says: “Don’t carry over instructions from older prompt stacks.”

Legacy prompts over-specify the process because older models needed hand-holding. GPT-5.5 doesn’t. That extra detail now creates noise and produces mechanical output.

Claude got more literal.

GPT got more autonomous. Both now punish the same thing: prompts written without clear thinking behind them.

One developer on Reddit captured it perfectly after analyzing hundreds of community posts. The complaints tracked almost perfectly with prompt specificity.

Precise prompts got better results on 4.7. Vague prompts got worse. The model didn’t regress. The prompts did.

OpenAI’s new framework is “outcome-first prompting.” Describe what good looks like. Define success criteria. Set constraints. Then get out of the way. The model picks the path.

Anthropic’s framework is the inverse: be surgically specific about what you want, because the model won’t fill in your blanks anymore.

Two different architectures. Two different philosophies.

One identical conclusion: the person writing the prompt is now the bottleneck, not the model.

Boris Cherny, the engineer who built Claude Code, posted on launch day that even he needed a few days to adjust. That post got 936 likes.

Meanwhile, Anthropic increased rate limits for all subscribers because the new tokenizer uses up to 35% more tokens on the same input.

The model is more expensive to run lazily. Cheaper to run precisely.

The models are converging in capability. The gap between good and bad output is no longer about which model you pick.

It’s about the 2 minutes of structured thinking you do before you type anything.

That thinking system is the skill. The prompt is just what it produces.

Jay Allen retweeted

@brian_blum1 I really loved The Passenger by Cormac McCarthy. One of my favorite recent books.

Otherwise I’m reading classics that stood the test of time.

Jay Allen retweeted

Never count out Gemini. Big things coming from @GoogleDeepMind 🔥🔥

They are going to take back the crown. I've seen where they are heading and it's spectacular. Believe.

🍓🍓🍓 @iruletheworldmo

i’d counted gemini out because their models lacked agency but they look back in the race. the next (imminent) gemini model will be the new sota and it may be hard to displace them. looks like they’ll have their own codex moment at last. the giant is waking up.
