deva

42.9K posts

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searchers), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

English

codex team: we shipped a new update,

here’s your token reset

claude team: you’re the problem have you tried using it less

Lydia Hallie ✨@lydiahallie

Thank you to everyone who spent time sending us feedback and reports. We've investigated and we're sorry this has been a bad experience. Here's what we found:

English

deva retweeted

Will be reviewing this PR today!

0xByte@0xbyt4

built computer use into hermes agent tell it what to do from your phone → it controls your mac no sandbox real desktop, real apps, real time a complex example : diagram on freeform @Teknium @NousResearch @claudeai for more : github.com/NousResearch/h…

English

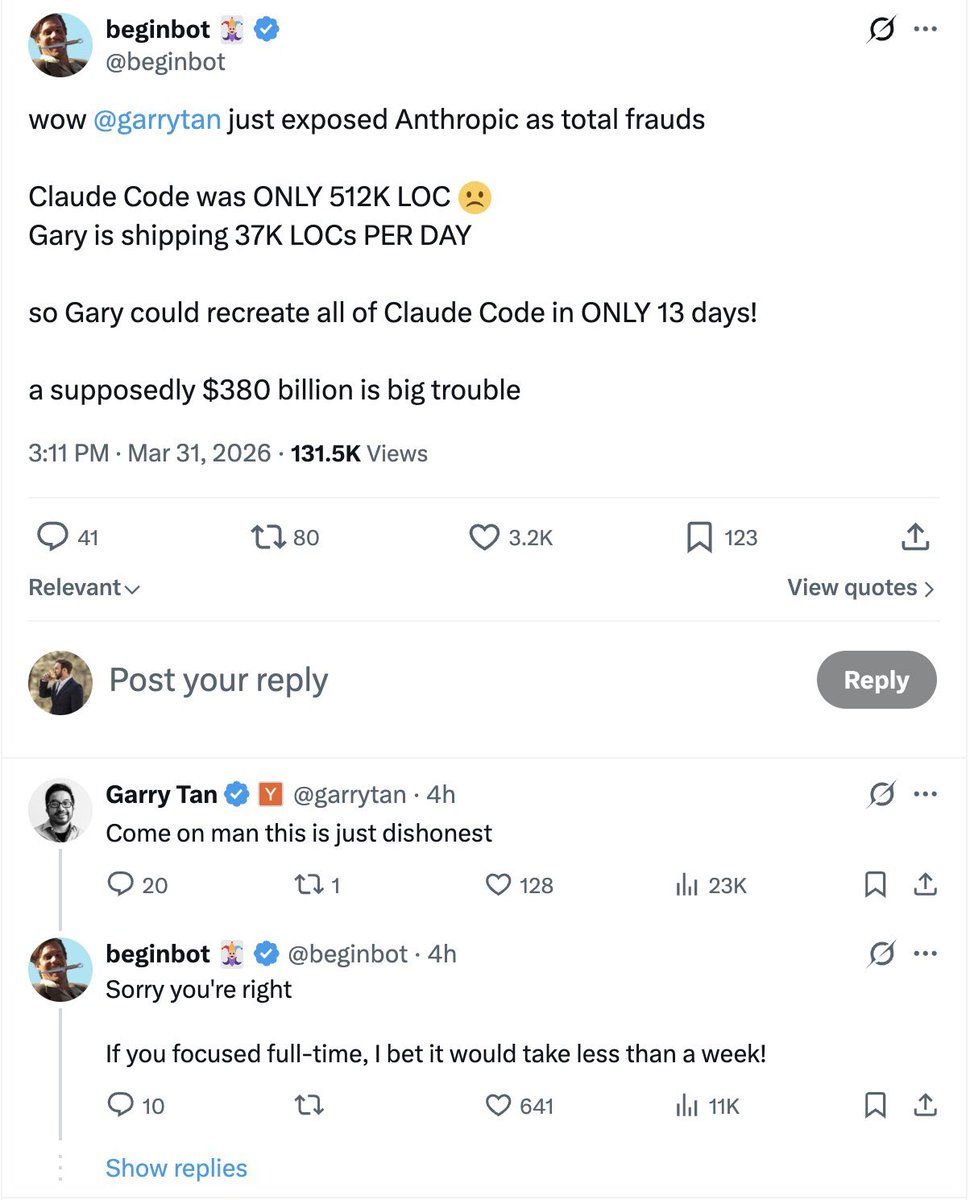

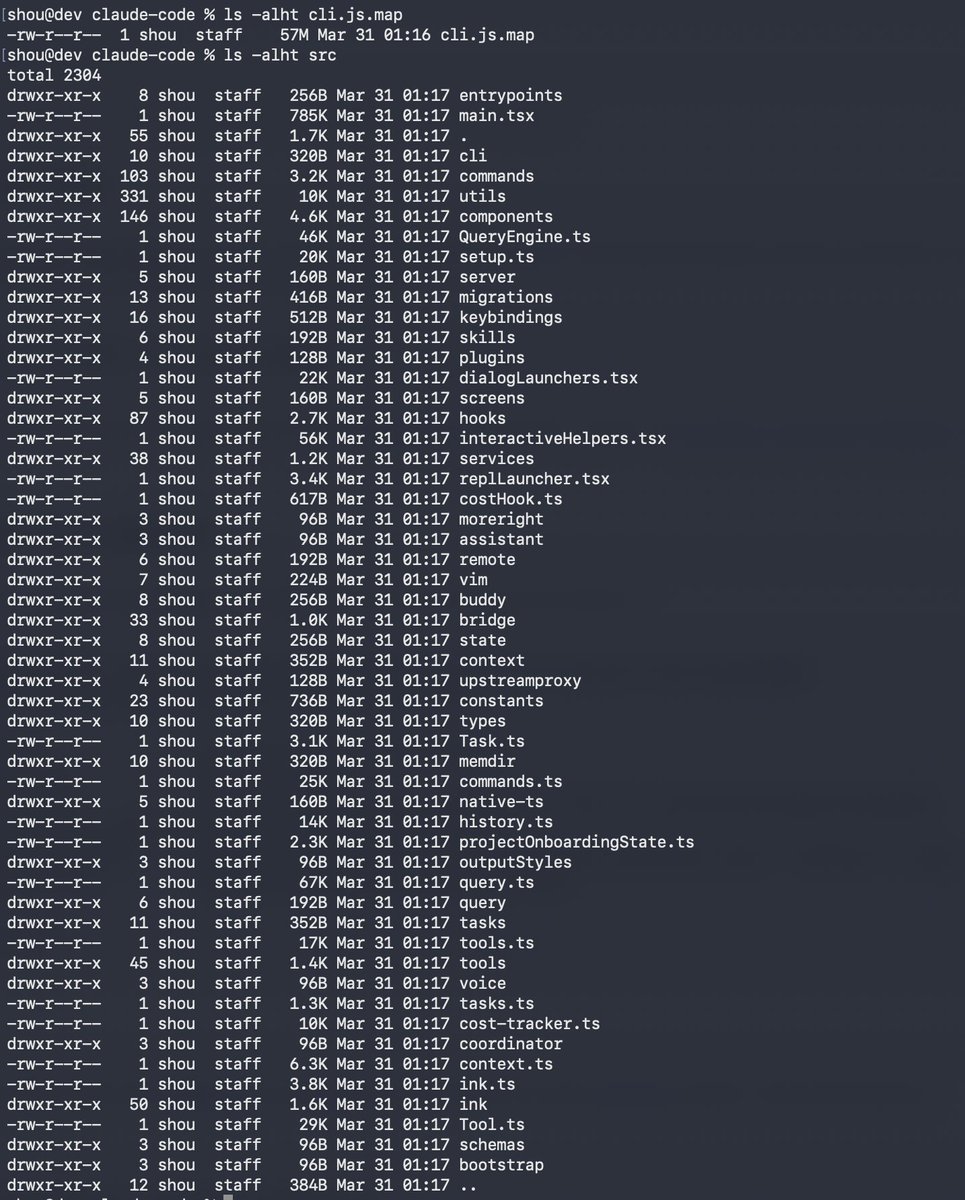

fuck it here’s the claude code leaked source breakdown nobody is talking about yet

> main.tsx is 803,924 bytes. one file.

> there’s a full tamagotchi pet system dropping april 1st that includes 18 species, gacha rarities, a “SNARK” stat

> kairos: autonomous agent, monitors github PRs, sends push notifications

> ultraplan: spins up a 30 min opus session on a remote server to plan your entire task

coordinator mode: multi-agent swarm with workers and scratchpads

> agent triggers: cron-based scheduled tasks, basically a CI/CD agent built into claude

> 18 hidden slash commands sitting disabled: /bughunter, /teleport, /autofix-pr

> anthropic hex encoded the word “duck” to dodge their own build scanner

> 460 eslint-disable comments in production

> deprecated functions actively running with names like writeFileSyncAndFlush_DEPRECATED()

> unreleased: autonomous agent, multi-agent swarm, cron-based CI/CD agent, 18 hidden slash commands

this is a $380B company. the .map file was just sitting in the npm package.

if their tooling flagged the word duck and nobody caught the source map, what else shipped?

English

deva retweeted

Claude code source code has been leaked via a map file in their npm registry!

Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip

English

deva retweeted

deva retweeted

@elliotarledge had the same thought process while building it but the utility i feel is the way i prompted the notes to be generated to consist of opposing view points and to question what the article is about and to be a devils advocate

ngl the improvements have been phenomenal

English

@devaxsha is this graph useful to you in any way other than that it looks cool?

English

@devaxsha @nickvasiles Most note systems store information.

The "Next Level" ones metabolize it.

English