ebbi

34.8K posts

Pinned Tweet

ebbi retweeted

If you are born into a family with financial security I actually think dedicating your life to actively trying to help people is probably the best you could do.

𝐍𝐢𝐨𝐡 𝐁𝐞𝐫𝐠 🇮🇷 ✡︎@NiohBerg

Privilege is having rich parents who set you up for life so that you can spend your entire youth doing useless activism while never worrying about having a real job.

ebbi retweeted

Tobey was Christian

Andrew was Jewish

So it’s only natural

Steve@itz_Steve__

welcome to brand new day

ebbi retweeted

i didn’t even realize it at first but this gotta be 10/10 gif usage 😭😭

shay᭡.ִֶָ𓂃@yappingbambix

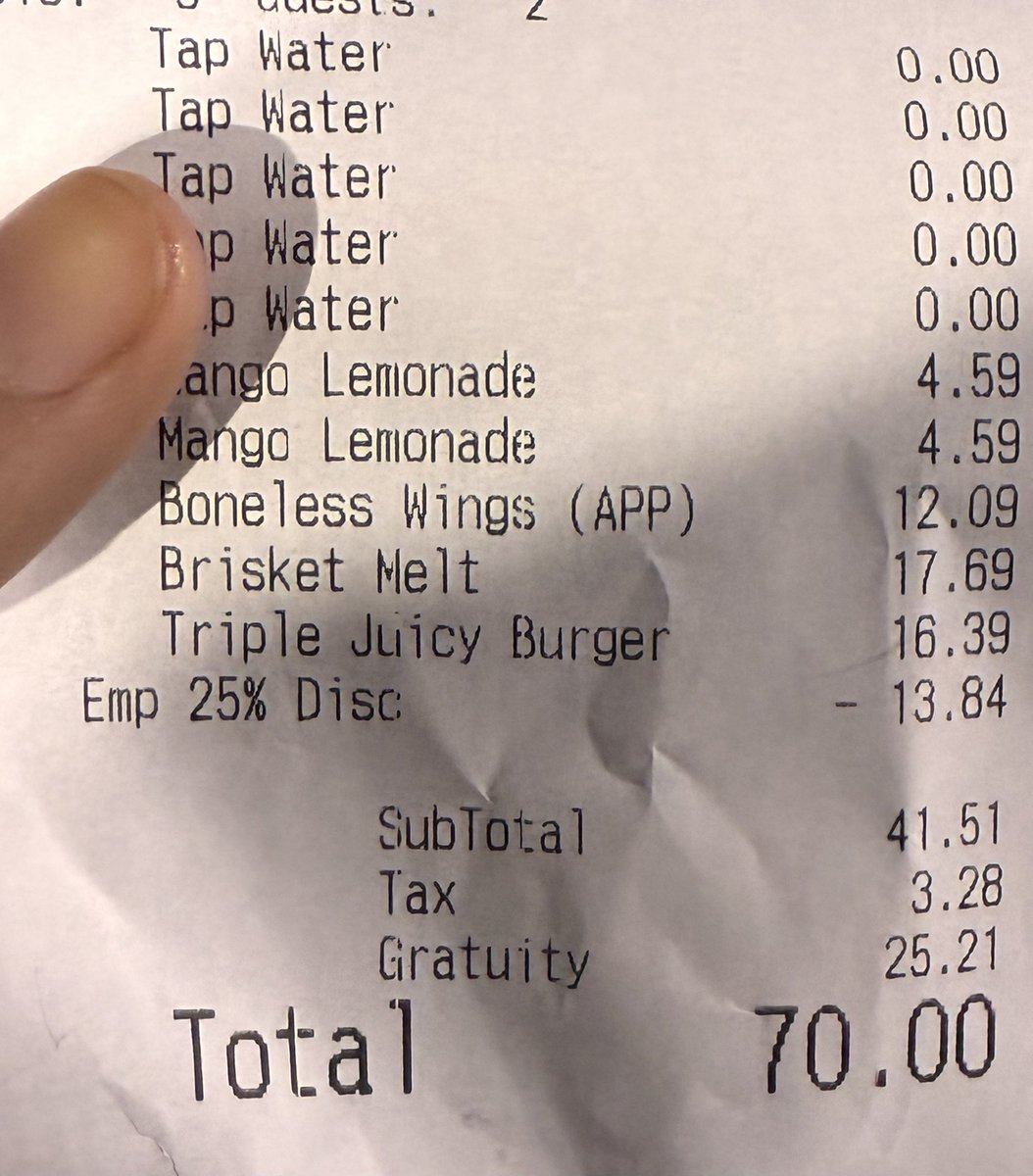

I bought my dad a gift with his money

ebbi retweeted

It was never going to be honey.

Food Blogger@foodietechlab

Honey isn’t available, what are you topping these pancakes with?

ebbi retweeted

I can see why the Italians saw this and invented unemployment

James Lucas@JamesLucasIT

One of the most beautiful views on Earth. With a population of only 379, Portofino is among the most stunning towns on the Italian Riviera.

ebbi retweeted

The Entire Japanese Team is doing the Luffy Gear poses 😭 x.com/kyouchan0127/s…
