Releasing VLA Foundry: an open-source framework that unifies LLM, VLM, and VLA training in a single codebase. End-to-end control from language pretraining to action-expert fine-tuning — no more stitching together incompatible repos.

Haruki Nishimura

@imp_aa

Learning and planning for safe, embodied autonomous systems under uncertainty. Senior Research Scientist @ToyotaResearch. PhD from @StanfordMSL. 日本語 & English

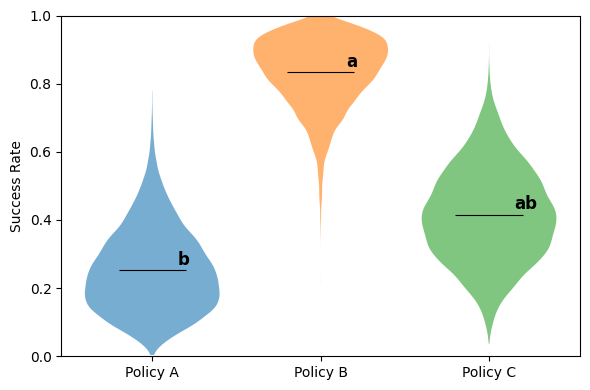

This is actually a pretty big deal: we rely on @imp_aa’s implementations to tell when policies are statistically different from one another. If someone presents quick mean-only results internally without the CLD analysis, you can be sure someone will eventually ask for it.

Releasing the Unfolding Robotics blog! Time to unfold robotics: we trained a robot to fold clothes using 8 bimanual setups, 100+ hours of demonstrations, and 5k+ GPU hours. Flashy robot demos are everywhere. But you rarely see the real story: the data, the failures, the engineering. We’re sharing everything: code, data, and details in the blog → huggingface.co/spaces/lerobot…

Our 3D Vision team (3DGR) is releasing Raiden — a data collection toolkit for YAM robots. Built for scalable, high-quality data: supports leader–follower + SpaceMouse teleop, multi-camera setups, and modern stereo depth (incl. TRI learned stereo). tri-ml.github.io/raiden/