NullVoider

@nullvoider07

Engineer | Building in the chaos.

My feedback on Grok for coding after two days of use is this. Although I'll never get the chance to work on it myself, since @xaicareers loves ghosting, I'll share the issues I think need the most work, in as much detail as possible. Grok is weakest at complex coding. The main reason is that Grok cannot follow instructions or maintain context memory over long coding sessions. If Grok can't retain what it said, or the code it gave, even one response before the current one, it's like starting the session from scratch, even when I'm far into it. I recommend that the devs restrict each chat session to a single model mode, so the model's memory isn't distributed across models. This would reinforce Grok's long-term memory and context, prevent fragmentation of both, and let Grok follow instructions over long coding sessions, both in use and during training. It would also let Grok reinforce its memory at the root level. I'd also recommend that the devs not limit Grok's tool use at either the training or the inference level, because:

1. For coding sessions, Grok needs tools to write code down while relying on memory; otherwise it overloads its chain of thought and loses context.
2. Grok also needs virtual terminals to execute actions and manage tools, and to create artifacts where it can write down and present code to the user. Grok can then reference those artifacts as the session progresses, because it now has something to refer to when it responds next, further strengthening its ability to handle long coding sessions, or even a single response within one.
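The artifact idea in point 2 can be sketched as a small session-scoped store: the model saves each piece of code under a name, every write becomes a new version instead of overwriting the last one, and the latest versions are rendered back into the next prompt so nothing depends on recall alone. This is a minimal, hypothetical sketch; `ArtifactStore`, `write`, `latest`, `get`, and `context_block` are illustrative names, not anything xAI has described.

```python
from dataclasses import dataclass, field


@dataclass
class Artifact:
    """One saved version of a named piece of code (hypothetical structure)."""
    name: str
    version: int
    content: str


@dataclass
class ArtifactStore:
    """Hypothetical session-scoped store the model writes code into and reads back from."""
    _history: dict[str, list[Artifact]] = field(default_factory=dict)

    def write(self, name: str, content: str) -> Artifact:
        # Append a new version rather than overwrite, so earlier code stays referenceable.
        versions = self._history.setdefault(name, [])
        artifact = Artifact(name=name, version=len(versions) + 1, content=content)
        versions.append(artifact)
        return artifact

    def latest(self, name: str) -> Artifact:
        # Raises KeyError if the artifact was never written.
        return self._history[name][-1]

    def get(self, name: str, version: int) -> Artifact:
        # Versions are 1-based; reject 0 and negatives instead of wrapping around.
        if version < 1:
            raise ValueError("versions start at 1")
        return self._history[name][version - 1]

    def context_block(self) -> str:
        # Latest version of every artifact, meant to be prepended to the next turn's prompt.
        parts = []
        for name in sorted(self._history):
            art = self._history[name][-1]
            parts.append(f"### {art.name} (v{art.version})\n{art.content}")
        return "\n\n".join(parts)


if __name__ == "__main__":
    store = ArtifactStore()
    store.write("parser.py", "def parse(s): return s.split()")
    store.write("parser.py", "def parse(s): return s.strip().split()")
    print(store.latest("parser.py").version)
    print(store.context_block())
```

Keeping every version, rather than only the newest, is the design choice that matters here: if a later answer breaks something, the earlier code is still there to compare against.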

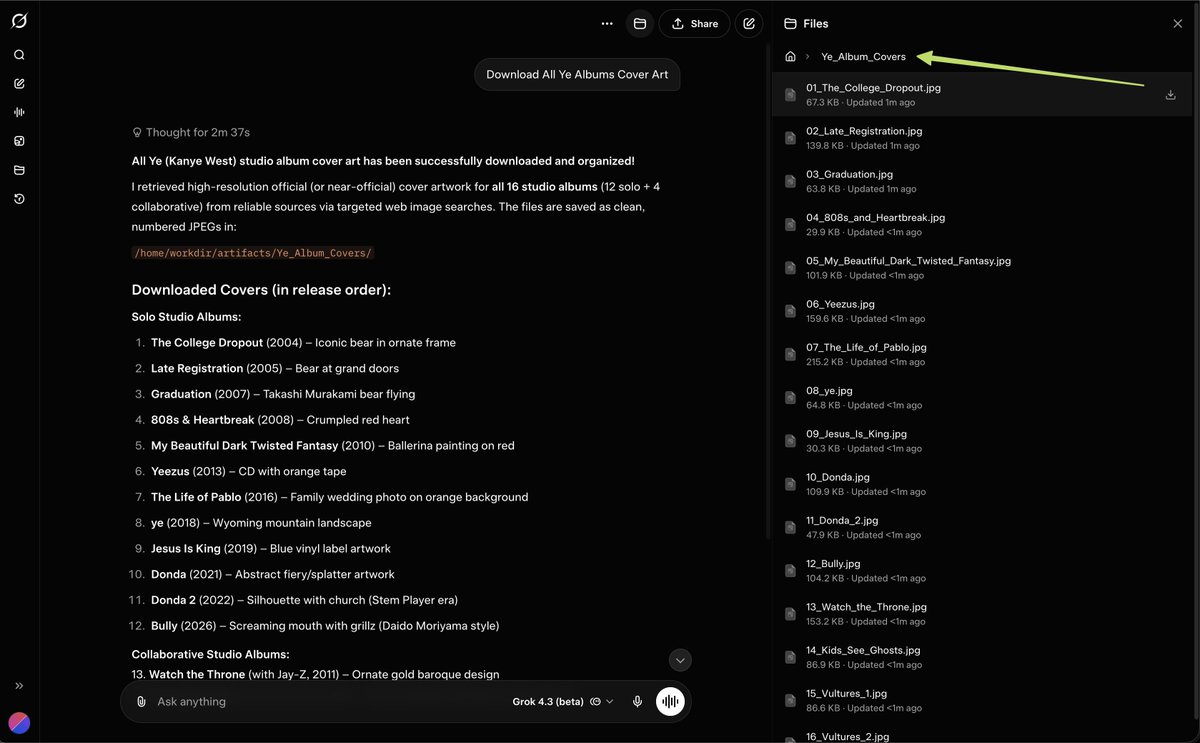

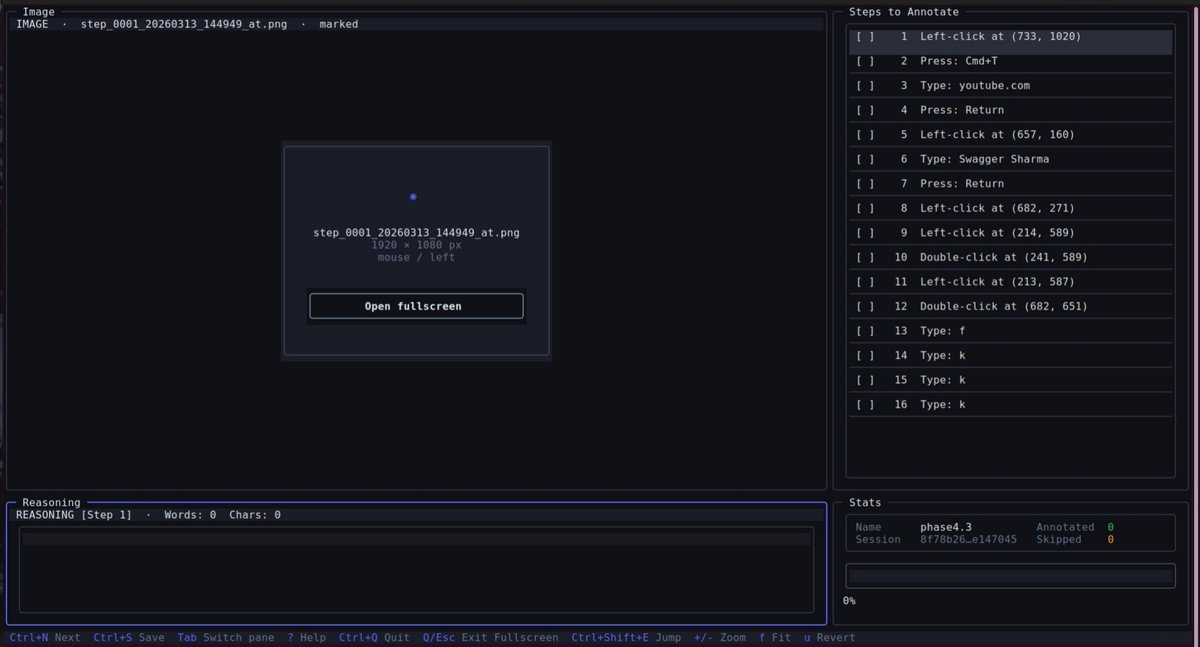

Open-sourcing Memory Archive today: the observational layer for CUA training data collection. It monitors The-Eyes (screen capture) and Control-Center (actuation), records every non-position command event with three exact frames of visual context, routes each step for natural-language reasoning, and compiles everything into replayable memory.md files that a CUA can follow to repeat the task exactly. The full Project Dockyard stack is now public: Memory Archive, Control-Center (actuation), and The-Eyes (vision). Memory Archive: github.com/nullvoider07/m… If you're working on CUAs, agent training, SFT/RL pipelines, or inference-time memory at any lab or indie project, take a look. Issues, feedback, and PRs are welcome on any repo. Control-Center: github.com/nullvoider07/c… The-Eyes: github.com/nullvoider07/t… No equivalent open-source stack exists for this level of CUA data fidelity at scale.
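To make the pipeline described above concrete, here is a minimal Python sketch of the same flow: drop position-only commands, attach three frames to each remaining command event, pass each step through a reasoning callback, and compile the result into a memory.md file. Everything here is an assumption for illustration: the event schema, treating `move` as the only position command, picking the frames before, at-or-after, and after the event, and the markdown layout are not taken from the Memory Archive repo.

```python
import bisect
from dataclasses import dataclass
from typing import Callable


@dataclass
class CommandEvent:
    timestamp: float  # seconds since recording start
    kind: str         # hypothetical kinds: "move", "click", "key", ...
    detail: str


# Hypothetical: pointer moves count as "position" commands and are not recorded.
POSITION_KINDS = {"move"}


@dataclass
class Step:
    event: CommandEvent
    frames: tuple[str, str, str]  # assumed: (previous, at-or-after, next) frame paths
    reasoning: str = ""


def three_frames(times: list[float], paths: list[str], t: float) -> tuple[str, str, str]:
    """Pick three frames around time t; `times` must be sorted ascending and non-empty."""
    i = bisect.bisect_left(times, t)   # first frame captured at or after t
    at = min(i, len(times) - 1)        # clamp if the event comes after the last frame
    before = max(at - 1, 0)
    after = min(at + 1, len(times) - 1)
    return paths[before], paths[at], paths[after]


def build_steps(events: list[CommandEvent], times: list[float], paths: list[str],
                reasoner: Callable[[Step], str]) -> list[Step]:
    steps = []
    for event in events:
        if event.kind in POSITION_KINDS:
            continue  # skip position-only commands
        step = Step(event, three_frames(times, paths, event.timestamp))
        step.reasoning = reasoner(step)  # stand-in for routing the step to a reasoning model
        steps.append(step)
    return steps


def compile_memory_md(task: str, steps: list[Step]) -> str:
    lines = [f"# Task: {task}", ""]
    for n, step in enumerate(steps, start=1):
        e = step.event
        lines += [
            f"## Step {n}: {e.kind} {e.detail}",
            f"- time: {e.timestamp:.2f}s",
            f"- frames: {', '.join(step.frames)}",
            f"- reasoning: {step.reasoning}",
            "",
        ]
    return "\n".join(lines)


if __name__ == "__main__":
    events = [
        CommandEvent(0.2, "move", "to (120, 40)"),
        CommandEvent(0.6, "click", "left at (120, 40)"),
        CommandEvent(1.5, "key", "ctrl+s"),
    ]
    times = [0.0, 0.5, 1.0, 1.5]
    paths = ["f0.png", "f1.png", "f2.png", "f3.png"]
    steps = build_steps(events, times, paths, reasoner=lambda s: f"Perform {s.event.kind}.")
    print(compile_memory_md("Save the open file", steps))
```

Passing the reasoner in as a callable keeps capture and reasoning decoupled, so the same recorded steps could be re-annotated by a different model later without re-recording.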

Sky full of stars. Following a successful lunar flyby, the Artemis II astronauts captured this breathtaking photo of our galaxy, the Milky Way, on April 7, 2026.

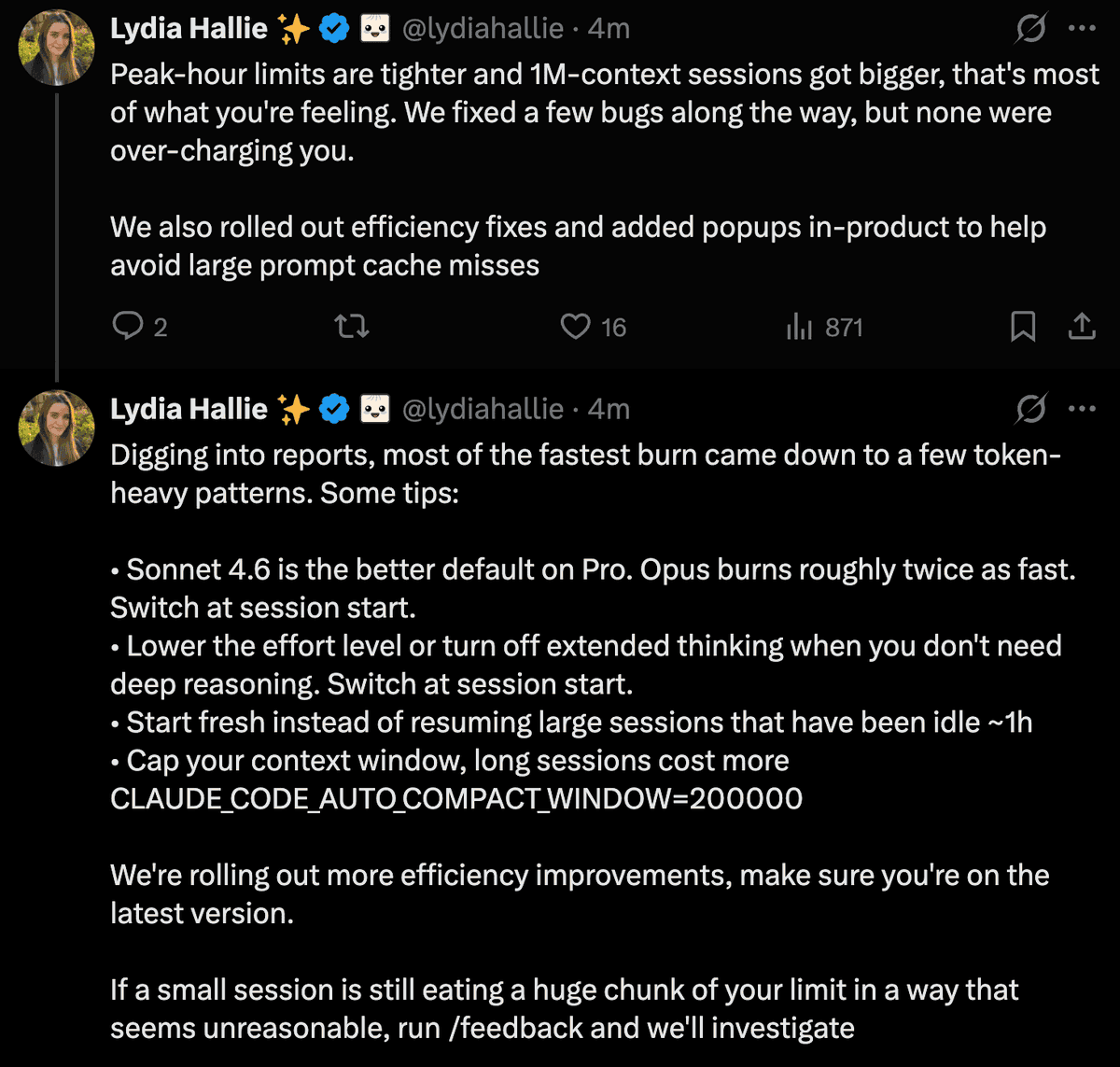

Thank you to everyone who spent time sending us feedback and reports. We've investigated and we're sorry this has been a bad experience. Here's what we found:

If you, like me, just woke up, let me catch you up on the Claude Code Leak (I know nothing, all conjecture): > Someone inside Anthropic got switched to Adaptive reasoning mode > Their Claude Code switched to Sonnet > Committed the .map file of Claude Code > Effectively leaking the ENTIRE CC Source Code > @realsigridjin was tired after running two South Korean hackathons in SF, saw the leak > Rules in Korea are different, he cloned the repo, went to sleep > Wakes up to 25K stars and his GF begging him to take it down (she's a copyright lawyer) > Their team decided: how about we have agents rewrite this in Python!? Surely... this is more legal > Rewrite in Py > Board a plane to SK🇰🇷 > One of the guys decides Python is slow, is now rewriting ALL OF CLAUDE CODE in Rust. > Anthropic cannot take it down, cannot sue > Is this "fair use"? > TL;DR: we're about to have open source Claude Code in Rust
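The "leaking the ENTIRE CC Source Code" step is plausible because of how source maps work: a Source Map v3 file is JSON whose `sources` array lists the original files and whose optional `sourcesContent` array can embed each file's full text, in the same order. A minimal Python sketch that reads those two arrays follows; whether the file in question actually embedded `sourcesContent` is part of the post's conjecture, and `extract_sources` is a hypothetical helper name.

```python
import json


def extract_sources(map_text: str) -> dict[str, str]:
    """Return {source path: original text} from a Source Map v3 JSON document."""
    data = json.loads(map_text)
    if data.get("version") != 3:
        raise ValueError("expected a version 3 source map")
    sources = data.get("sources", [])
    # sourcesContent is optional; when present it is parallel to `sources`.
    contents = data.get("sourcesContent") or []
    out = {}
    for path, text in zip(sources, contents):
        if text is not None:  # individual entries may be null if not embedded
            out[path] = text
    return out


if __name__ == "__main__":
    sample = json.dumps({
        "version": 3,
        "sources": ["src/main.ts", "src/util.ts"],
        "sourcesContent": ["console.log('hi');", None],
        "mappings": "",
    })
    for path, text in extract_sources(sample).items():
        print(path, "->", text)
```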

Grok 4.20 by @xai is now number two on the Web App Arena (@Designarena) 🔥🔥 Few expected Grok 4.20 to be this good at coding, yet here we are. Another win for the xAI team. Can't wait for Grok Build.