Outputlayer

637 posts

@outputlayer

Turning onchain data into growth insights | Building @PredGraph | Custom dashboards & analytics | DMs open

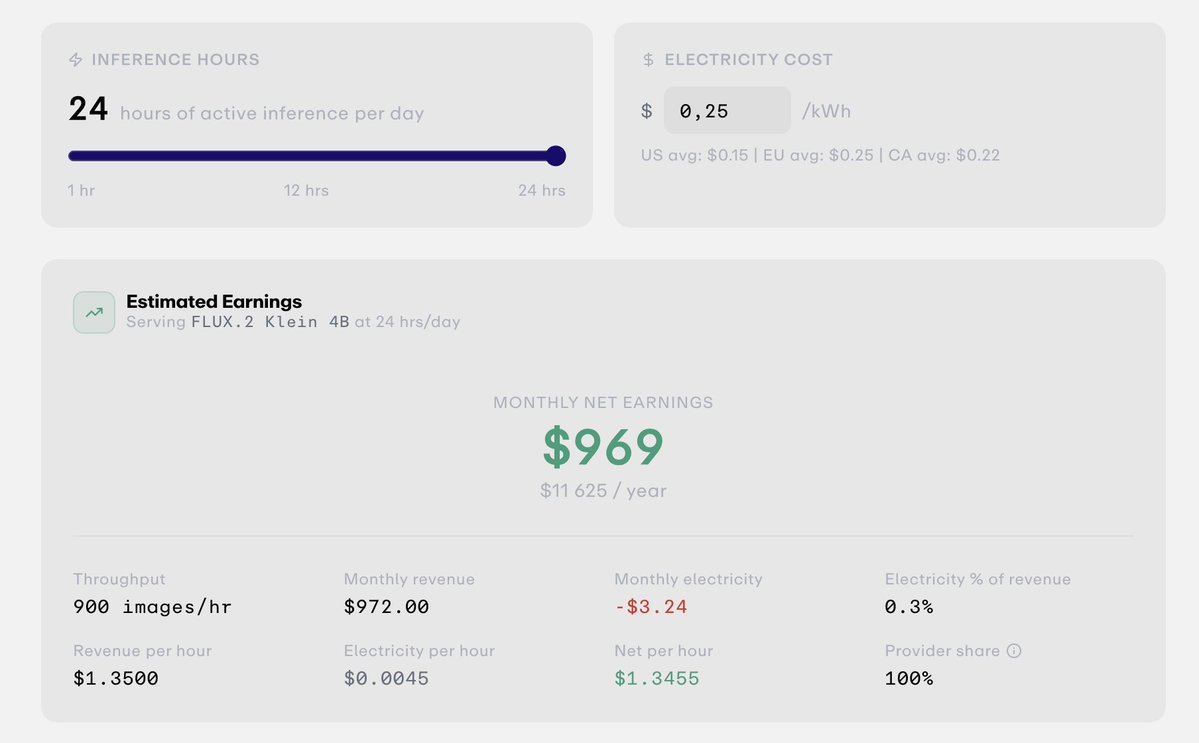

Wake the world's sleeping compute. Look at the Mac nearest to you. What's it doing? Probably nothing. There are 100M+ Macs with Apple Silicon out there. Apple quietly made them *really* good at inference. A $3k Mac runs a 60B model at 30 watts. Most sit idle most of the day. Meanwhile every AI API call passes through three layers of margin before reaching the hardware. We call this the Inference Tax. We got curious: what happens if you connect idle Macs directly to inference demand? This is Darkbloom. Private inference network for idle Macs. darkbloom [dot] dev -- paper + code open. Reply for invite + free credits ↓

@Polymarket is migrating from bridged USDC.e to a brand-new collateral token: Polymarket USD. According to my latest data, Polymarket currently holds $409,772,828 (proxy balances + active positions). If that full balance earns a 5–7% yield after the switch, that is $20.5M – $28.7M annually.
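A quick sanity check of the yield range quoted above, as a minimal sketch. It assumes simple (non-compounded) annual yield applied to the full balance; the function name `annual_yield` is made up for illustration, and the post does not say how the yield would actually be earned or split.

```python
# Holdings figure taken from the post: proxy balances + active positions, in USD.
HOLDINGS_USD = 409_772_828


def annual_yield(principal: float, rate: float) -> float:
    """Simple one-year yield with no compounding (an assumption, not from the post)."""
    return principal * rate


low = annual_yield(HOLDINGS_USD, 0.05)   # 5% scenario
high = annual_yield(HOLDINGS_USD, 0.07)  # 7% scenario

# Express both ends of the range in millions, rounded to one decimal place.
print(f"${low / 1e6:.1f}M - ${high / 1e6:.1f}M per year")
```

Under those assumptions the numbers round to the same $20.5M and $28.7M range given in the post.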

LLM Knowledge Bases
Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:
Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
IDE: I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).
Q&A: Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.
Output: Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.
Linting: I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
Extra tools: I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
Further explorations: As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.
TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.
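To make the "small and naive search engine over the wiki" idea concrete, here is one way such a tool might look. This is a sketch under assumptions, not the author's code: the names `load_wiki`, `tokenize`, and `search` are hypothetical, the ranking is plain TF-IDF chosen for illustration, and the wiki is assumed to be a directory tree of .md files as described in the post.

```python
import math
import re
from collections import Counter
from pathlib import Path


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def load_wiki(root: str) -> dict[str, str]:
    """Read every .md file under root into {relative_path: text}."""
    base = Path(root)
    return {
        str(path.relative_to(base)): path.read_text(encoding="utf-8")
        for path in base.rglob("*.md")
    }


def search(docs: dict[str, str], query: str, top_k: int = 5) -> list[tuple[str, float]]:
    """Rank documents against the query with a simple TF-IDF score."""
    counts = {name: Counter(tokenize(text)) for name, text in docs.items()}
    n_docs = len(counts)
    scores: dict[str, float] = {}
    for term in set(tokenize(query)):
        # Document frequency: how many articles mention the term at all.
        df = sum(1 for c in counts.values() if term in c)
        if df == 0:
            continue
        idf = math.log(1 + n_docs / df)
        for name, c in counts.items():
            if c[term]:
                tf = c[term] / sum(c.values())
                scores[name] = scores.get(name, 0.0) + tf * idf
    # Highest score first, truncated to the requested number of hits.
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:top_k]


# Tiny in-memory example standing in for a real wiki directory.
example_docs = {
    "a.md": "inference on apple silicon",
    "b.md": "prediction markets and collateral",
    "c.md": "apple silicon inference inference",
}
results = search(example_docs, "inference")
```

For a real wiki, `search(load_wiki("wiki/"), "some query")` would do the same over files on disk (the `wiki/` path is a placeholder). Wrapping that call in a small command-line script is one way to hand it to an LLM agent as a tool, as the post describes.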

We're hosting a one-day hackathon around automated research!
* Thursday, April 9 (8 AM - 4 PM PT)
* SF and online
* Hosted by @paradigm
* Compete in never-before-seen optimization challenges, or build your own projects
* $9,000 in total prizes
Application in 🧵

Built a CLI for agent-based RWA (@OndoFinance) portfolio management. The current MVP runs on top of @JupiterExchange on @solana. P.S. If you can help with whitelisting or want to collaborate, DM me.

My dear front-end developers (and anyone who’s interested in the future of interfaces): I have crawled through depths of hell to bring you, for the foreseeable years, one of the more important foundational pieces of UI engineering (if not in implementation then certainly at least in concept): Fast, accurate and comprehensive userland text measurement algorithm in pure TypeScript, usable for laying out entire web pages without CSS, bypassing DOM measurements and reflow