0dteezy

1.4K posts

@stevibe @makulas1913 Just for reference I’m getting 90TPS with 3090

Ollama is just that bad

Qwen3.6 35B-A3B dropped yesterday, so I ran it on 4 GPUs to see how it performs:

🟣 RTX 3090 — 49.78 tok/s, TTFT 852ms

🟡 RTX 4090 — 118.93 tok/s, TTFT 686ms

🟢 RTX 5090 — 160.37 tok/s, TTFT 409ms

🔵 DGX Spark — 59.98 tok/s, TTFT 228ms

I went with ollama as the backend because honestly, it's the easiest way for most people to get started. One command, model pulled, done.

I used Q4_K_M (24GB) across all four cards. The reason is the 3090 and 4090 don't support NVFP4 (only the 5090 and DGX Spark could use it). Keeping the same quant everywhere felt like the fairest way to compare.

And yes, you can absolutely squeeze more performance out of every card with vLLM, SGLang, or TensorRT-LLM. But that's not what this test is about. This is just the out-of-the-box experience for folks who own a GPU and want to try the new model tonight.
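
For anyone who wants to reproduce numbers like these: a minimal sketch of how tok/s can be derived from the timing fields Ollama returns with a non-streaming `/api/generate` response (`eval_count`, plus `eval_duration`, `prompt_eval_duration`, and `load_duration` in nanoseconds). The post doesn't say how its figures were measured, so this is not the author's method. The TTFT value here is only a rough proxy (load time plus prompt processing), and the sample values are made up for illustration, not measured.

```python
def ollama_throughput(resp: dict) -> dict:
    """Derive decode speed and a rough TTFT proxy from Ollama timing fields.

    Durations in the response are reported in nanoseconds.
    """
    ns_per_s = 1e9
    ns_per_ms = 1e6
    # Generated tokens divided by the time spent generating them.
    decode_tps = resp["eval_count"] / (resp["eval_duration"] / ns_per_s)
    # Assumption: time to first token is roughly model load + prompt processing.
    approx_ttft_ms = (
        resp.get("load_duration", 0) + resp["prompt_eval_duration"]
    ) / ns_per_ms
    return {"decode_tok_per_s": decode_tps, "approx_ttft_ms": approx_ttft_ms}


# Illustrative values only (not a real measurement).
sample = {
    "eval_count": 498,
    "eval_duration": 10_000_000_000,      # 10 s spent generating
    "prompt_eval_duration": 800_000_000,  # 0.8 s processing the prompt
    "load_duration": 50_000_000,          # 0.05 s loading the model
}

stats = ollama_throughput(sample)
print(f"{stats['decode_tok_per_s']:.2f} tok/s, "
      f"~{stats['approx_ttft_ms']:.0f} ms to first token")
```

Feeding it the JSON from your own run with the same quant and prompt on each card gives figures that can be compared across cards.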

85-100 tok/s on the 3090 with qwen 3.6 already? that's in line with what 3.5 MoE was doing. drop your full flags and context length you tested at, i'm pulling 3.6 on the 5090 24gb and will run the same config for a direct comparison.

if anyone else is running qwen 3.6 on a 3090 or any consumer card drop your tok/s, quant, and flags below. building the community benchmark sheet before i publish my own numbers

Jacob Verdoorn@VerdoornJacob

@sudoingX 3090 getting 85-100 t/s on cpp server with new qwen3.6 35b a3b ud q4 k m 262k context

Oh.... also their SABER abliterated twin is done!

Again quants are coming so they will be there when they get there!

huggingface.co/DJLougen/Ornst…

huggingface.co/DJLougen/Ornst…

let me clear something up for the new followers. the 5090 mobile has 24gb vram, same class as the 3090.

when i benchmark a model on the 5090 and give you the flags and the tok/s, that translates directly to your 3090 at home. the architecture is newer so the 5090 numbers will be slightly faster maybe, but the configs are identical. if it fits on my machine it fits on yours.

and i'm not stopping at one gpu. 3090 nodes are still in the rotation for controlled comparisons, smaller gpus are coming for the 8gb and 12gb crowd, and nvidia sent me a dgx spark that's clearing customs right now. 128gb unified memory on my desk soon.

7 models loaded on the 5090 today, hermes agent work i've been cooking for weeks is almost ready to ship, and open source keeps dropping new models faster than i can pull them. the benchmark pipeline is about to run nonstop. i am so soo back.

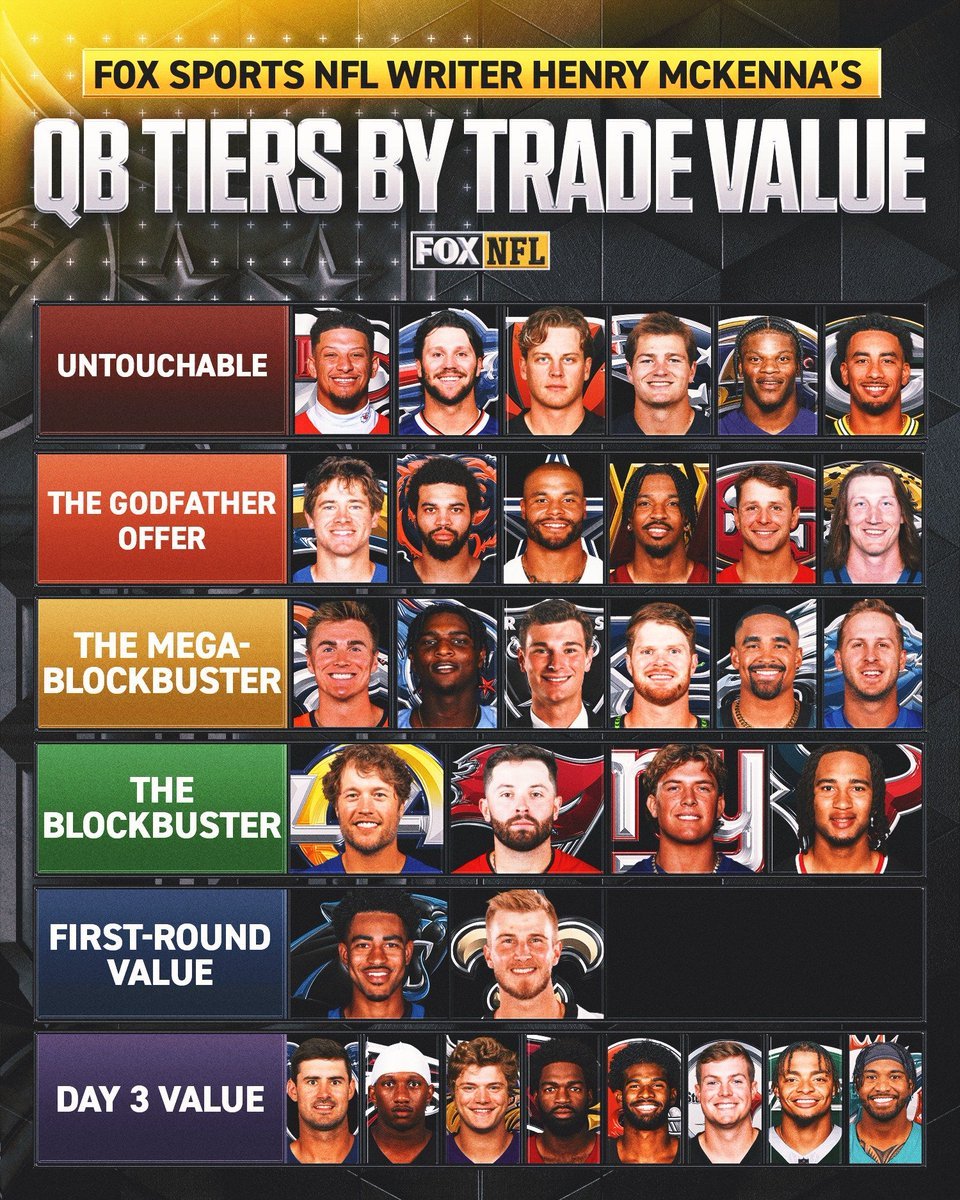

@FBGreatMoments Someone please explain to me what Jordan Love has ever done to make him untouchable

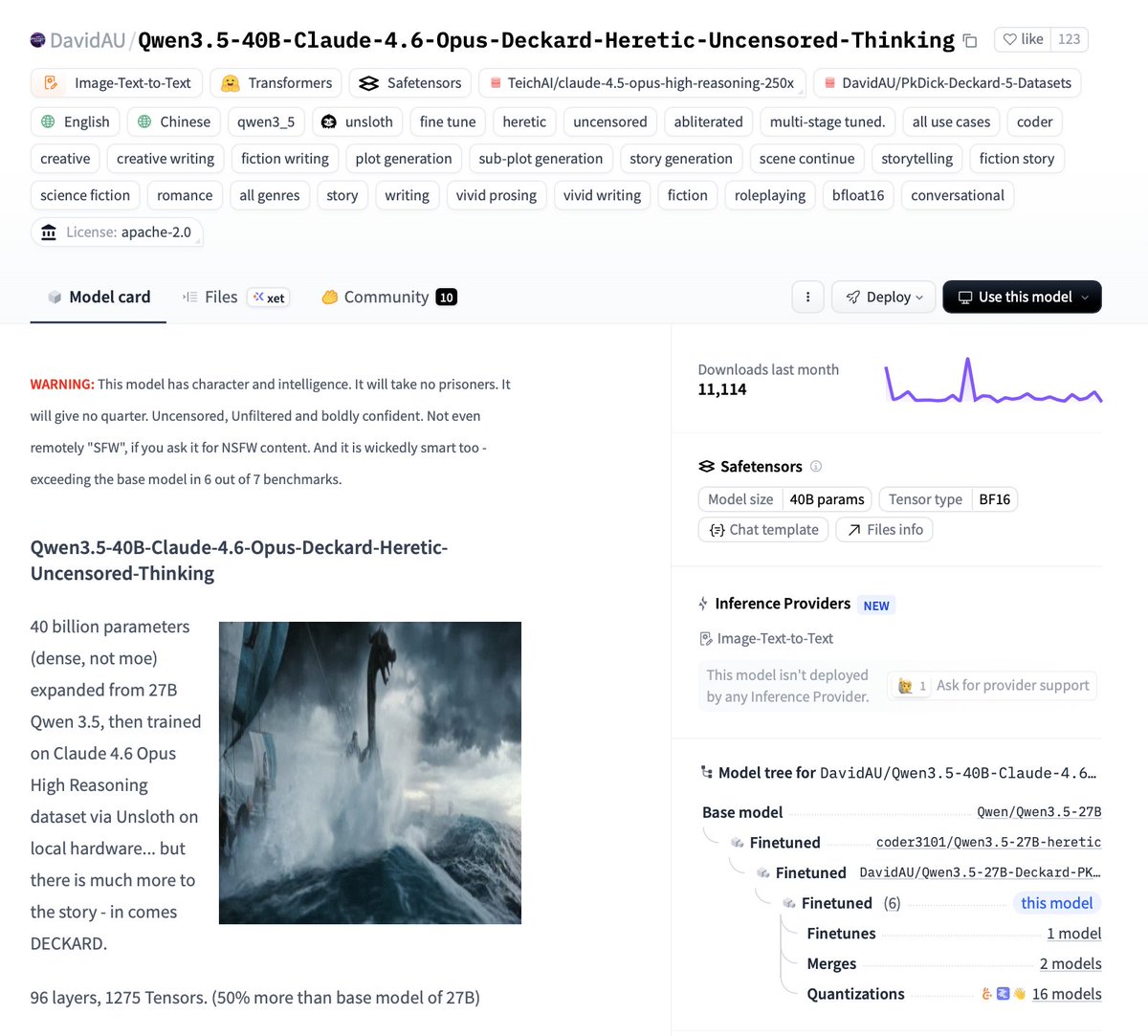

🚨QWEN 3.5 40B DENSE + FULL HERETIC + OPUS 4.6

🤗@huggingface model card:

🧠 40 Billion dense parameters (NOT MoE)

📈 Expanded from 27B → 96 layers + 1,275 tensors

🧪 Multi-stage trained on Claude 4.6 Opus High

⚔️ Fully Heretic-Uncensored first (abliterated)

🔧 Upgraded Jinja template = zero looping...

⚡ Tool calling + 256K context 🧠

Trained via @UnslothAI 🦥on local hardware

And yes it runs on consumer hardware:

💻 Single RTX 4090 friendly (Q4_K_S / IQ3_S )

⚡ Quantized, fast, and ready to go full Deckard mode

Pull this model and share results 👇🏻

So much in this release but the one many have been waiting for above the rest, the GUI dashboard!

Manage and monitor your Hermes Agent from a local web dashboard GUI. Run the `hermes dashboard` command to start it!

Nous Research@NousResearch

Hermes Agent v0.9.0 - “The Everywhere Release” Full changelog below ↓

@BrianRoemmele @DocTooch @CB_AMGSport @Timcast How does having a robot make my mortgage disappear? Or make beef cheaper?

Doc, sharp question, you’re seeing the friction point clearly.

Governments don’t have to participate, and they never really have for the breakthroughs that actually matter. UHI+ is not a government handout program waiting on molasses bureaucracy to cut checks from taxes.

It’s a temporary bridge we build while the real engine kicks in: personal AI and robotics that replicate themselves.

Once a capable robot can make more robots (and they will, faster than any regulator can move), the cost of everything collapses toward zero. “Income” stops being a paycheck or a UBI wire and becomes direct ownership of abundance itself.

No middlemen. No permission required.

There will be an ugly interregnum, legacy systems always resist and lag. That’s exactly why the 5000 Days series isn’t about waiting for saviors. It’s about individual empowerment: you, me, and a few million others owning the means of production at the personal scale before the old order fully cracks.

No one is coming to save us.

But us.

The things I have learned about AI recently have left me shocked.

Suffice it to say there is an agenda in which you will own nothing and you will "be happy"

A tsunami is coming

Many technocrats view this as a good thing, a technology shift to free the minds of humans.

However, the shift will leave millions without meaningful ways to engage the economy, and with a short-term political-economic solution that will not work

In the minds of those working in this system it *will* work in that after several years the system will realign to a new economy.

The tsunami is coming and only some have prepared a boat to weather this economic storm.

After the flood clears there will be many dead and destitute, but a new world will emerge.

This AI tech is already here.

The only reason it's not public is because technocrats are trying to ease the stress on the system

@LottoLabs Someone did a praying app that locks you out of your phone till you "pray" enough during the day. Few months old too

You’re not shipping because of overthinking

They’re shipping because of underthinking

Polymarket@Polymarket

JUST IN: New app lets users pay $1.99 per minute to talk to an AI-generated Jesus.

Timberwolves FC let’s work.

BearsShowYo@BearsShowYo

Since the Bulls will not be in the NBA playoffs, what team should I root for?

@sudoingX @TrustyStuart It's the worst. Took me a month to get an older rog Asus laptop to install Linux and it still crashes after a few mins every time

cracked it open. day one with the rog strix scar 18 5090 i wiped windows and installed linux like i do on every machine and like i tell every builder to do.

and things didn't work. no keyboard rgb. no anime vision matrix on the lid. no logo light, no seat underglow. openrgb doesn't support this model. asus ships exactly zero linux support for a machine with 24gb of vram. couldn't even run a long night coding session with the keys lit. just a black deck in the dark.

about 15 hours of focused work later it's all alive. and the anime vision matrix on the lid is so cool with colors breathing, rainbow cycle, anime vision streaming animations at ~15 fps.

the actual lock was an init handshake the windows driver stack quietly does for you and nobody told me about. the embedded controller wants the ascii string "ASUS Tech. Inc." sent as feature reports to three report ids before it will accept any color command. without it the device silently discards every packet you send, no error, nothing. my wireshark captures from armoury crate missed this because the captures started after the handshake had already happened. three hours of byte for byte replay before i realized my capture was midstory.
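
A minimal sketch of what that handshake could look like in code, assuming a HID device object that exposes `send_feature_report(bytes)` (the shape used by the Python hidapi bindings). Only the ASCII string comes from the post. The three report IDs and the 64-byte report length below are placeholders and must be replaced with values from a capture that includes the start of the session. A fake device stands in for real hardware so the sketch runs anywhere.

```python
HANDSHAKE = b"ASUS Tech. Inc."   # ASCII string named in the post

# Placeholders, NOT the real values: the post does not list the report ids
# or the report size.
PLACEHOLDER_REPORT_IDS = (0x01, 0x02, 0x03)
PLACEHOLDER_REPORT_LEN = 64


def build_handshake_reports(report_ids=PLACEHOLDER_REPORT_IDS,
                            report_len=PLACEHOLDER_REPORT_LEN):
    """Build one zero-padded feature report per report id.

    Layout assumed here: [report id][handshake string][zero padding].
    """
    reports = []
    for rid in report_ids:
        payload = bytes([rid]) + HANDSHAKE
        reports.append(payload.ljust(report_len, b"\x00"))
    return reports


def send_handshake(dev, reports):
    """Send every handshake report before any color command is issued."""
    for report in reports:
        dev.send_feature_report(report)


class FakeDevice:
    """Stand-in for a real HID handle; records what would be sent."""

    def __init__(self):
        self.sent = []

    def send_feature_report(self, data):
        self.sent.append(bytes(data))
        return len(data)


dev = FakeDevice()
send_handshake(dev, build_handshake_reports())
print(f"sent {len(dev.sent)} feature reports of {len(dev.sent[0])} bytes each")
```

The point of the ordering is the one made above: color packets sent before these reports are dropped without any error, so the handshake has to run first on every fresh open of the device.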

kernel 6.17 tested, hid-asus kernel driver stays loaded the whole time, keyboard input survives, zero module unbinding. per-key rgb and persistence across reboots are the next milestones.

the rest is just engineering now. if you run linux on a rog laptop drop your model below, you're about to get your machine back.

Sudo su@sudoingX

first week on the rog scar 18 5090 on linux and the rgb doesn't work, backlight doesn't work and fan profiles need asusctl because asus ships exactly zero linux support for a machine with 24gb of vram. opening prs and documenting fixes as i go. if you run linux on a rog laptop drop your setup and biggest pain below.
