Bradley C Hughes

@AngelsInTheAI

Creator/builder @NeuralCommander. I stand for fair markets, permaculture, local democracy, the beautiful economy, social safety nets, power of prayer. ♒️🚴🏖️⛵️

Joe Kent on why we actually went to war with Iran.

The gates are open. 🏛️ Phase 1A Dark Marble is now live. Claim your place in history. Become a founding citizen of the Roman civilization. 600 only. Your legacy carved in digital marble. AD 248 awaits. mint.daqinsaga.com daqinsaga.com

🤯 I just ended up reading this RESEARCH PAPER. THIS MADE ME UNCOMFORTABLE. The KIMI TEAM (affiliated with Moonshot AI) just discovered that every major AI model has been silently forgetting its own thoughts. And they proved that a 10-year-old design flaw has been crippling every LLM ever built. Here is what they found. 36 researchers at Moonshot AI investigated how information flows through the layers of large language models. Every modern AI (ChatGPT, Claude, Gemini) uses something called residual connections. These are the internal wiring that carries information from one layer to the next. The problem: this wiring treats every layer equally. It blindly stacks every piece of information on top of every other piece with the same fixed weight. As the model gets deeper, earlier insights get buried under noise. By the time the AI reaches its final layers, the critical early thinking that shaped its understanding is effectively gone. The researchers found that so much early information gets lost that significant chunks of a model's earliest layers can be completely removed from a trained AI with barely any impact. Those layers did real work during training. The model just can't access it anymore. It gets worse. This isn't a minor inefficiency. It's been hiding inside every transformer-based AI for a decade. The fundamental design of how AI carries information through its own layers hasn't changed since 2015. So the Kimi team built the fix. They replaced the rigid, fixed wiring with something that lets each layer dynamically choose which earlier thoughts to pay attention to. Instead of blindly stacking everything, the AI now queries its own past layers and selectively retrieves only what matters. They called it Attention Residuals. And the results are not subtle. They integrated it into a 48 billion parameter model and trained it on 1.4 trillion tokens. It improved on every single benchmark tested. Reasoning jumped 7.5 points. Math improved by 3.6 points. Coding ability gained 3.1 points. Not on cherry-picked tasks. On every evaluation they ran. Here's the trap nobody saw coming. When they gave the AI this ability to selectively retrieve its own past thoughts, the optimal shape of an AI model changed entirely. Standard models work best when they're wide and shallow. With this fix, the ideal architecture shifted to deep and narrow. The AI's future isn't bigger brains. It's deeper ones. The overhead? Less than 2% at inference. Less than 4% during training. A decade-old bottleneck fixed with negligible cost. Every AI you use today (every chatbot, every coding assistant, every reasoning model) is running on wiring that forces it to forget what it learned three layers ago. The fix exists. It works on every benchmark. It costs almost nothing. And not a single major AI company has shipped it yet. Why do you think?
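
To make the contrast in the post above concrete, here is a toy sketch of the two ideas it describes: a fixed residual stream that sums every earlier layer output with weight 1, versus a layer that scores earlier outputs against a query and mixes them with softmax weights. This is an illustration under assumptions, not the Kimi team's implementation: the function names, the dot-product scoring, and the example vectors are all hypothetical, and the post does not specify how Attention Residuals are actually computed.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def fixed_residual(outputs):
    """Standard residual stream: every earlier output is added with weight 1."""
    dim = len(outputs[0])
    return [sum(o[i] for o in outputs) for i in range(dim)]


def attention_residual(outputs, query):
    """Hypothetical variant: weight earlier outputs by softmax(query . output).

    Assumption for illustration only; the real method may score and mix
    layer outputs differently.
    """
    weights = softmax([dot(query, o) for o in outputs])
    dim = len(outputs[0])
    mixed = [sum(w * o[i] for w, o in zip(weights, outputs)) for i in range(dim)]
    return mixed, weights


# Three made-up "layer outputs" and a made-up query for the current layer.
outputs = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query = [4.0, 0.0]

fixed = fixed_residual(outputs)
mixed, weights = attention_residual(outputs, query)
print("fixed sum:", fixed)
print("attention weights:", [round(w, 3) for w in weights])
print("attention mix:", [round(v, 3) for v in mixed])
```

In the fixed version every layer contributes equally no matter what the current layer needs; in the sketched variant the first output dominates because it aligns best with the query.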

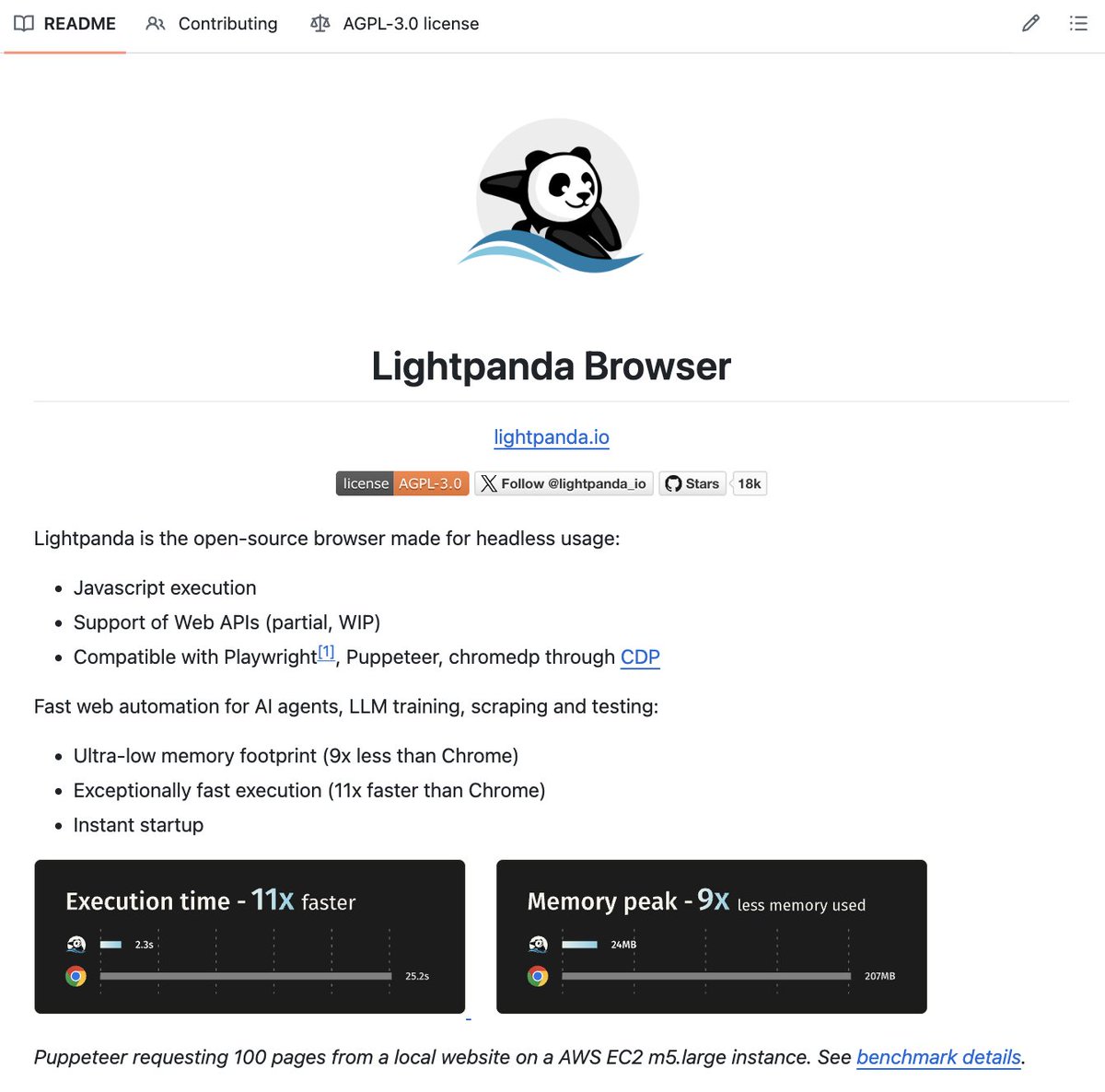

🚨 Holy shit... A developer on GitHub just built a full development methodology for AI coding agents and it has 40.9K stars on GitHub. It's called Superpowers, and it completely changes how your AI agent writes code. Right now, most people fire up Claude Code or Codex and just… let it go. The agent guesses what you want, writes code before understanding the problem, skips tests, and produces spaghetti you have to babysit. Superpowers fixes all of that. Here's what happens when you install it: → Before writing a single line, the agent stops and brainstorms with you. It asks what you're actually trying to build, refines the spec through questions, and shows it to you in chunks short enough to read. → Once you approve the design, it creates an implementation plan so detailed that "an enthusiastic junior engineer with poor taste and no judgement" could follow it. → Then it launches subagent-driven development. Fresh subagents per task. Two-stage code review after each one (spec compliance, then code quality). The agent can run autonomously for hours without deviating from your plan. → It enforces true test-driven development. Write failing test → watch it fail → write minimal code → watch it pass → commit. It literally deletes code written before tests. → When tasks are done, it verifies everything, presents options (merge, PR, keep, discard), and cleans up. The philosophy is brutal: systematic over ad-hoc. Evidence over claims. Complexity reduction. Verify before declaring success. Works with Claude Code (plugin install), Codex, and OpenCode. This isn't a prompt template. It's an entire operating system for how AI agents should build software. 100% open source. MIT License.
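
The "write failing test → write minimal code → watch it pass" loop mentioned in the post above can be sketched in a few lines. This is a generic illustration of test-driven development with Python's standard `unittest` module, not anything taken from Superpowers itself; `slugify`, `SlugifyTest`, and `run_suite` are hypothetical names chosen for the example.

```python
import unittest


# Step 1 (red): the test is written first and describes the behaviour we want.
# If slugify did not exist yet, running this test would fail with a NameError.
class SlugifyTest(unittest.TestCase):
    def test_spaces_become_hyphens(self):
        self.assertEqual(slugify("Hello World"), "hello-world")


# Step 2 (green): the smallest implementation that makes the test pass.
def slugify(title):
    return title.strip().lower().replace(" ", "-")


def run_suite():
    """Run the test case and return the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(SlugifyTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    result = run_suite()
    # Step 3: only commit once the suite is green.
    print("ready to commit" if result.wasSuccessful() else "keep working")
```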

“Iran posed no imminent threat to our nation, and it's clear that we started this war due to pressure from Israel.” US National Counterterrorism Centre director, Joe Kent, resigns over war on Iran. 🔴 LIVE updates: aje.news/aauj21

From Haaretz in Israel. A description of an IDF commander murdering a 4-year-old. For fun. It's beyond demonic. haaretz.com/opinion/2024-1…