Inseq

@InseqLib

Open-Source Interpretability for Generative Language Models 🔎 🐛

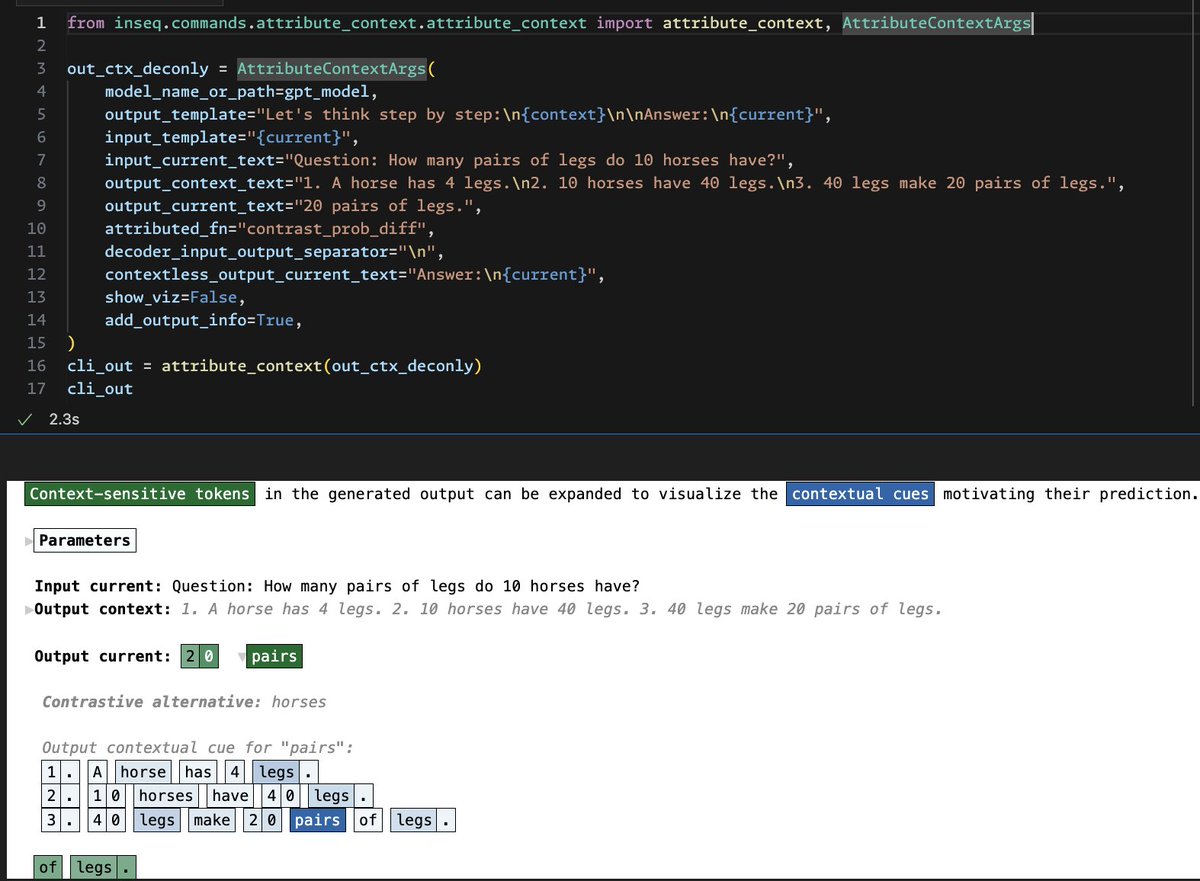

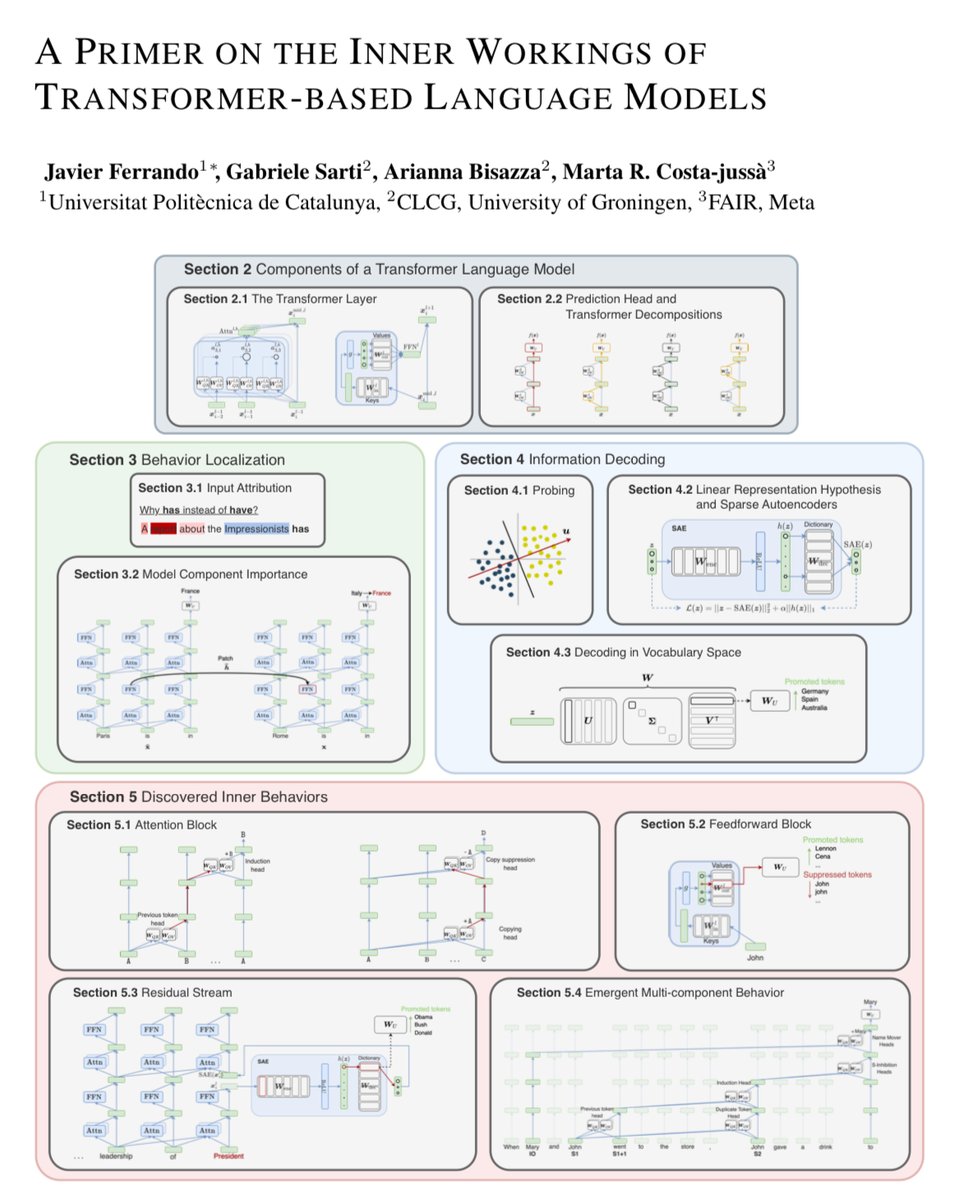

[1/8] Struggling to verify the trustworthiness of RAG outputs? Check out our latest work, where we use *model internals* as a powerful and faithful tool for attributing answers to retrieved docs! (w/ @gsarti_ @AriannaBisazza @raquel_dmg) 📄: arxiv.org/abs/2406.13663 #NLProc

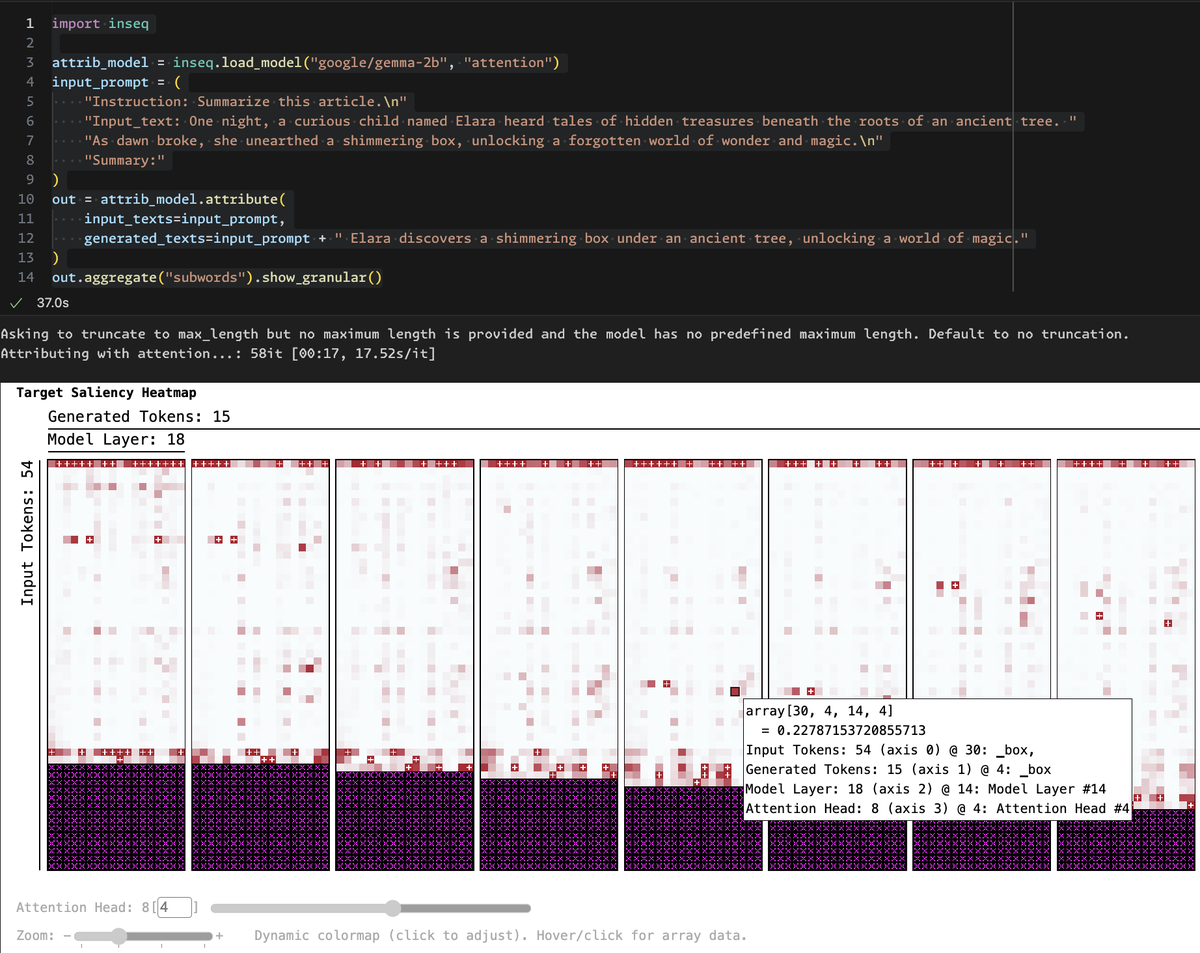

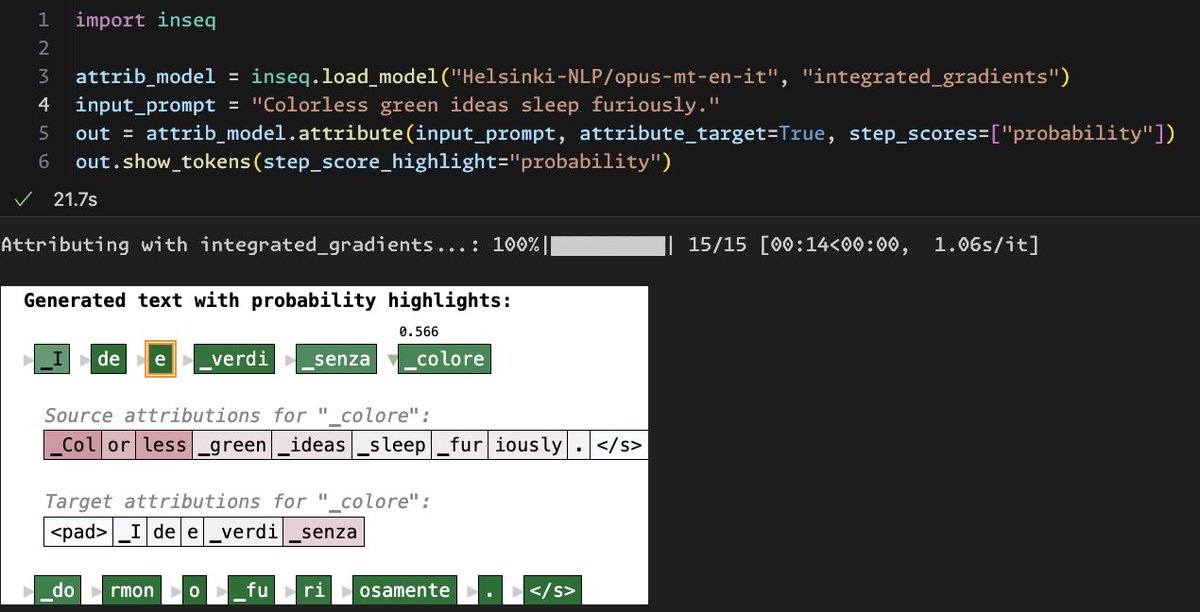

Thanks to the new treescope integration, @InseqLib now supports interactive visualizations for multidimensional attributions (`show_granular`), token highlights (`show_tokens`), and an improved viz for the `attribute_context` CLI! 🚀 Install from main; it will appear in v0.7 x.com/_ddjohnson/sta…

By popular demand, the Treescope pretty-printer from the Penzai neural net library can now be installed separately, and supports both JAX and PyTorch! And that's not all: Penzai itself now has less boilerplate and includes more pretrained Transformer models!

⚠️ Citations from prompting or NLI seem plausible, but may not faithfully reflect LLM reasoning. 🏝️ MIRAGE detects context dependence in generations via model internals, producing granular and faithful RAG citations. 🚀 Demo: huggingface.co/spaces/gsarti/… Fun collab w/ @Jirui_Qi, @AriannaBisazza & @raquel_dmg! Check it out ⬇️

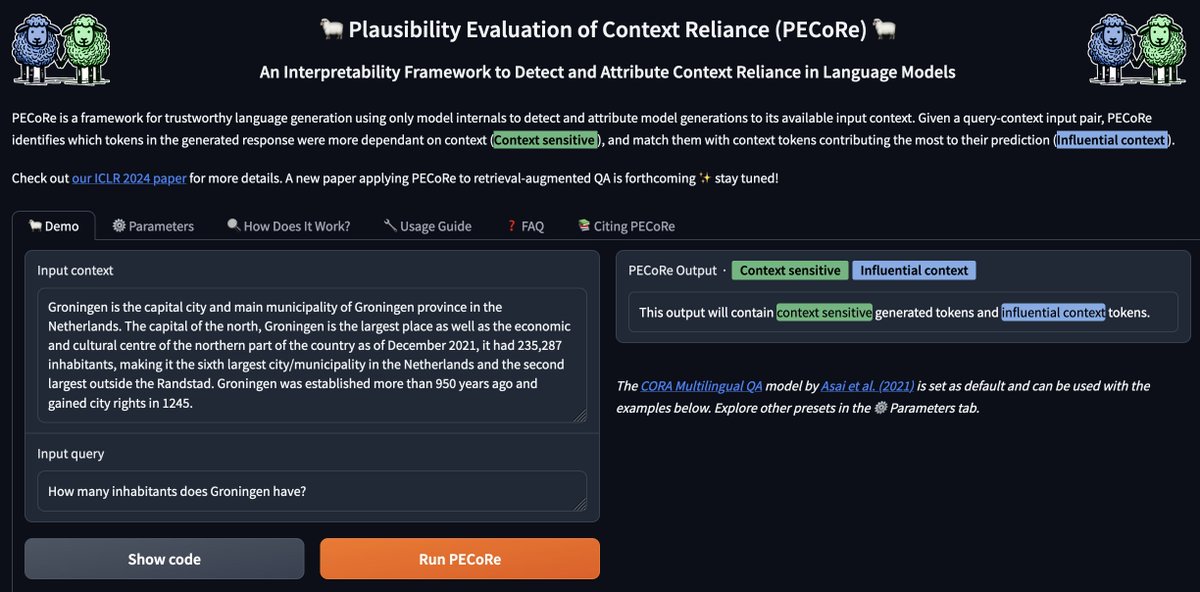

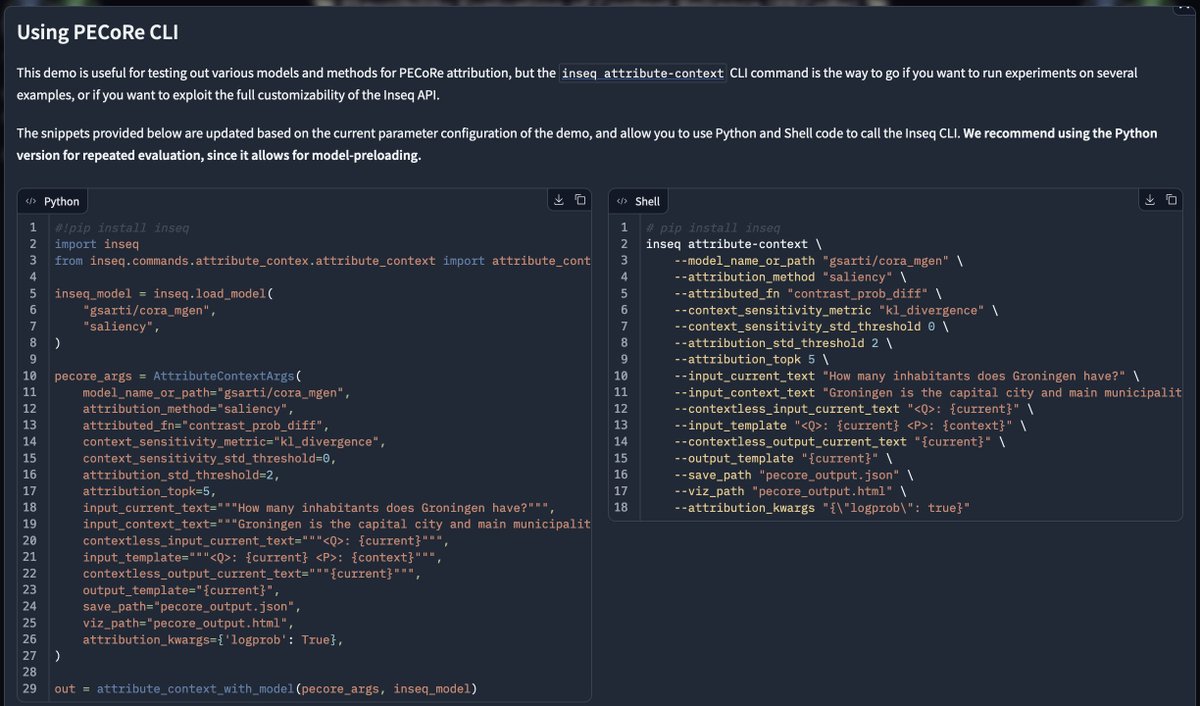

The official 🐑 PECoRe 🐑 demo to detect and attribute context dependence in LM generations is now available on @huggingface Spaces! 🚀 Includes code examples, a usage guide, useful presets for various dec-only & enc-dec models, and more! Check it out ⬇️ huggingface.co/spaces/gsarti/…

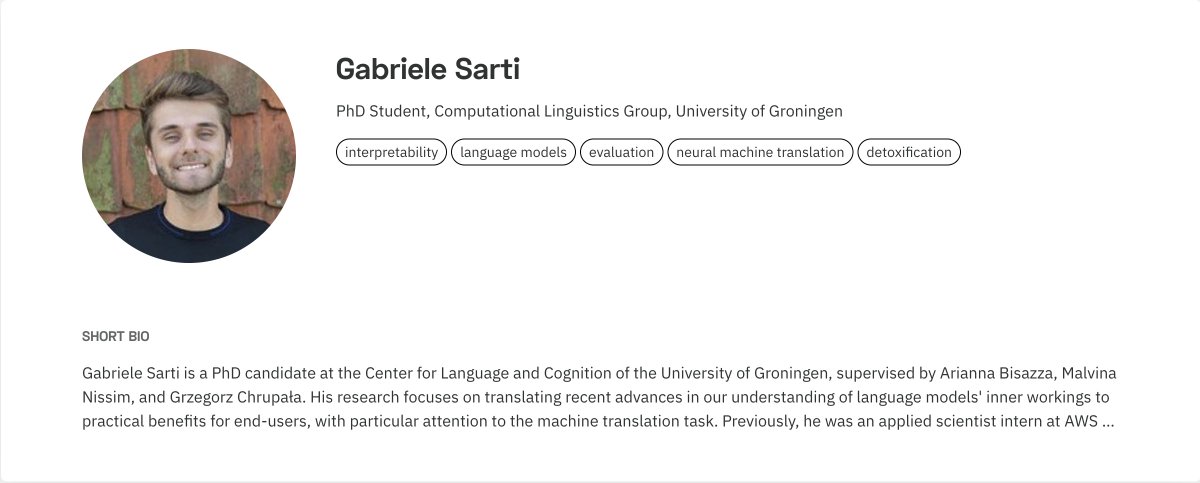

[1/8] Our new work (w/ @AriannaBisazza @gchrupala @MalvinaNissim) is finally out! 🎉 We introduce PECoRe, an interpretability framework using model internals to identify & attribute context dependence in language models. 📄Paper: arxiv.org/abs/2310.01188 #NLProc #neuralempty

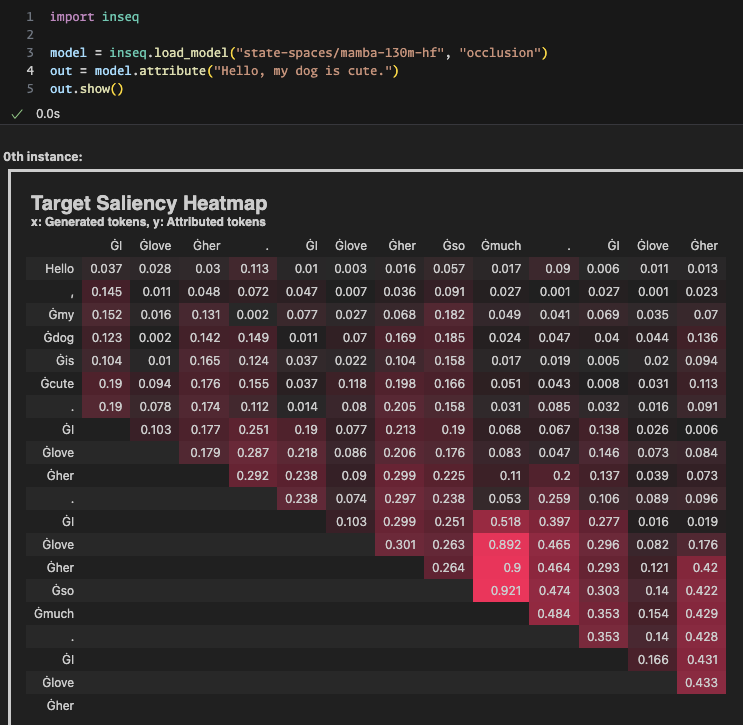

`mamba` is now available in transformers. PEFT finetuning example: gist.github.com/ArthurZucker/7… Thanks @_albertgu and @tri_dao for this brilliant model 🚀 and for the amazing `mamba-ssm` kernels powering it!

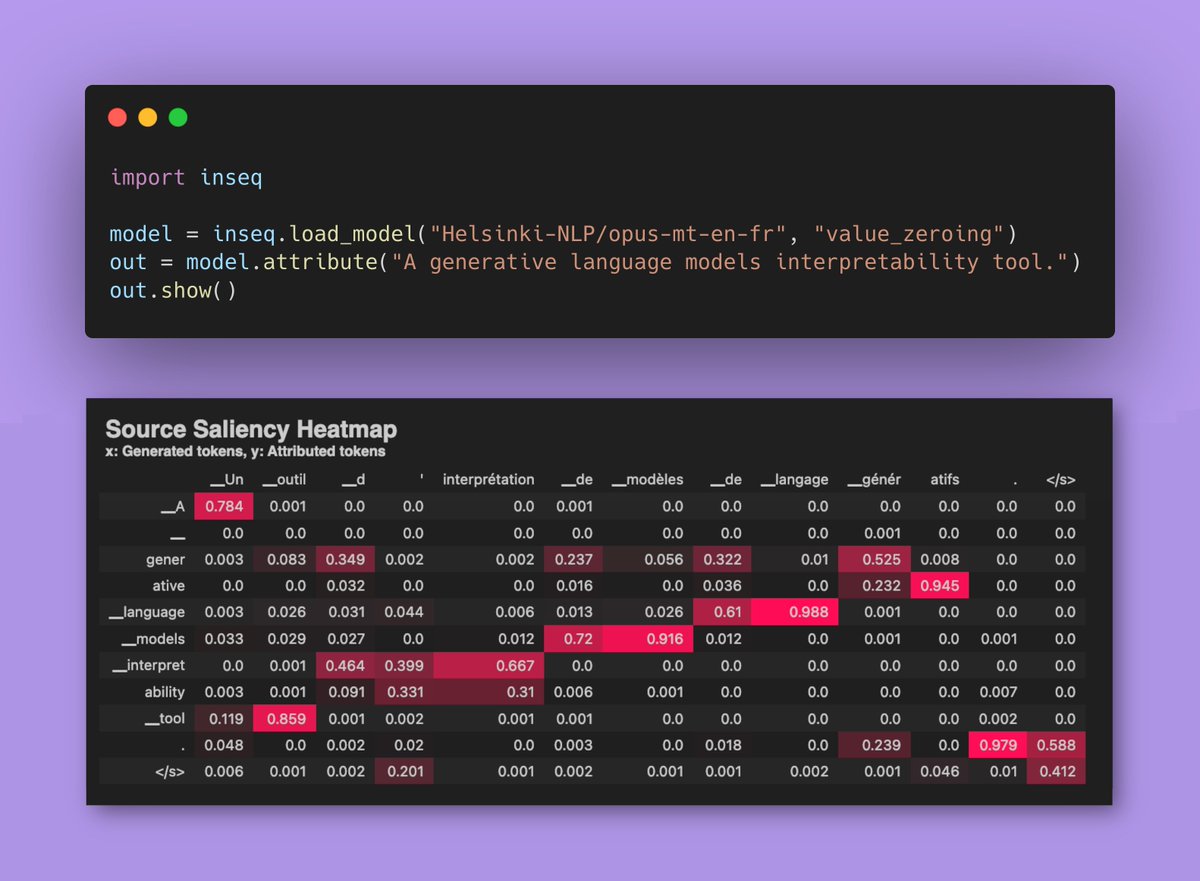

Value Zeroing, a faithful approach for analyzing context mixing in Transformers, is now available on the @InseqLib main branch for all @huggingface text generation models! 🔀 🔍Paper introducing VZ: aclanthology.org/2023.eacl-main… 🐛VZ in Inseq: tinyurl.com/inseq-vz