Charlie Marsh

7.9K posts

@charliermarsh

Building @astral_sh: Ruff, uv, and other high-performance Python tools. Prev: Staff engineer @SpringDiscovery, @KhanAcademy, BSE @PrincetonCS.

Brooklyn, NY. Joined March 2009

883 Following, 35.7K Followers

Pinned Tweet

Varun Gupta going to be the youngest entrant to the Midas list in a generation if him and Raymond decide to share the Caffeinated books. HUP @Varungupta

@jarredsumner Thank you so much! And for all your help and support over the past few years 💪

Charlie Marsh retweeted

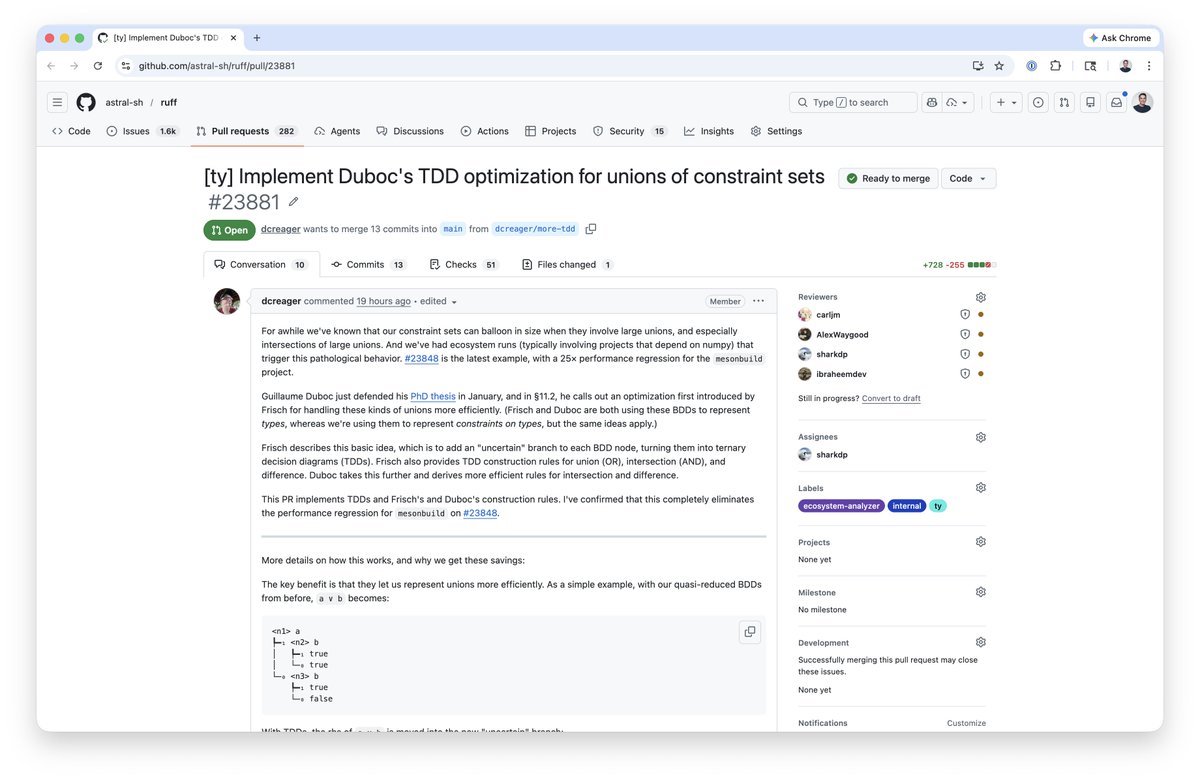

It is very cool to see the work we have done on the Elixir type system is already benefiting other communities, such as Python's ruff/ty: github.com/astral-sh/ruff…

Charlie Marsh retweeted

Finally, Astral Codex

OpenAI Newsroom @OpenAINewsroom

We've reached an agreement to acquire Astral. After we close, OpenAI plans for @astral_sh to join our Codex team, with a continued focus on building great tools and advancing the shared mission of making developers more productive. openai.com/index/openai-t…

Charlie Marsh retweeted

Check out how @astral_sh made CI runs up to 4.7x faster with Depot.

depot.dev/customers/astr…

@charliermarsh

@TheAhmadOsman @aloktiwari310 Can you explain what you mean here? Where does uv take performance shortcuts?

@aloktiwari310 Great for Python in general but still not as optimal in terms of architecture optimization for CUDA. When you aim for UX sometimes you take performance shortcuts.

I use UV exclusively for any non-LLM related projects because the trade-offs are negligible but not here.

PRO TIP

>Build your inference engine from source

>Learn how to optimize the build for your architecture and hardware

>Bonus: Codex/Claude Code/Kimi Cli/Droid/OpenCode can help you with this

>Use PIP not UV (this one will get heat but I said what I said)

Performance will go up

Ahmad @TheAhmadOsman

I was assuming it’s common knowledge at this point not to use Windows for local LLMs. But just in case: DO NOT USE Windows for local LLMs

@charliermarsh @xeophon Does ty/ruff support this already??

@charliermarsh if the story is anything to go by, it’ll be a rough era followed by an incredible positive transformation, so the end result seems ok 👌

@jarredsumner I think startup has caused me to lose weight (in a bad way) lol
