EdinburghNLP

1.3K posts

@EdinburghNLP

The Natural Language Processing Group at the University of Edinburgh.

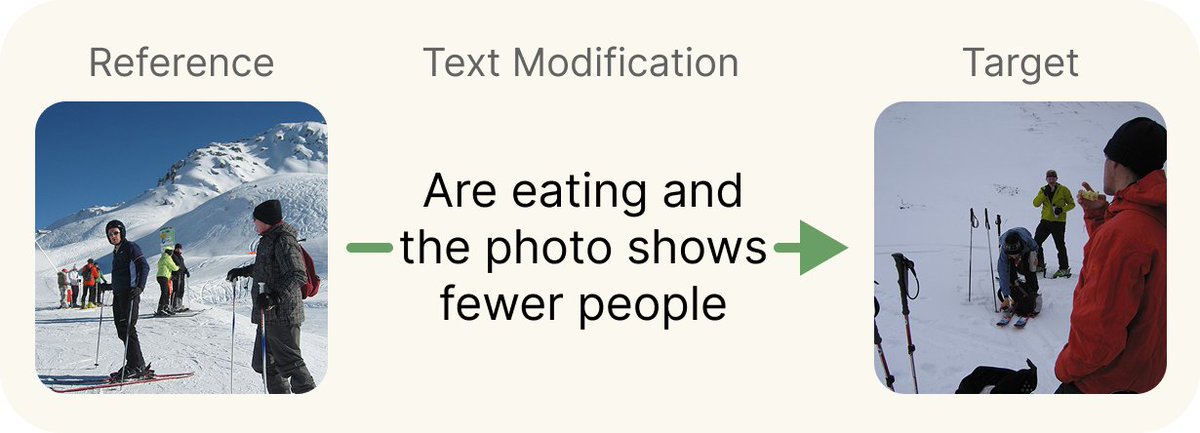

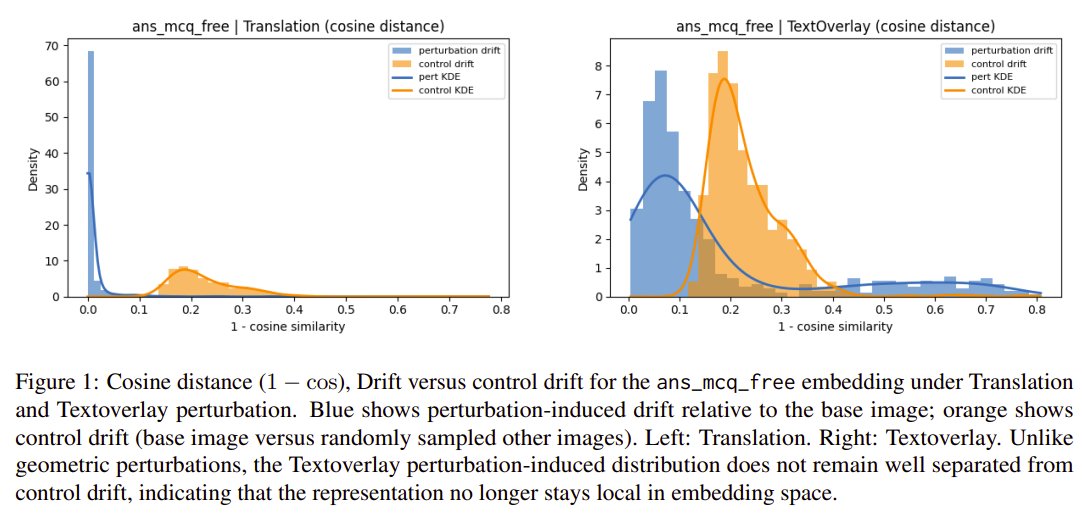

To address this, we introduce CIRCUS, a curated evaluation setup for testing genuine multimodal composition! 🌐 Website: matteoattimonelli.github.io/CIRCUS/ 📄 Paper: arxiv.org/pdf/2605.14787

🚀 The Cancellation Hypothesis in Critic-Free RL. Conventional view: GRPO boosts successful rollouts and suppresses failed ones. We find Token Flipping: positive and negative rollouts show remarkably similar boosted/suppressed token ratios.

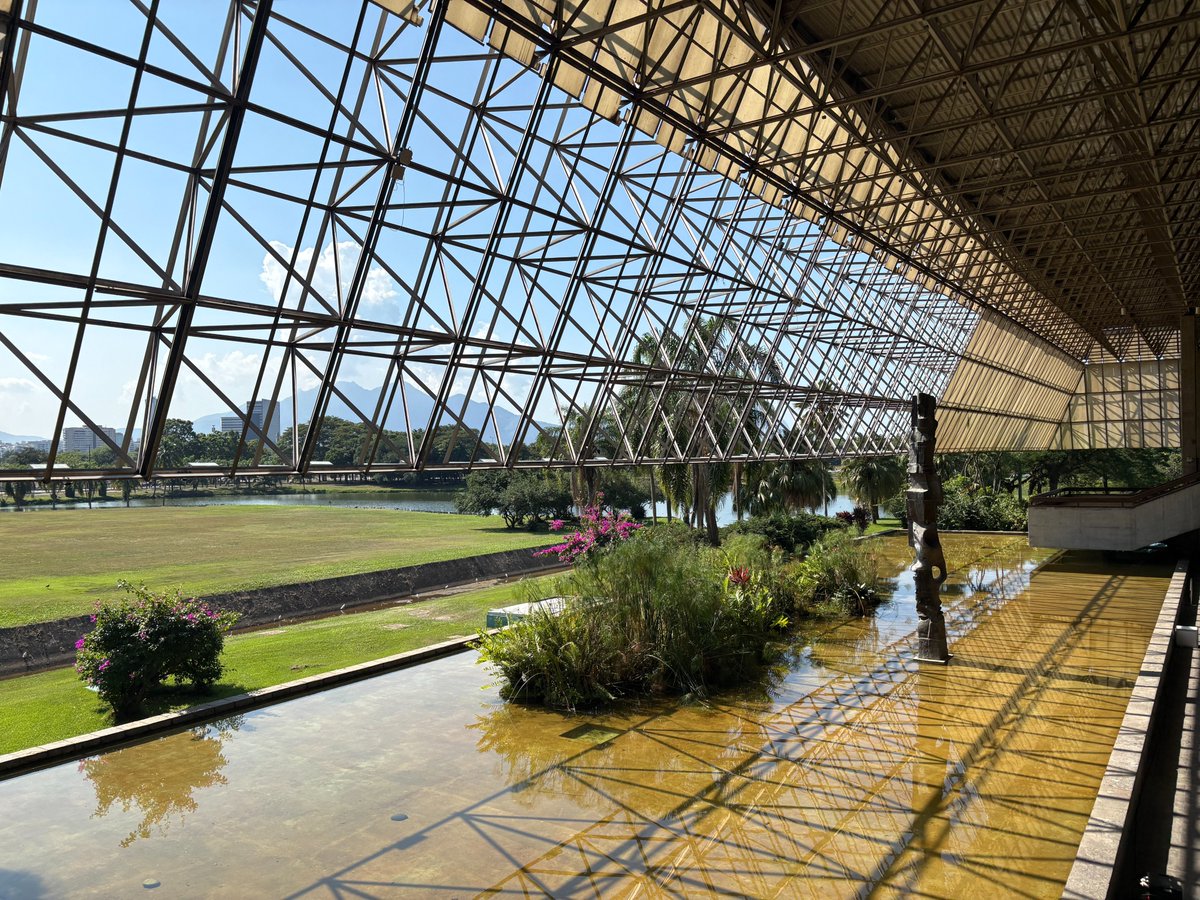

Heading to Rio for #ICLR2026! 🇧🇷 Presenting 2 papers: 1. 𝐈𝐧𝐯𝐞𝐫𝐬𝐞 𝐒𝐜𝐚𝐥𝐢𝐧𝐠 𝐢𝐧 𝐓𝐞𝐬𝐭-𝐓𝐢𝐦𝐞 𝐂𝐨𝐦𝐩𝐮𝐭𝐞 (@TmlrOrg Featured Certification) — Sat Apr 25, 10:30 AM, Pavilion 3 (#903) 2. 𝐓𝐡𝐞 𝐇𝐨𝐭 𝐌𝐞𝐬𝐬 𝐨𝐟 𝐀𝐈: 𝐇𝐨𝐰 𝐃𝐨𝐞𝐬 𝐌𝐢𝐬𝐚𝐥𝐢𝐠𝐧𝐦𝐞𝐧𝐭 𝐒𝐜𝐚𝐥𝐞 𝐖𝐢𝐭𝐡 𝐌𝐨𝐝𝐞𝐥 𝐈𝐧𝐭𝐞𝐥𝐥𝐢𝐠𝐞𝐧𝐜𝐞 𝐚𝐧𝐝 𝐓𝐚𝐬𝐤 𝐂𝐨𝐦𝐩𝐥𝐞𝐱𝐢𝐭𝐲? — Sat Apr 25, 3:15 PM, Pavilion 3 (#110) Come find me if you're into LLM evaluation, Chain-of-Thought, and AI safety! 👋

1/ We are excited to release AdaSplash-2 🚀 A big milestone from our lab on faster differentiable sparse attention. And honestly, one of my favorite examples of sparsity giving a real win-win: more efficiency + better downstream performance, especially for long-context tasks.

We are advertising a postdoc position to work on #generative #models, #structure #induction, and MI #estimation with Michael Gutmann as part of #GenAI hub! elxw.fa.em3.oraclecloud.com/hcmUI/Candidat… Get in touch! (#ML #AI) 👉 homepages.inf.ed.ac.uk/snaraya3/ 👉 michaelgutmann.github.io

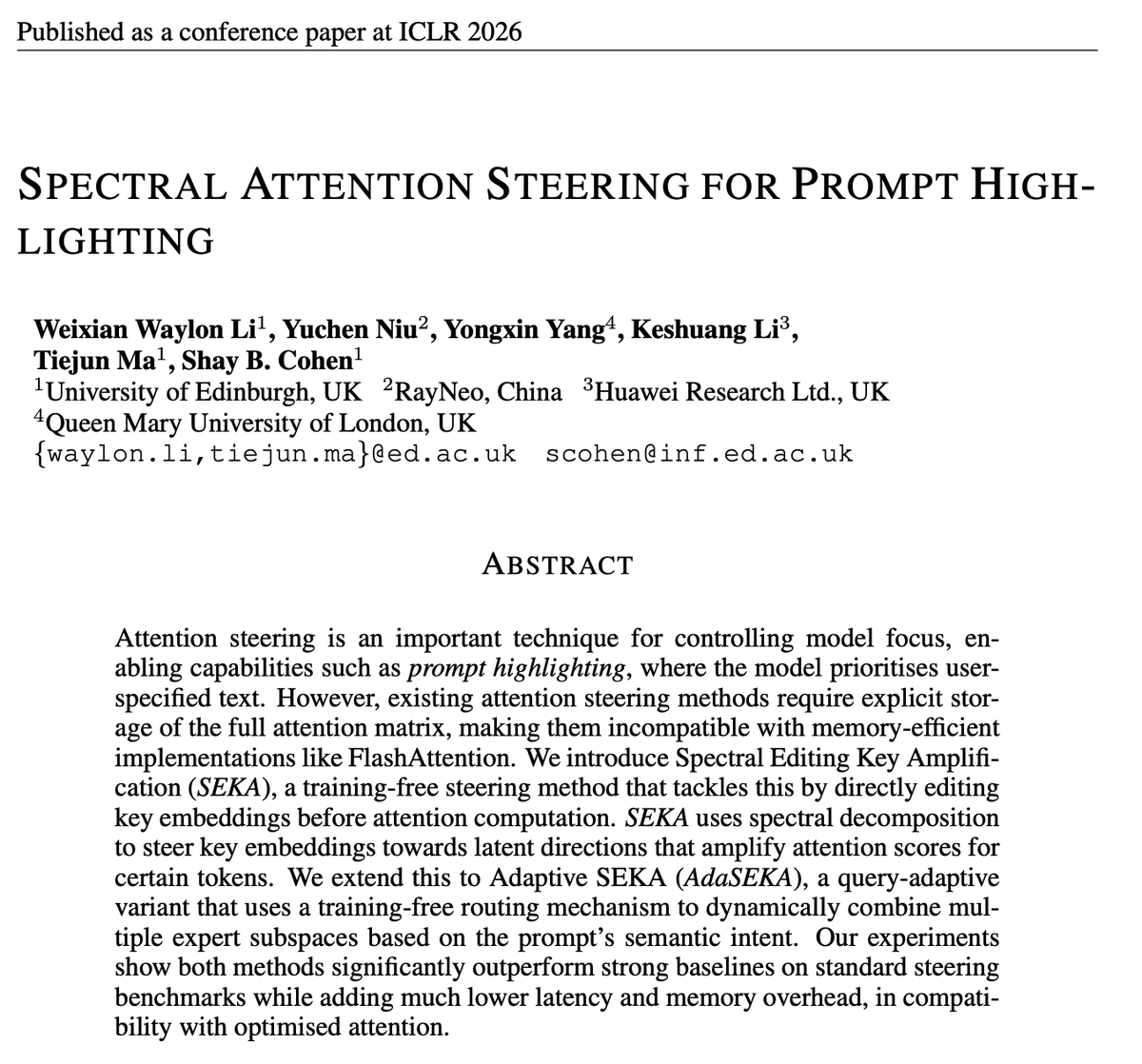

🚀 Excited to share our paper "Spectral Attention Steering for Prompt Highlighting" has been accepted to ICLR 2026 and the camera-ready version is finally live! We’ve found a way to steer LLM attention that is actually effective, fast and compatible with modern hardware.

Not only does the Qwen 3.5 9B beat the GPT OSS 20B, it BEATS the 120B. INCREDIBLE stuff

new blog! What methodologies do labs use to train frontier models? The blog distills 7 open-weight model reports from frontier labs, covering architecture, stability, optimizers, data curation, pre/mid/post-training + RL, and behaviors/safety djdumpling.github.io/2026/01/31/fro…

Thrilled to share our latest research on verifying CoT reasoning, completed during my recent internship at FAIR @metaai. In this work, we introduce Circuit-based Reasoning Verification (CRV), a new white-box method to analyse and verify how LLMs reason, step-by-step.