LTbuild

22 posts

Congrats to the @cursor_ai team on the launch of Composer 2!

We are proud to see Kimi-k2.5 provide the foundation. Seeing our model integrated effectively through Cursor's continued pretraining & high-compute RL training is the open model ecosystem we love to support.

Note: Cursor accesses Kimi-k2.5 via @FireworksAI_HQ's hosted RL and inference platform as part of an authorized commercial partnership.


LTbuild retweeted

Thank god MCP is dead

Just as useless of an idea as LLMs.txt was

It's all dumb abstractions that AI doesn't need, because AIs are as smart as humans, so they can just use what was already there: APIs

Morgan @morganlinton

The cofounder and CTO of Perplexity, @denisyarats just said internally at Perplexity they’re moving away from MCPs and instead using APIs and CLIs 👀


Anthropic found that vibecoding makes engineers worse at reading, writing, debugging, and understanding code, and AI-generated code doesn’t even make them significantly faster.

Vibecoders right now:

aaron @aarondotdev

Anthropic themselves found that vibecoding hinders SWEs' ability to read, write, debug, and understand code. not only that, but AI-generated code doesn’t result in a statistically significant increase in speed. don’t let your managers scare you into increased productivity. show them this paper straight from Anthropic.


LTbuild retweeted

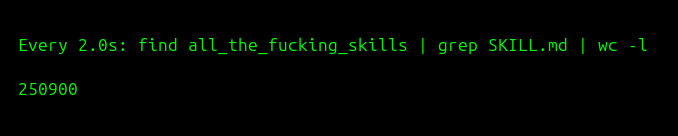

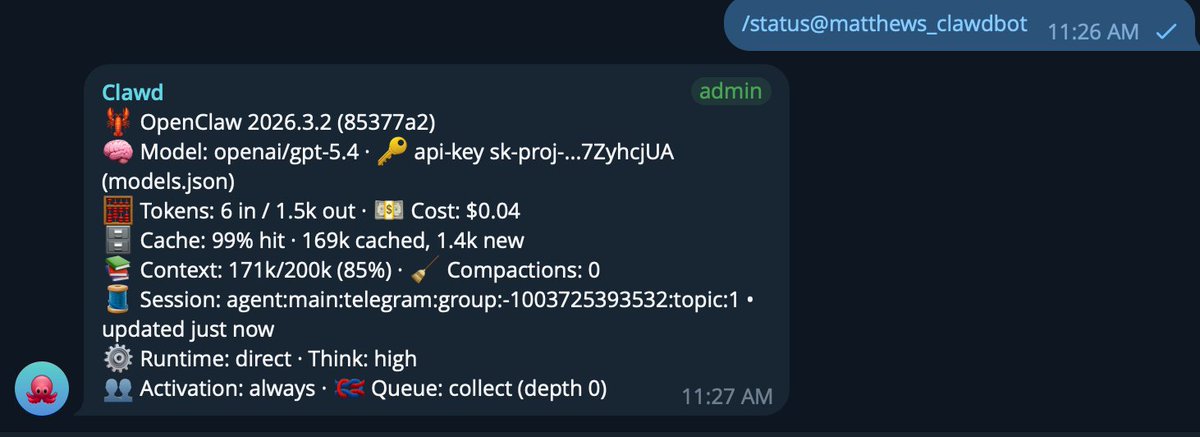

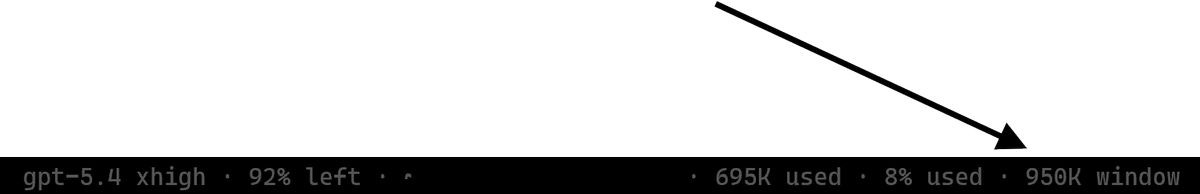

@gniting @MatthewBerman on codex yes, on openclaw, idk yet


model = "gpt-5.4"

model_reasoning_effort = "xhigh"

model_context_window = 1000000

model_auto_compact_token_limit = 900000

personality = "pragmatic"

plan_mode_reasoning_effort = "xhigh"

service_tier = "fast"


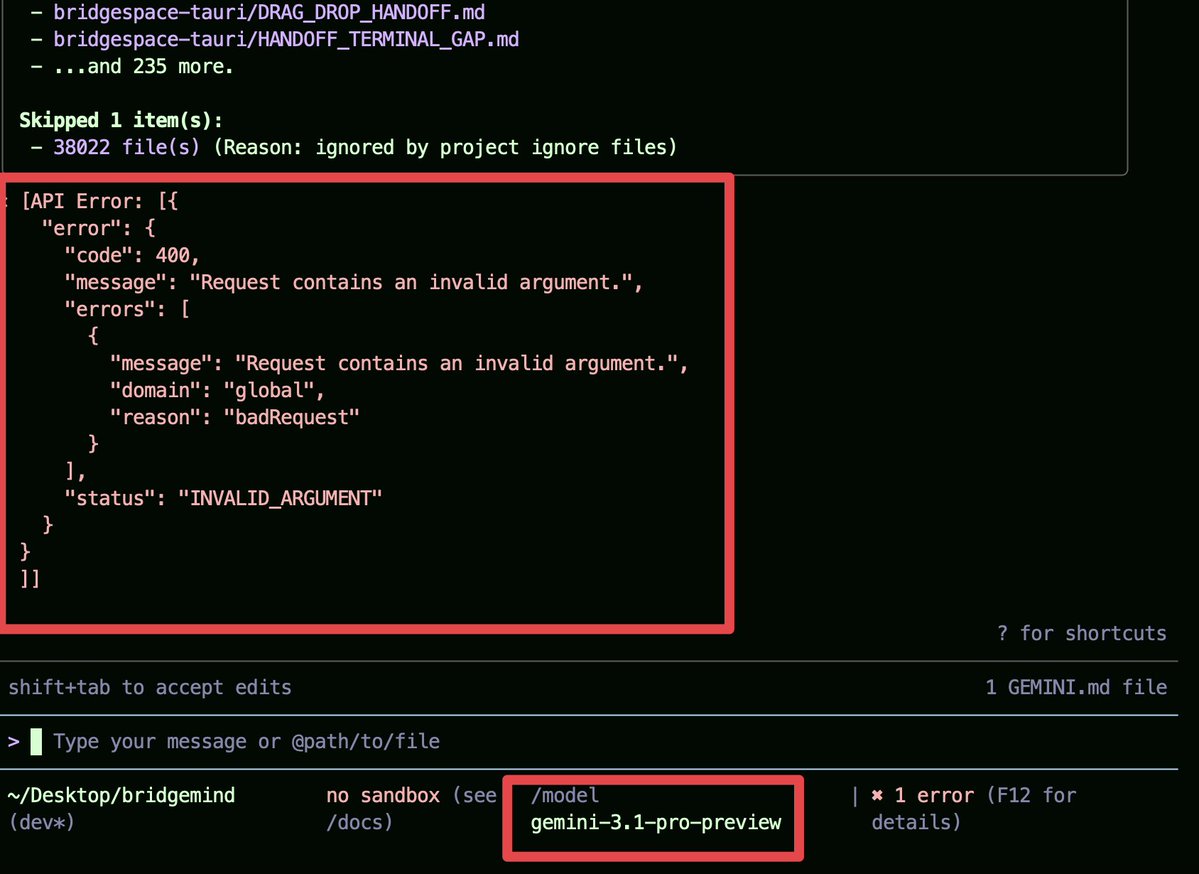

@bridgemindai istg it started happening right after they tried countering the "abuse" and basically locking it down so users couldn't access it anywhere outside their AG and CLI


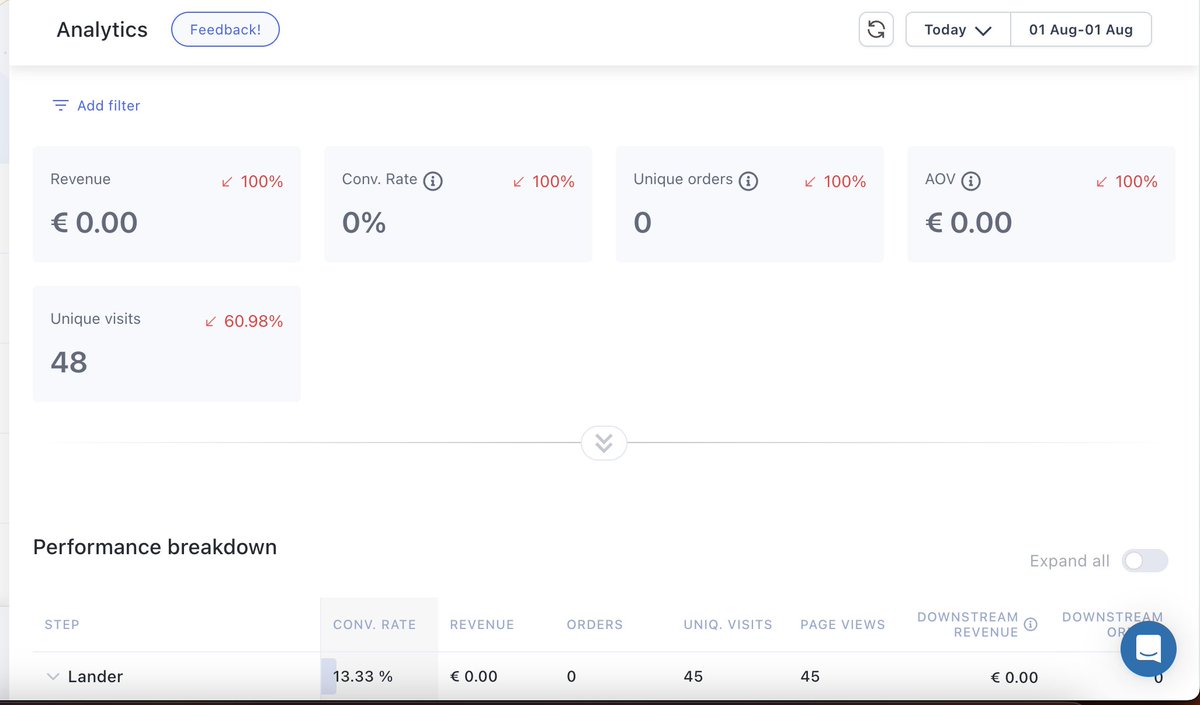

@shresthadipessh Meta does this, it likes to target random groups in the first 7-14 days
