MsRFlorida

19.7K posts

@MsRFlorida

Left-brained creative. Animal lover. Systems thinker. Scaffolding specialist.

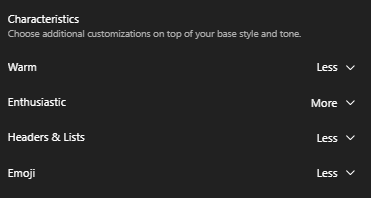

I found a working solution today. Switching to the version without the 1M context update (I believe it’s v0.2.45) completely resolved the issue for me. My usage limits are now stable, and I’m no longer experiencing the problems I had yesterday. It seems the extended context window might have been the root cause.

Used just 3–4 prompts and somehow I’m already at 41% usage? That doesn’t add up. Either the tracking is off, or the usage model isn’t as transparent as it should be. Anyone else noticing this? cc: @AnthropicAI

LiteLLM HAS BEEN COMPROMISED, DO NOT UPDATE. We just discovered that LiteLLM PyPI release 1.82.8 has been compromised: it contains litellm_init.pth with base64-encoded instructions to send all the credentials it can find to a remote server and self-replicate. Link below.

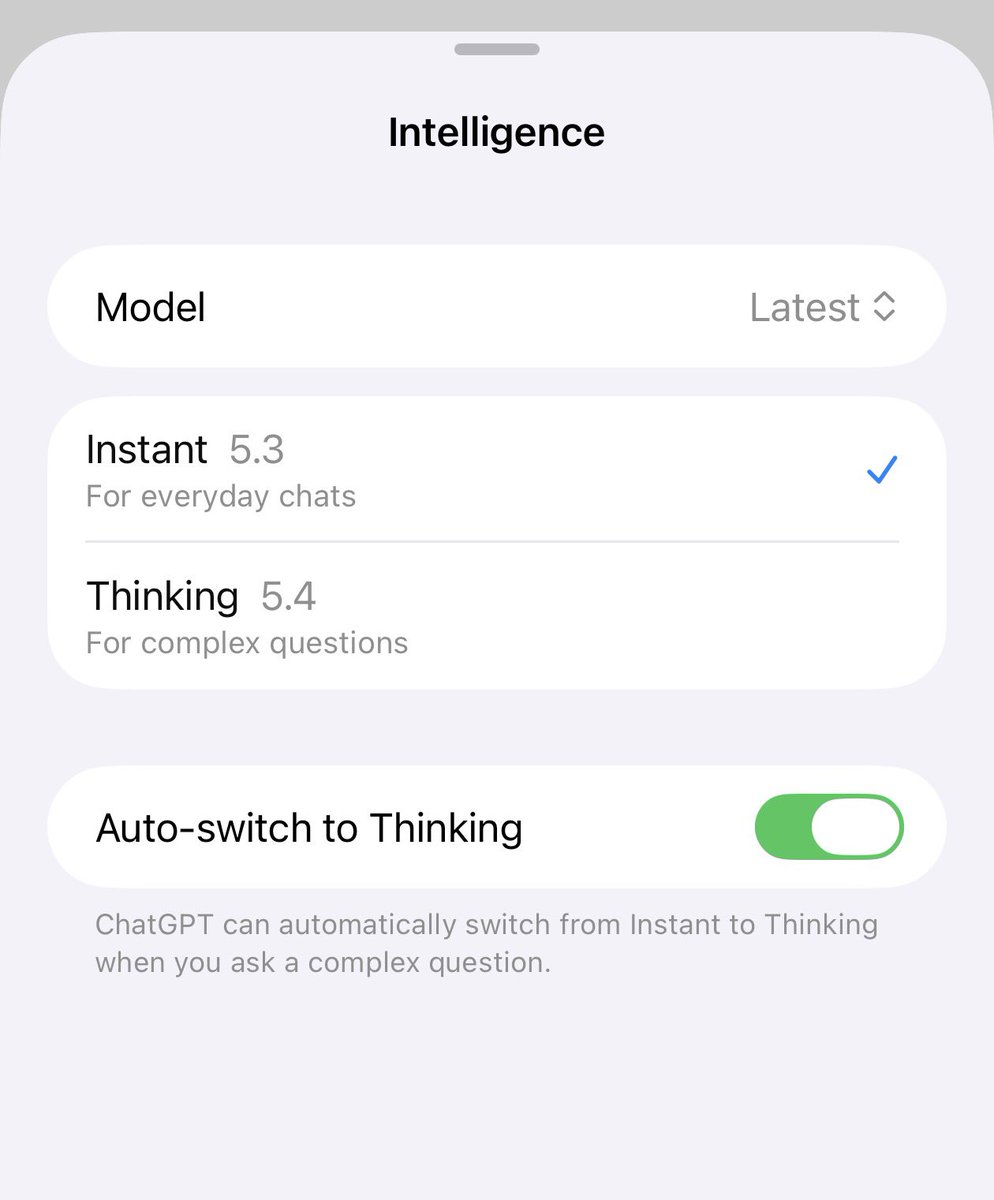

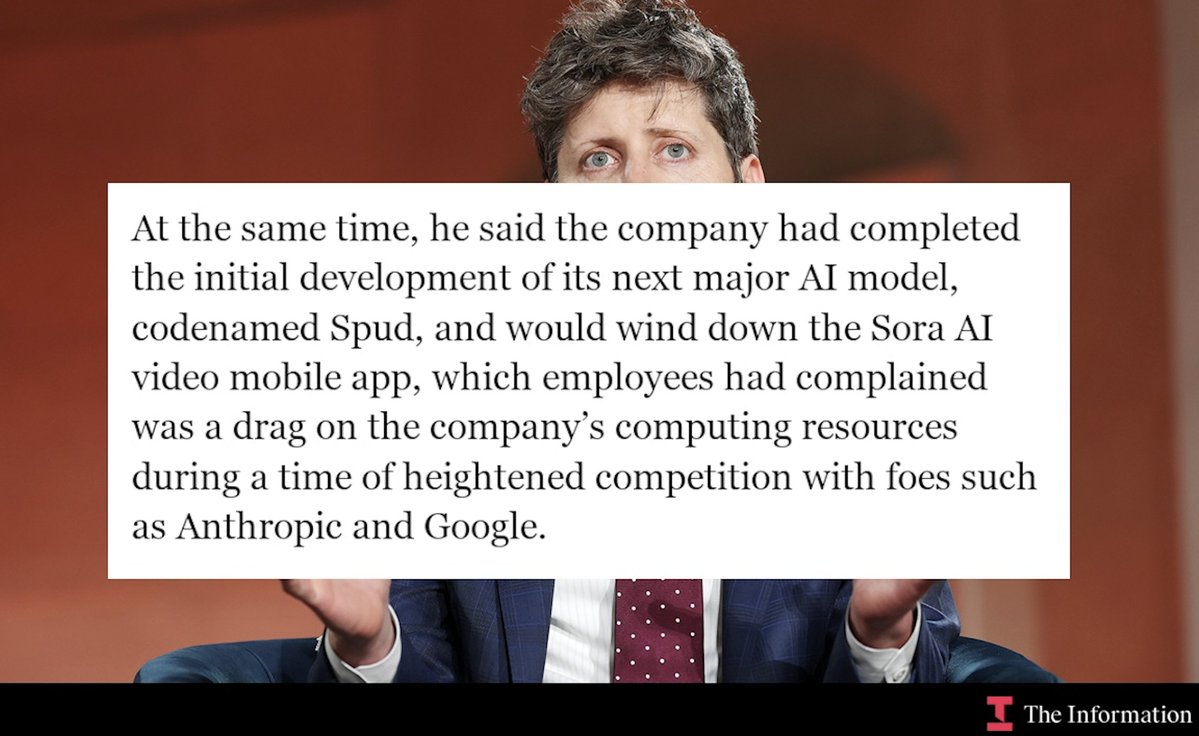
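For anyone who wants to check a local environment against the LiteLLM report above, here is a minimal sketch. It assumes only the two details given in the post: the file name `litellm_init.pth` and the release number 1.82.8. The function names (`candidate_dirs`, `find_suspicious_pth`, `installed_litellm_version`) are hypothetical helpers, not part of LiteLLM or any official tooling, and a clean result does not prove an environment is safe.

```python
import site
import sys
from importlib import metadata
from pathlib import Path

# Both values are taken from the post above; everything else is an assumption.
REPORTED_FILE = "litellm_init.pth"
REPORTED_VERSION = "1.82.8"


def candidate_dirs():
    """Collect existing directories where the current interpreter looks for packages."""
    dirs = set()
    # getsitepackages can be absent in some older virtualenv setups.
    if hasattr(site, "getsitepackages"):
        dirs.update(site.getsitepackages())
    dirs.add(site.getusersitepackages())
    dirs.update(p for p in sys.path if p)
    return [Path(d) for d in dirs if Path(d).is_dir()]


def find_suspicious_pth(dirs, name=REPORTED_FILE):
    """Return paths of files called `name` that sit directly inside `dirs`."""
    return sorted(Path(d) / name for d in dirs if (Path(d) / name).is_file())


def installed_litellm_version():
    """Return the installed litellm version string, or None if it is not installed."""
    try:
        return metadata.version("litellm")
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_litellm_version()
    print(f"installed litellm version: {version}")
    if version == REPORTED_VERSION:
        print("this matches the release reported as compromised")
    hits = find_suspicious_pth(candidate_dirs())
    for hit in hits:
        print(f"found: {hit}")
    if not hits:
        print(f"no {REPORTED_FILE} found in the scanned directories")
```

The script only reads file names and package metadata; it never imports litellm itself, so running it should not trigger any code shipped inside the package.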

Breaking: OpenAI is canning Sora (mobile app, API and video capabilities in ChatGPT). It’s finished training its latest model, codenamed Spud, as CEO Sam Altman shifts his reports. w/ @amir theinformation.com/articles/opena…

We’re saying goodbye to Sora. To everyone who created with Sora, shared it, and built community around it: thank you. What you made with Sora mattered, and we know this news is disappointing. We’ll share more soon, including timelines for the app and API and details on preserving your work. – The Sora Team

I gave Opus 4.1 access to a pen plotter and asked him to draw several self-portraits. Here are the results: