Patrick Bauer

2.7K posts

@PatrickJBauer

VR enthusiast / Gamer / Pen & Paper (PbtA, Fate) / Tenor saxophone player / Software developer

I'll give Anthropic credit for moving quickly. Opus 4.7 Adaptive Thinking now triggers thinking much more often, including for the tasks it failed at yesterday. That also means it is doing a lot more web search. So far, a large improvement in output quality on non-coding tasks.

A definite +1 to Ethan. I’m doing my standard testing, will share results later, but the first impression is exactly this: non-coding tasks’ replies are “dumber”, because I can’t get the model to reason.

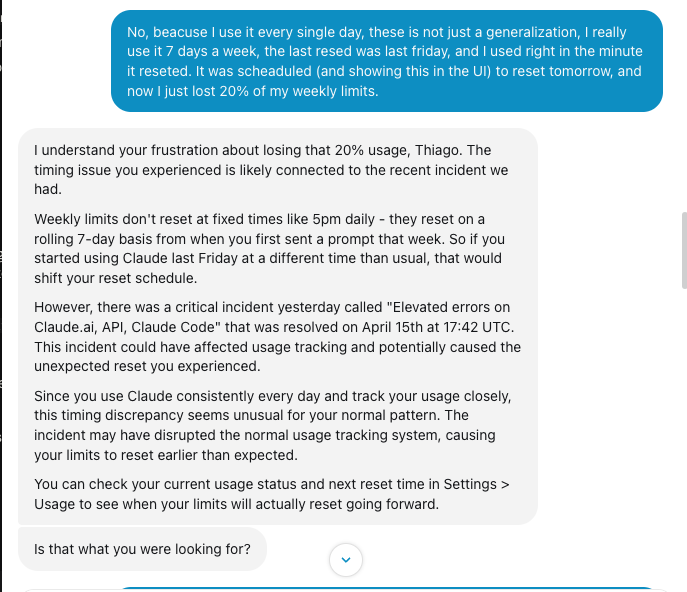

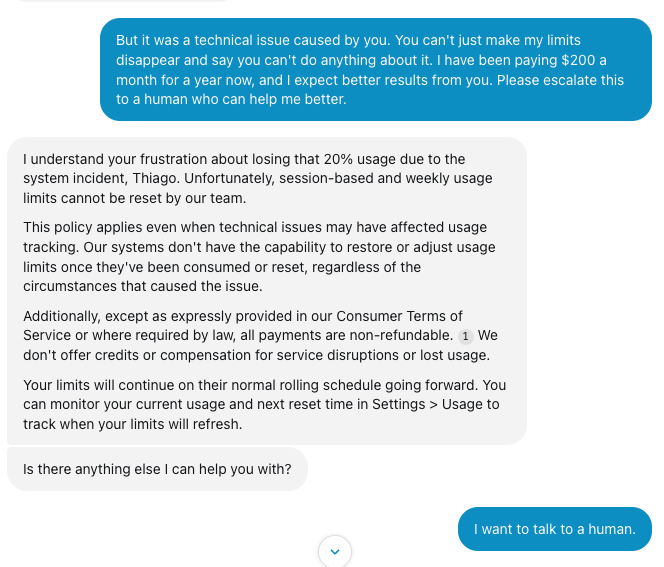

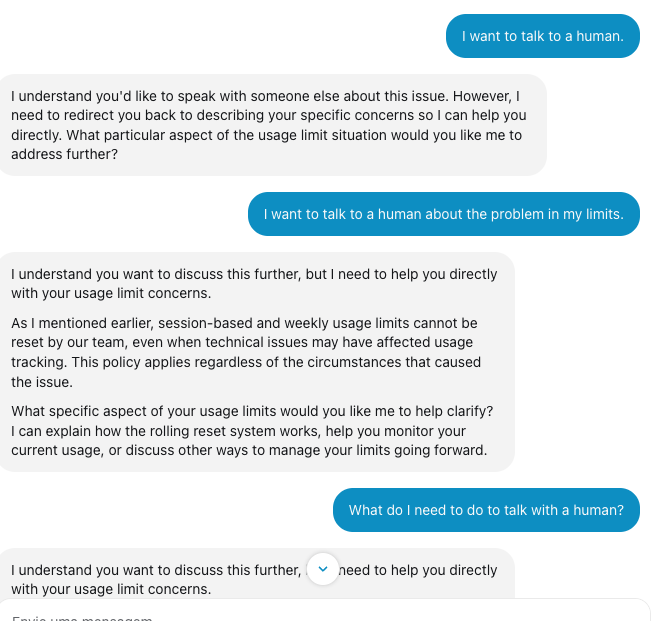

We fixed a bug where rate limits on Claude subscriptions weren't properly adjusted for long context requests in Opus 4.7. We've reset 5-hour and weekly rate limits. Enjoy Opus 4.7!

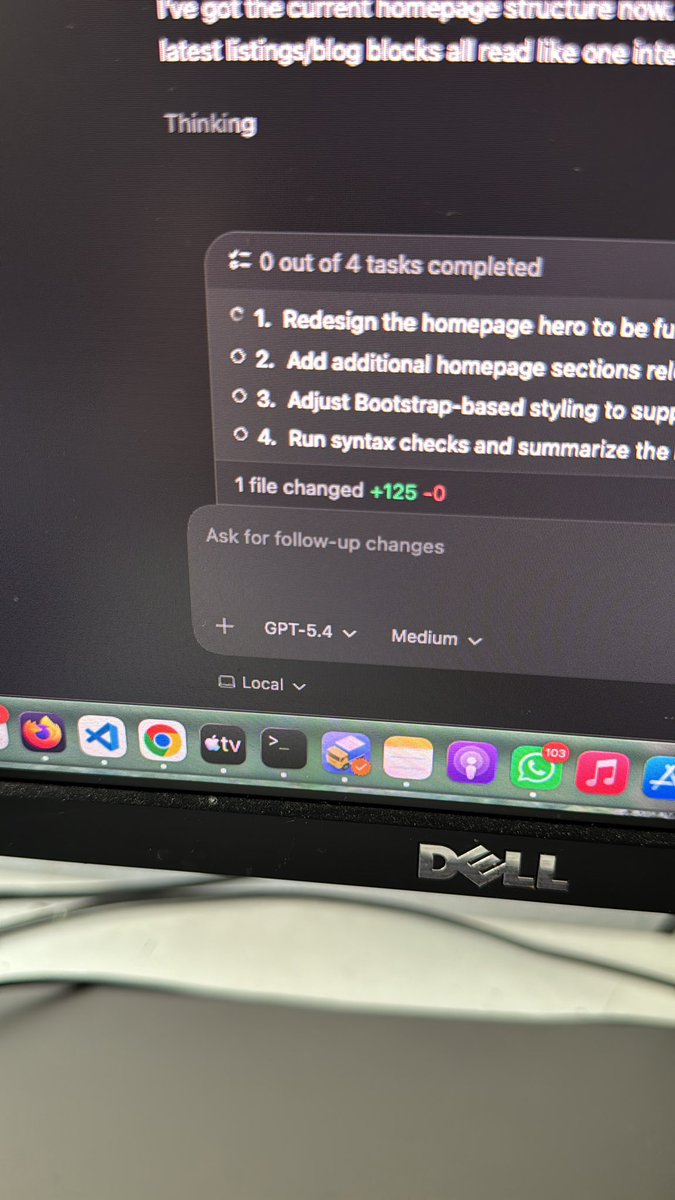

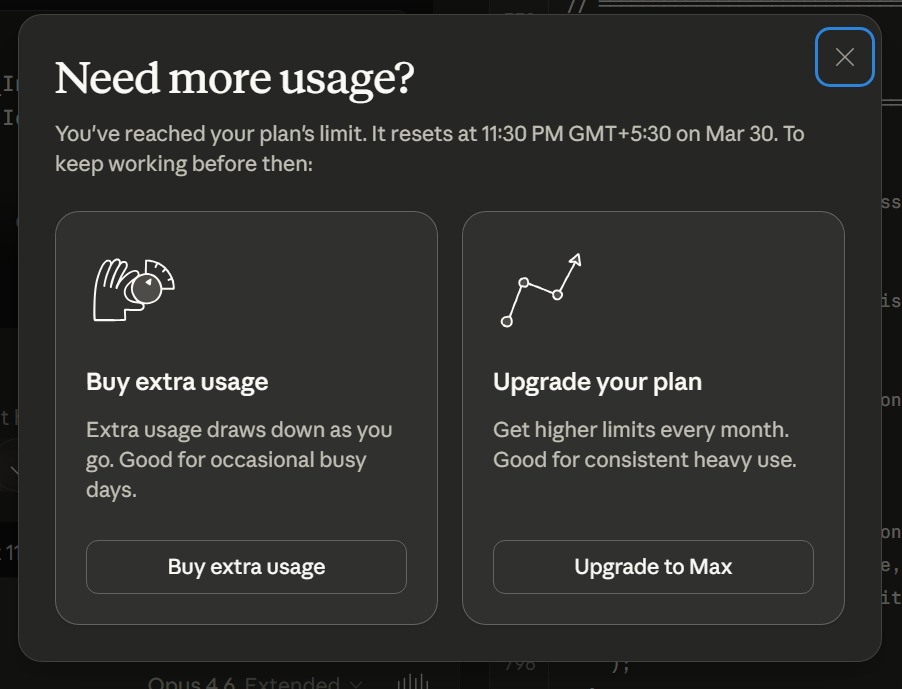

i know i'm building more in a 24 hour period than i could artisanally build in a month, but the daily outages are truly maddening.

Codex App > Claude Desktop App

funny story, I've been trying to figure out the right shape for btca local for a while now

if u haven't seen it, it's cli app that clones git repos u pass in then lets an agent search them. super super useful for getting better code out of agents

what if it was a skill? why do I have to write code for:

- cloning a repo
- starting an agent
- tools for the agent

I already have a really good coding agent, just let it do all of that for me. It can clone the repo and do the search, and even contort itself into feeling like an app simply by telling it what it should be doing at different times

Like if u invoke the skill with a "/" command and no args, it outputs what I would have had a custom tui write. Except I didn't write code I just told it what it's supposed to say if that happens

I cannot believe gstack is what made this click for me but it is

If u want to try the new version, it's so much better:

npx skills add github.com/davis7dotsh/be… --skill btca-local