posh the engine.

670 posts

@poshlovesdata

Data Engineer & AI Systems Builder ⚙️ I build data pipelines, AI agents & automation tools that actually ship.

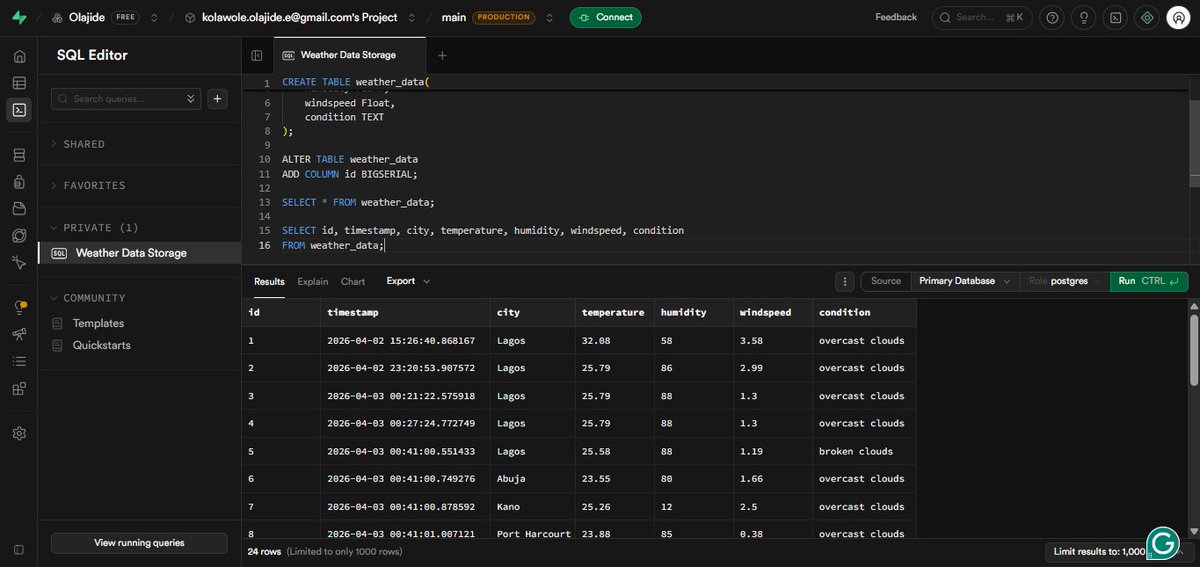

Hi everyone, blessed day 🙏

I started late yesterday, so I ended up working through the night trying to migrate my Python script to the cloud (as I mentioned in my last post). Ran into some real challenges.

When I deployed the script and tried to run it, I kept getting a “localhost” error. Then it clicked: my PostgreSQL database is running locally, so once the script is in the cloud, it can’t reach my local machine.

So I made a shift and decided to move my database online. I explored cloud RDBMS options and chose Supabase (a very powerful platform built on PostgreSQL).

But… another challenge. When I tried connecting my Python script to Supabase and ingesting data from the API, I ran into a host connection error, something I didn’t face locally. Spent time debugging but couldn’t resolve it before calling it a night.

Not going to lie, it was frustrating. But it’s a new day.

Today’s goal: fix the connection issue, get the pipeline running in the cloud, and complete this workflow.

Not giving up now 😎

@Smanmalik83 I think I’m starting to understand the challenges you mentioned.
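The “localhost” error in the post above comes down to where the connection settings point. Here is a minimal sketch, assuming the connection string is supplied through an environment variable. The variable name `DATABASE_URL` and the helper `load_database_url` are hypothetical and not from the original post. It fails fast when a deployed script is still pointing at a local database:

```python
import os
from urllib.parse import urlparse

# Hosts that only resolve on the machine running the script.
LOCAL_HOSTS = {"localhost", "127.0.0.1", "::1"}


def load_database_url(env=None, allow_local=False):
    """Return the Postgres URL from the environment, rejecting local hosts.

    DATABASE_URL is an assumed variable name; use whatever your platform sets.
    """
    env = os.environ if env is None else env
    url = env.get("DATABASE_URL")
    if not url:
        raise RuntimeError("DATABASE_URL is not set")
    host = urlparse(url).hostname
    if host is None:
        raise ValueError("DATABASE_URL has no host component")
    if host in LOCAL_HOSTS and not allow_local:
        raise ValueError(
            f"Database host {host!r} is local; a cloud job cannot reach it"
        )
    return url
```

The returned URL can then be handed to whichever driver the script uses, with the hosted database's own connection string set in the cloud environment. This only catches the local-host case; a host connection error against the hosted database still has to be debugged from the provider's connection details.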

I’ve mostly worked with static datasets in Excel and Power BI. Now I’m taking the next step: working with real-time data.

My next focus: API → Python → SQL → Power BI

Instead of downloading datasets, I want to:
- Pull data directly from APIs
- Clean and transform it using Python
- Store and query it with SQL
- Build dashboards in Power BI

The goal is to move closer to how data is actually handled in real-world scenarios. Still learning, but excited to build this end-to-end workflow.

If you’ve worked with APIs before, I’d appreciate any tips or resources.

@chidirolex @_VictorUgwu @Smanmalik83 @iam_daniiell @ObohX #DataAnalytics #PowerBI #Python #SQL
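The first three stages of that workflow (API → Python → SQL) can be sketched as below. This is an illustration under assumptions, not the author's pipeline: the record fields (`id`, `name`, `value`), the `readings` table, and the function names are all hypothetical, and SQLite stands in for whichever SQL database ends up feeding Power BI.

```python
import json
import sqlite3
from urllib.request import urlopen


def fetch_records(url, timeout=10):
    """Pull a JSON array of records from an API endpoint supplied by the caller."""
    with urlopen(url, timeout=timeout) as response:
        return json.load(response)


def clean_records(records):
    """Keep rows that have an id and a numeric value; trim the name field."""
    cleaned = []
    for row in records:
        try:
            value = float(row["value"])
        except (KeyError, TypeError, ValueError):
            continue  # skip rows with a missing or non-numeric value
        if row.get("id") is None:
            continue  # skip rows that cannot be keyed
        cleaned.append((row["id"], str(row.get("name", "")).strip(), value))
    return cleaned


def store_records(conn, rows):
    """Upsert cleaned rows into a table that SQL queries or a dashboard can read."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS readings "
        "(id INTEGER PRIMARY KEY, name TEXT, value REAL)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO readings (id, name, value) VALUES (?, ?, ?)", rows
    )
    conn.commit()
```

Keying the table on `id` and using `INSERT OR REPLACE` means re-running the pull does not duplicate rows, which matters once the script runs on a schedule.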

i need you guys to promise me something this thing’s hard to build. like really hard. and yes it’s gonna be completely free, idc. i just need y’all to promise me you’re gonna use it and put your friends on and use it. not download and keep, actually use and post about it thanks

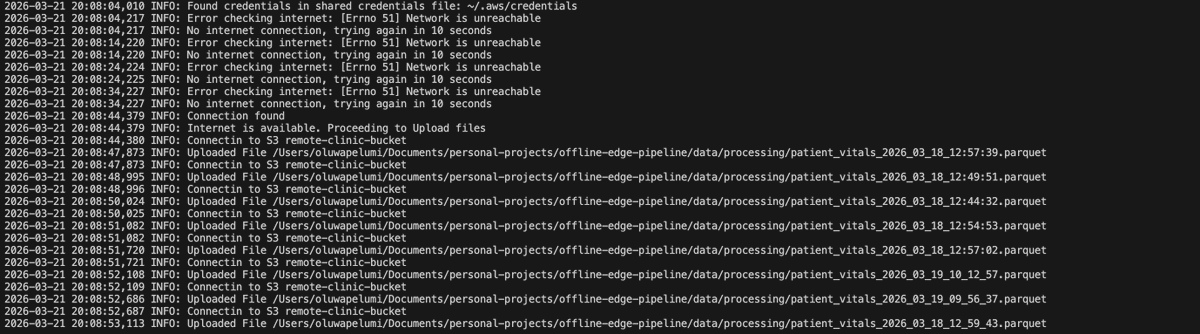

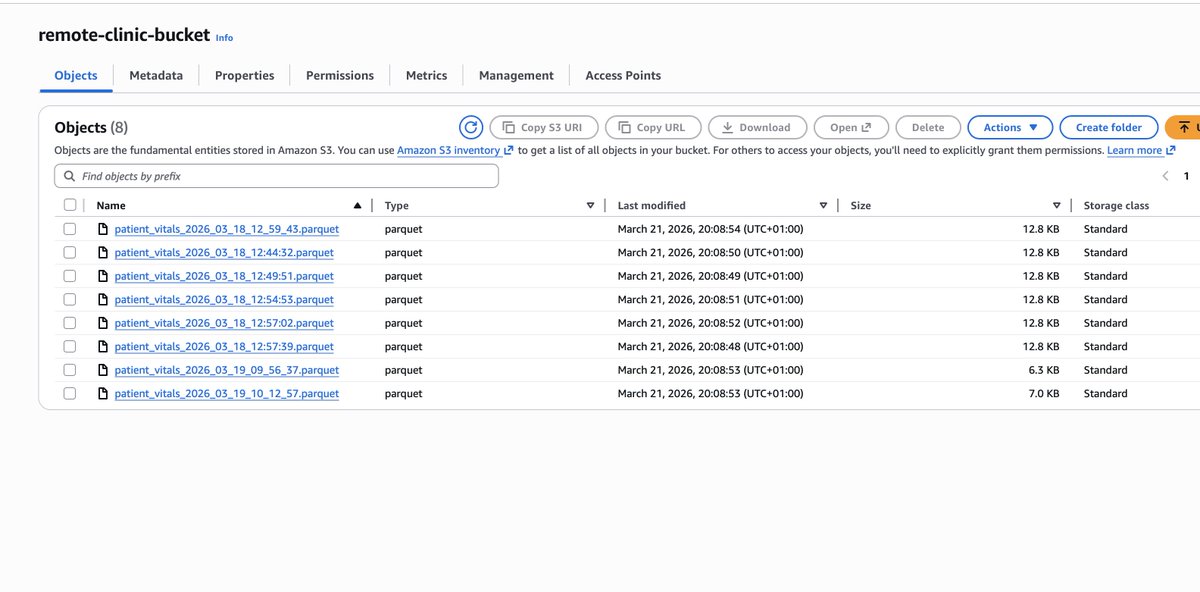

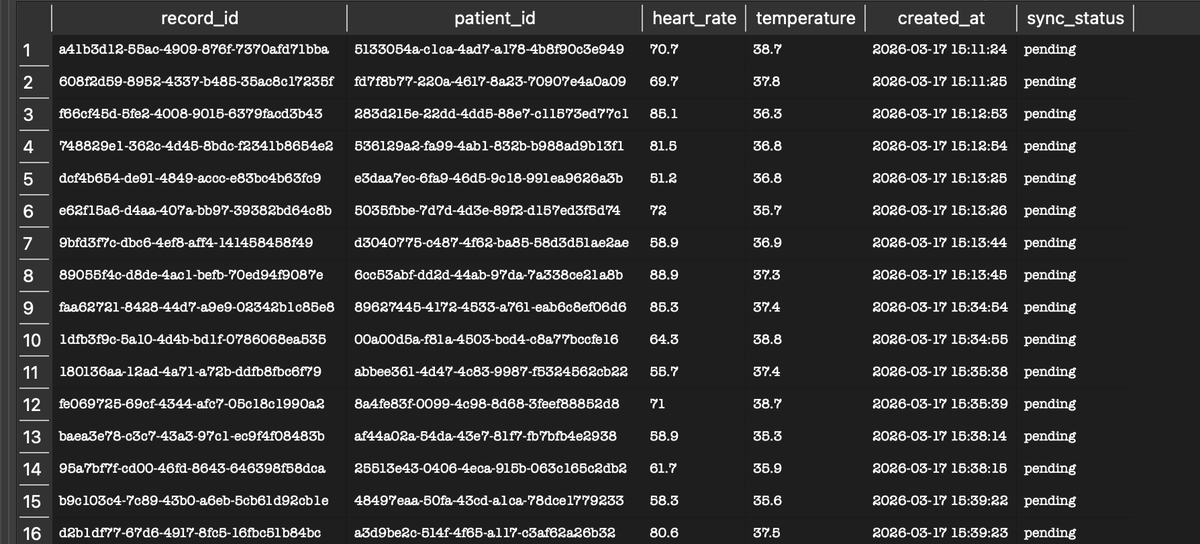

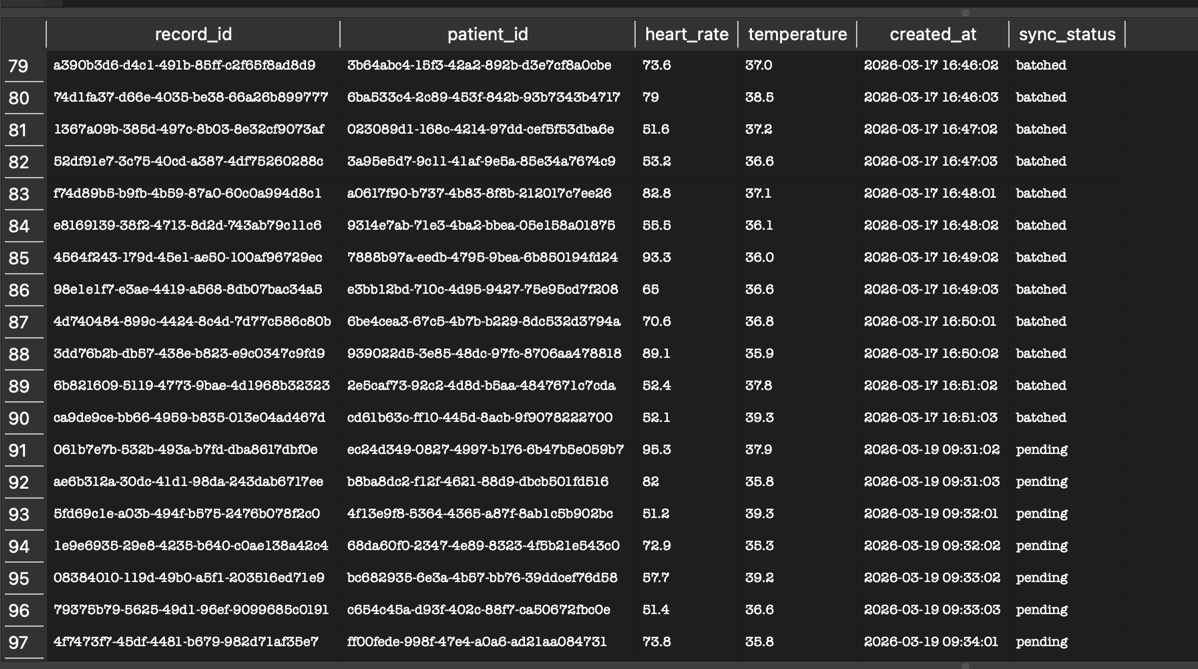

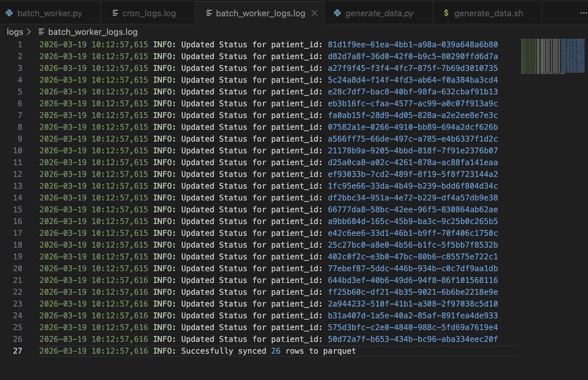

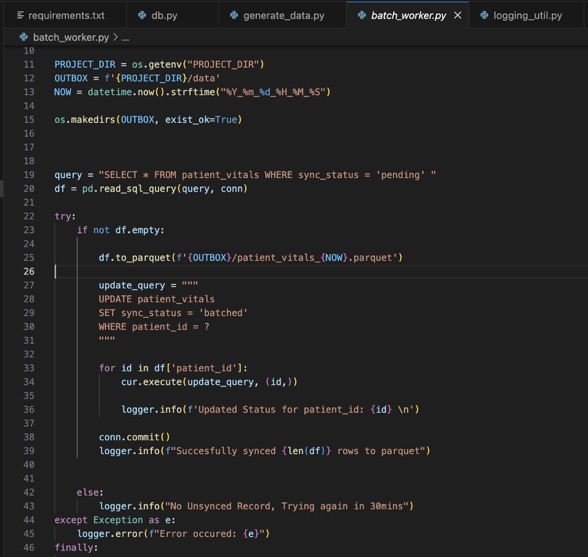

Phase 2: 👀

To prepare the data in the SQLite DB to be sent in a compressed format (Parquet), what did I do?

1. Created a Python script that:
   - Checks the table for unsynced records (records where sync_status is 'pending')
   - Converts just those records into Parquet using Pandas
   - Then updates the sync_status of the converted records (matched by record_id) to 'synced' in the DB

Why did I do this? So that when the script runs again, it only looks for records that haven't been synced yet. Now I have lightweight data that can be sent over a minimal internet connection.

What's next? I'll be creating an uploader script that polls for an internet connection every 10 seconds. Once a connection is confirmed, it uploads the Parquet file into an already provisioned S3 bucket.

#DataEngineering #ETL #Python #DataAnalytics
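A minimal sketch of that export step, assuming the table is `patient_vitals` with `record_id` and `sync_status` columns as named in these posts. The function names and the pluggable `writer` argument are hypothetical additions for illustration, and the default writer assumes pandas plus a Parquet engine such as pyarrow is installed.

```python
import sqlite3


def write_parquet(rows, columns, path):
    """Default writer: pandas with a Parquet engine such as pyarrow (assumed installed)."""
    import pandas as pd  # imported lazily so the sync logic has no hard dependency

    pd.DataFrame(rows, columns=columns).to_parquet(path, index=False)


def export_pending(conn: sqlite3.Connection, path, writer=write_parquet):
    """Write rows with sync_status = 'pending' to `path`, then mark them 'synced'.

    Returns the number of exported rows. Nothing is marked as synced unless
    the writer finishes without raising.
    """
    cursor = conn.execute(
        "SELECT * FROM patient_vitals WHERE sync_status = 'pending'"
    )
    columns = [col[0] for col in cursor.description]
    rows = cursor.fetchall()
    if not rows:
        return 0  # nothing new since the last run
    writer(rows, columns, path)
    id_index = columns.index("record_id")
    conn.executemany(
        "UPDATE patient_vitals SET sync_status = 'synced' WHERE record_id = ?",
        [(row[id_index],) for row in rows],
    )
    conn.commit()
    return len(rows)
```

Updating by the exact `record_id` values that were written, rather than by status, avoids marking rows that arrived between the read and the update.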

I'll definitely use an offline-first approach, and here's how I'd do it:

1. Store the data locally in a lightweight DB like SQLite.
2. Run a cron job to regularly batch and compress that data into Parquet.
3. Run another script that polls for an internet connection, so once a connection comes up, it uploads the Parquet file to remote storage like an S3 bucket.
4. From there, S3 event notifications can trigger the ingestion pipeline to deduplicate and model the data.
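Step 3 above, the polling uploader, might look roughly like this. It is a sketch under assumptions: the connectivity check (opening a TCP socket to a public DNS server) is one common heuristic rather than anything stated in the post, the function names are hypothetical, and the default uploader assumes boto3 is installed with AWS credentials already configured.

```python
import socket
import time


def is_online(host="8.8.8.8", port=53, timeout=3):
    """Best-effort connectivity check: try opening a TCP socket to a public DNS server."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def upload_to_s3(path, bucket, key):
    """Default uploader using boto3 (assumed installed and configured)."""
    import boto3  # lazy import keeps the polling logic usable without AWS

    boto3.client("s3").upload_file(path, bucket, key)


def upload_when_online(path, bucket, key, check=is_online, upload=upload_to_s3,
                       interval=10, max_attempts=None, sleep=time.sleep):
    """Poll every `interval` seconds and upload once a connection is confirmed.

    Returns True after a successful upload, False if max_attempts runs out.
    """
    attempts = 0
    while max_attempts is None or attempts < max_attempts:
        attempts += 1
        if check():
            upload(path, bucket, key)
            return True
        sleep(interval)
    return False
```

Passing `check`, `upload`, and `sleep` as arguments keeps the loop easy to exercise without a network; in a real deployment the upload would also need retry handling for a connection that drops mid-transfer.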

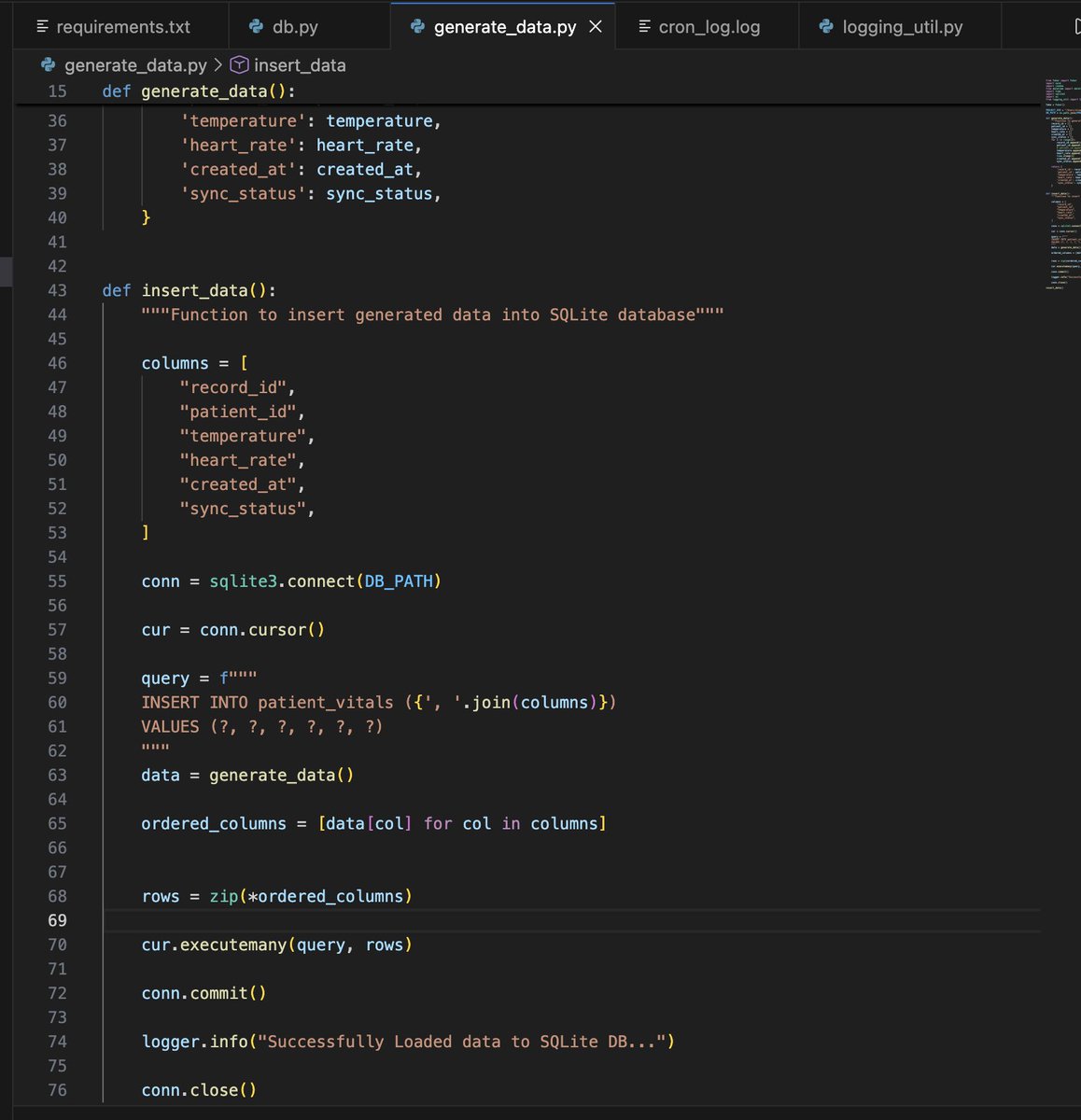

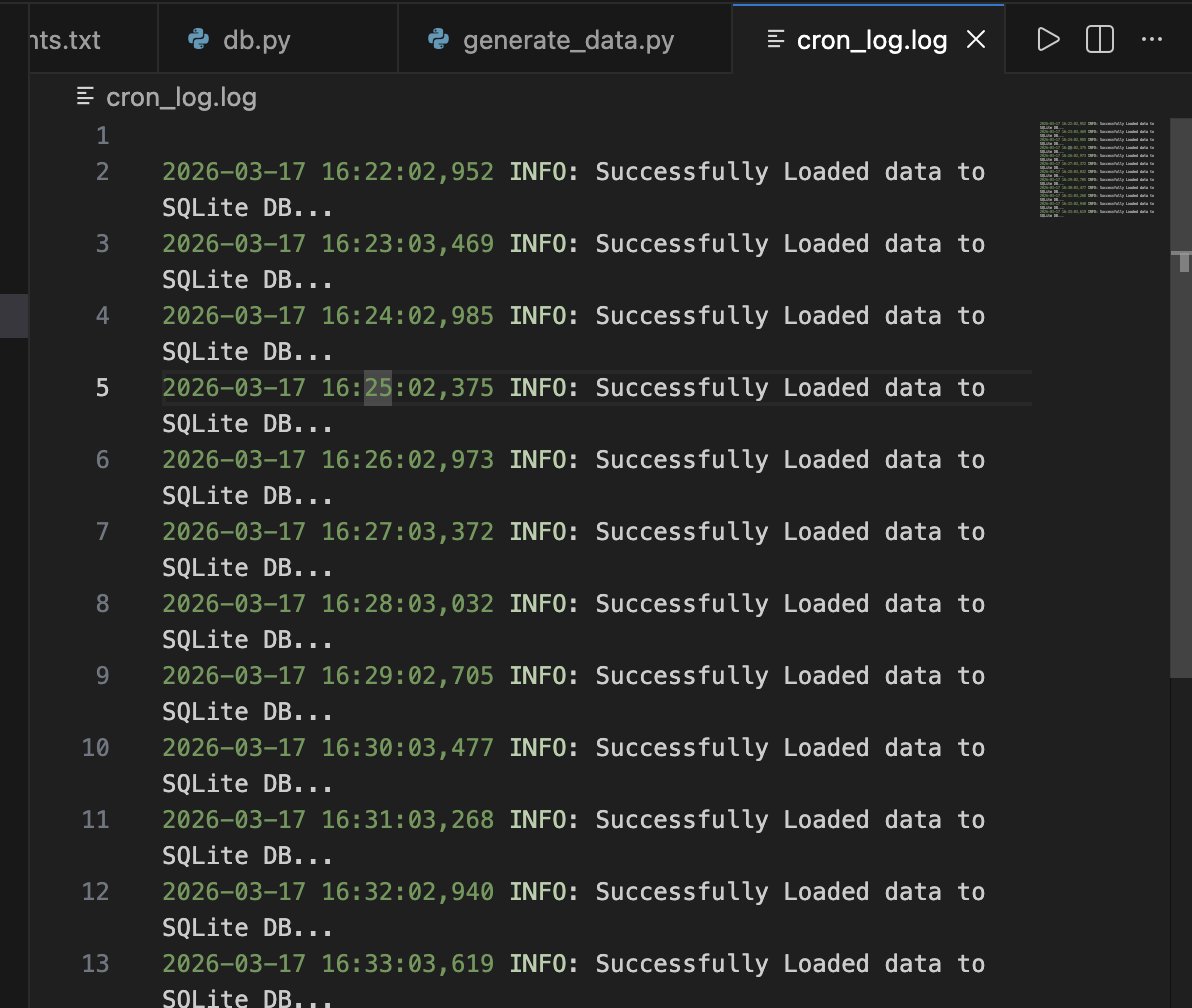

Proof of Concept: 😶‍🌫️

So I started working on this as a project. What I've done:

1. Initialized an SQLite database and created a table called patient_vitals to store patients' vitals.
2. Built a Python script to generate and load data into the SQLite database.
3. Automated the data generation and loading with a cron job, which currently runs every minute (I'll eventually change it to every 10 minutes) to simulate actual data entry in a health facility.

What's next? Preparing the data as highly compressed payloads (Parquet) so it's ready the millisecond internet is available in the health facility.
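Steps 1 and 2 above could be sketched as follows. Only the table name `patient_vitals` comes from the post; the columns other than `record_id` and `sync_status`, the value ranges, and the function names are assumptions made up for illustration.

```python
import random
import sqlite3
from datetime import datetime, timezone

# Assumed schema: only the table name is taken from the post.
SCHEMA = """
CREATE TABLE IF NOT EXISTS patient_vitals (
    record_id   INTEGER PRIMARY KEY AUTOINCREMENT,
    patient_id  TEXT NOT NULL,
    heart_rate  INTEGER,
    temperature REAL,
    recorded_at TEXT NOT NULL,
    sync_status TEXT NOT NULL DEFAULT 'pending'
)
"""


def generate_vitals(rng, n_patients=50):
    """Return one synthetic reading; the value ranges are illustrative only."""
    return (
        f"P{rng.randint(1, n_patients):04d}",
        rng.randint(55, 120),
        round(rng.uniform(35.5, 40.0), 1),
        datetime.now(timezone.utc).isoformat(),
    )


def load_batch(conn, batch_size=5, rng=None):
    """Create the table if needed and insert `batch_size` synthetic readings."""
    if rng is None:
        rng = random.Random()
    conn.execute(SCHEMA)
    conn.executemany(
        "INSERT INTO patient_vitals "
        "(patient_id, heart_rate, temperature, recorded_at) VALUES (?, ?, ?, ?)",
        [generate_vitals(rng) for _ in range(batch_size)],
    )
    conn.commit()
    return batch_size
```

A script that calls `load_batch(sqlite3.connect("vitals.db"))` (file name hypothetical) could then be scheduled with a crontab entry using `* * * * *` for every minute, or `*/10 * * * *` for every 10 minutes. New rows default to `sync_status = 'pending'`, so a later export step can pick them up.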