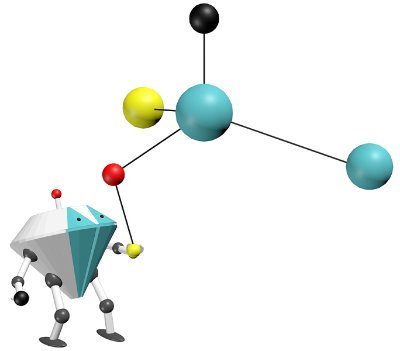

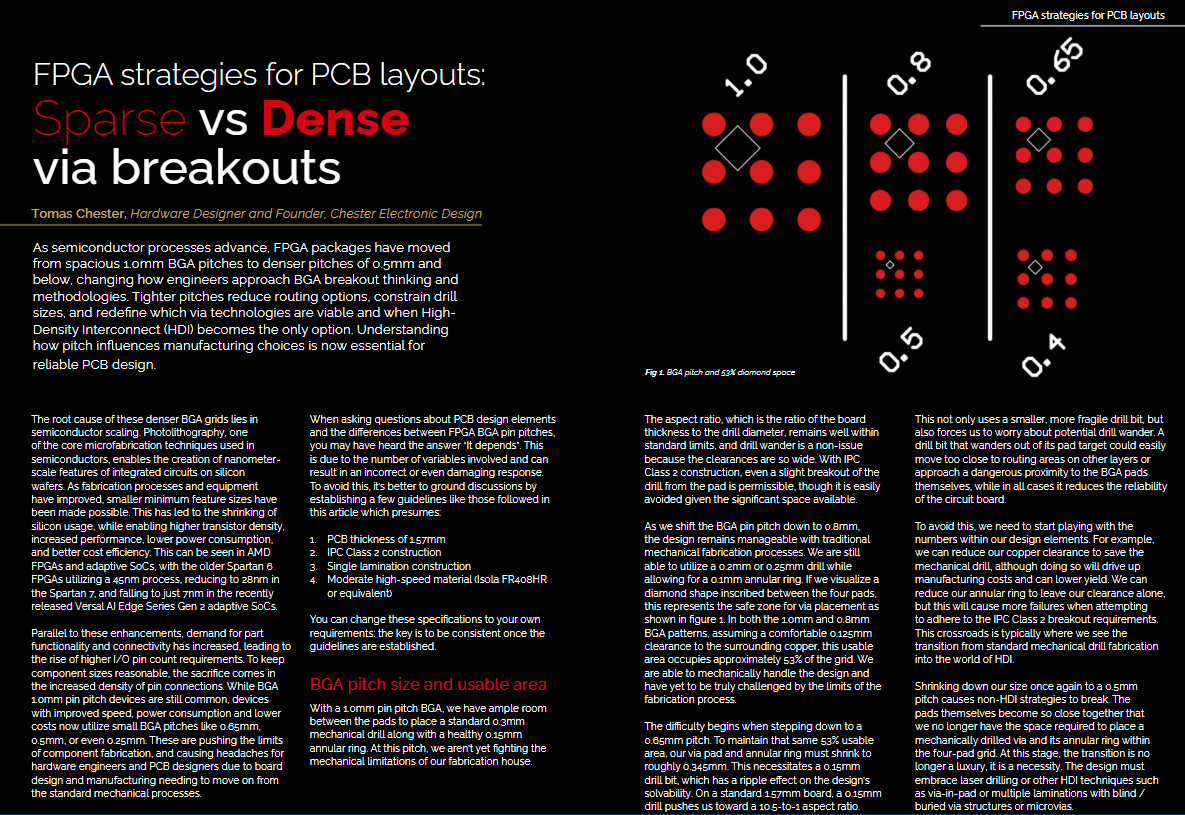

Neural nets don’t just forget. Sometimes, after long training, they lose the ability to learn at all. In our #ICLR2026 poster, we model Loss of Plasticity as gradient dynamics trapped in invariant manifolds: 🔴 frozen units, 🔵 cloned units. The video makes the traps visible.