Jason Yim

@json_yim

Past: @Xaira_Thera, @MIT_CSAIL PhD, @GoogleDeepMind. Interests: generative models, LLMs, science.

AI for Bio is hot again. Given that, I wrote a primer on why this field is so hard. tl;dr it's because the APIs are fuzzier than you might think. ankitg.me/blog/2026/05/0…

New paper! Presenting Discrete Flow Maps: paper: arxiv.org/abs/2604.09784 blog: malbergo.me/discrete-flow-… A laughable problem for me these days is that @nmboffi and I share a research brain, and we have had, time and again, a conversation that ends with “ha so I guess we’re writing the same paper.” Soon we will return to just doing it together :). Here we are doing it again with discrete flow maps and flow language models! A complete and thorough paper led by @PPotaptchik @json_yim @adhisarav @peholderrieth. We took a bit of time to post it to make sure we understood a few more things about the stability of the loss functions. Like @osclsd, @FEijkelboom, and @nmboffi, we think this could be a very helpful paradigm for thinking about fast inference and even better alignment! Here’s our version of the story, and I hope it makes clear how greenfield this research direction is: we provide a comprehensive picture of the KL losses you can write from the properties of the flow map, some nice geometric proofs about the mean denoiser and the simplex, and we find that, at this time, the ESD can actually be the most performant, with some caveats. Excited for everyone to work together and push this class of models to their limit!

What if AI could invent enzymes that nature hasn’t seen? 👩‍🔬🧑‍🔬 Introducing 🪩 DISCO: Diffusion for Sequence-structure CO-design. Fourteen rounds of directed evolution and over a year of wet-lab work: that's what it took to engineer an enzyme for selective C(sp³)–H insertion, one of the most challenging transformations in organic chemistry. DISCO surpasses this with a single plate. No pre-specified catalytic residues, no template, no theozyme, no inverse folding: just joint diffusion over protein sequence and structure. 📝 Blog: disco-design.github.io 📄 Paper: arxiv.org/abs/2604.05181 💻 Code: github.com/DISCO-design/D…

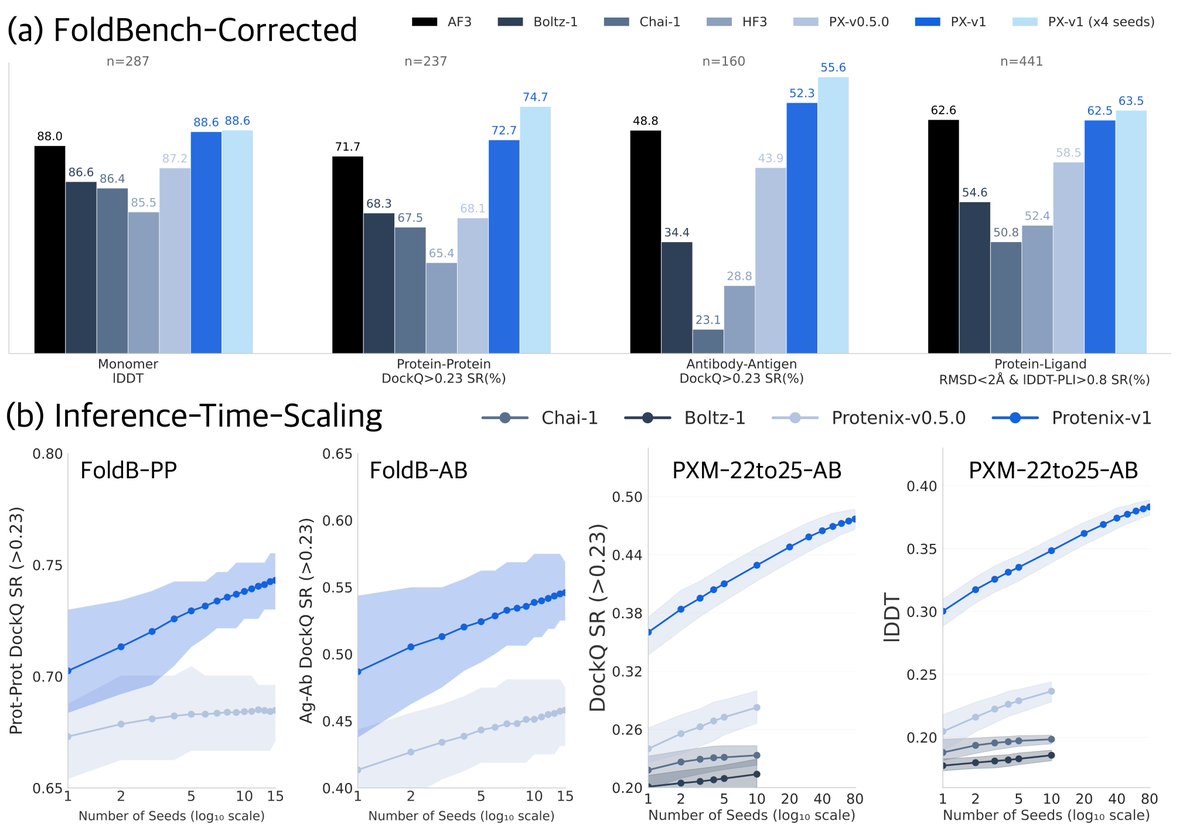

Protenix-v2: A Biomolecular Modeling System for Structure Prediction and Zero-Shot Antibody Design @ai4s_protenix
1. Protenix-v2 achieves massive gains in antibody-antigen structure prediction, with improvements of up to 13 points over Protenix-v1 at DockQ > 0.23 and comparable gains at the stricter DockQ > 0.8 threshold. Most remarkably, its 5-seed performance surpasses previous 1000-seed results, a dramatic leap in sampling efficiency.
2. The system achieves a 100% target-level success rate in zero-shot VHH antibody design across novelty-controlled targets, with BLI-confirmed hit rates ranging from 2% to 48%. The resulting hits show exceptional developability, with pass rates of 100% for thermostability, 98% for self-interaction, and 93% for polyreactivity.
3. On challenging GPCR targets with small, flexible exposed epitopes, Protenix-v2 achieves hit rates of 16% to 88% in VHH-Fc format and up to 50% in mAb format despite testing only 16 to 30 designs per target, demonstrating strong sample efficiency on difficult membrane proteins.
4. The model introduces training-free guidance (TFG) variants that significantly improve ligand-related plausibility, reaching a 60.46% success rate on recent protein-ligand benchmarks under a revised, stricter validity criterion that checks for planarity around sp2 centers and non-planarity at sp3 centers.
5. Protenix-v2 successfully designs dual-specific binders against both the prototype and Omicron SARS-CoV-2 RBD variants with nanomolar-scale KD, suggesting possible compensatory mechanisms at the structural level that accommodate the sequence differences.
6. The system supports flexible, target-conditioned generation with fine-grained control over CDR loop lengths and integration of predefined frameworks, spanning diverse formats from miniproteins to VHH and full-length antibodies.
💻Code: github.com/bytedance/Prot… 📜Paper: github.com/bytedance/Prot… #ProtenixV2 #AntibodyDesign #StructurePrediction #AlphaFold3 #BiomolecularModeling #DrugDiscovery #GPCR #MachineLearning #ComputationalBiology #ZeroShotDesign

BREAKING: Anthropic Acquires 9-Person Biotech Startup For $400 Million >be coefficient bio >founded the startup 6 months ago >build AI platform for biotech >less than 10 employees >acquired by anthropic for ~$400 million > = $40+ million per head. Coefficient Bio was building an AI platform for biotech tasks: planning drug R&D, managing clinical regulatory strategy, and identifying new drug opportunities. The team is joining Anthropic’s healthcare and life sciences group led by Eric Kauderer-Abrams. Anthropic is building specialized tools for industries that actually pay enterprise rates: >software engineering >cybersecurity >life sciences >healthcare >finance. Meanwhile, OpenAI is buying media companies to control narratives LMAO

🚀 MIT Flow Matching and Diffusion Lecture 2026 Released (diffusion.csail.mit.edu)! We just released our new MIT 2026 course on flow matching and diffusion models! We teach the full stack of modern AI image, video, and protein generators, in both theory and practice. We include: 📺 Videos: step-by-step derivations. 📝 Notes: mathematically self-contained lecture notes. 💻 Coding: hands-on exercises for every component. We thoroughly revised last year’s iteration and added new topics: latent spaces, diffusion transformers, and building language models with discrete diffusion models. Everything is available here: diffusion.csail.mit.edu A huge thanks to Tommi Jaakkola for his support in making this class possible and to Ashay Athalye (MIT SOUL) for the incredible production! It was fun to do this with @RShprints! #MachineLearning #GenerativeAI #MIT #DiffusionModels #AI

Today we share a technical report demonstrating how our drug design engine achieves a step-change in accuracy for predicting biomolecular structures, more than doubling the performance of AlphaFold 3 on key benchmarks and unlocking rational drug design even for examples it has never seen before. Head to the comments to read our blog.

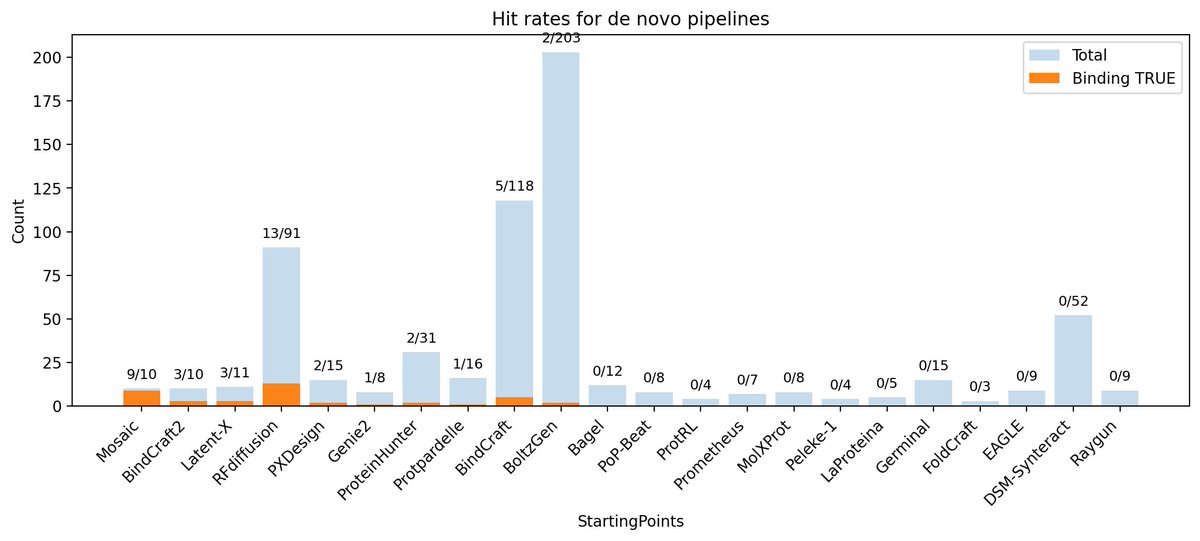

Huge congratulations to Nick Boyd and Mosaic, which absolutely killed it in the competition! BindCraft2 also did pretty well, with the second-highest hit rate in the competition!

Also, please share 🤓: I'll be at NeurIPS Dec 4-8. I am hiring PhD students and postdocs this year to start at @Harvard @KempnerInst. We work on problems across ML, applied math, probability, and biology, with the goal of everyone learning from each other. Find me at @NeurIPSConf, DM me, or shoot me an email! For a flavor of recent topics, see: malbergo.me/papers.html malbergo.me/research-theme…