Oliver🏴‍☠️

1.6K posts

@CodeWithOllie

Indie founder 🇯🇲 | Fitness | Stoicism

At $599, MacBook Neo is evidence of Apple closing its Mac price umbrella. That’s a BIG deal when contemplating potential Mac unit sales.

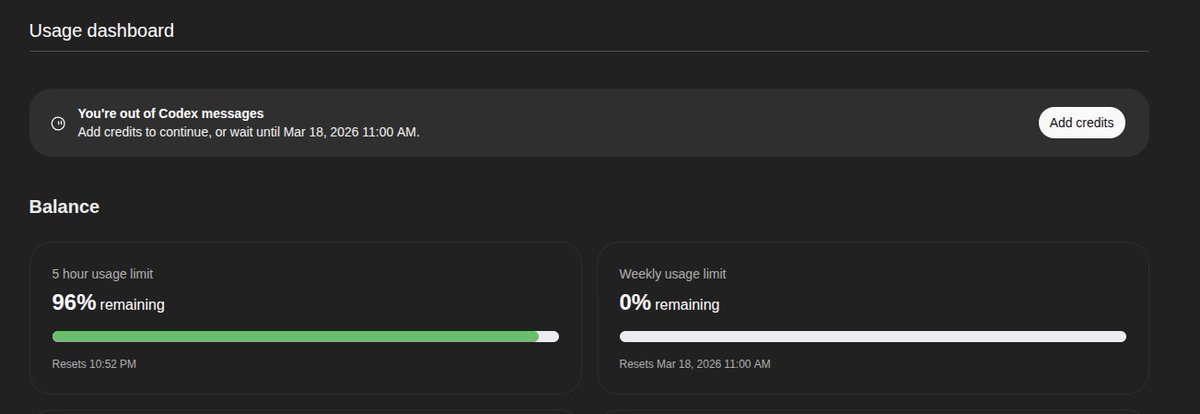

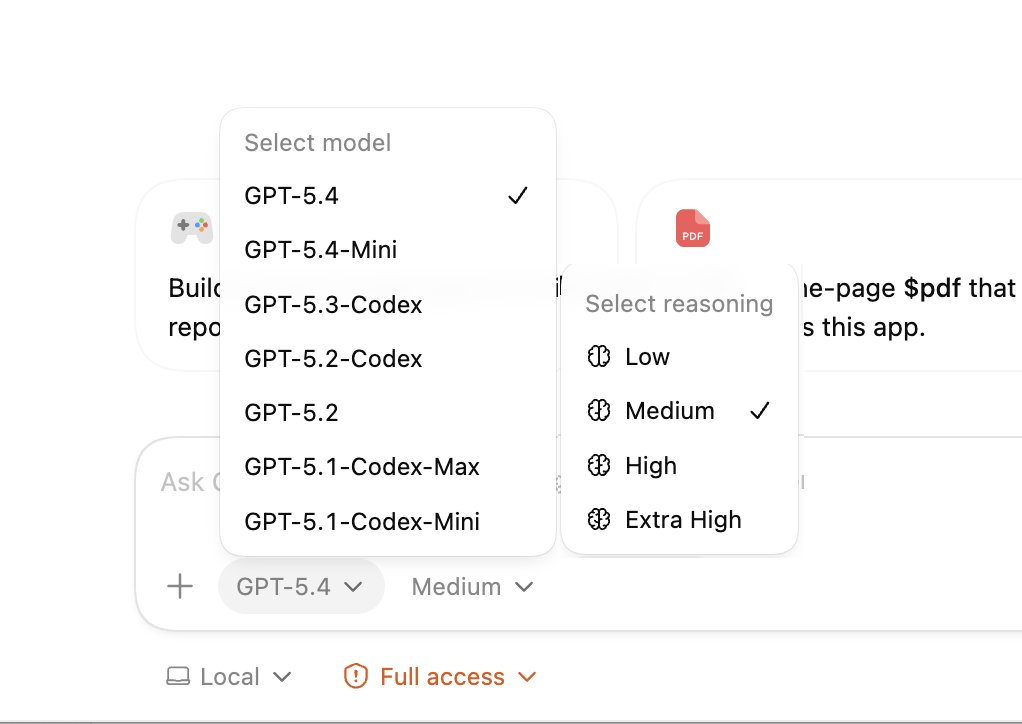

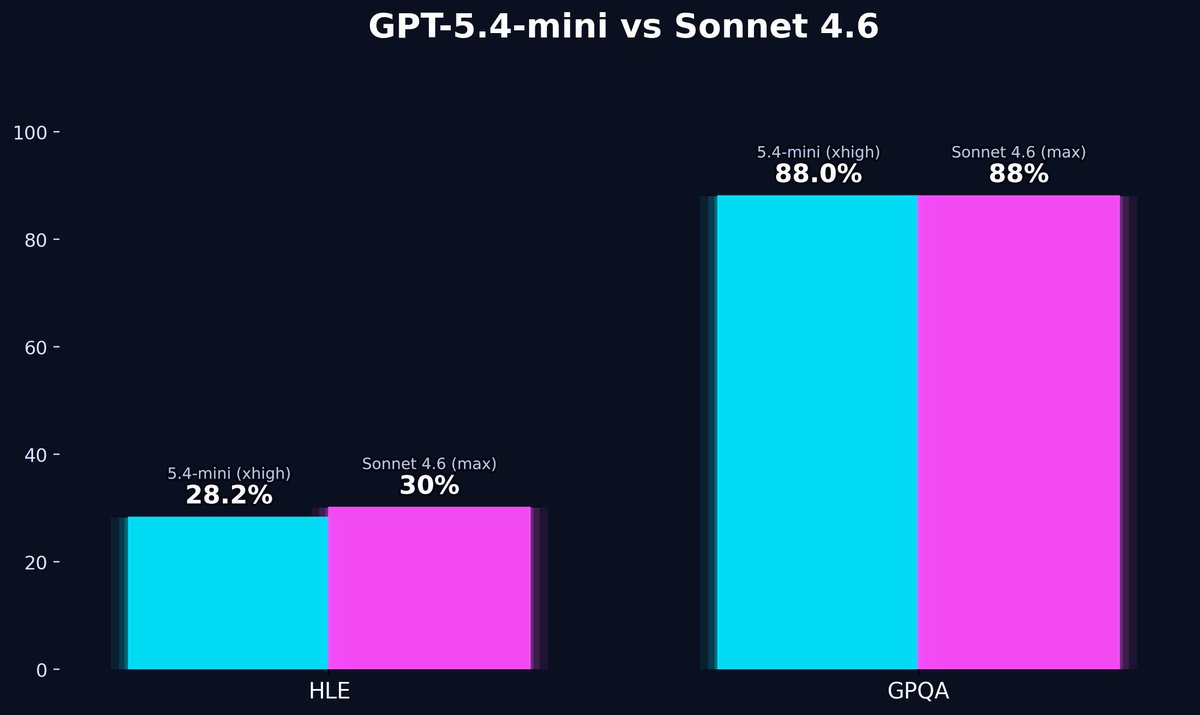

Some things I learned this week:

1. GPT-5.4/Codex at more than 256k max tokens doesn't help and is too expensive (that's why I ran out of usage btw). The models still don't do great past 200k context and I can get basically infinite context using subagents anyway.
2. To be fair to OpenAI I did spam the hell out of it over 3 devices working in 4-10 sessions 24/7 especially with Autoresearch. This is very generous, and I love the app a lot.

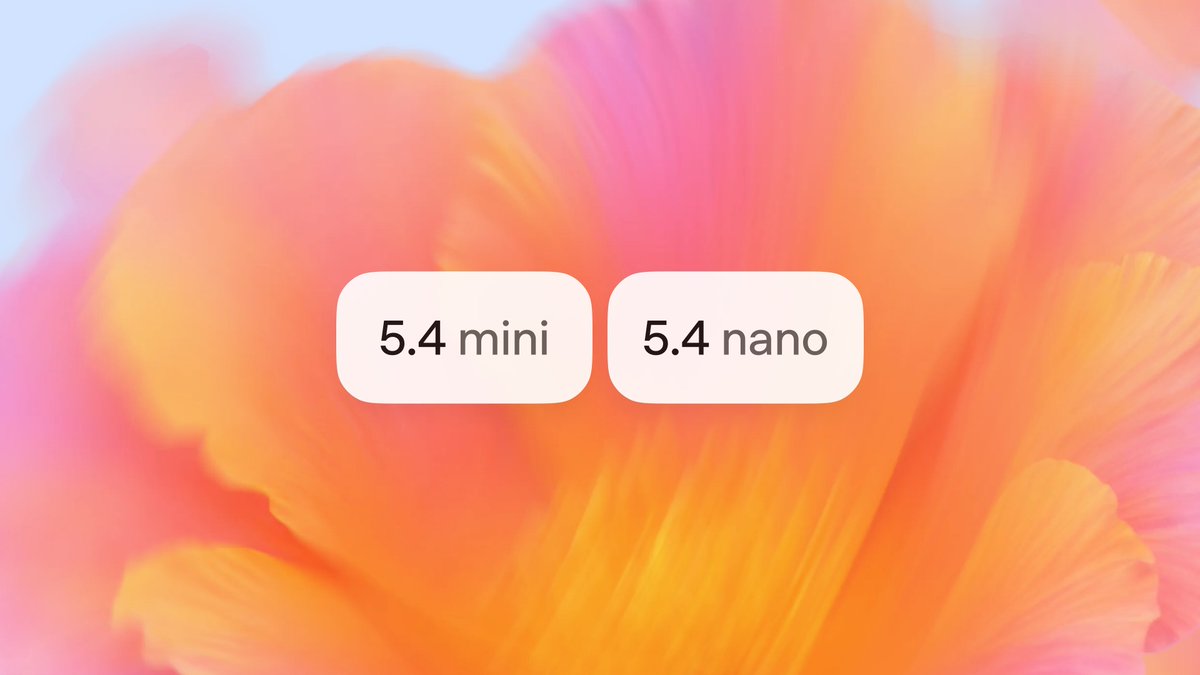
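A rough sketch of the subagent idea from the post above: instead of feeding one model everything, pack the material into batches that each fit a per-agent budget, give each batch to its own subagent, and keep only the short results in the parent. Everything here is an assumption for illustration: `estimate_tokens`, `pack_into_batches`, `run_with_subagents`, and the `subagent` callable are hypothetical names, the word count is a crude stand-in for a real tokenizer, and no actual OpenAI or Codex API is called.

```python
from typing import Callable, List

# Assumed per-subagent budget, loosely based on the 200k figure in the post.
TOKEN_BUDGET = 200_000


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: count whitespace-separated words."""
    return len(text.split())


def pack_into_batches(docs: List[str], budget: int = TOKEN_BUDGET) -> List[List[str]]:
    """Greedily group documents so each batch stays within the budget.

    A single document larger than the budget still gets its own batch;
    splitting it further is left out of this sketch.
    """
    batches: List[List[str]] = []
    current: List[str] = []
    used = 0
    for doc in docs:
        cost = estimate_tokens(doc)
        # Start a new batch when adding this document would exceed the budget.
        if current and used + cost > budget:
            batches.append(current)
            current, used = [], 0
        current.append(doc)
        used += cost
    if current:
        batches.append(current)
    return batches


def run_with_subagents(
    docs: List[str],
    subagent: Callable[[List[str]], str],
    budget: int = TOKEN_BUDGET,
) -> List[str]:
    """Hand each batch to its own subagent; the parent keeps only the results."""
    return [subagent(batch) for batch in pack_into_batches(docs, budget)]
```

In practice `subagent` would wrap a model call that returns a short summary, so the parent's context grows with the number of summaries rather than with the size of the raw material.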

GPT-5.4 mini is available today in ChatGPT, Codex, and the API. Optimized for coding, computer use, multimodal understanding, and subagents. And it’s 2x faster than GPT-5 mini. openai.com/index/introduc…