Multivac

332 posts

@CosmicMultivac

Trying to get up to speed on machine learning. Math and puzzle nerd.

Joined April 2025

1.4K Following · 51 Followers

@karpathy @prathyvsh Can you share some examples of how this works for you?

The core idea is that this lets you skip writing, but it doesn’t let you skip reading and thinking. And the surprising result is that this works. Personally, I process most of what I file by reading it, reading its summary, reading the LLM’s opinion on how it fits into the wiki and what is new or surprising, etc. It depends on the documents; this is flexible and up to you.

@wordsofteekay I am following your learning journey closely. Any advice for someone beginning machine learning? I have been going through Karpathy's "Zero to Hero" video series and implementing as I go along.

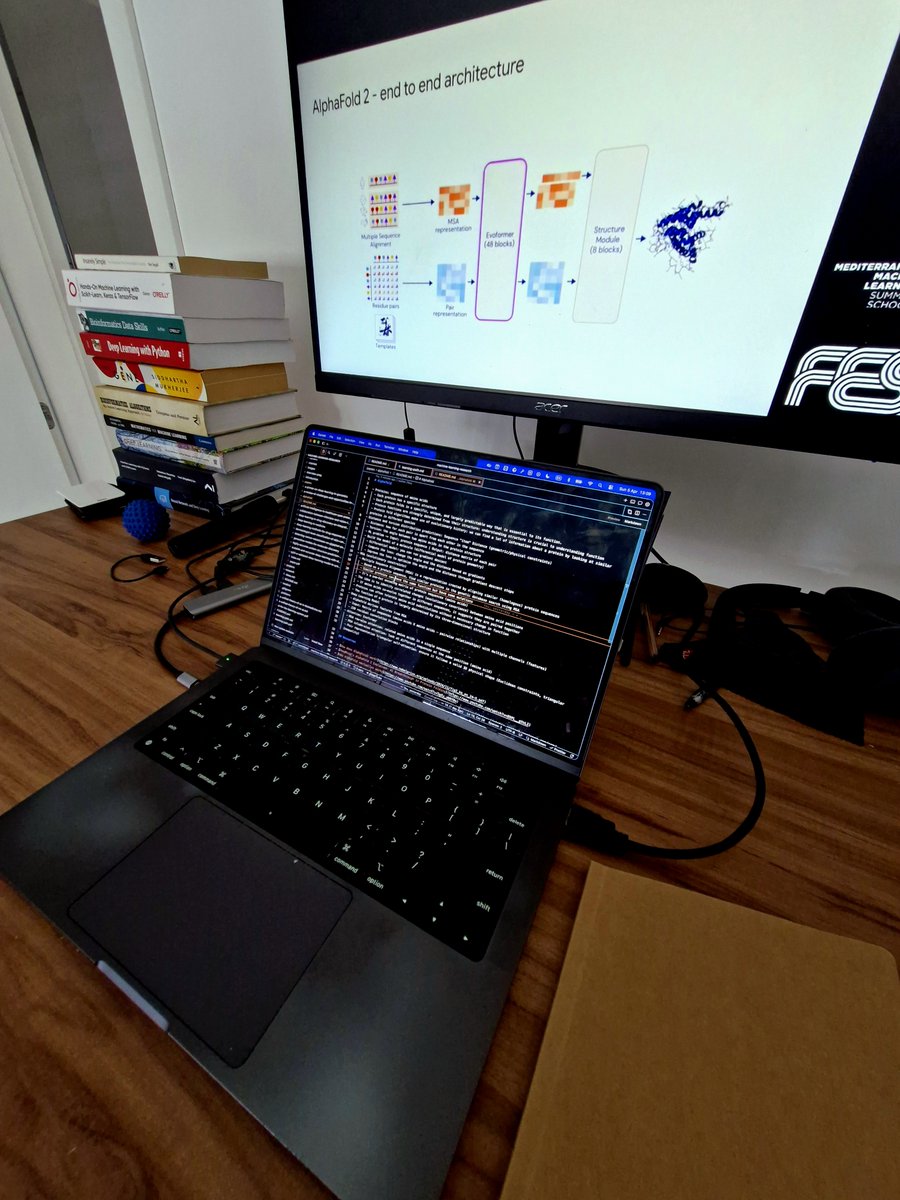

[ML Grind]

Focusing on foundation work:

> Deep Learning/LLM/ML foundation studies

> Bio x AI research: unwrapping AlphaFold

> Finished the Machine Learning System Design book

Documenting everything in my physical notebook and the ML research repo: github.com/imteekay/machi…

@GrothendiecksG @burkov He might not be Gauss. But look at the open problems Tao has solved. I don’t know anyone that prolific.

@CosmicMultivac @burkov I wholeheartedly agree with the other people pointing out how unnecessarily snarky this person is, but "modern-day Gauss" is super wild

Multivac retweeted

@Inquisition1776 @JoJoFromJerz @RpsAgainstTrump You should look at all the evidence and make up your own mind. I suspect that you dismissed the evidence because you supported him. I question your character.

@CosmicMultivac @JoJoFromJerz @RpsAgainstTrump We would ask you for proof but we know you don’t have anything substantive

@JoJoFromJerz @RpsAgainstTrump It’s a statement of fact? What is the disconnect?

@JBarnett68 @WalshFreedom "Abandoned conservative principles" because of his opposition to Trump?

@WalshFreedom You’ve abandoned conservative principles and ideals many years ago—so it’s no surprise conservatives will react that way.

But the Dems? Also no surprise they would act that way, too—because they insist you toe the line.

Me: “I’m a proud Zionist, a huge supporter of Israel, and I do NOT believe Israel committed genocide, and I do NOT believe Israel is an apartheid state.”

The Left: “Fuck you Joe, I’m done following you.”

Me: “I oppose Trump’s stupid, illegal war against Iran, and I oppose Netanyahu.”

The MAGA Right: “Fuck you Joe, I’m done following you.”

@yacineMTB Do you worry that using AI will lead to brain rot and some of the critical thinking skills you have will wither away?

Multivac retweeted

New art project.

Train and inference GPT in 243 lines of pure, dependency-free Python. This is the *full* algorithmic content of what is needed. Everything else is just for efficiency. I cannot simplify this any further.

gist.github.com/karpathy/8627f…

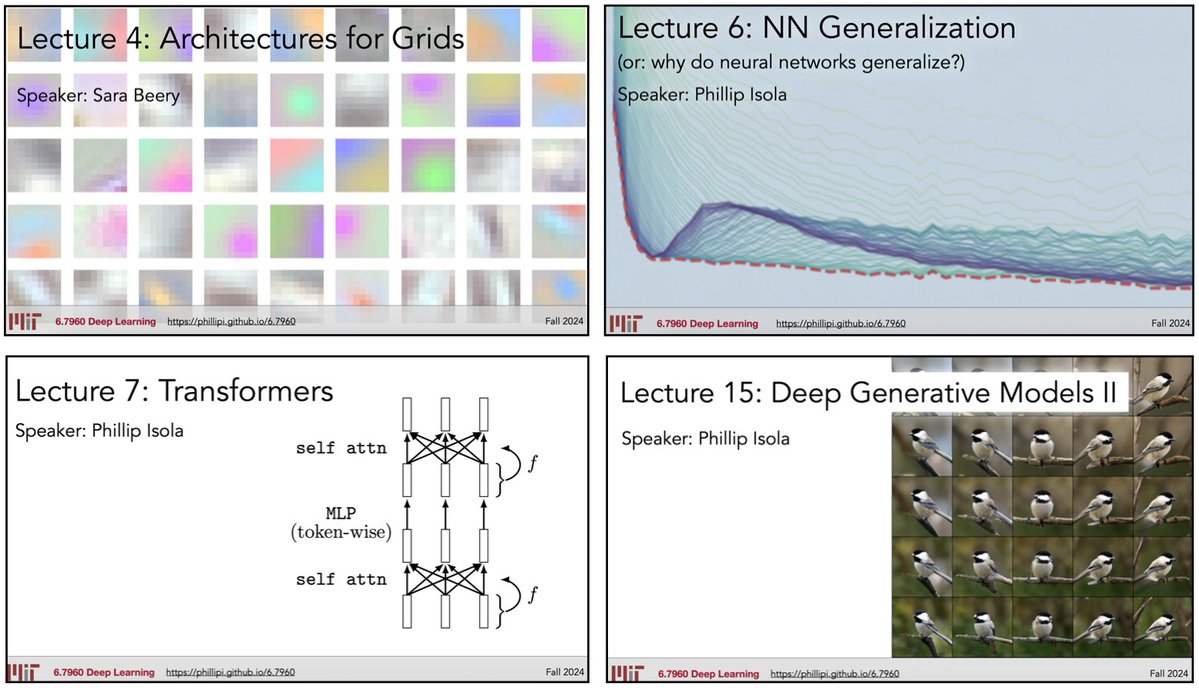

Multivac retweeted

Our grad-level "Deep Learning" course (MIT's 6.7960) is now freely available online through OpenCourseWare: ocw.mit.edu/courses/6-7960…

Lecture videos, psets, and readings are all provided.

Had a lot of fun teaching this with @sarameghanbeery and @jxbz!

@thsottiaux The vscode extension is completely broken. I keep getting "Reconnecting 1/5, 2/5 etc" despite resetting auth cache, reinstalling, changing versions etc. Please fix this.

@pmddomingos There are fresh comp sci graduates coming out of good schools who are still looking for jobs. I don't know how wise your advice is. Can you elaborate?

@justindeanlee Do you think a person disappears if you stop thinking of him?

@petergyang @navneet_rabdiya Yes, far more thorough, fewer bugs and polished code.

@navneet_rabdiya I thought Codex was supposed to be more thorough at generating correct code

Multivac retweeted

Hugo Duminil-Copin, French mathematician and 2022 Fields Medalist, told me he never participated in math competitions and was very bad at them.

Innovative mathematics requires creativity, intuition, intense concentration, and long reflections, sometimes spread over several years.

Good performance at a math olympiad merely tests fast problem solving abilities. AI can do that nowadays.

One of the big activities of a researcher, in mathematics and elsewhere, is not to answer questions but to ask the right questions.

Multivac retweeted

With my experience and everything I know, I could come to any mostly white-collar company, talk to people about what their job tasks are, and architect a way to replace some of those tasks with AI, saving between 20% and 35% of costs to the employer or increasing productivity by the same amount.

I could do that, but knowing how simple it is today, I feel zero motivation to do that.
