Mark Howell

@MNWH

research engineer; control systems; maker; innovator; programmer; dad. Uganda - UK - USA - NZ - ... If you follow me & have 0 posts I will remove and block you

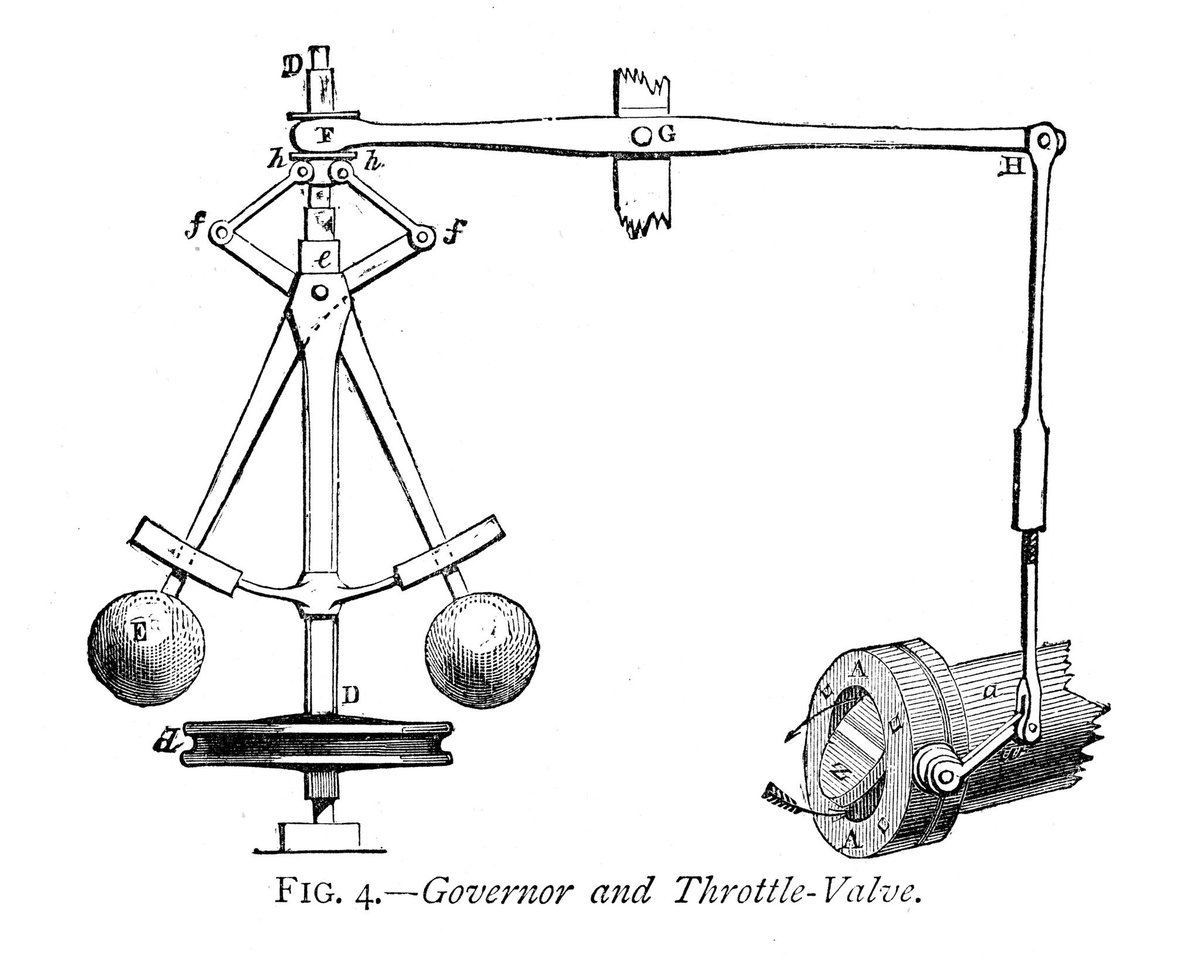

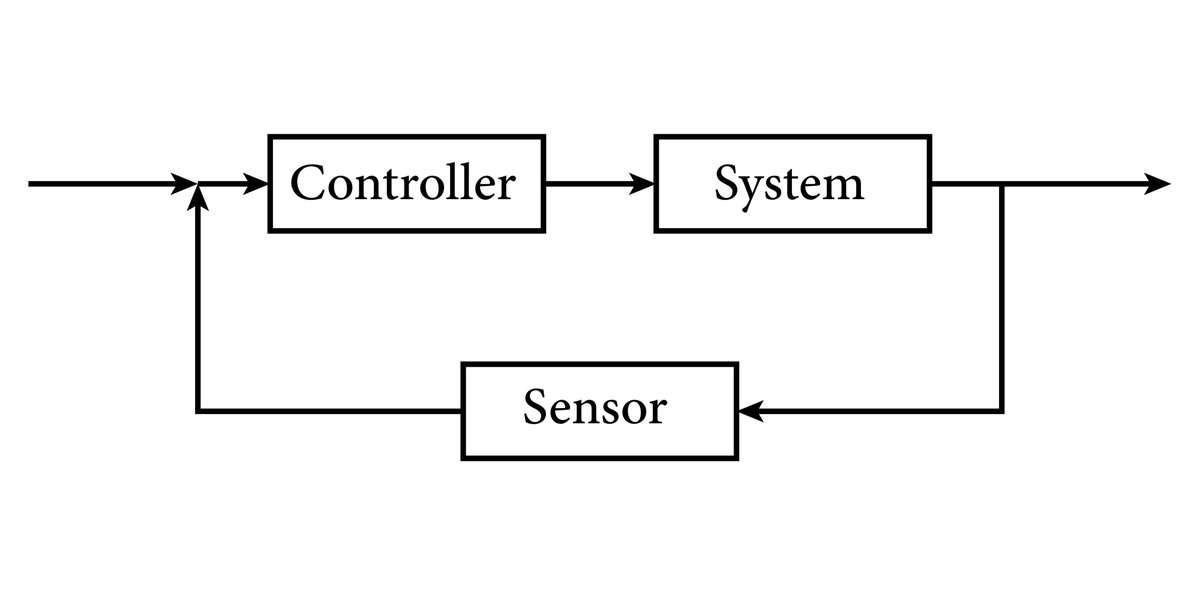

The Dwarkesh/Andrej interview is worth watching. Like many others in the field, my introduction to deep learning was Andrej’s CS231n. In an era when many are engaged in wishful thinking driven by simple pattern matching (e.g., extrapolating scaling laws without nuance), it’s refreshing to hear an influential voice that is tethered to reality.

One clarification for the podcast: when Andrej says humans don’t use reinforcement learning, he is really saying that humans don’t use returns as learning targets. His example of LLMs struggling to learn to solve math problems from outcome-based rewards also illustrates the problem with learning directly from returns.

Fortunately for RL, this exact problem is solved by temporal difference (TD) learning. All sample-efficient RL algorithms that show human-like learning (e.g., sample-efficient learning on Atari, and our work on learning from experience directly on a robot) rely on TD learning.

Now, Andrej is not primarily an RL person. These days he looks at RL through the lens of LLMs, and all RL done in LLMs uses returns as targets, so it’s understandable that he assumes RL is all about learning from observed returns. But this assumption leads him to the incorrect conclusion that RL needs process-based dense rewards to work.

If you embrace TD learning, you don’t necessarily need a dense reward. Once you have learned a value function that encodes useful knowledge about the world, you can learn on the fly in the absence of rewards, just like humans and animals. This is possible because in TD learning there is no difference between learning from an unexpected reward and learning from an unexpected change in perceived value.
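To make that last point concrete, here is a minimal tabular sketch (not code from the post or from any cited work). It assumes the standard TD(0) error δ = r + γV(s′) − V(s) and a plain Monte Carlo update toward an observed return G; the state names "a" and "b", the step size alpha = 0.5, the discount gamma = 0.9, and the previously learned value V["b"] = 1.0 are all hypothetical numbers chosen for illustration. It shows an unexpected reward and an unexpected jump in perceived value producing the same TD error, while a return-based learner that has not yet seen any reward has nothing to learn from.

```python
# Minimal tabular sketch (hypothetical states and numbers, for illustration only).

def td0_update(V, s, r, s_next, alpha=0.5, gamma=0.9, terminal=False):
    """TD(0): move V[s] toward r + gamma * V[s_next]; return the TD error."""
    # Bootstrap from the current estimate of the next state (0 at termination).
    bootstrap = 0.0 if terminal else gamma * V[s_next]
    delta = r + bootstrap - V[s]
    V[s] += alpha * delta
    return delta


def mc_update(V, s, G, alpha=0.5):
    """Monte Carlo: move V[s] toward the observed return G; return the error."""
    delta = G - V[s]
    V[s] += alpha * delta
    return delta


# Case 1: an unexpected reward. "a" was valued at 0, then a reward of 0.9
# arrives and the episode ends.
V1 = {"a": 0.0}
delta_reward = td0_update(V1, "a", r=0.9, s_next=None, terminal=True)

# Case 2: no reward at all, but the agent lands in "b", whose previously
# learned (hypothetical) value is 1.0. The target 0 + 0.9 * 1.0 yields the
# same TD error as Case 1, so "a" is updated without any reward.
V2 = {"a": 0.0, "b": 1.0}
delta_value = td0_update(V2, "a", r=0.0, s_next="b")

# Case 3: the same transition seen by a return-based learner whose episode is
# truncated before any reward arrives: its observed return is 0, so V["a"]
# does not move even though "b" is known to be valuable.
V3 = {"a": 0.0, "b": 1.0}
delta_mc = mc_update(V3, "a", G=0.0)

print(delta_reward, delta_value, delta_mc)  # the two TD errors match; the MC error is 0
print(V1["a"], V2["a"], V3["a"])
```

The point of Case 2 is that the TD learner never needed a dense or process-based reward: the learned value of "b" plays the role of the reward signal. The Monte Carlo update in Case 3 is a deliberately simplified stand-in for "returns as targets", not a model of any specific LLM training pipeline.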