Eric Todd

@ericwtodd

Computer Science PhD Student at Northeastern University

Why do distilled diffusion models generate similar-looking images? 🤔 Our Diffusion Target (DT) visualization reveals the secret to diversity. It is the very first time-step! And—there is a simple, training-free way to make them more diverse! Here is how: 🧵👇

Are induction heads necessary for the emergence of in-context learning (ICL)? Their emergence coincides with a sharp ICL improvement, raising the hypothesis they may underlie much of ICL. However, we find that ICL beyond copying can emerge even when we suppress induction heads!

How do protein folding models turn sequence into structure? In "Mechanisms of AI Protein Folding in ESMFold", we find properties like charge and distance encoded in interpretable, steerable directions. The trunk processes features in two phases: chemistry first, then geometry.

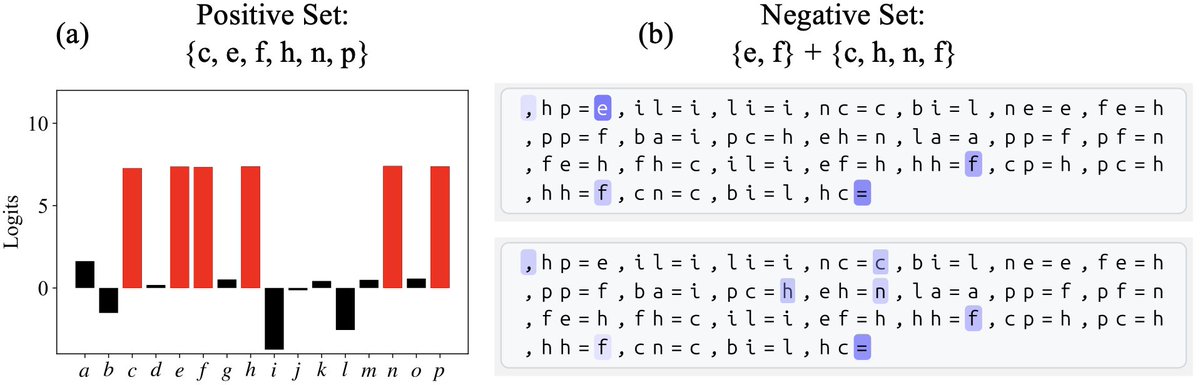

Can you solve this algebra puzzle? 🧩 cb=c, ac=b, ab=? A small transformer can learn to solve problems like this! And since the letters don't have inherent meaning, this lets us study how context alone imparts meaning. Here's what we found:🧵⬇️
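For readers who want to check the puzzle by hand, here is a minimal brute-force sketch. It assumes the letters stand for distinct elements of a group, and it uses the cyclic group of integers under addition mod n purely as an illustrative choice. The function name `solve_puzzle` is hypothetical, and this is not the transformer's method, only a sanity check on the answer.

```python
from itertools import permutations


def solve_puzzle(n=3):
    """Brute-force cb=c, ac=b over Z_n (addition mod n), assuming distinct letters.

    Returns the set of letters that the product ab can equal across all
    assignments consistent with the two given facts.
    """
    def op(x, y):
        # Assumed group operation: addition modulo n.
        return (x + y) % n

    answers = set()
    for a, b, c in permutations(range(n), 3):
        # Keep only assignments that satisfy both given equations.
        if op(c, b) == c and op(a, c) == b:
            names = {a: "a", b: "b", c: "c"}
            # Name the product by the letter it equals, or "?" if none.
            answers.add(names.get(op(a, b), "?"))
    return answers


result = solve_puzzle(3)
print(result)
```

Under these assumptions, cb=c forces b to be the identity, so ab can only be a, and the brute force agrees for the small cyclic groups tried here.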

@Marshwiggle119 @ericwtodd @davidbau @jannikbrinkmann @rohitgandikota The product is a product of arbitrary group elements, and groups don’t allow for an element which behaves like 0 under multiplication. (Even the multiplicative group of e.g. the real numbers has to explicitly exclude 0.)

Another strategy infers meaning using sets. We have seen models keep track of "positive" and "negative" sets that let them narrow their understanding of a symbol using Sudoku-style cancellation. Red bars (a) show the positive set and blue boxes (b) show the negative.
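The elimination logic itself can be sketched in a few lines. This is only an illustration of Sudoku-style cancellation with explicit sets, not a description of how the model computes it internally. The function `narrow_candidates` and the element labels are hypothetical.

```python
def narrow_candidates(universe, positive_sets, negative_sets):
    """Narrow the possible meanings of one symbol by set elimination.

    positive_sets: each set lists meanings the symbol could still have.
    negative_sets: each set lists meanings the symbol has been ruled out of.
    """
    candidates = set(universe)
    for allowed in positive_sets:
        # Positive evidence: the meaning must lie in every allowed set.
        candidates &= set(allowed)
    for excluded in negative_sets:
        # Negative evidence: cross out every ruled-out meaning.
        candidates -= set(excluded)
    return candidates


# Hypothetical example with four possible meanings.
universe = {"e0", "e1", "e2", "e3"}
positive = [{"e1", "e2", "e3"}, {"e2", "e3"}]
negative = [{"e3"}]
remaining = narrow_candidates(universe, positive, negative)
print(remaining)
```

In this toy case the positive sets leave two options and the negative set removes one, so a single meaning remains.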
