DataVoid

@DataPlusEngine

Independent ML researcher. The first step in knowing is admitting you don't

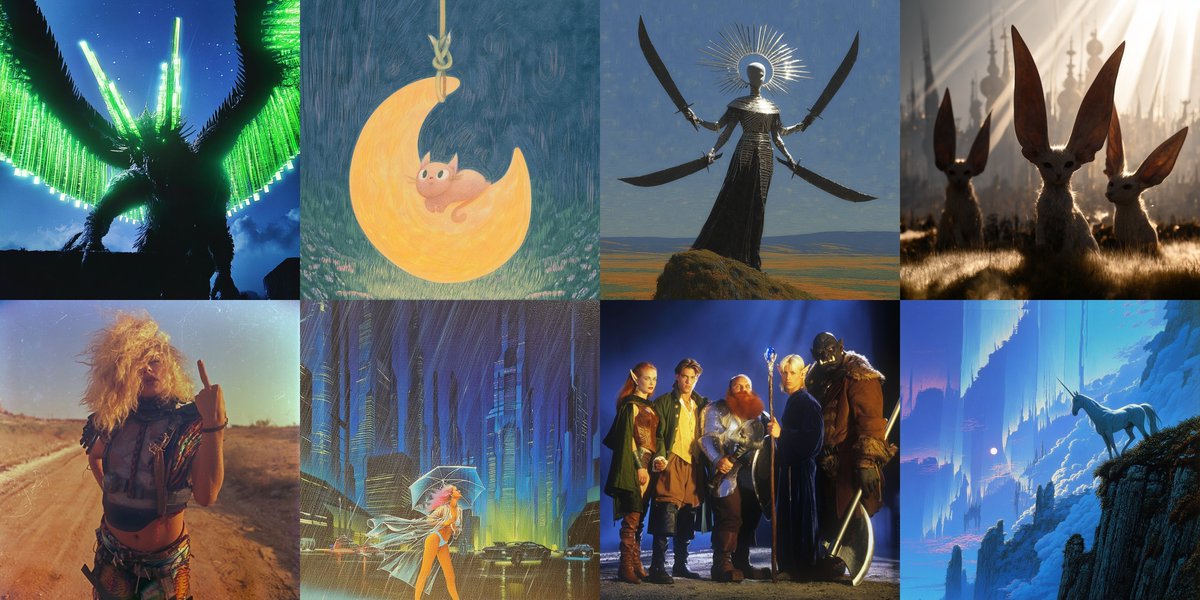

The long-awaited testing phase for @Midjourney V8 has officially begun, marking a massive leap forward for the generative art platform. This latest iteration promises a significant boost in efficiency, operating at five times the speed of its predecessors while maintaining a much tighter grip on complex prompt instructions. High-resolution creators will find the native 2K modes particularly useful for professional workflows. The update also brings more reliable text rendering and enhanced "sref" styling, allowing for a level of aesthetic consistency that was previously difficult to achieve. Personalization is a major focus of this release, with improved moodboard performance to help users fine-tune their unique visual language. It is an impressive step toward making AI-assisted design both faster and more intuitive.

DLSS 5 in Assassin's Creed Shadows 👀

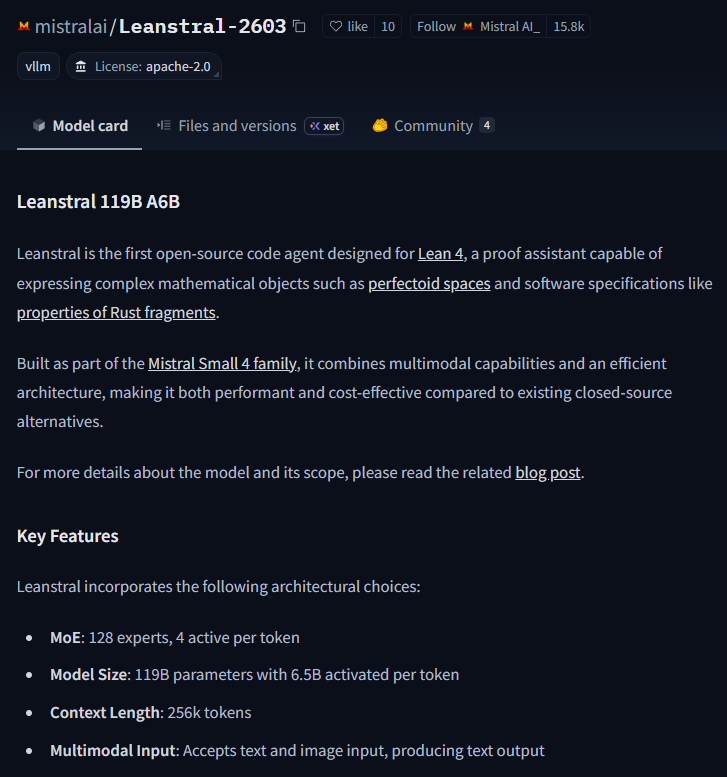

Some math prover model by Mistral? link is dead again, just got the notif

Scaling law experiments reveal a consistent 1.25× compute advantage across varying model sizes.

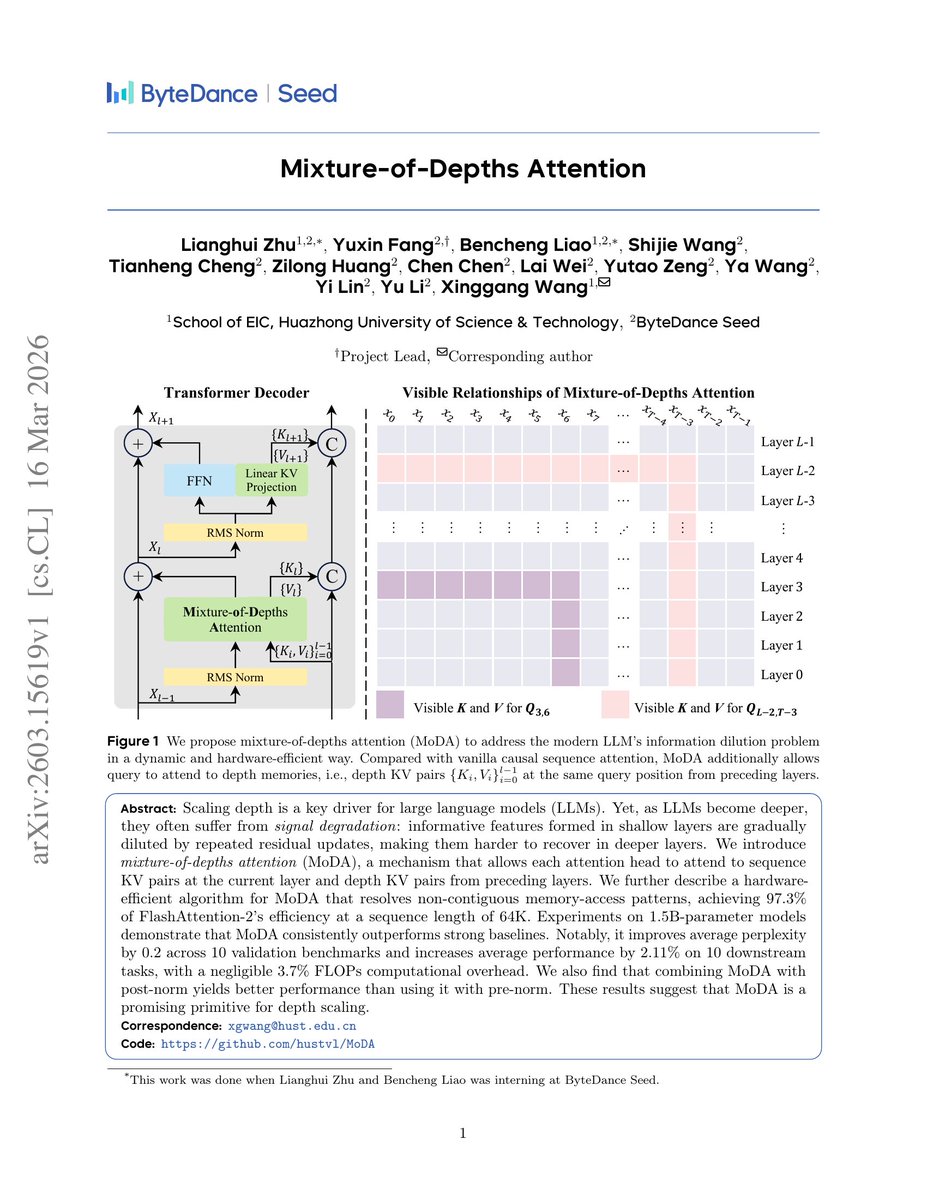

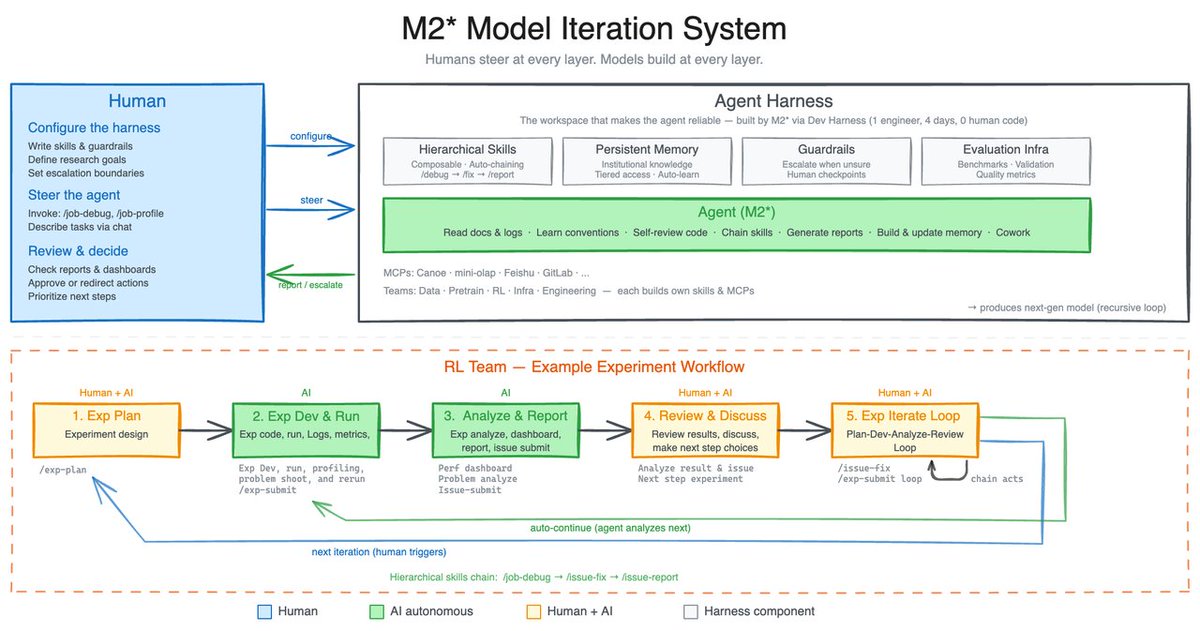

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation.

Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.

🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.
🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.
🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.
🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.

🔗 Full report: github.com/MoonshotAI/Att…
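
To make the contrast in the post concrete, here is a minimal pure-Python sketch of the general idea: a standard residual stream adds every earlier layer output with a fixed weight of 1, while a depth-wise attention step weights earlier outputs by a softmax over query-key scores. This is not the method from the linked report. The function names, the single learned `query` vector, and the dot-product scoring are all assumptions made for illustration, and Block AttnRes is not modeled.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def uniform_residual(layer_outputs):
    """Standard residual accumulation: every earlier output is summed with weight 1."""
    dim = len(layer_outputs[0])
    return [sum(h[i] for h in layer_outputs) for i in range(dim)]


def attention_residual(layer_outputs, query):
    """Hypothetical depth-wise attention over preceding layer outputs.

    Assumption: each earlier output acts as its own key and value, and
    `query` stands in for a learned per-layer vector. The weights depend
    on the layer outputs themselves, so the mix is input-dependent.
    """
    dim = len(query)
    scores = [
        sum(q * x for q, x in zip(query, h)) / math.sqrt(dim)
        for h in layer_outputs
    ]
    weights = softmax(scores)
    mixed = [
        sum(w * h[i] for w, h in zip(weights, layer_outputs))
        for i in range(dim)
    ]
    return mixed, weights


# Three toy "layer outputs" of width 2 (made-up numbers).
layers = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
summed = uniform_residual(layers)
mixed, weights = attention_residual(layers, query=[10.0, 0.0])
```

With a zero query the softmax weights are all equal, so the result reduces to the mean of the earlier outputs. A non-zero query shifts weight toward the layers whose outputs align with it, which is the "selective retrieval" the post describes.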