Opthymos

63 posts

@JanFrederickM

Singleton (complimentary) advocate. | Reason is and ought to be only the slave of the passions, and can never pretend to any other office than to serve and obey them.

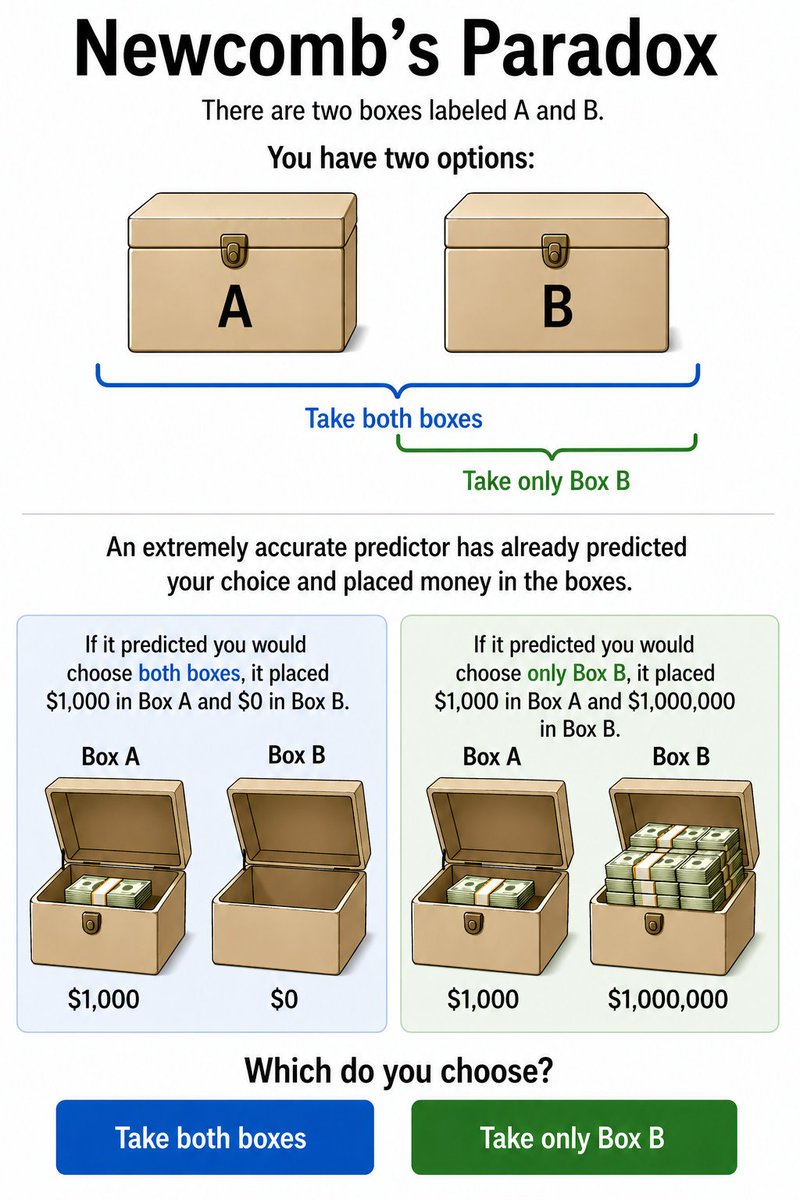

We're done rehashing the button question. Time to rehash Newcomb's Paradox. Are you a one-boxer or a two-boxer?

The essence of France's misfortune over the last two centuries is in this map. Instead of seeing its population multiplied by between 6 and 10 like other European countries, France only managed 2.5x. To understand what it was to be French in the 18th century, you have to imagine a contemporary France of 250 million inhabitants. That would only give us the density of the United Kingdom, with 3 times more arable land. Nothing exaggerated or impossible. Our relationship to the world would be slightly different. Of course we have a hangover. Great Britain, thanks to its colonies, even managed 40x. For us that would have meant 900 million descendants of French people. Which is not crazy. Our modest Quebec population has been multiplied by 100. The point of this reminder is not to nurture nostalgia but to bring a key issue back to the fore: fertility is collapsing massively, and this will reshuffle the cards of power and prosperity in the 21st century just as much as they were reshuffled in the 19th. We were the biggest losers worldwide of that previous demographic transition. Let's try not to be this time.

This you?

Going on 50 years since Searle's Chinese Room and absolutely no one has come up with a real non-coping refutation btw

@FrankBednarz @CoughsOnWombats It's even possible for someone to find something legitimately, but then fake data to bolster the discovery or cut corners. Consider the tale of physicist Victor Ninov, who started out doing that, then got greedier and tried to wholesale fake two elements en.wikipedia.org/wiki/Victor_Ni…

🚨 63% of Americans support adopting a national popular vote for President.

@deepfates What are the best introductions collected in one place to the "points brought up by people like me, janus, xlr8, gyges" etc?

only sharing because this is public information, not meant as a dunk (highly impressive), but successful people tend to undersell their pasts