statik

2.8K posts

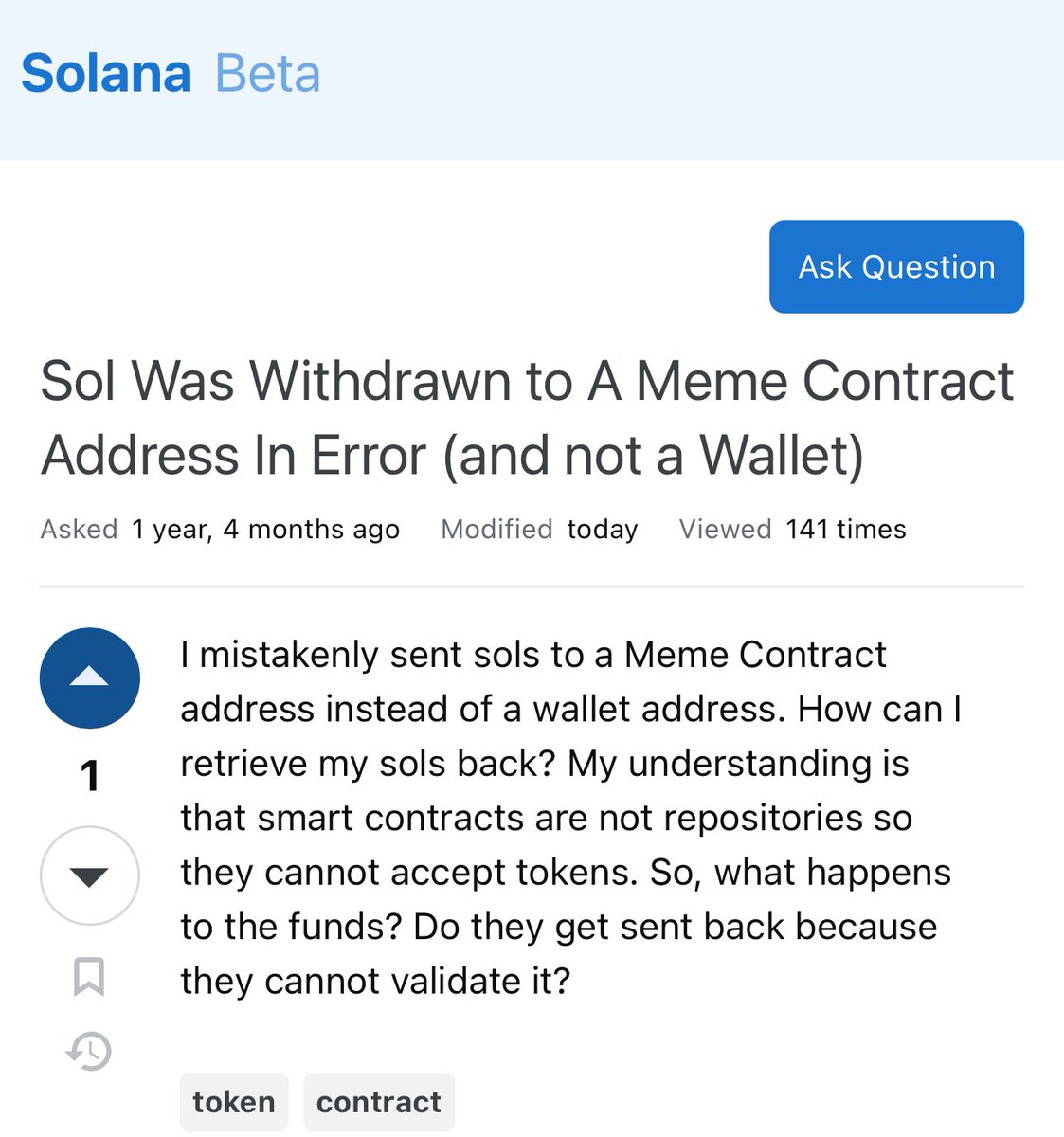

@TheSoftwareJedi @metasal @icefrog_sol @phantom @solflare @Backpack @JupiterExchange @solana it also likely wouldn't mean much to the average user if an address is "on curve" or/vs "is pda".

English

@metasal @icefrog_sol @phantom @solflare @Backpack @JupiterExchange @solana There’s no way to differentiate “not on curve” and “is a pda”. And age of address isn’t a field somewhere. You’d have to have a big ass index reference

English

@blockiosaurus i built something like this for ETHDenver in 2020. i have thought about it a bunch since then.

right now, i'd need a friend to come over and show my wife how to get my stuff -- assuming she can get in... haha.

how often would you want to "attest" you are still alive?

English

I for one am shocked that the DAO downvoted a proposal to give itself less money.

Reminder -- the DAO is using its authority to take rent SOL from old NFTs. Imagine Anza making Solana rent cheaper and then proceeding to take all the overpaid rent SOL for themselves.

Metaplex Foundation@MetaplexFndn

Proposal [MTP-001] “Handling of Resize Funds” was not approved by the Metaplex DAO. Per the DAO’s guidelines, [MTP-002] by @josipvolarevic will go live for vote tomorrow, May 22 through May 29. github.com/metaplex-found…

English

@creativity1st @nickfrosty you make a great point in regards to indexing docs.

there is nothing that breaks down what you need and how to get started.

English

the idea is to have index the cumulative fees for each of the blocks and the cumulative CU used by each block, and plot the ratio of fees/CU as time goes on

You are correct that I'm using typescript, and yes, I'm aware of the performance bottlenecks

The problems I have are the following:

1) Dev UX for me was horrible. Solana Web3.js has a bunch of methods that simply fail (e.g. getBlock with jsonParsed or base64 encoding just crashes). This is not OK!

2) I understand that the architecture of Solana requires optimizations (specifically due to large amounts of data passed across the network), but why is there no filtering options for developers that don't need 1300 voting txs every block? See this issue - I'm not the first one to talk about it: github.com/solana-labs/so…

Essentially, the chain forces developers to pay out of their pocket in order to comfortably develop their application because the core devs don't want to implement filtering on the node level!

3) There are literally no tutorials on how to how to index Solana blocks. Probably this speaks to my engineering abilities (or a lack of thereof), but having no support for your dev community is a big no-no, especially when the task is so common across the blockchain devs

4) I'm more than OK using Rust, but for some people it means learning a whole new language just so that you can fit into the performance requirements. People that are hobbyists are very likely to drop the project if they have to learn a new language just to output a chart on the webpage.

Sorry for the rant, it is just that I had way higher expectations for a chain that often boasts about their engineering culture.

English

SOL is awful for builders (or I'm lacking skills to dev properly on it)

Might be a skill issue, so please read on about my research, and I would be happy if I'm wrong (especially if you can point to a Github repo that does the trick with a simple HTTPS RPC)

So, here goes:

The latency for the blocks is too high and simply querying the RPC is not fast enough. Node filters are too rigid to give me only fee and CU block data, and because of that I can't reach the escape velocity of processing a block in <400ms

After asking Mert (Helius CEO), he recommended to use gRPC connection straight to the node that will give us streaming block data. QuickNode offers gRPC for 500$ a month, which is a no-go IMO

I've researched multiple platforms trying to find a GraphQL-like API to reduce the number of data that is streamed to the indexer, however, it seems like most of them would be either too expensive to use (i.e. The Graph Substreams, however this might be a skill issue, didn't optimize it when testing it manually) or don't offer the functionality that I want to

Things that still could be tried: using Dune to fetch already processed data (I'm yet to research whether their free plan would do the trick for me) + pull out an actual profiler and try to see whether JSON deserialization takes most of the time inside of the axios library. If deserialization is the bottleneck (and not the latency), I would actually consider rewriting this specific indexer in Rust (this is not a joke)

So, in the end of the day, you have to pay big bucks to stay up to date with SOL. This is some next-level pay-2-win shit IMO - so much for engineering culture for Solana

English