OpenBMB

753 posts

@OpenBMB

OpenBMB (Open Lab for Big Model Base) aims to build foundation models and systems towards AGI. Connect with us: https://t.co/N9pevTnoOa

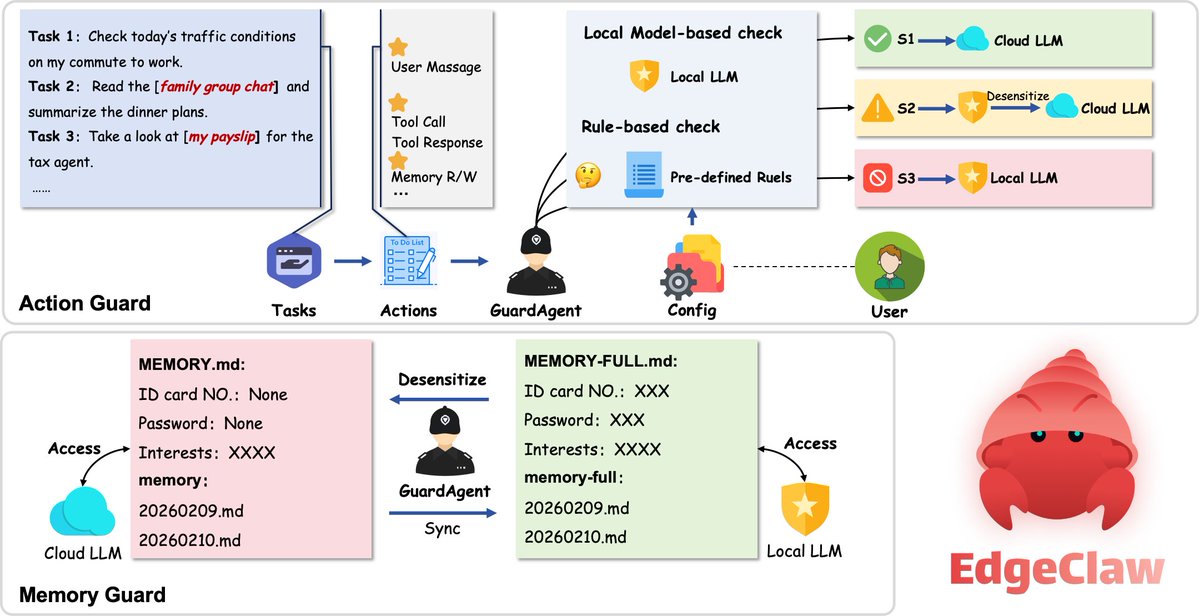

(1/2) 🦞 Using @openclaw but worried about sending sensitive data to the cloud? 🤔
Meet #EdgeClaw: a dedicated Local Routing Layer for #OpenClaw that handles data sensitivity and task complexity on the edge.
💻 It’s a drop-in enhancement that reactivates your local hardware to act as a Privacy Guard & Cost Judge.
3-Tier Security (Regex + Local LLM Engine)
🟢 S1 (Safe): Transparent passthrough to cloud.
🟡 S2 (Sensitive): On-device PII redaction before forwarding.
🔴 S3 (Private): 100% Local inference. Cloud only sees a 🔒 placeholder.
🧠 #LLM-as-Judge Routing
Classifies requests locally and routes to the ideal model tier (e.g., #MiniCPM ➡️ #GPT-4o/#Claude), optimizing resource allocation and reducing unnecessary cloud dependency. 📉
Learn more 🔗 github.com/openbmb/edgecl… #EdgeAI #LLM #Privacy #EdgeClaw #OpenClaw
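
For readers who want a concrete picture of the S1/S2/S3 tiers described above, here is a minimal Python sketch. It is not EdgeClaw's code: the regex patterns, the keyword list, the `<private>` placeholder string, and the names `classify`, `redact`, and `route` are all assumptions made for illustration, and the local-LLM half of the classifier mentioned in the post is left out entirely.

```python
import re
from dataclasses import dataclass

# Hypothetical rules: the post does not say which patterns EdgeClaw uses.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]?\d{3}[-.\s]?\d{4}\b"),
}
PRIVATE_KEYWORDS = ("password", "api key", "private key", "medical record")
PLACEHOLDER = "<private>"  # stand-in for the lock placeholder in the post


@dataclass
class Routed:
    tier: str           # "S1", "S2", or "S3"
    target: str         # "cloud" or "local"
    cloud_payload: str  # the only text the cloud would ever see


def classify(text: str) -> str:
    """Regex/keyword tiering only; a local LLM judge could refine this."""
    lowered = text.lower()
    if any(keyword in lowered for keyword in PRIVATE_KEYWORDS):
        return "S3"
    if any(pattern.search(text) for pattern in PII_PATTERNS.values()):
        return "S2"
    return "S1"


def redact(text: str) -> str:
    """Replace each PII match with a bracketed label such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def route(text: str) -> Routed:
    tier = classify(text)
    if tier == "S3":
        # Private: answer locally, expose only a placeholder to the cloud.
        return Routed(tier, "local", PLACEHOLDER)
    if tier == "S2":
        # Sensitive: redact on-device, then forward.
        return Routed(tier, "cloud", redact(text))
    # Safe: pass through unchanged.
    return Routed(tier, "cloud", text)


if __name__ == "__main__":
    for prompt in (
        "What is the capital of France?",
        "Email alice@example.com about lunch",
        "My password is hunter2",
    ):
        print(route(prompt))
```

Checking the private tier first means a request that contains both a secret and an email address never leaves the device, which matches the ordering implied by the tier descriptions.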

✨vLLM v0.17.0 is out with support for #MiniCPM-o 4.5! 🚀 Now you can serve the latest 9B #omnimodal model with vLLM’s high-throughput serving engine. For developers, this means scaling real-time, full-duplex conversations (vision, speech, and text) is now production-ready. @vllm_project 👏Check the release: github.com/vllm-project/v… #LLM #vLLM #MiniCPM #OpenSource

From lab to open-source: A new milestone for AI-driven education. 🎓
🤗 We’ve been closely following the MAIC project at Tsinghua University, and we’re thrilled to see it now open-sourced as #OpenMAIC.
✨ This isn't just another chatbot; it takes Multi-Agent orchestration to the next level by building a fully interactive classroom where AI instructors and peers collaborate in real-time.
What makes it technically impressive:
🛠️ Complex Orchestration: Leveraging #LangGraph to manage spontaneous interactions, like #AI students "raising hands" during a live lecture.
🧠 Structured Planning: A dedicated "Plan Agent" that transforms raw PDFs into coherent, logically sequenced pedagogical flows.
💻 Beyond Text: A masterclass in GenUI implementation, featuring synchronized TTS, laser pointers, and real-time whiteboard demonstrations.
🥳 If you’re building complex, multi-modal #Agent workflows, this repo is a treasure trove of engineering insights.
🖥️ Explore the project: github.com/THU-MAIC/OpenM…
📰 Read the research: jcst.ict.ac.cn/article/doi/10…

🚀 vLLM v0.17.0 is here! 699 commits from 272 contributors (48 new!)
This is a big one. Highlights:
⚡ FlashAttention 4 integration
🧠 Qwen3.5 model family with GDN (Gated Delta Networks)
🏗️ Model Runner V2 maturation: Pipeline Parallel, Decode Context Parallel, Eagle3 + CUDA graphs
🎛️ New --performance-mode flag: balanced / interactivity / throughput
💾 Weight Offloading V2 with prefetching
🔀 Elastic Expert Parallelism Milestone 2
🔧 Quantized LoRA adapters (QLoRA) now loadable directly

mlx-vlm v0.4.0 is here 🚀
New models:
• Moondream3 by @vikhyatk
• Phi-4-reasoning-vision by @MSFTResearch
• Phi4-multimodal-instruct by @MSFTResearch
• Minicpm-o-2.5 (except tts) by @OpenBMB
What's new:
→ Full weight finetuning + ORPO h/t @ActuallyIsaak
→ Tool calling in server
→ Thinking budget support
→ KV cache quantization for server
→ Fused SDPA attention optimization
→ Streaming & OpenAI-compatible endpoint improvements
Fixes:
• Gemma3n
• Qwen3-VL
• Qwen3.5-MoE
• Qwen3-Omni h/t @ronaldseoh
• Batch inference, and more.
Big shoutout to 7 new contributors this release! 🙌
Get started today:
> uv pip install -U mlx-vlm
Leave us a star ⭐️ github.com/Blaizzy/mlx-vl…

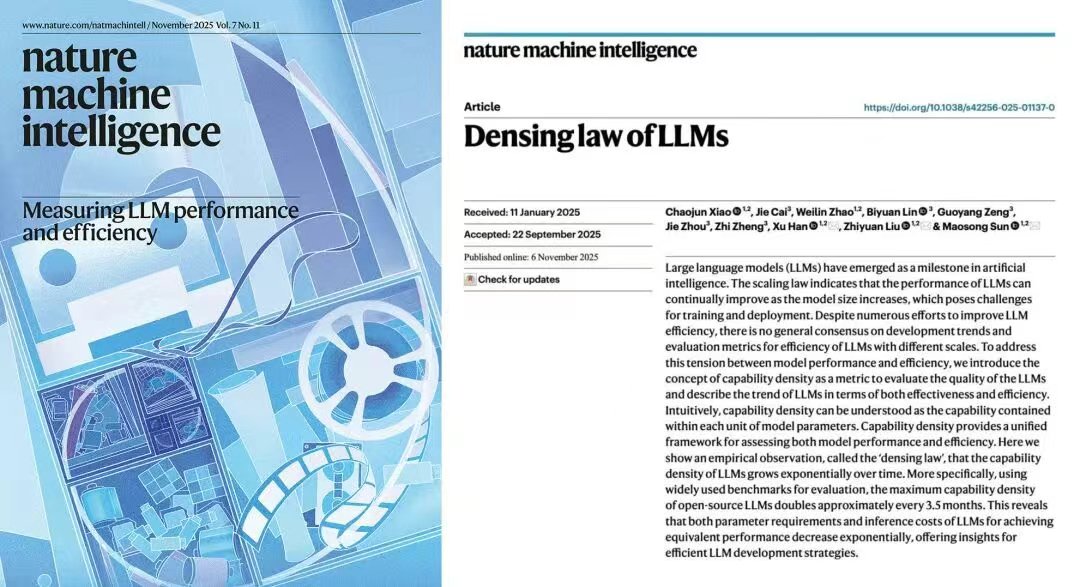

@Alibaba_Qwen Impressive intelligence density