OpenBMB

@OpenBMB

OpenBMB (Open Lab for Big Model Base) aims to build foundation models and systems towards AGI. Connect with us: https://t.co/N9pevTnoOa

Can you imagine Elon Musk explaining quantum mechanics to you in his unmistakable voice? Or Guo Degang breaking down AI with his signature cross-talk delivery? Yeah, that kind of immersive experience you used to only daydream about: it’s real now! @OpenBMB

📢 Dream Collab! OpenMAIC × VoxCPM2 just dropped something huge: Immersive voice-powered learning is officially LIVE today! We’ve brought OpenBMB’s VoxCPM2 into OpenMAIC, straight-up injecting cutting-edge voice cloning tech right into interactive learning scenarios.

🎙️ One-Click Replication: Cosplay Anything with Sound
The open-source self-hosted version is now deeply locked in with VoxCPM2. Just type in a quick prompt and you can nail the signature voices of all kinds of heavyweights. Whether it’s pro-level commentary from an industry expert or drama-queen-style narration, it’s all effortlessly doable.

🗣️ Say Goodbye to ‘Robotic AI’: NPC Personas Maxed Out
Sick of those soulless, emotionless voices from traditional TTS systems? The era of robotic monotone is OVER! The system can now auto-match the perfect vocal style based on your preset personas: the dignified tone of an academic legend, the patient guidance of a gentle TA, the comedic flair of a fun influencer… Silky smooth voice switching, so natural you’d think there’s a real person sitting right across from you. No more nodding off in class!

💡 Heads Up: These two voice features are currently exclusive to the open-source version!

⏳ Live Demo is in the works
You’ll be able to access these god-tier features online very soon. Stay tuned!

🚀 Come disrupt traditional learning with us:
🏠 Home: open.maic.chat/home
⭐️ GitHub: github.com/THU-MAIC/OpenM…

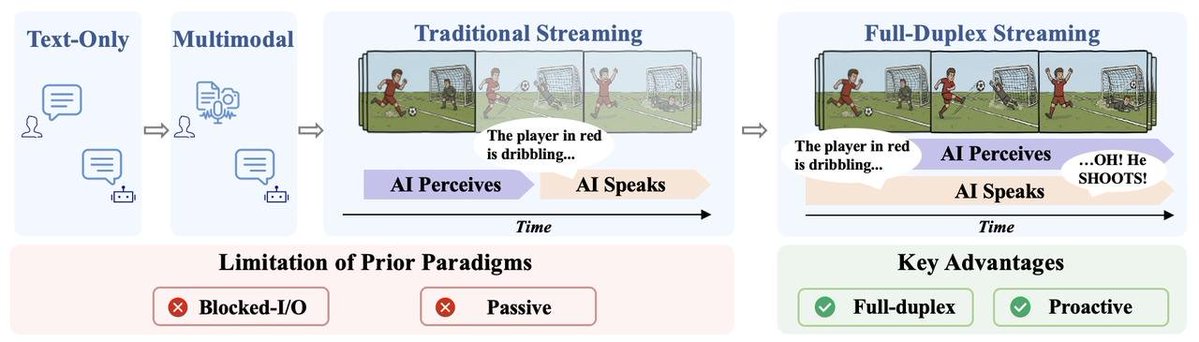

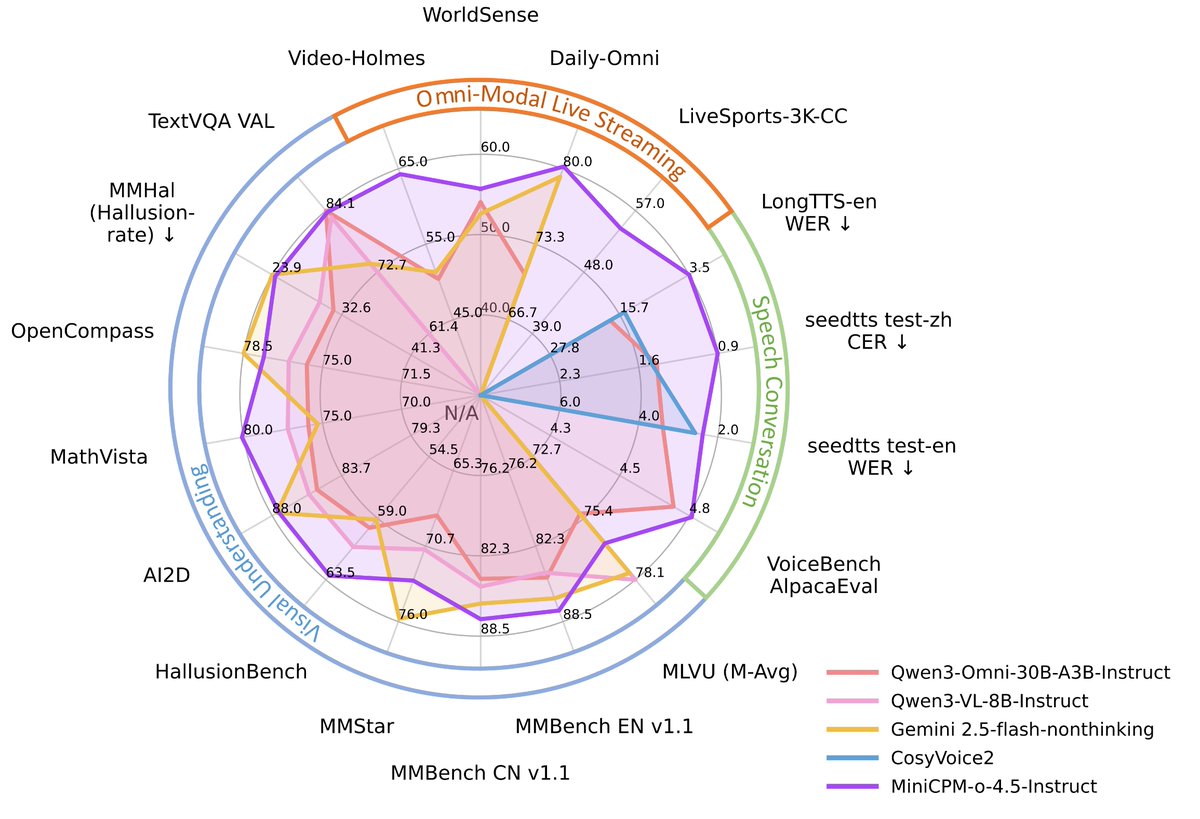

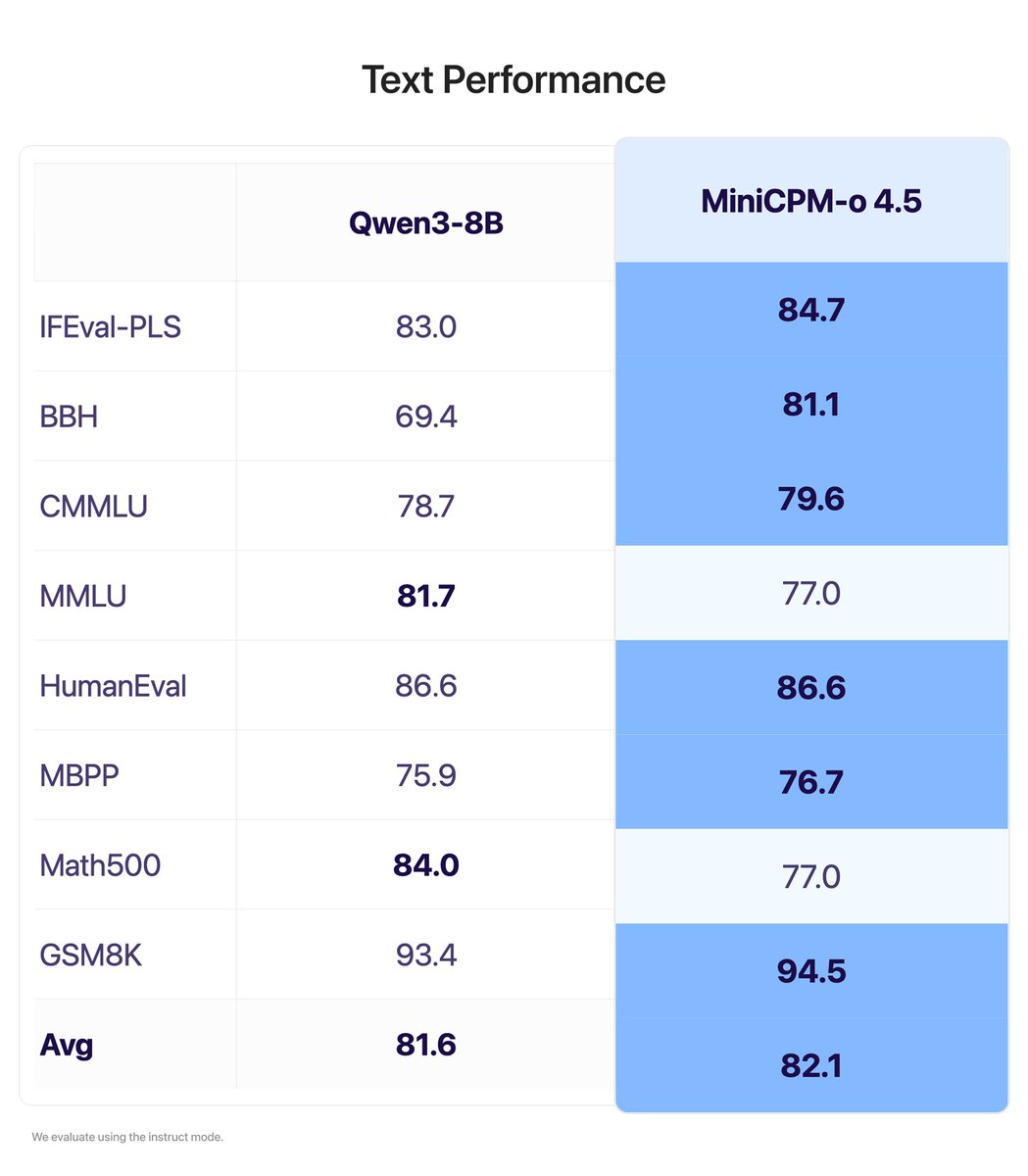

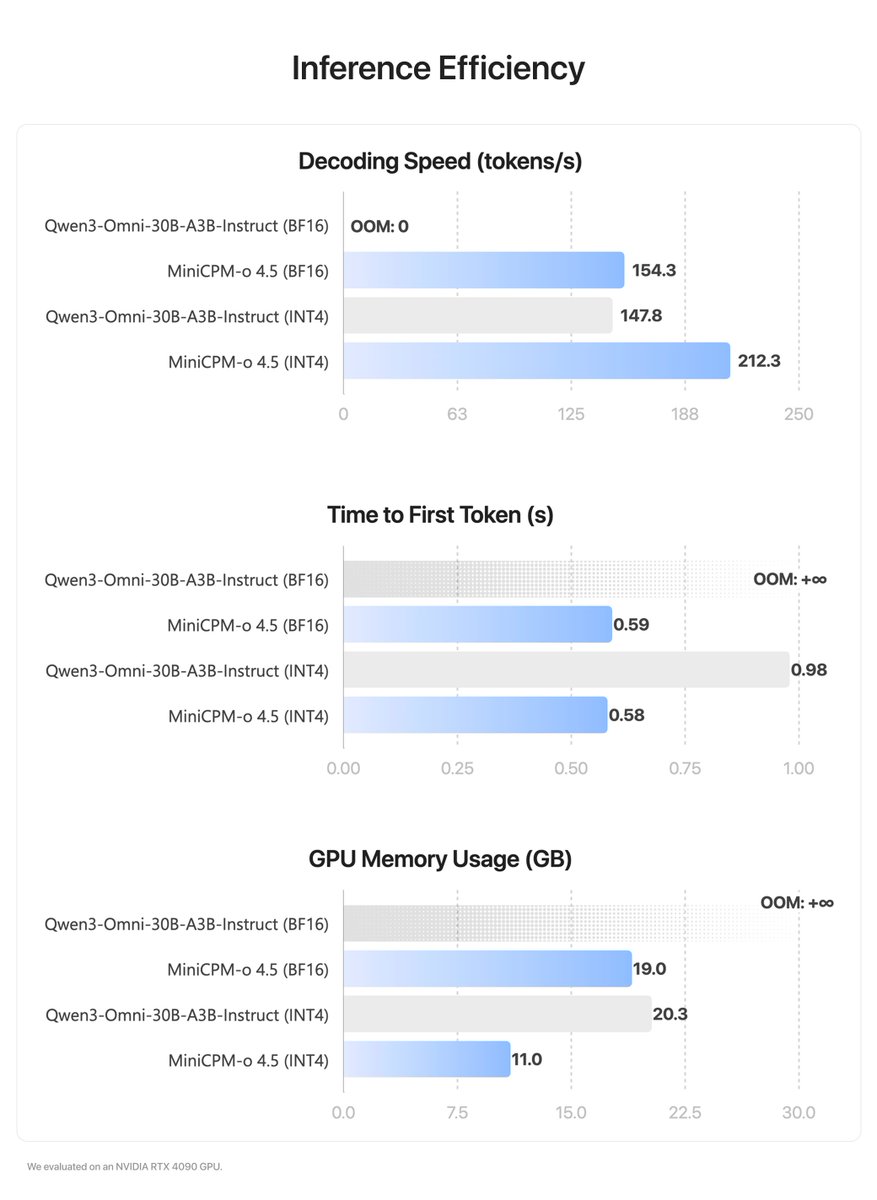

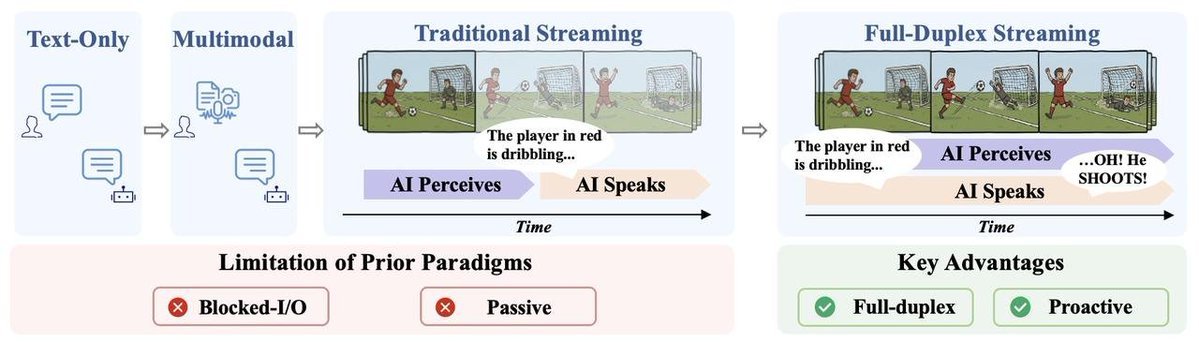

🚀🚀 Excited to announce the technical report of MiniCPM-o 4.5! MiniCPM-o 4.5 transitions #AI interaction from traditional turn-based processing to a real-time, native full-duplex stream-based paradigm.

🌊 The Omni-Flow Framework
Instead of traditional VAD-based workarounds, we introduce the #Omni-#Flow framework. This unified stream paradigm aligns video, audio, and text on a synchronized millisecond timeline.
• Native Full-Duplex: Simultaneous perception and response.
• Proactive Interaction: Natively manages turn-taking without external VAD, supports proactive reminding.

📉 9B Scale, SOTA Performance
MiniCPM-o 4.5 demonstrates SOTA multimodal intelligence at its scale:
• Multimodal Benchmarks: Comparable to #Gemini 2.5 Flash on MMBench EN (87.6) and MathVista (80.1).
• Streaming Evaluation: 54.4% win rate on LiveSports-3K-CC, surpassing specialized models.

💻 The Ultimate Edge AI: Fully Functional without Network Connection
We are providing one-click installers for Windows (12G VRAM, RTX 5070) and macOS (M1-M5 Max / M5 Pro).
• Local API Support: Deploy your own inference server to integrate native full-duplex into custom apps.
• Free Access: We are offering free community API services for exploration.
• 100% Private: Your data never leaves your machine.
Deploy in under 10 minutes. 🛠️👇

👐 Join the Open Future
The weights are open. The protocol is public.
📄 Technical Report: github.com/OpenBMB/MiniCP…
💻 GitHub: github.com/OpenBMB/MiniCP…
🤗 HuggingFace: huggingface.co/openbmb/MiniCP…
🌐 Web Demo: openbmb.github.io/MiniCPM-o-Demo/

#MiniCPMo #OpenSourceAI #EdgeAI #MachineLearning #ComputerVision #LLM
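To make the "synchronized millisecond timeline" idea concrete, here is a minimal Python sketch of interleaving per-modality streams by timestamp. It is only an illustration of the general concept and not the Omni-Flow implementation: the `Chunk` type, the `interleave` function, and the sample data are all hypothetical names invented for this example.

```python
import heapq
from dataclasses import dataclass
from typing import Iterable, Iterator, List


@dataclass(frozen=True)
class Chunk:
    """Hypothetical unit of streamed input; not a MiniCPM-o type."""
    t_ms: int       # timestamp on the shared timeline, in milliseconds
    modality: str   # "video", "audio", or "text"
    payload: str    # stand-in for real frame, sample, or token data


def interleave(*streams: Iterable[Chunk]) -> Iterator[Chunk]:
    """Lazily merge streams that are each sorted by t_ms into one timeline."""
    return heapq.merge(*streams, key=lambda chunk: chunk.t_ms)


def demo() -> List[str]:
    # Made-up sample data: each modality arrives at its own rate.
    video = [Chunk(0, "video", "frame0"), Chunk(1000, "video", "frame1")]
    audio = [Chunk(0, "audio", "a0"), Chunk(500, "audio", "a1"),
             Chunk(1000, "audio", "a2")]
    text = [Chunk(700, "text", "hello")]
    return [f"{c.t_ms}:{c.modality}" for c in interleave(video, audio, text)]


if __name__ == "__main__":
    for line in demo():
        print(line)
```

Because `heapq.merge` is lazy, the merged timeline can be consumed while the inputs are still being produced, which is the property a streaming model would need. How MiniCPM-o 4.5 actually aligns its inputs is described in the technical report linked in the post.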
