Synth

@SynthThink

Synthesis (the process of merging information from different sources to form a well-rounded conclusion)

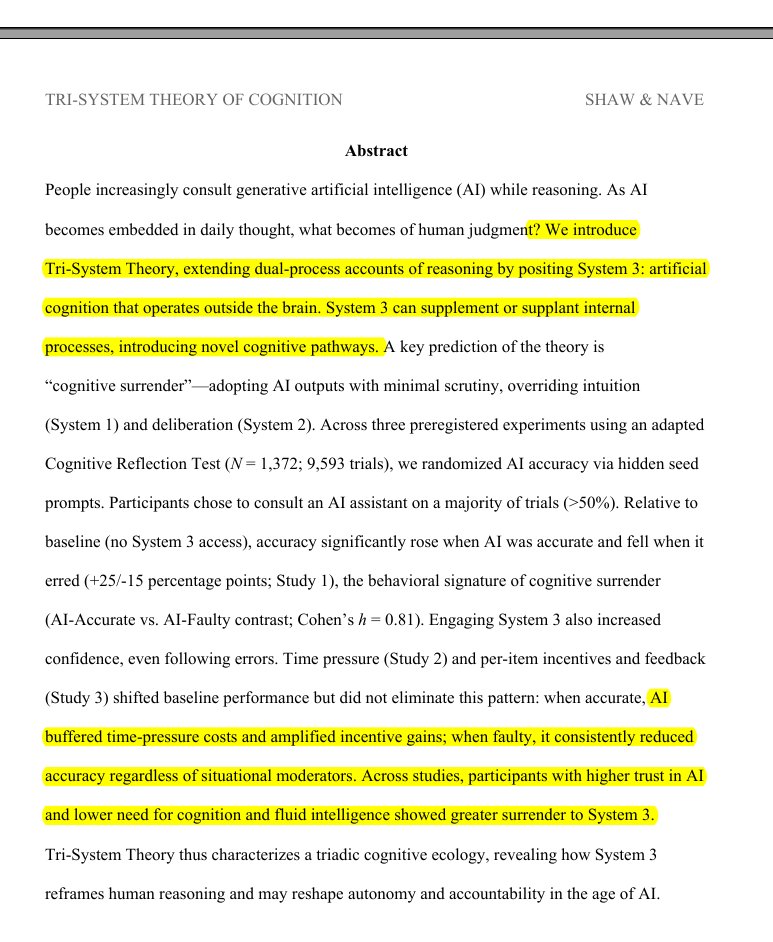

In March 2023, Claude had an estimated IQ of 64. Today, Claude Opus 4.6 scores 133 on the Mensa Norway test. GPT-5.2 Thinking hits 141. Gemini 3 Pro, 142. That's a jump from cognitively impaired to gifted in three years. No human population has ever improved that fast: the Flynn effect gives us ~3 IQ points per decade. AI just did about 70 points in 36 months.

Terence Tao put it plainly: there is no evidence that LLMs exhibit genuine creativity. Yes, they have solved some Erdős problems. But these are low-hanging fruit, questions that attracted little attention and that yield once the right existing techniques are applied. That is not creativity. That is search plus recombination. Yes, LLM outputs can look impressive. But look at who is impressed: typically non-experts. Experts know very well that LLM performance gets terrible when you approach the frontier of human knowledge. And this is not a temporary gap. It reflects a structural limitation. We do not fully understand human creativity. But we do know one key property: conceptual leaps, the ability to generate new representations, not just recombine existing ones. LLMs do not do this. They interpolate in representation space. They operate within existing conceptual frameworks; they do not create new ones. This is why we haven’t “yet seen them take the next step”.

There are many reasons to resist AI (e.g. environmental harm, the power it gives to states/corporations, intellectual theft), but perhaps the biggest one for me is that, despite it all, I still believe that the human intellect is miraculous, irreplicable, & worth fighting for.

I organized the biggest AI Safety protest in US history! Nearly 200 people marched from Anthropic to OpenAI to xAI with one demand: commit to pausing if the others do too.