Discrete Diffusion Reading Group


@diffusion_llms

📚 Journal club on discrete diffusion models 🎥 Replays available on YouTube! Contact: [email protected] Hosted by @ssahoo_, @jdeschena, @zhihanyang_

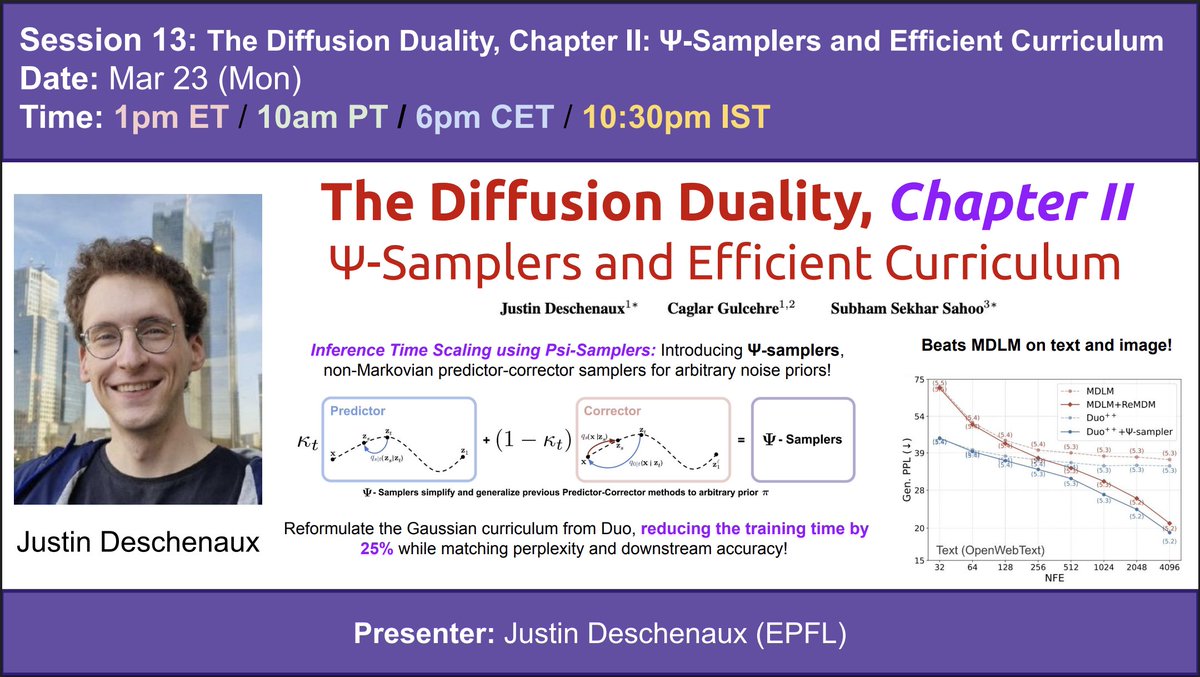

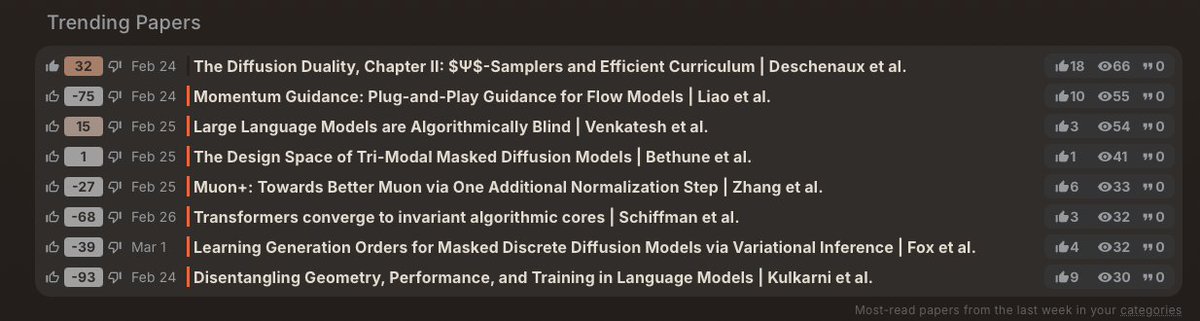

📢 Mar 23 (Mon): The Diffusion Duality, Chapter II: Ψ-Samplers and Efficient Curriculum ☯️The Diffusion Duality (Duo) (ICML 2025) showed that uniform-state discrete diffusion arises from Gaussian diffusion. 🔮The new Chapter II paper (ICLR 2026) introduces Ψ-samplers: non-Markovian predictor-corrector samplers for arbitrary noise priors! Unlike ancestral sampling, which plateaus, Ψ-samplers exhibit improved test-time scaling, beating MDLM on language generation (OpenWebText) and image generation (CIFAR-10). ⚡️The authors also reformulated the Gaussian curriculum from Duo, reducing its training time by 25% while matching perplexity and downstream accuracy. This Monday, Justin Deschenaux (@jdeschena) will present his paper, published with collaborators Caglar Gulcehre (@caglarml) and Subham Sahoo (@ssahoo_). Paper link: arxiv.org/abs/2602.21185
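The duality claim can be checked numerically: take the argmax of a noisy Gaussian latent built from a one-hot token and look at the resulting distribution over tokens. The sketch below is an illustration only, not the paper's code. It assumes a variance-preserving latent (sigma = sqrt(1 - alpha²)), and the helper names `empirical_argmax_marginal` and `implied_discrete_alpha` are hypothetical. It does not reproduce Duo's exact mapping from the Gaussian schedule to the discrete one.

```python
import numpy as np

def empirical_argmax_marginal(true_token, vocab_size, alpha, n_samples=200_000, seed=0):
    """Monte Carlo estimate of P(argmax(alpha * onehot + sigma * eps) = k) for every k.

    Assumes a variance-preserving latent: sigma = sqrt(1 - alpha**2).
    """
    rng = np.random.default_rng(seed)
    sigma = np.sqrt(1.0 - alpha**2)
    onehot = np.zeros(vocab_size)
    onehot[true_token] = 1.0
    latents = alpha * onehot + sigma * rng.standard_normal((n_samples, vocab_size))
    tokens = latents.argmax(axis=1)  # "discretize" each Gaussian latent
    return np.bincount(tokens, minlength=vocab_size) / n_samples

def implied_discrete_alpha(marginal, true_token):
    """Fit the uniform-state form a * onehot + (1 - a) / K by solving p_true = a + (1 - a) / K."""
    k = len(marginal)
    return (marginal[true_token] - 1.0 / k) / (1.0 - 1.0 / k)

for alpha in (0.3, 0.6, 0.9):
    p = empirical_argmax_marginal(true_token=2, vocab_size=8, alpha=alpha)
    others = np.delete(p, 2)  # mass on every wrong token
    print(f"alpha={alpha}: p_true={p[2]:.3f}, "
          f"other tokens in [{others.min():.3f}, {others.max():.3f}], "
          f"implied discrete alpha={implied_discrete_alpha(p, 2):.3f}")
```

By symmetry, all wrong tokens receive equal mass (up to Monte Carlo noise). This is exactly the shape of a uniform-state discrete marginal: some mass stays on the clean token and the rest is spread uniformly.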


I'm learning that Flow Matching is not only a bonkers idea, but was also proposed by 3 different groups simultaneously. Goes to show that, when ideas are ripe, they surface. We are mere passive vessels. That's my Monday afternoon nihilistic rant.

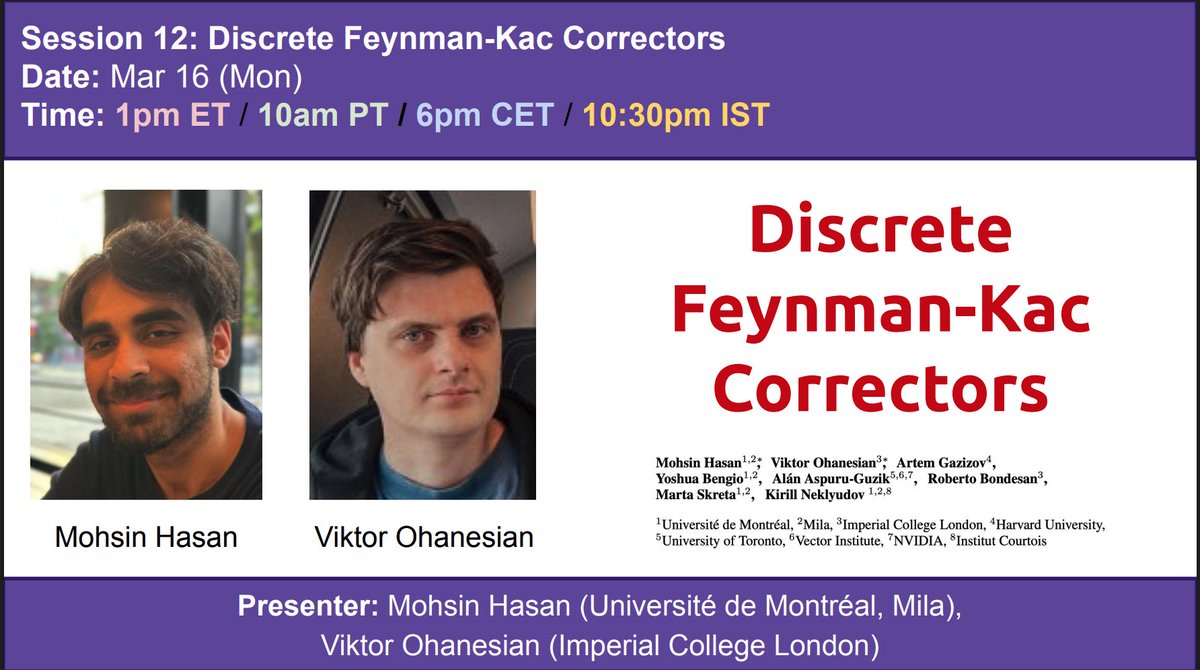

📢Mar 16 (Mon): Discrete Feynman-Kac Correctors 🤔Discrete diffusion models are powerful, but out of the box they give little control over the target distribution!! 🔑Discrete Feynman-Kac Correctors fix this by using Sequential Monte Carlo (SMC) to modify the distribution by:
- Annealing
- Composing multiple models, or
- Tilting with external reward functions.
All at inference time, with no retraining needed! 💡This unlocks things like boosting coding performance, sampling across a range of temperatures in the Ising model, and generating higher-quality protein sequences. This Monday, Mohsin Hasan (Université de Montréal, Mila) (hasanmohsin.github.io) and Viktor Ohanesian (Imperial College London) (@OhanesianViktor, scholar.google.com/citations?user…) will co-present their jointly led paper Discrete Feynman-Kac Correctors. Collaborators: Artem Gazizov (Harvard), @Yoshua_Bengio, @A_Aspuru_Guzik, Roberto Bondesan (Imperial College London), @martoskreto, @k_neklyudov Paper link: arxiv.org/abs/2601.10403
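All three modifications can be written as an importance log-weight on samples from the base model. SMC applies this reweighting, followed by resampling, repeatedly along the sampling trajectory. The sketch below is a single-step sampling-importance-resampling toy on one categorical variable, not the paper's Feynman-Kac corrector. The distributions `base` and `other`, the `reward` vector, and all helper names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab = 5
base = np.array([0.4, 0.3, 0.15, 0.1, 0.05])   # toy "model" we can sample from
other = np.array([0.1, 0.1, 0.2, 0.3, 0.3])    # hypothetical second model
reward = np.array([0.0, 0.0, 1.0, 2.0, 3.0])   # hypothetical external reward

# Log-weight = log(target / base), up to a constant, for each modification.
log_weight_fns = {
    "anneal (p^2)": lambda x: np.log(base[x]),      # p^2 / p = p
    "product (p * q)": lambda x: np.log(other[x]),  # p * q / p = q
    "tilt (p * exp(r))": lambda x: reward[x],       # p * e^r / p = e^r
}

def reweight_and_resample(particles, log_w, rng):
    """Normalize importance weights and draw a multinomial resample of the particles."""
    w = np.exp(log_w - log_w.max())  # subtract max for numerical stability
    w /= w.sum()
    idx = rng.choice(len(particles), size=len(particles), p=w)
    return particles[idx]

def exact_target(log_w_fn):
    """Closed-form target distribution, for comparison with the particle estimate."""
    unnorm = base * np.exp(log_w_fn(np.arange(vocab)))
    return unnorm / unnorm.sum()

n = 100_000
particles = rng.choice(vocab, size=n, p=base)  # samples from the base model only
results = {}
for name, fn in log_weight_fns.items():
    resampled = reweight_and_resample(particles, fn(particles), rng)
    empirical = np.bincount(resampled, minlength=vocab) / n
    results[name] = (empirical, exact_target(fn))
    print(name, np.round(empirical, 3), np.round(exact_target(fn), 3))
```

No model is retrained here. Only the weights change, which mirrors the "inference time only" point in the post.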


📢@CVPR 2026: first-ever tutorial dedicated to DISCRETE DIFFUSION 🔥 Part I: Consistency Models + Flow Maps - @JCJesseLai Part II: Discrete Diffusion - by me. ✨Few-step gen + inference-time scaling + live demos Co-orgs: @StefanoErmon @DrYangSong @mittu1204 @gimdong58085414 Full schedule + details👇 (1/3)

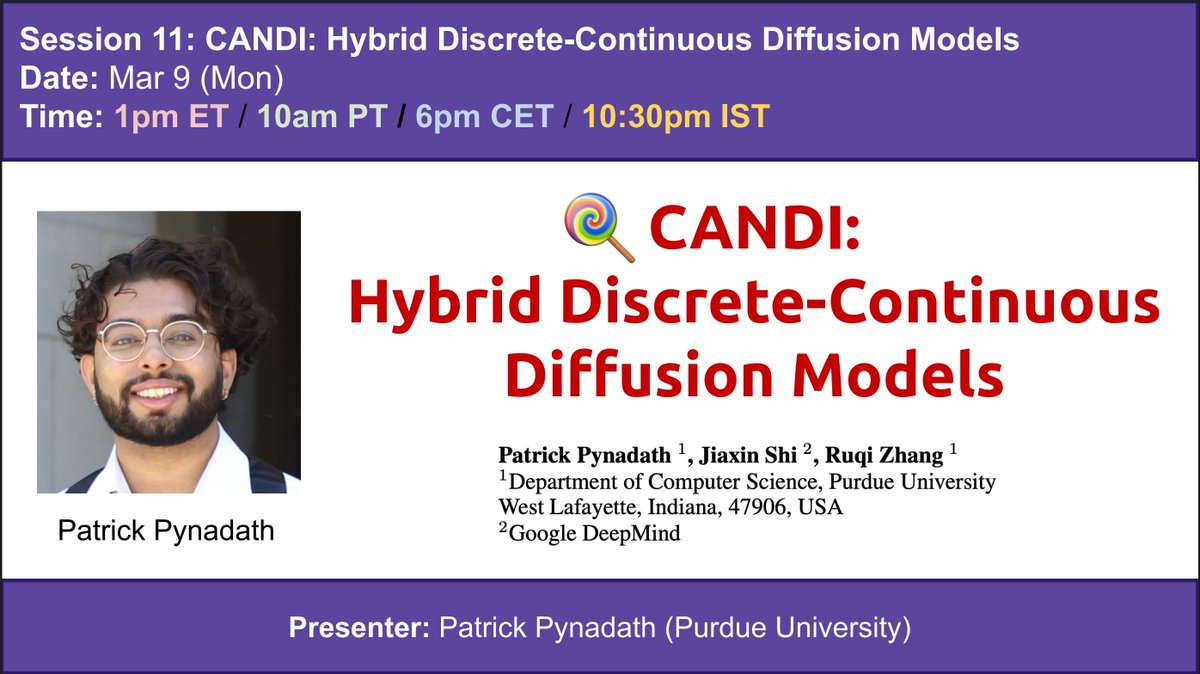

📢Mar 9 (Mon): CANDI: Hybrid Discrete-Continuous Diffusion Models 🤔Continuous diffusion dominates image generation. LLMs process text through continuous embeddings. So why does discrete diffusion still win for language? 🍬CANDI explains why — it’s a “temporal dissonance”: at large vocabulary sizes, Gaussian noise destroys token identity way before it meaningfully degrades the continuous signal. The model can either learn discrete conditional structure or continuous geometry, but not both simultaneously. 🔑The fix? Keep some tokens clean as anchors for discrete structure, corrupt the rest with Gaussian noise. Decoupling the two lets the model learn both simultaneously — enabling off-the-shelf classifier guidance and better low-NFE generation. This Monday, Patrick Pynadath (Purdue) (patrickpynadath1.github.io, @PatrickPyn35903) will present his paper CANDI: Hybrid Discrete-Continuous Diffusion Models. Collaborators: @thjashin and @ruqi_zhang Paper link: arxiv.org/abs/2510.22510
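The "temporal dissonance" can be seen in a few lines. Under additive Gaussian noise of scale sigma, the per-coordinate squared error of a noisy one-hot vector is sigma² for any vocabulary size V. However, the chance that its argmax still recovers the original token drops as V grows. The second function is a hypothetical version of the hybrid fix: a random subset of positions stays clean and the rest are noised. The paper's actual noise schedules and anchor selection are not reproduced, and all names here are illustrative.

```python
import numpy as np

def identity_retention(vocab_size, sigma, n_samples=4_000, seed=0):
    """Fraction of Gaussian-noised one-hot vectors whose argmax is still the original token.

    By symmetry the original token is placed at index 0.
    """
    rng = np.random.default_rng(seed)
    noisy = sigma * rng.standard_normal((n_samples, vocab_size), dtype=np.float32)
    noisy[:, 0] += 1.0  # add the one-hot signal for the original token
    return float((noisy.argmax(axis=1) == 0).mean())

def hybrid_corrupt(tokens, vocab_size, clean_frac, sigma, rng):
    """Hypothetical hybrid corruption: a random subset of positions stays as clean
    one-hot anchors; every other position gets additive Gaussian noise."""
    onehot = np.eye(vocab_size)[tokens]                 # (L, V)
    clean = rng.random(len(tokens)) < clean_frac        # anchor mask, shape (L,)
    noisy = onehot + sigma * rng.standard_normal(onehot.shape)
    return np.where(clean[:, None], onehot, noisy), clean

sigma = 0.5
for v in (2, 32, 1024):
    print(f"V={v:5d}: token identity retained {identity_retention(v, sigma):.3f}")

rng = np.random.default_rng(0)
x_t, clean = hybrid_corrupt(np.array([3, 1, 4, 1, 5]), vocab_size=8,
                            clean_frac=0.4, sigma=sigma, rng=rng)
```

At a fixed sigma, larger vocabularies lose token identity much sooner. The clean anchors give the model discrete context that does not depend on that noise level.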


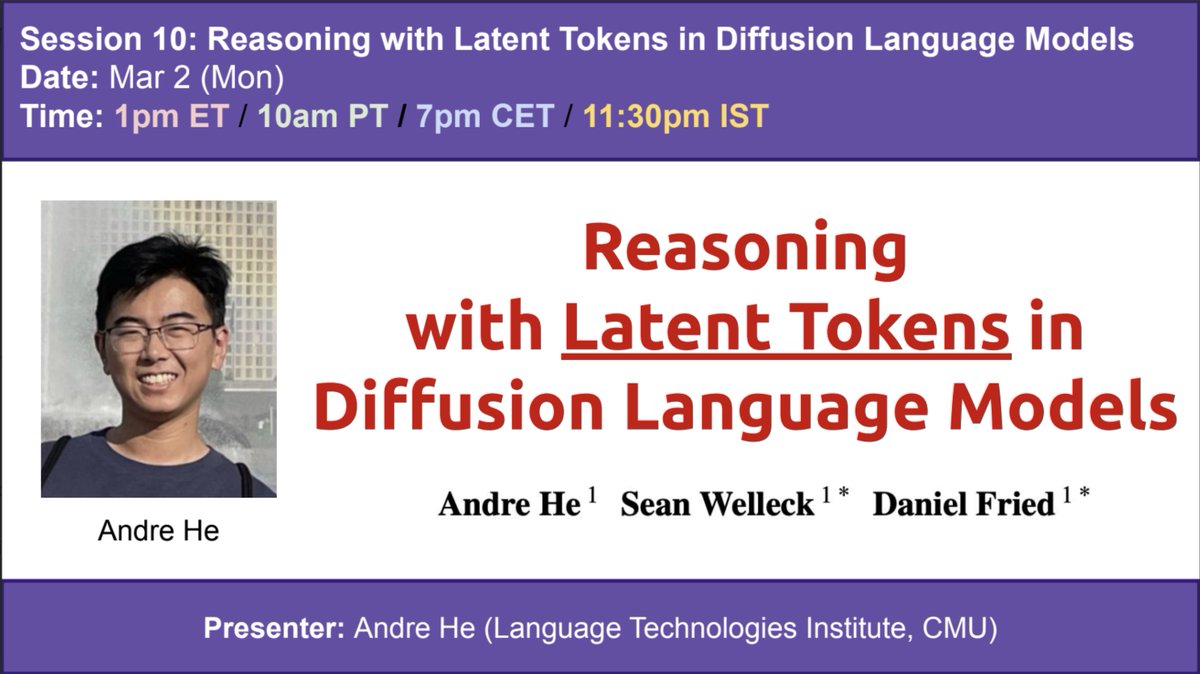

📢Mar 2 (Mon): Reasoning with Latent Tokens in Diffusion Language Models ❓Diffusion language models outperform AR on synthetic reasoning tasks, but why? 🔑This paper traces the answer to a surprising mechanism: diffusion models naturally maintain "latent tokens" -- joint predictions over positions they won't immediately decode -- that enable planning and lookahead! The paper also shows that latent tokens control a smooth tradeoff between inference speed and quality, and that this mechanism yields large gains in AR models on the same reasoning tasks where they've traditionally struggled. This Monday, Andre He (LTI @ CMU) (andrehe02.github.io, @Andre3035858461) will present his recent paper Reasoning with Latent Tokens in Diffusion Language Models! Collaborators: Sean Welleck (@wellecks), Daniel Fried (@dan_fried) Paper link: arxiv.org/abs/2602.03769
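To make "predictions over positions they won't immediately decode" concrete, here is a generic confidence-ordered masked-diffusion decoding loop. It is not the paper's method. At every step the model predicts all positions, but only the `tokens_per_step` most confident masked positions are committed. The remaining predictions exist but stay undecoded, and `tokens_per_step` acts as the speed knob. `toy_model` is a hypothetical random placeholder, so output quality is not modeled here, only the step count.

```python
import numpy as np

MASK = -1  # hypothetical mask id

def toy_model(seq, vocab_size, rng):
    """Placeholder for a diffusion LM: returns a distribution for every position.

    It ignores `seq`; a real model would condition on the partially decoded sequence.
    """
    logits = rng.standard_normal((len(seq), vocab_size))
    probs = np.exp(logits - logits.max(axis=1, keepdims=True))
    return probs / probs.sum(axis=1, keepdims=True)

def decode(length, vocab_size, tokens_per_step, seed=0):
    rng = np.random.default_rng(seed)
    seq = np.full(length, MASK)
    steps = 0
    while (seq == MASK).any():
        probs = toy_model(seq, vocab_size, rng)      # predictions for ALL positions
        masked = np.flatnonzero(seq == MASK)
        conf = probs[masked].max(axis=1)
        # Commit only the k most confident masked positions; predictions for the
        # other masked positions are computed but left undecoded this step.
        commit = masked[np.argsort(-conf)[:tokens_per_step]]
        seq[commit] = probs[commit].argmax(axis=1)
        steps += 1
    return seq, steps

for k in (1, 4, 16):
    _, steps = decode(length=16, vocab_size=10, tokens_per_step=k)
    print(f"tokens_per_step={k:2d}: {steps} steps")
```

The number of steps is ceil(length / tokens_per_step): 16, 4, and 1 steps for the three settings above. Committing fewer tokens per step costs more model calls but lets later decisions condition on more decoded context.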

🔥New Paper drop: The Diffusion Duality (Ch. 2): 𝚿-Samplers #ICLR2026 🚀 Inference‑time scaling for uniform diffusion‑LLMs (Duo) 🥊 Beats Masked diffusion on text + image generation 🔖 openreview.net/forum?id=RSIoY… 🌐 s-sahoo.com/duo-ch2/ 🖥️ github.com/s-sahoo/duo w/ @jdeschena @caglarml (1 / 3)