Discrete Diffusion Reading Group

137 posts

@diffusion_llms

📚 Journal club on discrete diffusion models 🎥 Replays available on YouTube! Contact: [email protected] Hosted by @ssahoo_, @jdeschena, @zhihanyang_

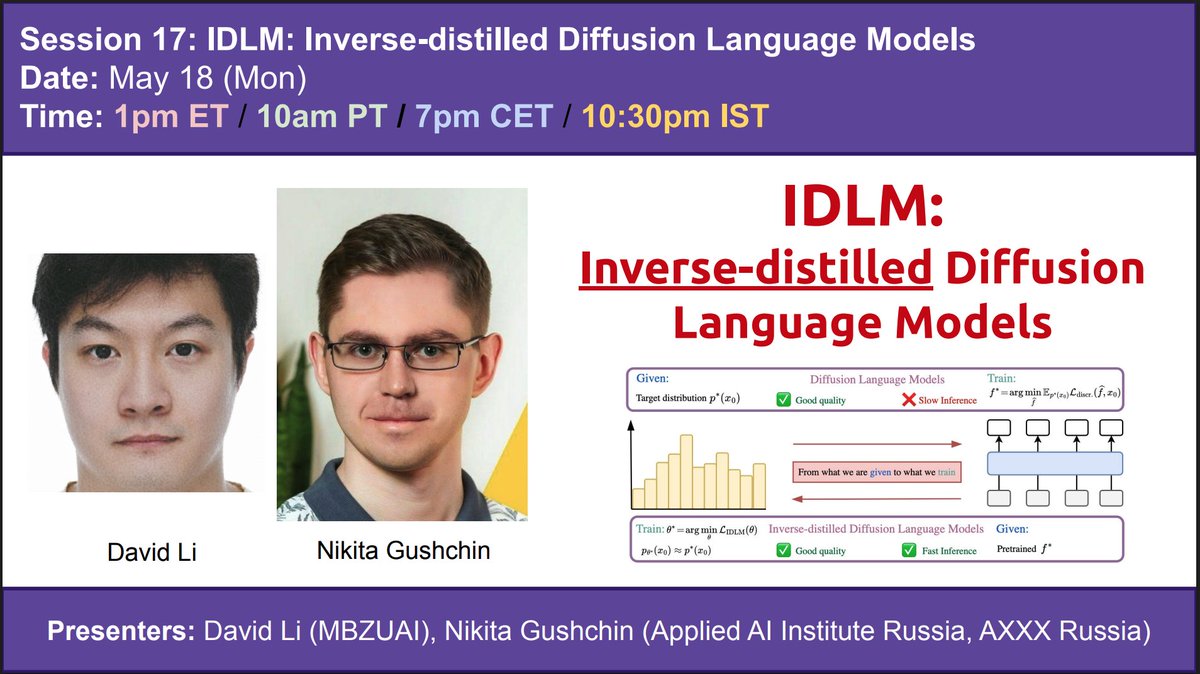

📢 May 18 (Mon): IDLM: Inverse-distilled Diffusion Language Models 🤔Diffusion Language Models (DLMs) have recently achieved strong results in text generation. However, their multi-step sampling leads to slow inference, limiting practical use. 💡To address this, the authors extend Inverse Distillation, a technique originally developed to accelerate continuous diffusion models, to the discrete setting. This extension introduces both theoretical and practical challenges. 🔧To overcome these challenges, the authors first provide a theoretical result demonstrating that their inverse formulation admits a unique solution, thereby ensuring valid optimization. They then introduce gradient-stable relaxations to support effective training. 📊As a result, experiments on multiple DLMs show that their method, Inverse-distilled Diffusion Language Models (IDLM), reduces the number of inference steps by 4× to 64×, while preserving the teacher model’s entropy and generative perplexity. This Monday, David Li (scholar.google.com/citations?user…) and Nikita Gushchin (scholar.google.com/citations?user…) will present their jointly led paper, which was recently accepted at ICML 2026. Collaborators of this work include: Dmitry Abulkhanov (@dabulkhanov_), Eric Moulines (scholar.google.com/citations?user…), Ivan Oseledets (@oseledetsivan), Maxim Panov (@maxim_panov), Alexander Korotin (akorotin.netlify.app). Paper link: arxiv.org/abs/2602.19066

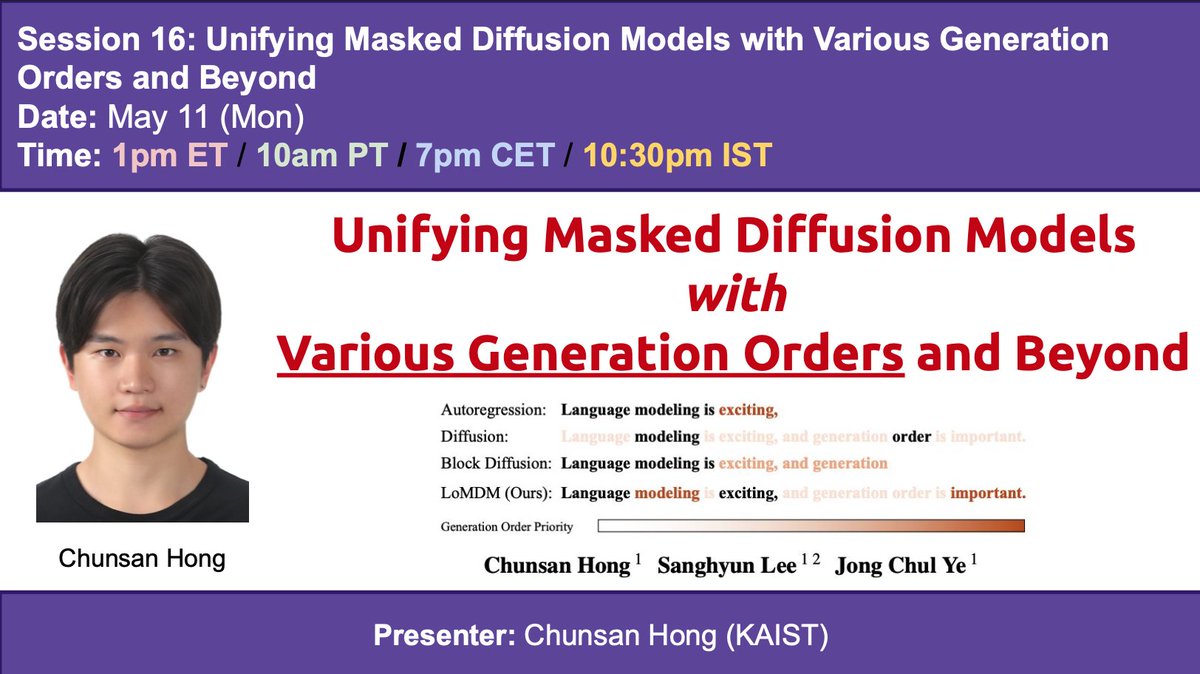

📢 May 11 (Mon): Unifying Masked Diffusion Models with Various Generation Orders and Beyond 🤔AR generates left-to-right; masked diffusion generates in any order; and block diffusion generates block-wise left-to-right, with random order within each block. Can we unify all these frameworks and further learn the generation order jointly with token prediction? 💡The authors propose OeMDM, a unified masked diffusion framework that can express various generation orders, and LoMDM, which jointly learns the generation order and the diffusion model. 🔍Everything comes down to the scheduler: by making the forward and reverse schedulers maximally flexible, it becomes possible to describe all generation orders, even learnable generation orders, within the masked diffusion framework. 📈LoMDM achieves SOTA among discrete diffusion models across all benchmarks, and even outperforms block diffusion models, which strongly benefit from left-to-right bias! This Monday, Chunsan Hong (@ChunsanHong) will present his paper, which received Spotlight at ICML 2026. Collaborators of this work include: Sanghyun Lee, Jong Chul Ye (bispl.weebly.com/professor.html) Paper link: arxiv.org/abs/2602.02112

📢Thrilled to share our new paper: Esoteric Language Models (Eso-LMs) > 🔀Fuses autoregressive (AR) and masked diffusion (MDM) paradigms > 🚀First to unlock KV caching for MDMs (65x speedup!) > 🥇Sets new SOTA on generation speed-vs-quality Pareto frontier How? Dive in👇 [🧵1/13] 📜Paper: arxiv.org/abs/2506.01928 📘Blog: s-sahoo.com/Eso-LMs/ 💻Code: github.com/s-sahoo/Eso-LMs Project co-led with @ssahoo_

Esoteric Language Models 🔥Beats MDLM on the speed-quality Pareto frontier 🔥Exact KV Caching 🔥 Exact Likelihood Computation 🔖 arxiv.org/abs/2506.01928 🖥️s-sahoo.com/Eso-LMs/ x.com/ssahoo_/status…

✈️ Discrete Diffusion Meetup @iclr_conf 📅 RioCentro | April 24 (Thurs) | 4PM I’ll share the exact location in the comments as we get closer. Save this post so you don’t miss the update.

Thank you all for coming out to the discrete diffusion meetup. Turnout was over 100 people😊

The location was selected! Let's meet in the garden in the middle of the conference center, near the white structure and under the trees 🚀

✈️Discrete Diffusion Meetup @iclr_conf 📅 April 24, 4 pm 📍 RioCentro (TBD; In the comments) If you’re into discrete diffusion, come hang out, talk shop, and meet others working in the space. hosts: @ssahoo_ @jdeschena

📢 April 20 (Mon): Planner Aware Path Learning in Diffusion Language Models Training 🤔A key limitation of diffusion language models is that they are usually trained without accounting for the planner that guides decoding at inference time. 💡Planner Aware Path Learning (PAPL) brings the planning process into the training objective so that learning better matches how generation is actually performed. 🔍By viewing decoding as a coupled planner–denoiser process, PAPL provides a more principled training framework for diffusion language models. 📈Across experiments, this leads to improved sequence generation quality over simple training objectives, with only a 2-line code change. This Monday, Fred Zhangzhi Peng (@pengzhangzhi1) and Zachary Bezemek (@bezemekz) will present their jointly led paper, which received Oral at ICLR 2026! Collaborators: Jarrid Rector-Brooks (@jarridrb), Shuibai Zhang (@ShuibaiZ69721), Anru R. Zhang (anruzhang.github.io), Michael Bronstein (@mmbronstein), Alexander Tong (@AlexanderTong7), and Avishek Joey Bose (@bose_joey). Paper link: arxiv.org/abs/2509.23405
