Great chatting with Kyle at @NotionHQ! At Delve, we don't like copying playbooks. We invent our own.

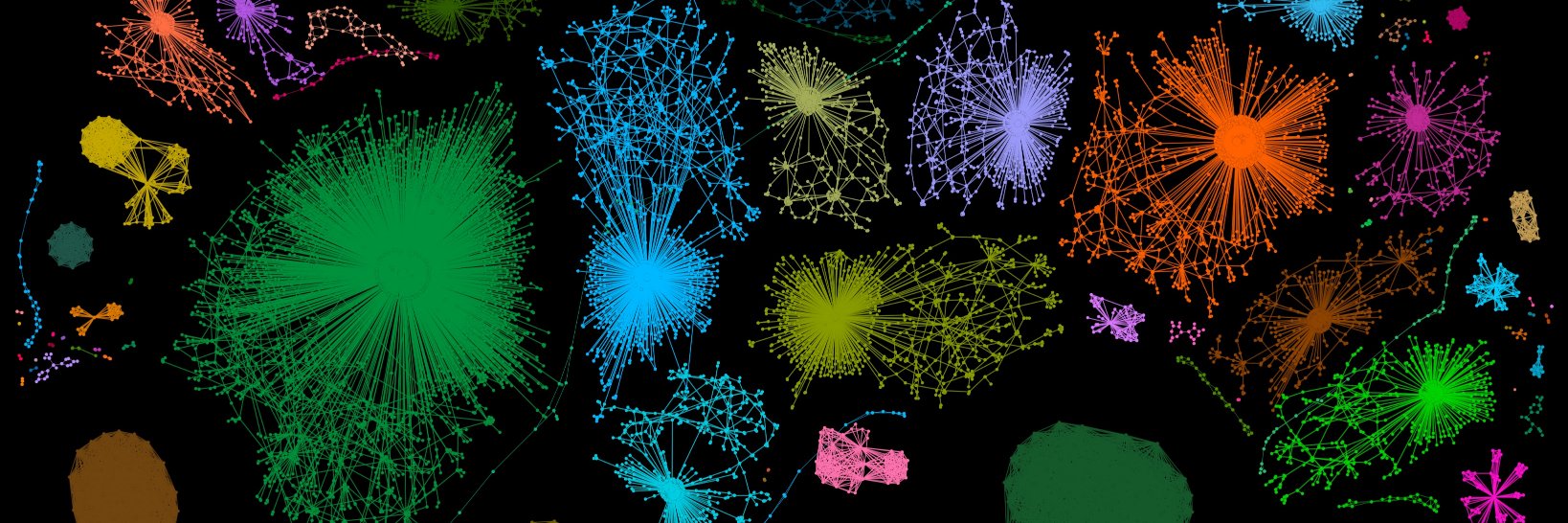

Anton Tsitsulin

1.8K posts

@graph_

Pre-training data. Datamodels for Gemini and Gemma 🧑‍🍳 Research Scientist @GoogleAI. Past (?) life: graph machine learning

🙌 Andrej Karpathy’s lab has received the first DGX Station GB300 -- a Dell Pro Max with GB300. 💚 We can't wait to see what you’ll create @karpathy! 🔗 blogs.nvidia.com/blog/gtc-2026-…

@DellTech

The trend of giving subagents names is so silly, man. Imagine having to explain to your boss that Sportacus accidentally wiped out the database.

Every single one of the 103 companies Jensen called AI Native today.

𝗞-𝗺𝗲𝗮𝗻𝘀 𝗶𝘀 𝘀𝗶𝗺𝗽𝗹𝗲. 𝗠𝗮𝗸𝗶𝗻𝗴 𝗶𝘁 𝗳𝗮𝘀𝘁 𝗼𝗻 𝗚𝗣𝗨𝘀 𝗶𝘀𝗻’𝘁. That’s why we built Flash-KMeans — an IO-aware implementation of exact k-means that rethinks the algorithm around modern GPU bottlenecks. By attacking the memory bottlenecks directly, Flash-KMeans achieves 30x speedup over cuML and 200x speedup over FAISS — with the same exact algorithm, just engineered for today’s hardware. At the million-scale, Flash-KMeans can complete a k-means iteration in milliseconds. A classic algorithm — redesigned for modern GPUs. Paper: arxiv.org/abs/2603.09229 Code: github.com/svg-project/fl…

This paper is the same as the DeepCrossAttention (DCA) method from more than a year ago: arxiv.org/abs/2502.06785. As far as I can tell, there is no innovation here to be excited about, and yet, surprisingly, there is no citation or discussion of DCA! The level of redundancy in LLM research, and then the hype on X, is getting worse and worse! DeepCrossAttention builds on the intuition that depth-wise cross-attention allows for richer interactions between layers at different depths. DCA further provides both empirical and theoretical results to support this approach.

Robert De Niro is a clear example that ears grow roughly 0.22 millimeters per year.

I need to avoid `arc` lm-eval scores going forward. This test has a dead space: a model with zeroed weights or random-noise weights can still score 0.22. The scoring range for arc is like a rubber band, with dead space at both the head and the tail. Horrible models and great models will both be incorrectly scored by this benchmark.