Dan Kondratyuk

510 posts

@hyperparticle

Research Scientist working on Video Generation @LumaLabsAI. Prev. #VideoPoet @GoogleAI. I'm a developer that enjoys solving puzzles, one piece at a time.

Introducing Uni-1, Luma’s first unified understanding and generation model, our next step on the path towards unified general intelligence. lumalabs.ai/uni-1

Introducing Terminal Velocity Matching: a scalable, single-stage generative training method that delivers diffusion-level quality with 25× fewer inference steps, now trained at 10B+ scale. lumalabs.ai/blog/engineeri…

Experimenting with animals in Luma AI's Ray2 is so much fun 🐺✨

This is Ray3. The world’s first reasoning video model, and the first to generate studio-grade HDR. Now with an all-new Draft Mode for rapid iteration in creative workflows, and state-of-the-art physics and consistency. Available now for free in Dream Machine.

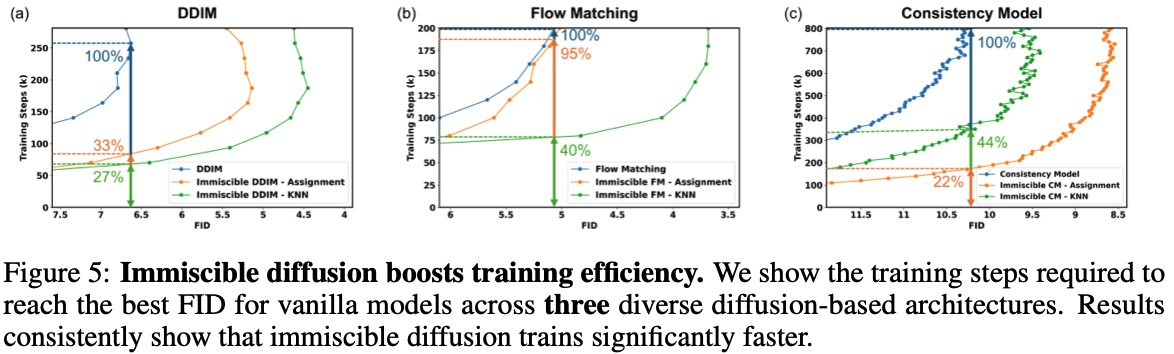

what in the chart crime

New video on the details of diffusion models: youtu.be/iv-5mZ_9CPY Produced by @welchlabs, this is the first in a small series of 3b1b videos this summer. I enjoyed providing editorial feedback throughout the last several months, and couldn't be happier with the result.

Introducing #Ray2 Camera Motion Concepts in #DreamMachine: 20+ precision-tuned camera motions designed for smooth cinematic control and great reliability. Concepts compose with each other, making hundreds of new, previously impossible camera moves possible. Available now.

Very interesting how 4o image generation appears to be some sort of combination of multiscale and autoregressive generation. At first I thought it was generating a coarse image and then just filling in fine details, but the coarse image itself seems to change during generation (shown here).

Inductive Moment Matching

Luma AI introduces a new class of generative models for one- or few-step sampling with a single-stage training procedure. It surpasses diffusion models on ImageNet-256×256 with 1.99 FID using only 8 inference steps and achieves a state-of-the-art 2-step FID of 1.98 on CIFAR-10 for a model trained from scratch. It is similar to diffusion, flow matching, and stochastic interpolants, except that it learns a model that maps the marginal noisy distribution at any noisy timepoint to any less noisy timepoint, trained using MMD (maximum mean discrepancy).
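The post names MMD as the training signal. As a minimal sketch of what MMD itself measures, assuming a Gaussian (RBF) kernel with a hand-picked bandwidth, here is the standard biased estimator of squared MMD between two sample sets. This is the generic statistic only, not Luma's training objective, and the function names `rbf_kernel` and `mmd_squared` are hypothetical.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def rbf_kernel(x: Vector, y: Vector, bandwidth: float = 1.0) -> float:
    """Gaussian (RBF) kernel k(x, y) = exp(-||x - y||^2 / (2 * bandwidth^2))."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def mmd_squared(
    xs: Sequence[Vector], ys: Sequence[Vector], bandwidth: float = 1.0
) -> float:
    """Biased (V-statistic) estimate of squared MMD between two sample sets.

    MMD^2 = E[k(x, x')] + E[k(y, y')] - 2 E[k(x, y)]; it is zero when the two
    empirical distributions coincide and grows as they move apart.
    """

    def mean_kernel(a: Sequence[Vector], b: Sequence[Vector]) -> float:
        # Average kernel value over every pair (p, q) with p in a, q in b.
        total = sum(rbf_kernel(p, q, bandwidth) for p in a for q in b)
        return total / (len(a) * len(b))

    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


if __name__ == "__main__":
    near_zero = [(0.0,), (0.1,), (-0.1,)]
    near_three = [(3.0,), (3.1,), (2.9,)]
    print(mmd_squared(near_zero, near_zero))   # identical samples
    print(mmd_squared(near_zero, near_three))  # well-separated samples
```

Identical sample sets give an MMD of zero, while well-separated sets give a clearly positive value. That property is what makes MMD usable as a loss for matching one distribution to another without a discriminator network.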