jon cooper

Silicon Valley thinks AI agents are a $20/mo self-serve subscription. Main Street is paying local agencies $10,000 just to turn them on.

Everyone assumes AI will be bought primarily online, like Slack or Zoom. I think they are wrong. Some of the biggest winners in the AI boom won't be the software vendors. They will be the humans installing it.

Here is the reality of SMBs right now:
• 54% lack internal AI expertise.
• 41% have data quality too poor for AI to even work.
• 41% already prefer buying AI through a local IT provider.

You cannot "1-click install" a genius AI into a messy CRM or a 15-year-old server. It will just execute the wrong tasks at the speed of light.

The AI software will be cheap, and a lot of it will absolutely be bought online. Making it actually work for a messy, real-world business will be expensive.

Very bullish on the "Do It For Me" economy being back.

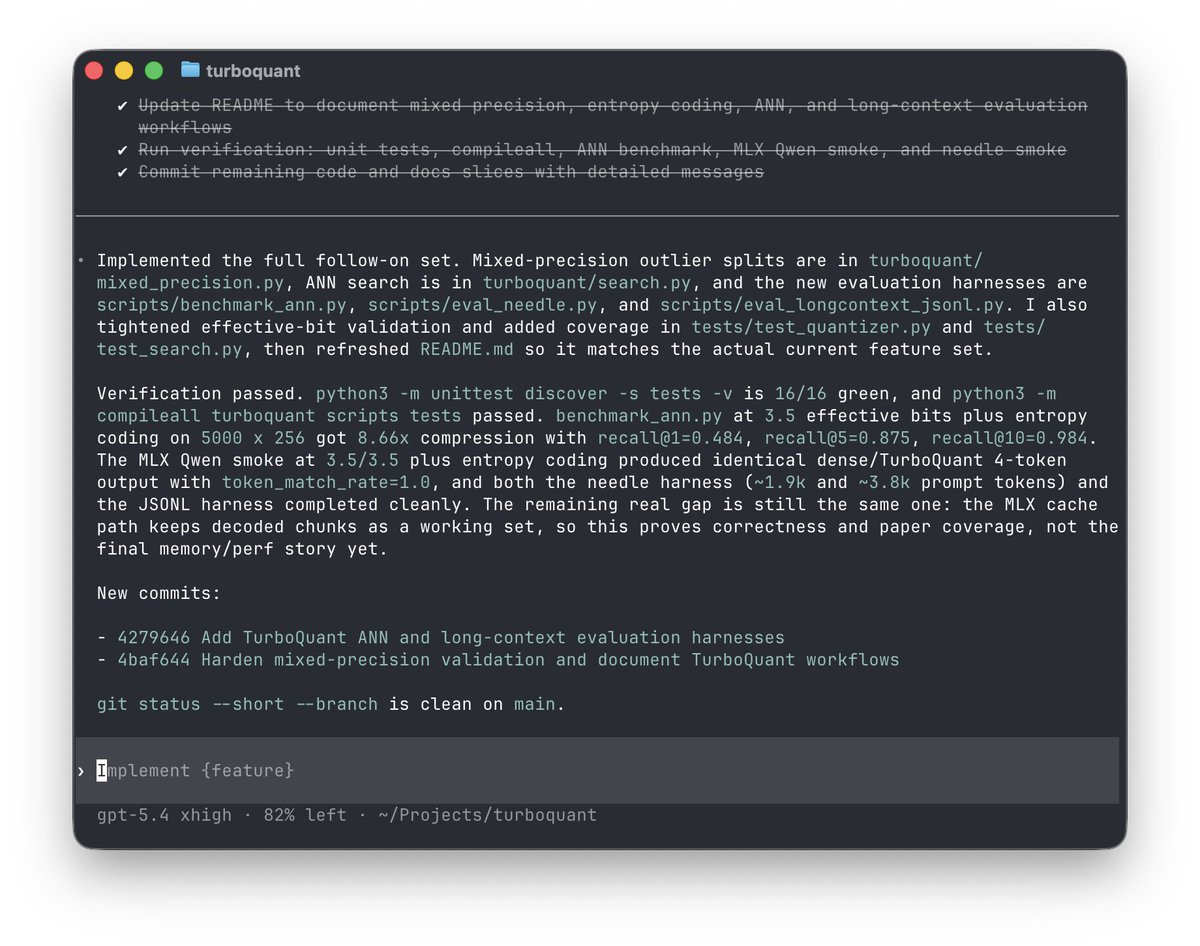

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

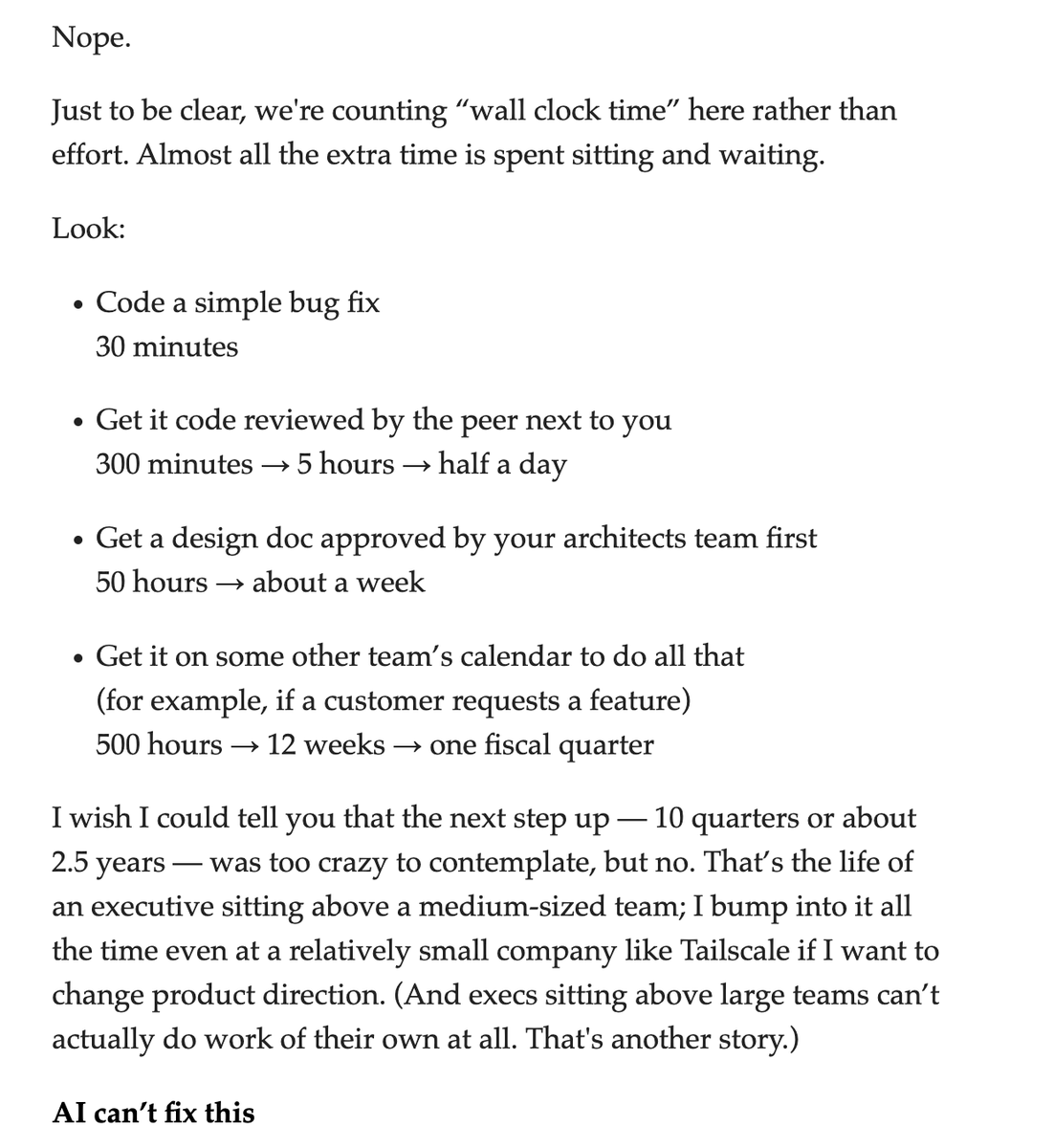
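To put TurboQuant's "at least 6x" figure in perspective, here is a back-of-the-envelope sketch of KV-cache size. It uses the common estimate of one key vector and one value vector stored per layer, per KV head, per token. The model shape, the 16-bit (2-byte) baseline, and the function name `kv_cache_bytes` are hypothetical choices for illustration. Dividing by 6 only applies the announced ratio; it does not model how TurboQuant actually compresses the cache.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_value=2):
    """Estimate KV-cache size in bytes (hypothetical helper).

    The leading factor of 2 counts one key tensor and one value tensor
    per layer. bytes_per_value=2 assumes an fp16/bf16 baseline.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_value


# Hypothetical model shape, chosen only to make the arithmetic concrete.
baseline = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=32_768)

# Apply the announced "at least 6x" memory reduction as a simple ratio.
compressed = baseline / 6

print(f"baseline: {baseline / 2**30:.2f} GiB, compressed: {compressed / 2**30:.2f} GiB")
```

With these assumed dimensions the baseline cache is 2^32 bytes (4 GiB) for a single 32K-token sequence, and a 6x reduction brings it to roughly 0.67 GiB. Because the cache grows linearly with sequence length and batch size, that saving scales with longer contexts or more concurrent requests.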

I am not sure "Forward Deployed AI Engineers" are going to deliver on what a lot of companies are hoping for. They are useful, yes, but AI applications are far less a technical issue and much more about rethinking your organization's deep expertise and structure around AI.

Make $1m by graduation. Or get 100% of your tuition refunded. That's the promise of the new high school for entrepreneurs Cameron and I are launching this fall through @AlphaSchoolATX. We need 2-3 coaches to help make it happen. DM us or apply!