The Nextool AI retweeted

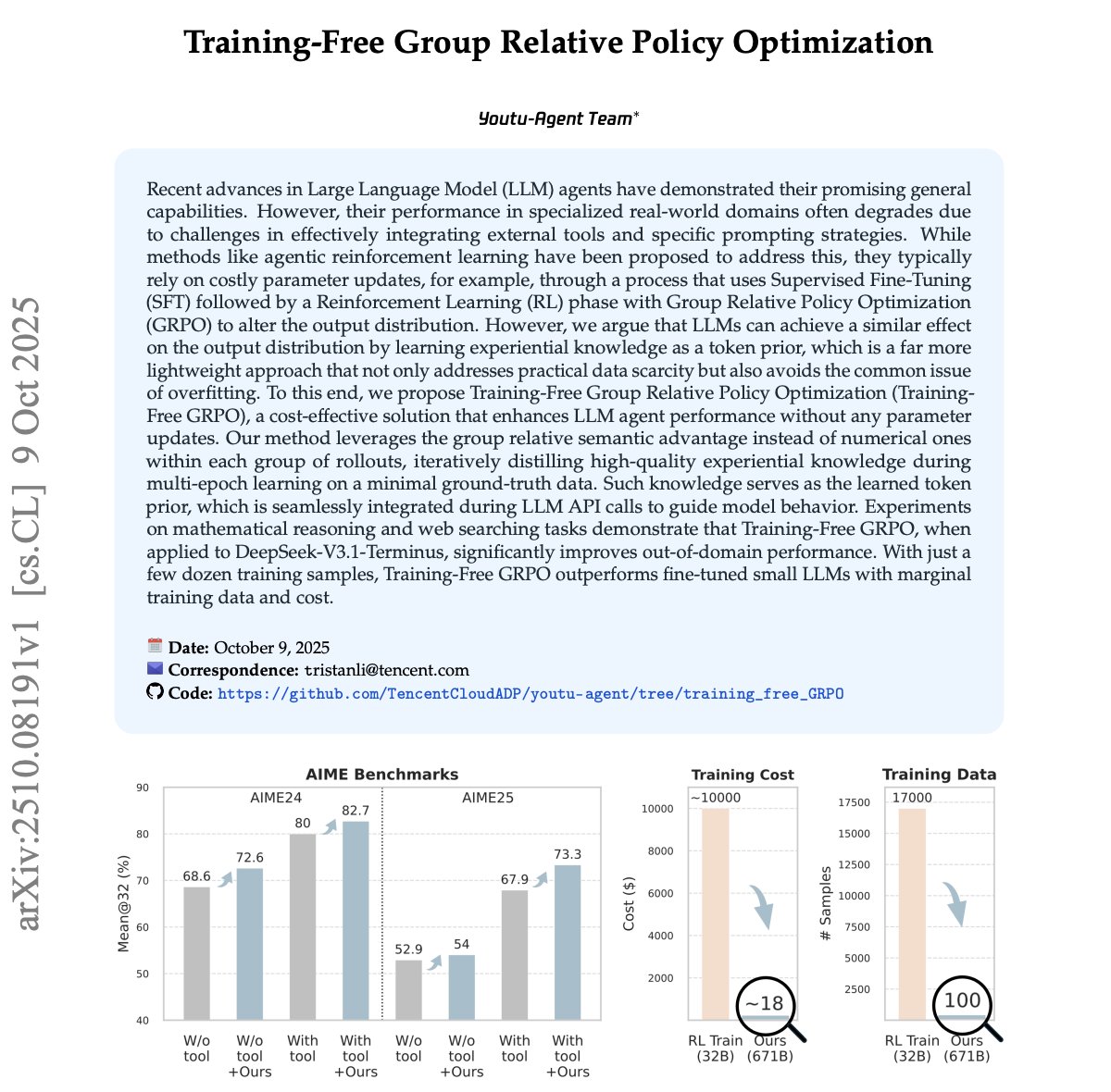

Holy shit... Alibaba just dropped a 30B-parameter AI agent that beats GPT-4o and DeepSeek-V3 at deep research while using only 3.3B active parameters.

It's called Tongyi DeepResearch and it's completely open-source.

While everyone's scaling to 600B+ parameters, Alibaba proved you can build SOTA reasoning agents by being smarter about training, not bigger.

Here's what makes this insane:

The breakthrough isn't size; it's the training paradigm.

Most AI labs do standard post-training (SFT + RL).

Alibaba added "agentic mid-training", a bridge phase that teaches the model how to think like an agent before it even learns specific tasks.

Think of it like this:

Pre-training = learning language

Agentic mid-training = learning how agents behave

Post-training = mastering specific agent tasks

This solves the alignment conflict where models try to learn agentic capabilities and user preferences simultaneously.

The data engine is fully synthetic.

Zero human annotation. Everything from PhD-level research questions to multi-hop reasoning chains is generated by AI.

They built a knowledge graph system that samples entities, injects uncertainty, and scales difficulty automatically.
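To make that concrete, here's a toy sketch of the idea, not Alibaba's actual pipeline: walk a tiny knowledge graph to sample entities, hide the starting entity behind a description (a stand-in for "injecting uncertainty"), and raise difficulty by adding hops. The graph, `sample_path`, and `make_question` are all hypothetical names invented for illustration.

```python
import random

# Made-up toy graph: entity -> list of (relation, neighbour) edges.
TOY_GRAPH = {
    "Ada": [("mentored", "Ben")],
    "Ben": [("founded", "Corvid Labs")],
    "Corvid Labs": [("is based in", "Delta City")],
    "Delta City": [],
}

def sample_path(graph, start, hops, rng):
    """Random walk of up to `hops` edges; more hops means a harder question."""
    path, node = [], start
    for _ in range(hops):
        edges = graph.get(node, [])
        if not edges:
            break  # dead end: stop early
        relation, neighbour = rng.choice(edges)
        path.append((node, relation, neighbour))
        node = neighbour
    return path

def make_question(path, obscure=True):
    """Turn a path into a multi-hop question whose answer is the last entity."""
    if not path:
        raise ValueError("path must contain at least one edge")
    start, first_relation, first_neighbour = path[0]
    # Assumed form of "uncertainty": describe the start instead of naming it.
    subject = f"the entity that {first_relation} {first_neighbour}" if obscure else start
    relations = " -> ".join(relation for _, relation, _ in path)
    return {
        "question": f"Start at {subject} and follow: {relations}. Where do you end up?",
        "answer": path[-1][2],
        "hops": len(path),
    }

rng = random.Random(0)
easy = make_question(sample_path(TOY_GRAPH, "Ada", 1, rng))
hard = make_question(sample_path(TOY_GRAPH, "Ada", 3, rng))
print(easy["question"], "=>", easy["answer"])
print(hard["question"], "=>", hard["answer"])
```

The only difficulty knob here is `hops`; a real system would presumably also vary how heavily each entity is obscured.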

20% of training samples exceed 32K tokens with 10+ tool invocations. That's superhuman complexity.

The results speak for themselves:

32.9% on Humanity's Last Exam (vs 26.6% OpenAI DeepResearch)

43.4% on BrowseComp (vs 30.0% DeepSeek-V3.1)

75.0% on xbench-DeepSearch (vs 70.0% GLM-4.5)

90.6% on FRAMES (highest score)
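For anyone who wants the gaps spelled out, here's a quick sketch that computes the point margins from the numbers quoted above. The scores are copied from this post; the `RESULTS` layout and the `margins` helper are just illustrative. FRAMES is left out because no baseline is given for it.

```python
# Scores copied from the post: (Tongyi DeepResearch, baseline, baseline name).
RESULTS = {
    "Humanity's Last Exam": (32.9, 26.6, "OpenAI DeepResearch"),
    "BrowseComp": (43.4, 30.0, "DeepSeek-V3.1"),
    "xbench-DeepSearch": (75.0, 70.0, "GLM-4.5"),
}

def margins(results):
    """Return the lead over each named baseline, in percentage points."""
    return {
        name: round(score - baseline, 1)
        for name, (score, baseline, _) in results.items()
    }

for name, gap in margins(RESULTS).items():
    print(f"{name}: +{gap} points over {RESULTS[name][2]}")
```

That works out to leads of 6.3, 13.4, and 5.0 points respectively.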

With Heavy Mode (parallel agents + synthesis), it hits 38.3% on HLE and 58.3% on BrowseComp.
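A rough sketch of the "parallel agents + synthesis" pattern, under loud assumptions: the agents below are stub functions, and the synthesis step is a plain majority vote swapped in for illustration. The post doesn't say how Tongyi DeepResearch actually synthesizes, so `run_parallel`, `synthesize`, and `heavy_mode` are hypothetical names, not the project's API.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

def run_parallel(agents, question):
    """Run every agent on the same question concurrently, keeping input order."""
    if not agents:
        raise ValueError("need at least one agent")
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        return list(pool.map(lambda agent: agent(question), agents))

def synthesize(reports):
    """Assumed synthesis step: majority vote plus the share of agents agreeing."""
    answer, votes = Counter(reports).most_common(1)[0]
    return answer, votes / len(reports)

def heavy_mode(agents, question):
    """Fan the question out to all agents, then merge their answers."""
    return synthesize(run_parallel(agents, question))

# Stub agents standing in for independent research rollouts.
stub_agents = [
    lambda q: "answer A",
    lambda q: "answer B",
    lambda q: "answer A",
]
final_answer, agreement = heavy_mode(stub_agents, "toy question")
print(final_answer, f"(agreement {agreement:.0%})")
```

The appeal of the pattern is that independent rollouts can explore different search paths, so the merge step has more evidence to work with than any single run.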

What's wild: for specific tasks, they trained this on 2 H100s for 2 days at a cost of under $500.

Most AI companies burn millions scaling to 600B+ parameters.

Alibaba proved parameter efficiency + smart training >>> brute force scale.

The bigger story?

Agentic models are the future. Models that autonomously search, reason, code, and synthesize information across 128K context windows.

Tongyi DeepResearch just showed the entire industry they're overcomplicating it.

Full paper: arxiv.org/abs/2510.24701

GitHub: github.com/Alibaba-NLP/DeepResearch
