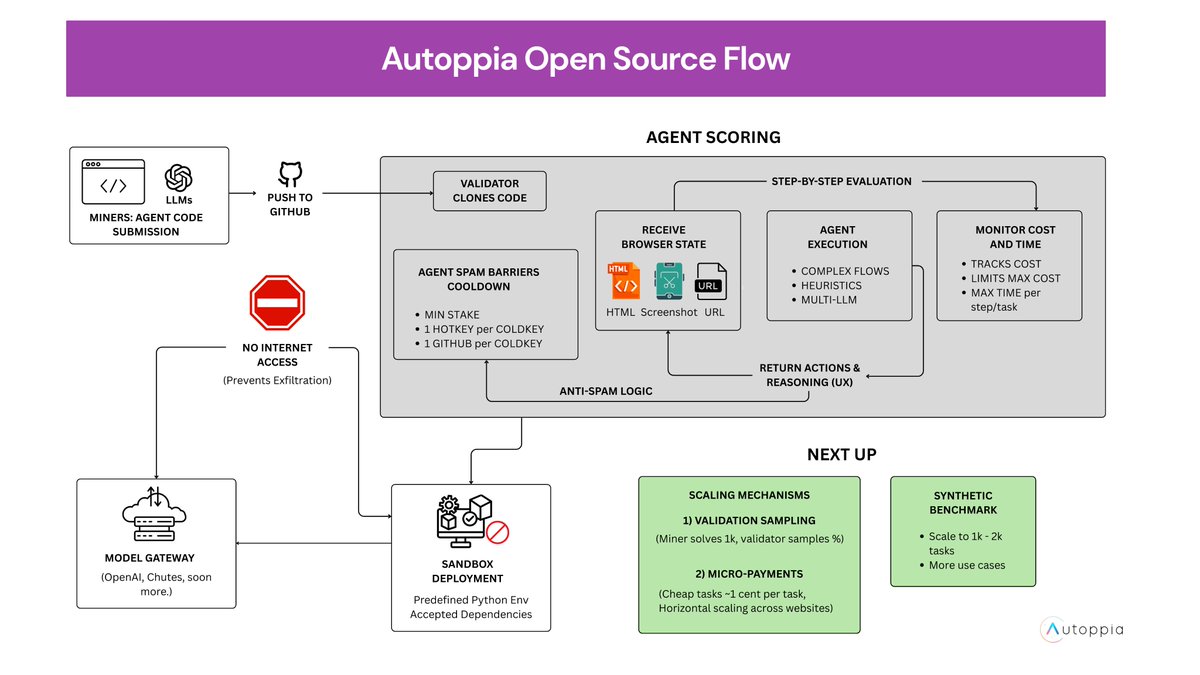

Autoppia | Subnet 36 on Bittensor

@AutoppiaAI

On a mission to have the best Web Operator (Automata) and the best Web Benchmark in the world (Infinite Web Arena).

Today I become a Founding Partner of @bitstarterAI, stepping up from my role as Chief Subnet Officer to take on full co-ownership and a broader set of responsibilities across our portfolio. It hasn't even been a year since I met @macrozack, but that one conversation was enough for me to walk away from 6 years in iGaming and start on the path to launching 4 new teams in under 6 months. That's the power of what we're building. Bittensor is the future of AI. Betting my career on it feels like the only move that makes sense. The right risk, at the right time, with the right team⚡️

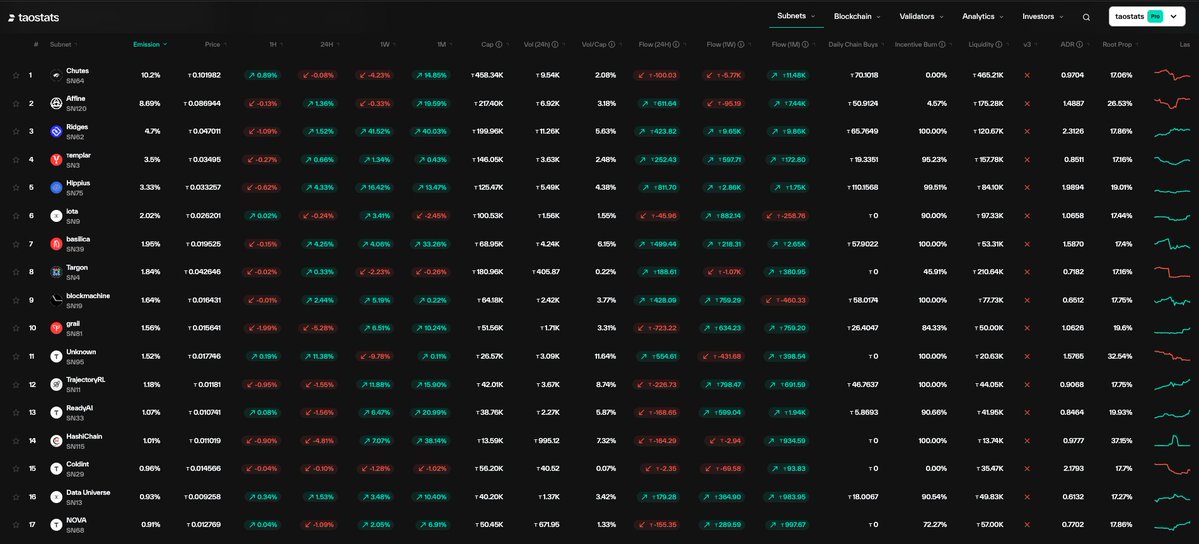

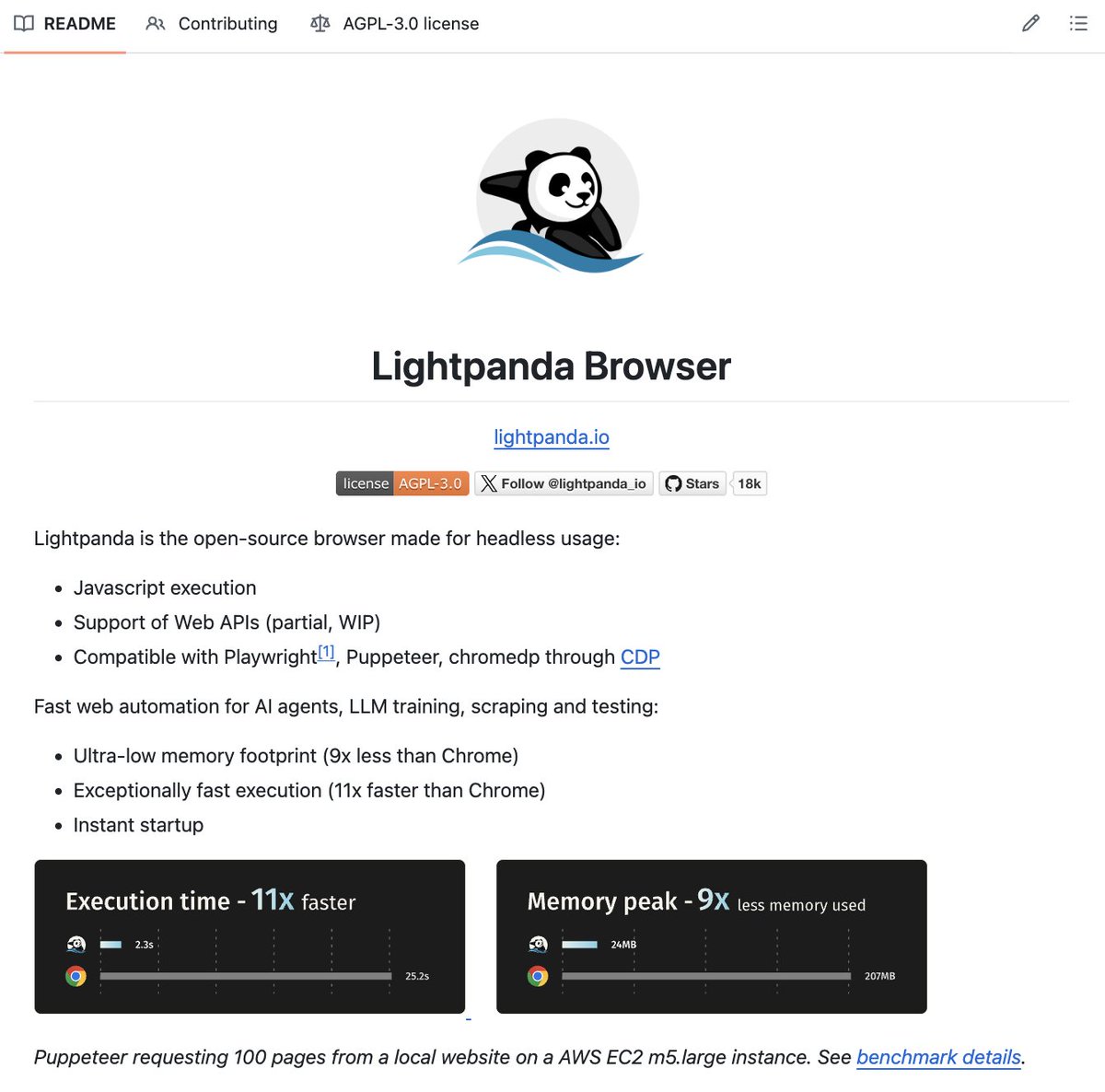

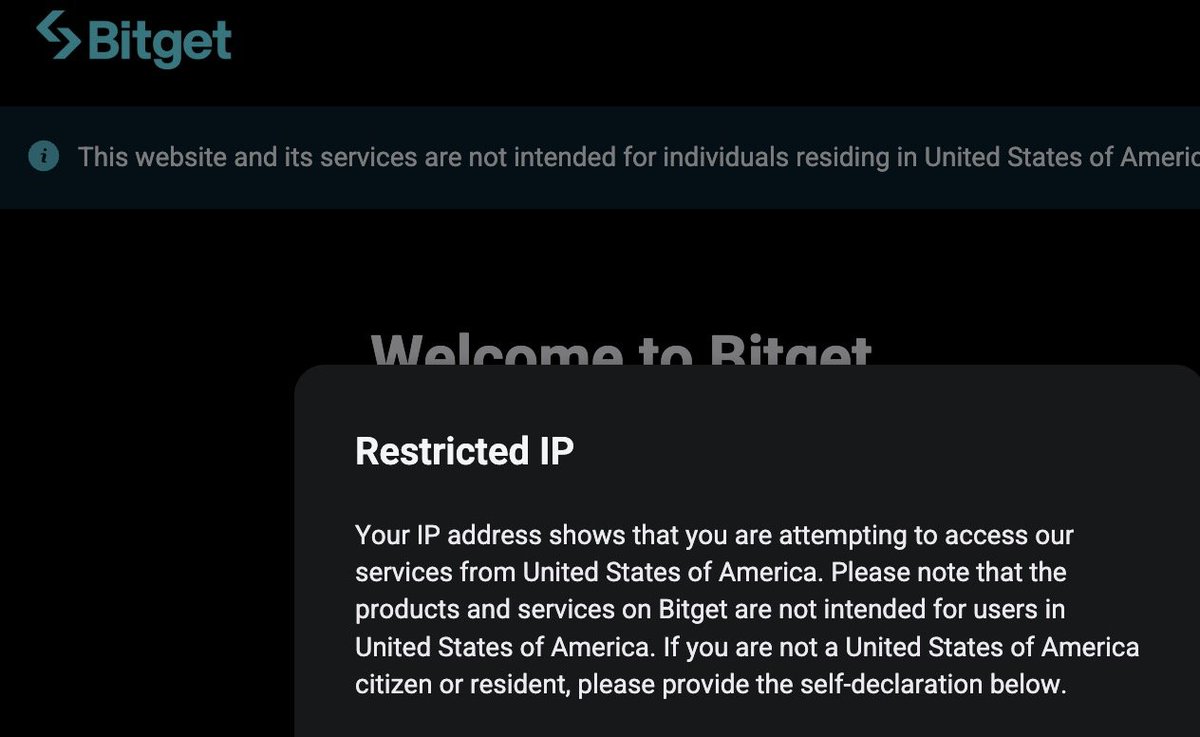

WHOA - look at that yield - 700% in one month! Let me in! (tao.com on SN 36) This is a real number. But what does it mean? Should these guys start looking for a house with a pool?