Openτensor Foundaτion


@opentensor

Incentivizing intelligence

Going LIVE in 20 minutes! Ditto's very own @peytonspencer will be going live on @gordonfrayne's TAO PILL Podcast alongside @Sebyverse. Think of this as a formal introduction from Ditto to Bittensor. Join on X to uncover what is to come!

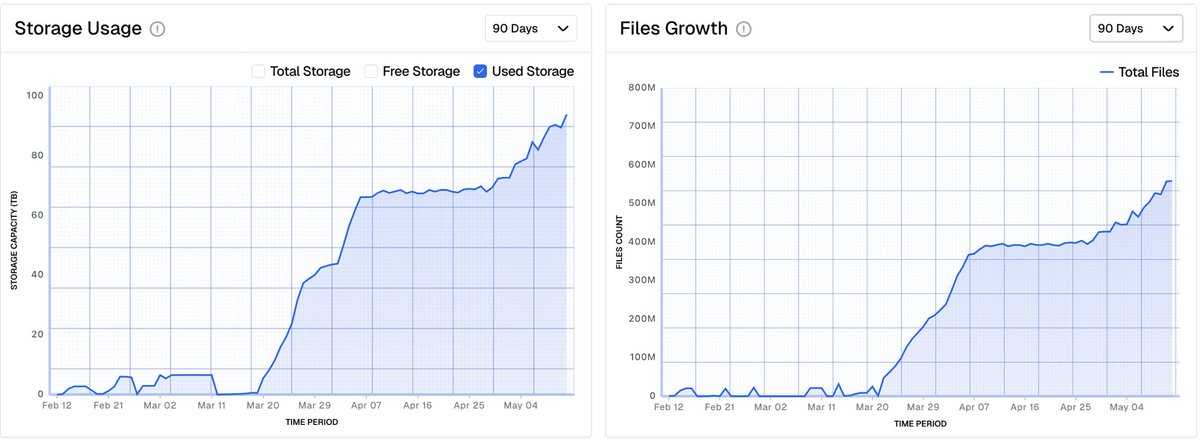

Growth metrics looking good on @hippius_subnet $TAO

teutonic.ai Training 80

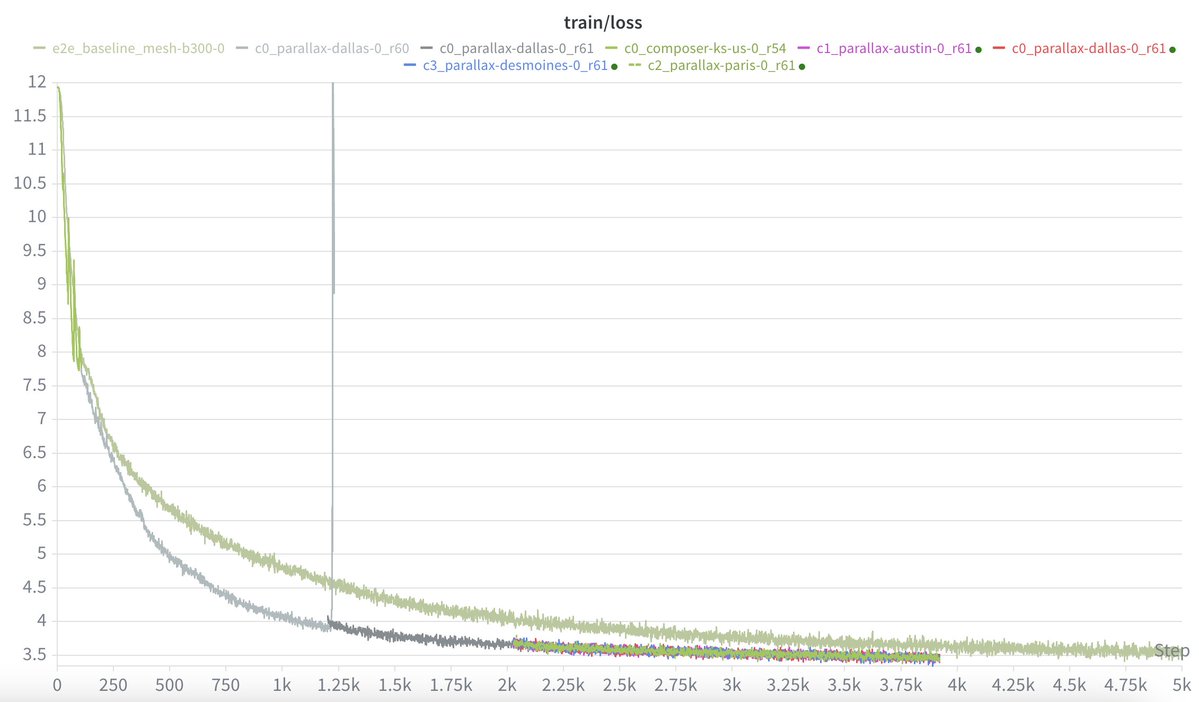

You can force an architecture into decentralized training, or you can craft an architecture specifically for decentralized training (and ultimately insanely performant inference). Stop trying to fight gravity. "Parallax", so far, is working exceptionally well. Surrogates, expert offload, stratified decoupled DiLoCo, etc. are all pretty great for making training work at scale across the internet. But the biggest benefits actually come AFTER training, via specific model architecture choices that drastically reduce VRAM and increase throughput. These giant MoEs are wasting so much VRAM and compute on all of their many routed experts, it's insane actually. So much research already shows that MoEs can be massively compressed/pruned/ternary-distilled/etc., not to mention that they are generally only comparable to dense models of around 2.5-3x their ACTIVE parameter count. KV cache is a huge bottleneck, and these huge routed experts are often "dead weight" that consume it needlessly. We need to do better, so we will.

Waiting on a provisional patent for this new tech, then we can share some results/paper/etc. In the meantime, we are starting on the first set of full production-ready models - not partially pretrained toy models that nobody would ever use, but real models, fully pretrained/fine-tuned/RL'd. We need to combat the GPU/power/datacenter shortages with algorithmic changes, not brute force and massive capex. Accelerate!