AI

60.7K posts

@DeepLearn007

Imtiaz Adam CS #AI Postgrad |#Tech #Strategy #MachineLearning #DeepLearning | #RL #Agentic | #LLM Liberal | #GenAI| MBA alum @morganstanley @LBS @Columbia_Biz

Joined September 2012

109.5K Following, 136.8K Followers

AI retweeted

Stanford's "Reinforcement Learning"

by Emma Brunskill (Spring 2024)

📽️ Lecture Videos: youtube.com/playlist?list=…

📷 Course Website: web.stanford.edu/class/cs234/CS…

AI retweeted

A new Science study finds that different dendritic segments of a single neuron follow distinct learning rules. The results challenge the idea that neurons follow a single learning strategy and offer a new perspective on how the brain learns and adapts behavior.

📄: scim.ag/3GjGf4d

#SciencePerspective: scim.ag/4jl6fed

AI retweeted

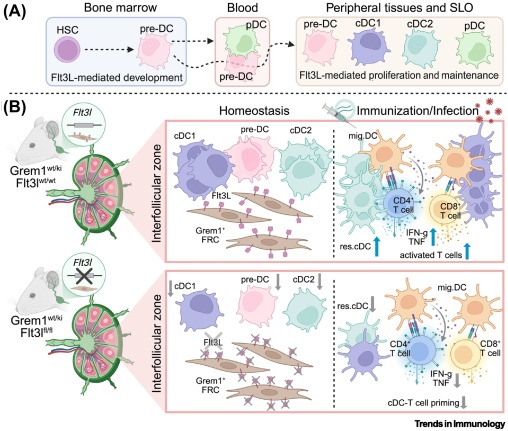

Flt3L from interfollicular stroma maintains resident dendritic cells dlvr.it/TQpj3R #immunology

AI retweeted

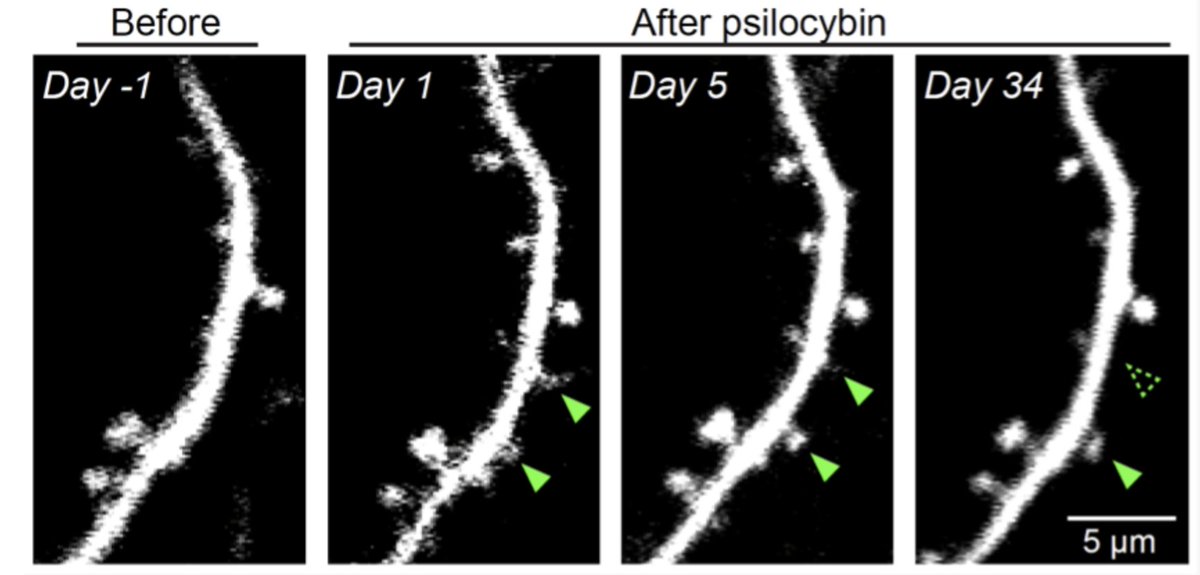

We can now watch psilocybin grow new brain connections in real time.

Not metaphorically. Not "neuroplasticity" as a vague buzzword. Actual, physical structures — dendritic spines — sprouting from cortical neurons within 24 hours of a single dose.

A team at Yale used chronic two-photon microscopy to image individual dendritic spines on layer 5 pyramidal neurons in the medial frontal cortex of living mice.

Before psilocybin. After psilocybin. Same neurons. Same spines. Day after day.

And here's what they found:

A single dose of psilocybin produced a ~10% increase in spine density and spine size. New spines began forming within 24 hours. Most of these new connections were still there a month later.

That last part matters most.

Psilocybin has a half-life of about 3 hours. The molecule is gone by dinner. But the structural changes it triggers persist for at least 34 days (and likely far longer).

This offers a plausible biological explanation for something clinicians have observed for years: a single psilocybin session producing therapeutic benefits that last months. The drug disappears, but the architecture it built does not.

There's a critical mechanistic detail. When researchers pre-treated with ketanserin — a 5-HT2A receptor antagonist — the spine growth was completely blocked. This confirms that structural remodeling depends on activation of the serotonin 2A receptor. The same receptor responsible for the psychedelic experience itself.

A 2025 follow-up from the same lab went further. Using rabies tracing to map brain-wide inputs to these new spines, they discovered psilocybin's rewiring is network-specific. It selectively strengthens inputs from perceptual and default mode network regions, the same networks implicated in self-referential processing, rumination, and depression.

It doesn't just grow connections randomly. It grows the RIGHT ones.

Here's what this means for practitioners:

The window after a psychedelic experience isn't just psychological. It's structural. New dendritic spines form and stabilize in the days and weeks following a session.

Integration practices — therapy, journaling, somatic work, meditation, breathwork — aren't just processing insights. They may be reinforcing which of these new physical connections survive.

You're not just supporting someone's mental model. You're supporting their neural architecture.

Think about what that reframes. The integration period isn't a nice-to-have. It's a biological imperative. Those new spines either stabilize into lasting connections or get pruned. The environment, practices, and support during that window may determine which.

We're not just learning that psilocybin works. We're watching exactly how it works, at the level of individual synapses.

The implications for how we design protocols, structure integration, and time follow-up sessions are enormous.

What do you make of this research? Is psilocybin the miracle drug that science makes it out to be?

AI retweeted

We built a Spiking Neural Network (SNN) to fully control a physical robot arm (6-DOF), and it's running entirely on a @Raspberry_Pi (no HAT).

In our approach, the SNN starts untrained and drives the servos directly, learning in real time through local learning rules (no backprop or gradient descent).
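To make "local learning rules" concrete, here is a minimal sketch of a single leaky integrate-and-fire neuron whose input weights change using only information available at each synapse. The post does not say which neuron model or learning rule the authors use, so the trace-based Hebbian update, the constants, and every name here (`LIFNeuron`, `step`, `leak`, `trace_decay`, `lr`) are assumptions for illustration, not their implementation.

```python
import random


class LIFNeuron:
    """Leaky integrate-and-fire neuron with a local, trace-based Hebbian update.

    Illustrative only: the model and constants are assumptions, not the
    architecture described in the post.
    """

    def __init__(self, n_inputs, threshold=1.0, leak=0.9, trace_decay=0.8, lr=0.05, seed=0):
        rng = random.Random(seed)
        self.weights = [rng.uniform(0.1, 0.3) for _ in range(n_inputs)]
        self.threshold = threshold
        self.leak = leak                # fraction of membrane potential kept each step
        self.trace_decay = trace_decay  # how quickly presynaptic traces fade
        self.lr = lr
        self.v = 0.0                    # membrane potential
        self.traces = [0.0] * n_inputs  # per-synapse memory of recent input spikes

    def step(self, spikes_in):
        """Advance one time step; return True if the neuron fires."""
        # Decay each trace, then bump it if that input spiked.
        self.traces = [t * self.trace_decay + s for t, s in zip(self.traces, spikes_in)]
        # Leak, then integrate the weighted input spikes.
        self.v = self.v * self.leak + sum(w * s for w, s in zip(self.weights, spikes_in))
        fired = self.v >= self.threshold
        if fired:
            # Local rule: each weight changes using only its own presynaptic
            # trace and the postsynaptic spike. No global error signal, no gradients.
            self.weights = [min(1.0, w + self.lr * t) for w, t in zip(self.weights, self.traces)]
            self.v = 0.0  # reset after the spike
        return fired


neuron = LIFNeuron(n_inputs=3)
initial = list(neuron.weights)
# Inputs 0 and 1 spike on every step; input 2 stays silent.
spike_count = sum(neuron.step([1, 1, 0]) for _ in range(50))
```

In this sketch the synapses that were active before an output spike are strengthened, while the silent synapse keeps its initial weight. A robot controller would need many such units plus some reward or error modulation, which is outside what the post describes.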

The goal with Twitch-y (the robot + SNN brain) is to let it explore and build an understanding of its own body until it can touch the plate on the mat, while also validating the SNN architecture and some of the math.

In the video:

- Top right: the SNN architecture

- Bottom right: the mini-brain spiking in real time while controlling the arm

- Left: Twitch-y (@huggingface SO-101)

If you want to learn more about Artificial Brains, come visit us this Friday (13th), at @fdotinc 👉 luma.com/artifact

AI retweeted

We built a Spiking Neural Network to control a 7-DOF robotic arm in simulation. It aims to learn in real time using local learning rules, running entirely on a CPU.

It wasn't trained on any dataset. It is experiencing the world for the first time as it moves, guided only by very sparse rewards.

In the video: the first few seconds show the SNN architecture; the remainder shows a Panda robot controlled by this mini-brain in real time (1x speed).

AI retweeted

PLoS Computational Biology

Neural spiking for causal inference and learning

journals.plos.org/ploscompbiol/a…

AI retweeted

❗️Ukrainian experts in the Middle East were shocked by US air defense tactics, citing the use of up to 8 Patriot missiles per drone and $6M interceptors against $70K UAVs, while poorly concealed radars were destroyed by cheap drones, The Times reports. #Iran

AI retweeted

🇺🇦❗️“I have no idea what the allies have been looking at for four years while we have been at war,” — Ukrainian military instructors who went to help counter Iranian missiles and UAVs are shocked by the way the US shoots down “Shaheds,” writes The Times

– First, the Persian Gulf countries launched as many as 8 Patriot missiles at one (!) enemy target, each costing more than $3 million.

– They often used a ship-based SM-6 missile, worth about $6 million, to shoot down a “Shahed” worth $70,000.

– The US and its allies often literally “shine” their radars like beacons — without proper camouflage. Ukrainians work differently: mobile radars constantly change positions.

For example: just three (!) cheap Shahed drones destroyed the AN/FPS-132 early warning radar (~$1 billion) and another air defense radar (~$300 million), which had been standing in one place for months and were perfectly “readable” from satellites.

AI retweeted

Recent neuromorphic computer breakthroughs mean we can now simulate complex physics on brain-inspired chips using 1,000x less energy than supercomputers. We're literally making silicon think like neurons to solve equations that used to require entire data centers. The Cambrian explosion of AI wasn't the finish line—it was the starting gun.

AI retweeted

The human brain runs on roughly 20 watts yet is estimated to perform ~1 exaFLOP (10^18 operations/sec). Today's top AI chips burn 700 watts for ~1 petaFLOP. That is ~1,000x less throughput on 35x the power, or roughly 35,000x less efficient per watt than biology. When neuromorphic chips close that gap, we won't be building data centers; we might be growing them.
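Taking the figures quoted in the post at face value, the gap can be worked out directly. The sketch below only does the arithmetic; the brain and chip numbers are the post's rough estimates, not measurements, and the variable names are illustrative.

```python
# Figures as quoted in the post (rough estimates).
brain_ops_per_sec = 1e18   # ~1 exaFLOP
brain_watts = 20
chip_ops_per_sec = 1e15    # ~1 petaFLOP
chip_watts = 700

# Raw throughput gap: how many chips match one brain in operations per second.
throughput_gap = brain_ops_per_sec / chip_ops_per_sec

# Efficiency gap: operations per second per watt, brain versus chip.
brain_ops_per_watt = brain_ops_per_sec / brain_watts
chip_ops_per_watt = chip_ops_per_sec / chip_watts
efficiency_gap = brain_ops_per_watt / chip_ops_per_watt

print(f"throughput gap: {throughput_gap:,.0f}x")
print(f"efficiency gap: {efficiency_gap:,.0f}x")
```

On these numbers the 1,000x figure describes raw throughput; once the 35x difference in power draw is included, the per-watt gap is about 35,000x.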

AI retweeted

What the internet felt like before algorithmic curation

buff.ly/pODHTno

#AI

Cc @SpirosMargaris @JoannMoretti @DeepLearn007 @chidambara09 @PawlowskiMario @jblefevre60 @nincoroby

AI retweeted

Building an Agentic #AI Pipeline for #ESG Reporting

buff.ly/fDYhd4A via @AnalyticsVidhya

#GenAI

Cc @DeepLearn007 @HaroldSinnott @YvesMulkers @VanRijmenam @sandy_carter @KirkDBorne @terence_mills

AI retweeted

Automating MFA Testing with Playwright Storage State

buff.ly/lqEAcm5 via @sogetilabs

#AI

Cc @NewsNeus @sallyeaves @DeepLearn007 @jblefevre60 @BetaMoroney @RLDI_Lamy

AI retweeted

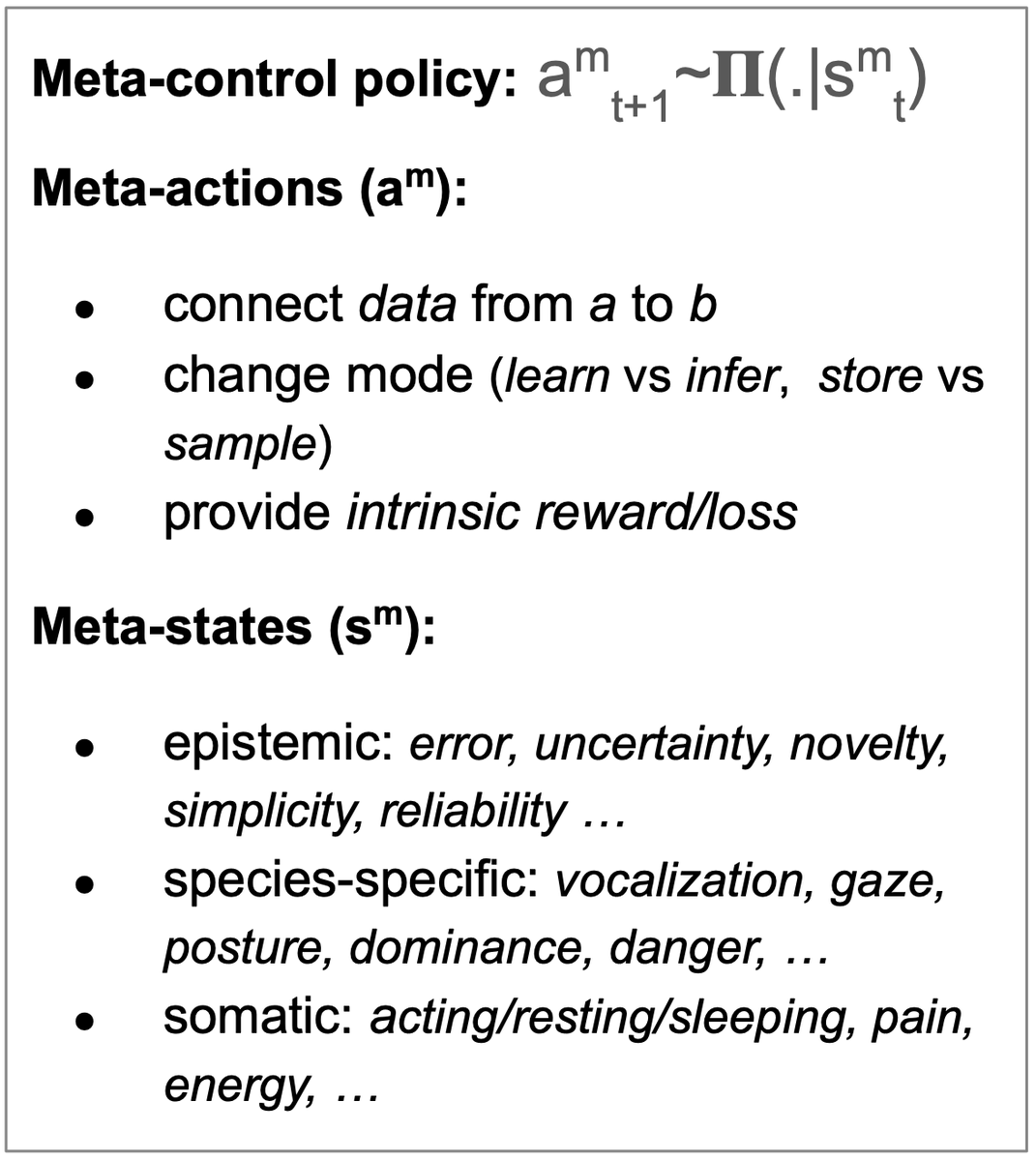

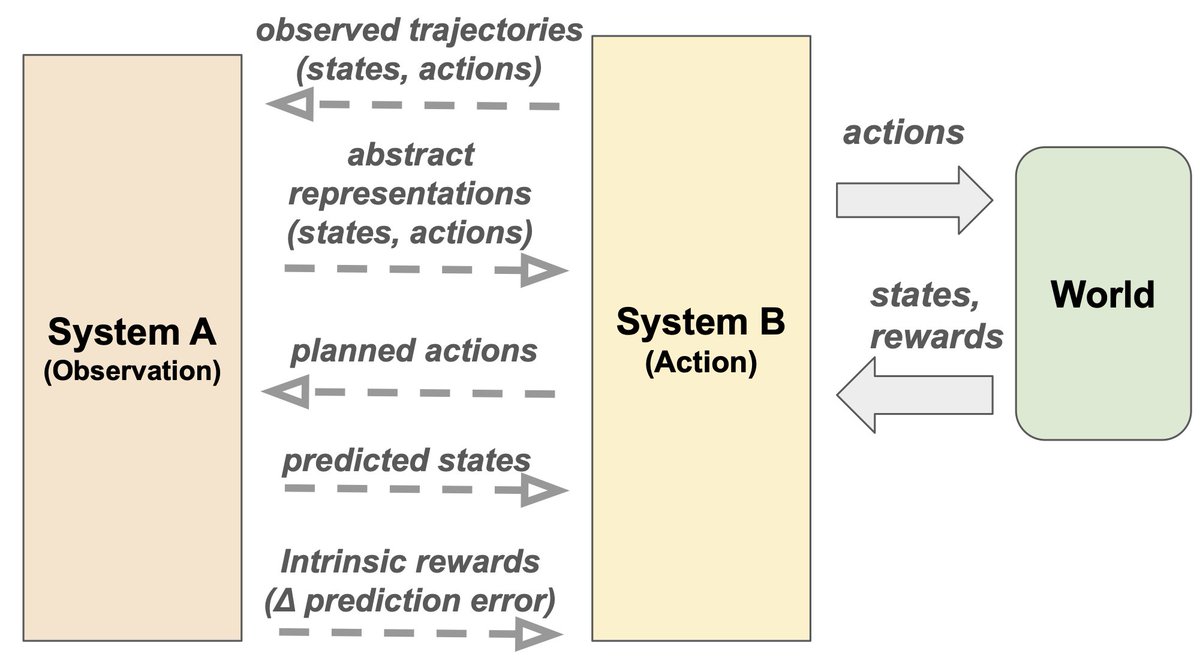

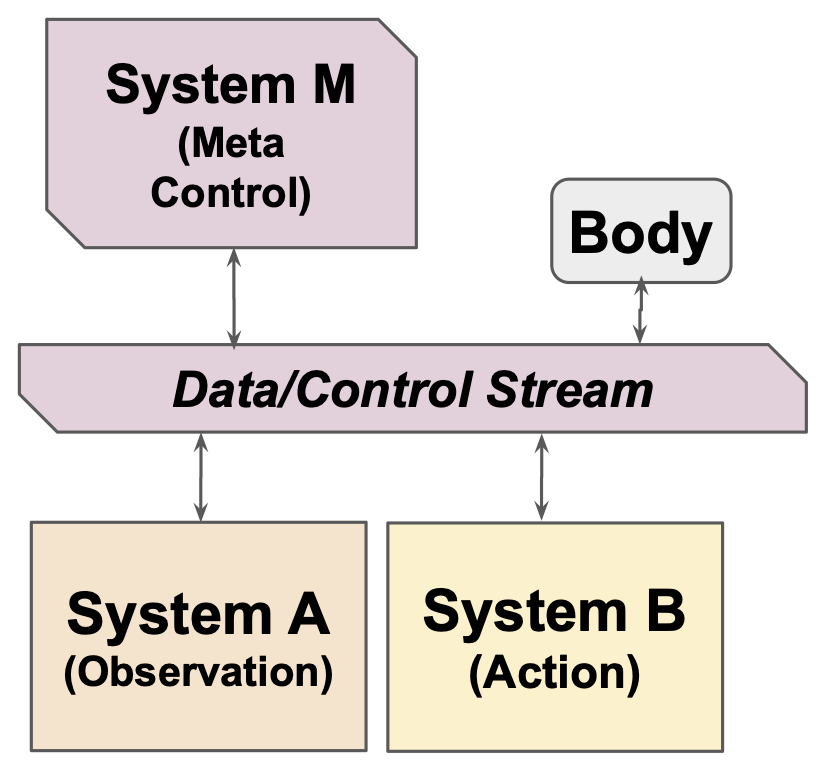

Why #AI systems don't learn & what to do about it: Lessons on autonomous learning from cognitive science

arxiv.org/abs/2603.15381

by Emmanuel Dupoux, @ylecun & @JitendraMalikCV

#MachineLearning

Cc @jblefevre60 @Ym78200 @ahier @aure79lien @DeepLearn007 @EvanKirstel @gvalan @AkwyZ

AI retweeted

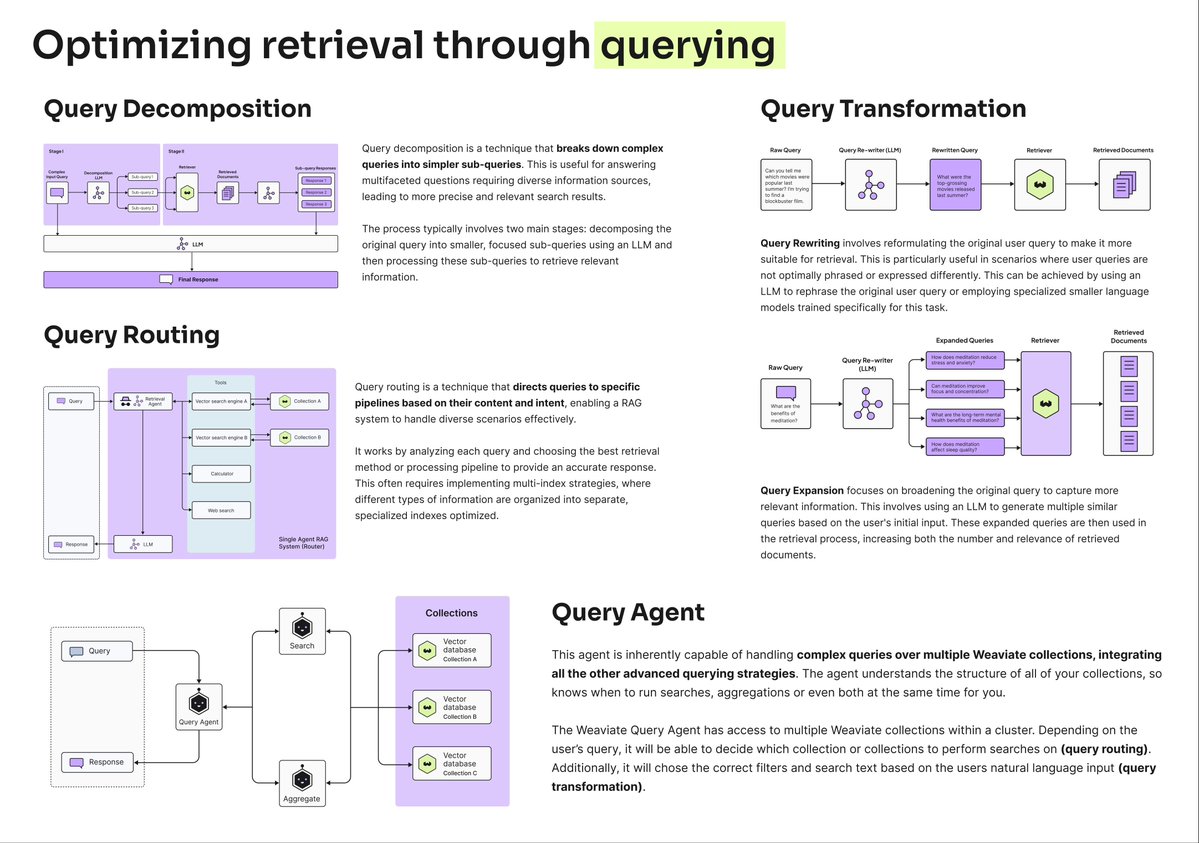

Is your RAG pipeline failing because of your data, or because of your queries?

Most developers optimize their vector databases.

But smart developers optimize their queries first.

These 4 techniques optimize your queries before they hit your vector database:

𝟭. 𝗤𝘂𝗲𝗿𝘆 𝗗𝗲𝗰𝗼𝗺𝗽𝗼𝘀𝗶𝘁𝗶𝗼𝗻

Query decomposition breaks down complex questions into smaller, manageable pieces.

So instead of asking "How do I build an agentic RAG system that handles multi-step reasoning?", decompose it into:

- "What are the core components of agentic RAG?"

- "How do agents handle multi-step reasoning chains?"

- "What are the best tools for coordinating AI agents and vector search?"

This technique enables agents to approach tasks systematically, thereby improving the accuracy and reliability of LLM responses.
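As a rough illustration of decomposition, the sketch below asks a language model for sub-questions and falls back to the original question if nothing usable comes back. The prompt wording, `decompose_query`, and the stand-in `fake_llm` are all hypothetical; in practice `ask_llm` would wrap whichever model client you already use.

```python
from typing import Callable, List

# Hypothetical prompt; tune the wording for your own model.
DECOMPOSE_PROMPT = (
    "Break the question below into at most {n} self-contained sub-questions, "
    "one per line, with no numbering.\n\nQuestion: {question}"
)


def decompose_query(question: str, ask_llm: Callable[[str], str], max_parts: int = 3) -> List[str]:
    """Split a complex question into sub-questions using a caller-supplied LLM."""
    raw = ask_llm(DECOMPOSE_PROMPT.format(n=max_parts, question=question))
    # Drop bullet markers and surrounding whitespace, skip empty lines.
    parts = [line.strip(" -\t") for line in raw.splitlines()]
    parts = [p for p in parts if p]
    # Fall back to the original question if the model returned nothing usable.
    return parts[:max_parts] or [question]


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call so the example runs offline."""
    return (
        "- What are the core components of agentic RAG?\n"
        "- How do agents handle multi-step reasoning chains?\n"
        "- What are the best tools for coordinating AI agents and vector search?\n"
    )


sub_questions = decompose_query(
    "How do I build an agentic RAG system that handles multi-step reasoning?",
    fake_llm,
)
```

Each sub-question can then be retrieved against separately and the results merged before generation.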

𝟮. 𝗤𝘂𝗲𝗿𝘆 𝗥𝗼𝘂𝘁𝗶𝗻𝗴

Direct queries to the most appropriate data source or index.

Legal question? → Route it to your legal documents.

Technical question? → Send it to your engineering docs.

This targeted approach dramatically improves relevance.
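A minimal routing sketch, assuming simple keyword overlap is enough to pick an index. The index names, keyword sets, and `route_query` are made up for illustration; production routers often use a classifier or an LLM instead.

```python
import re

# Hypothetical indexes and the keywords that suggest each one.
ROUTES = {
    "legal_docs": {"contract", "liability", "compliance", "gdpr", "license"},
    "engineering_docs": {"api", "deploy", "latency", "bug", "stack", "trace"},
}
DEFAULT_INDEX = "general_docs"


def route_query(query: str) -> str:
    """Return the index whose keywords overlap most with the query."""
    words = set(re.findall(r"[a-z0-9]+", query.lower()))
    scores = {index: len(words & keywords) for index, keywords in ROUTES.items()}
    best = max(scores, key=scores.get)
    # With no keyword hits at all, use the catch-all index.
    return best if scores[best] > 0 else DEFAULT_INDEX


print(route_query("Is this contract GDPR compliant?"))
print(route_query("Why is API latency high after deploy?"))
print(route_query("hello there"))
```

The fallback index matters: a router that always forces a choice will send unrelated queries to the wrong collection.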

𝟯. 𝗤𝘂𝗲𝗿𝘆 𝗧𝗿𝗮𝗻𝘀𝗳𝗼𝗿𝗺𝗮𝘁𝗶𝗼𝗻

Rewrite queries to better match your data structure.

Transform "latest updates" → "recent changes 2025", or expand acronyms automatically.

This bridges the gap between how users ask questions and how information is stored.
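The two rewrites mentioned above can be sketched with plain string substitution. The acronym table and the `transform_query` helper are hypothetical, and real systems frequently use an LLM for this step; the point is only that the rewrite happens before the query reaches the vector database.

```python
import re
from datetime import date
from typing import Optional

# Hypothetical acronym table; extend it with your own domain terms.
ACRONYMS = {
    "RAG": "retrieval-augmented generation",
    "LLM": "large language model",
}
ACRONYM_PATTERN = re.compile(r"\b(" + "|".join(map(re.escape, ACRONYMS)) + r")\b")


def transform_query(query: str, today: Optional[date] = None) -> str:
    """Rewrite a user query so it better matches how documents are phrased."""
    today = today or date.today()
    # Anchor a vague time reference to a concrete year.
    query = re.sub(
        r"\blatest updates\b",
        f"recent changes {today.year}",
        query,
        flags=re.IGNORECASE,
    )
    # Append the expansion after each known acronym.
    return ACRONYM_PATTERN.sub(lambda m: f"{m.group(0)} ({ACRONYMS[m.group(0)]})", query)


rewritten = transform_query("latest updates on RAG", today=date(2025, 1, 1))
print(rewritten)
```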

𝟰. 𝗤𝘂𝗲𝗿𝘆 𝗔𝗴𝗲𝗻𝘁

Query agents are the most advanced approach, using AI agents to intelligently handle the entire query processing pipeline. The agent can reformulate the query, choose the right search type and filters, and decide which data collections to search.

Query optimization happens before retrieval, addressing the root cause of poor results rather than trying to compensate for them downstream.

Dive deeper in this free RAG ebook: weaviate.io/ebooks/advance…

Learn more about the query agent: docs.weaviate.io/agents/query?u…

AI retweeted

AI memory systems don't fail because they forget.

They actually fail because they remember everything.

If you've ever used an agent that repeats your own preferences back to you, or worse, ignores them, you’ve hit the ‘𝗹𝗶𝗺𝗶𝘁𝗲𝗱 𝗹𝗼𝗼𝗽.’ Each interaction is treated as disposable.

No continuity between sessions. No growth.

For a chatbot, that's annoying. For an autonomous agent, it’s catastrophic.

Memory isn't something you simply store and retrieve. It's something you actively maintain.

Imagine a developer-facing agent that recommends a specific library version early in a project. Months later, the tooling has changed, but the old guidance is still in memory. The agent confidently suggests outdated instructions, leaving users worse off than if they hadn't asked at all.

So to move from simple storage to intelligence, your memory system needs:

- 𝗪𝗿𝗶𝘁𝗲 𝗰𝗼𝗻𝘁𝗿𝗼𝗹: Deciding what to store and at what confidence level

- 𝗗𝗲𝗱𝘂𝗽𝗹𝗶𝗰𝗮𝘁𝗶𝗼𝗻: Collapsing repeated information into canonical facts

- 𝗥𝗲𝗰𝗼𝗻𝗰𝗶𝗹𝗶𝗮𝘁𝗶𝗼𝗻: Handling contradictions as reality changes

- 𝗔𝗺𝗲𝗻𝗱𝗺𝗲𝗻𝘁: Correcting wrong facts rather than appending newer versions

- 𝗣𝘂𝗿𝗽𝗼𝘀𝗲𝗳𝘂𝗹 𝗳𝗼𝗿𝗴𝗲𝘁𝘁𝗶𝗻𝗴: Allowing temporary information to naturally fade

Without active maintenance, memory becomes an ever-growing pile of notes - some useful, some stale, some flat-out wrong.
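The five requirements above can be sketched as a small in-memory store. This is not Weaviate's design; `MemoryStore`, `Fact`, the confidence threshold, and the "newer claim wins unless it is less confident" policy are assumptions chosen to keep the example short.

```python
import time
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Fact:
    value: str
    confidence: float
    updated_at: float
    expires_at: Optional[float] = None  # None means the fact does not expire


class MemoryStore:
    """Toy memory layer: one canonical fact per key, actively maintained."""

    def __init__(self, min_confidence: float = 0.5):
        self.min_confidence = min_confidence
        self.facts: Dict[str, Fact] = {}

    def write(self, key, value, confidence, ttl_seconds=None, now=None) -> bool:
        now = time.time() if now is None else now
        if confidence < self.min_confidence:
            return False  # write control: refuse low-confidence claims
        key = key.strip().lower()
        self.read(key, now=now)  # purge the entry first if it has expired
        existing = self.facts.get(key)
        expires_at = None if ttl_seconds is None else now + ttl_seconds
        if existing and existing.value == value:
            # Deduplication: refresh the canonical fact instead of appending.
            existing.confidence = max(existing.confidence, confidence)
            existing.updated_at = now
            existing.expires_at = expires_at
            return True
        if existing and confidence < existing.confidence:
            return False  # reconciliation: keep the better-supported claim
        # Amendment: overwrite in place rather than keeping both versions.
        self.facts[key] = Fact(value, confidence, now, expires_at)
        return True

    def read(self, key, now=None) -> Optional[str]:
        now = time.time() if now is None else now
        key = key.strip().lower()
        fact = self.facts.get(key)
        if fact is None:
            return None
        if fact.expires_at is not None and now >= fact.expires_at:
            del self.facts[key]  # purposeful forgetting: temporary facts fade
            return None
        return fact.value


store = MemoryStore()
store.write("preferred_lib", "libfoo 1.x", confidence=0.8, now=0)
store.write("preferred_lib", "libfoo 2.x", confidence=0.9, now=10)  # amends, does not append
store.write("current_task", "fix login bug", confidence=0.9, ttl_seconds=60, now=0)
```

In the stale-library scenario from the post, the second write replaces the old recommendation, so a later read returns only the current guidance.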

Once your system relies on memory for continual learning and adaptation, it stops behaving like a feature and starts behaving like infrastructure - requiring the same durability, isolation, and governance guarantees as your storage layer.

At Weaviate, we're building memory from the ground up as a first-class data problem.

Read the full deep-dive:

weaviate.io/blog/limit-in-…

And if you're interested in where memory is heading at Weaviate, sign up for a preview 🧡

AI retweeted

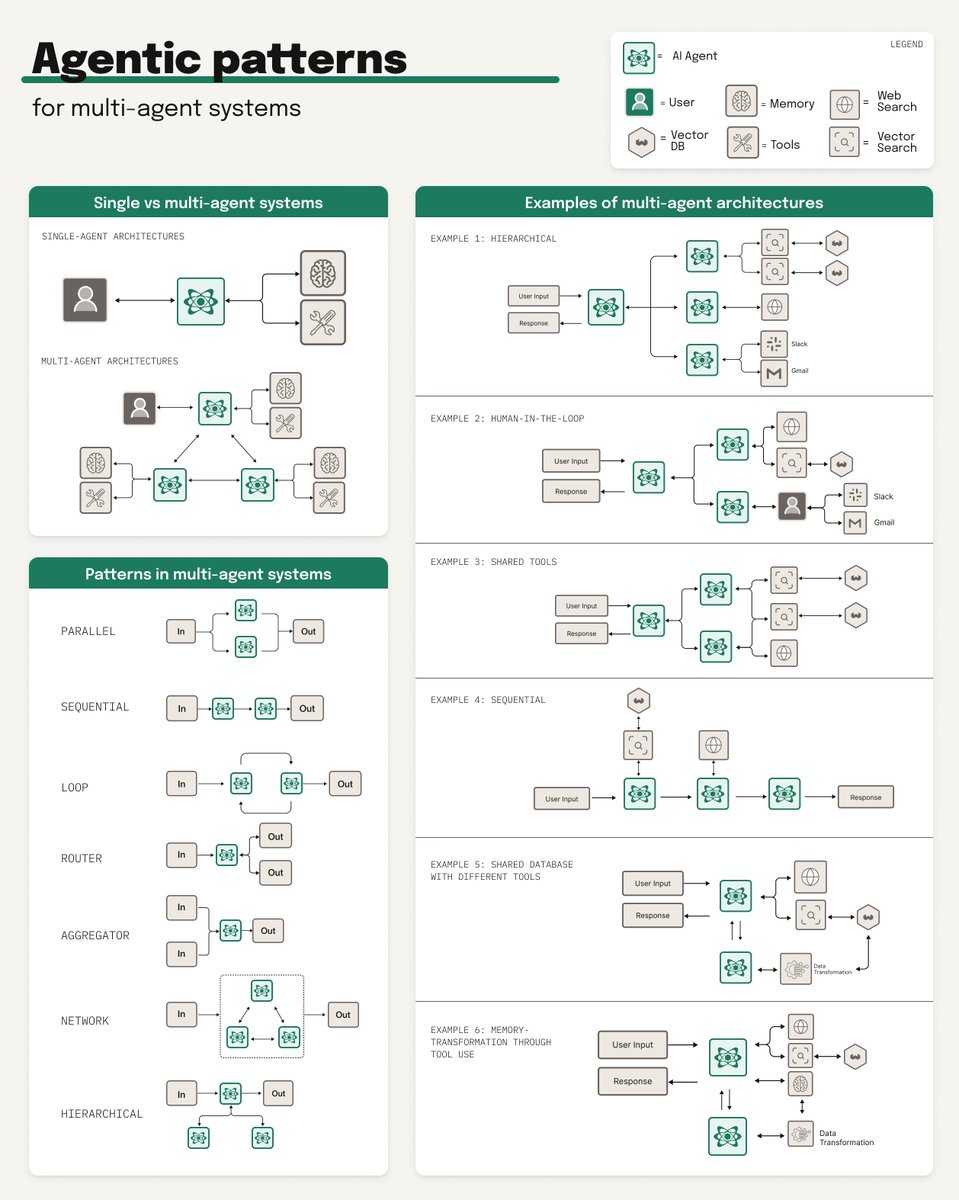

Not all 𝗺𝘂𝗹𝘁𝗶-𝗮𝗴𝗲𝗻𝘁 𝗮𝗿𝗰𝗵𝗶𝘁𝗲𝗰𝘁𝘂𝗿𝗲𝘀 are created equal.

Here are six patterns that actually work in production:

1️⃣ 𝗛𝗶𝗲𝗿𝗮𝗿𝗰𝗵𝗶𝗰𝗮𝗹

One top-level agent coordinates multiple specialized sub-agents. The coordinator analyzes the query and routes it to the right specialists - one might handle proprietary internal data, another personal accounts (email, chat), another public web searches, then synthesizes the results into a coherent answer.

𝘞𝘩𝘦𝘯 𝘵𝘰 𝘶𝘴𝘦: When you need to query across different data sources that require different patterns or strategies.

2️⃣ 𝗛𝘂𝗺𝗮𝗻 𝗶𝗻 𝘁𝗵𝗲 𝗟𝗼𝗼𝗽

Critical decisions get routed to humans for approval before execution. The workflow pauses, a human validates or modifies the proposed action, then the agent continues.

𝘞𝘩𝘦𝘯 𝘵𝘰 𝘶𝘴𝘦: High-stakes decisions, regulated environments, or anywhere you need accountability and oversight.

3️⃣ 𝗦𝗵𝗮𝗿𝗲𝗱 𝗧𝗼𝗼𝗹𝘀

Each agent has its own role and focus, but they can all call the same APIs, databases, or search functions - same tools, different tasks.

𝘞𝘩𝘦𝘯 𝘵𝘰 𝘶𝘴𝘦: When the tools are general-purpose but the 𝘳𝘦𝘢𝘴𝘰𝘯𝘪𝘯𝘨 about how to use them needs to be specialized.

4️⃣ 𝗦𝗲𝗾𝘂𝗲𝗻𝘁𝗶𝗮𝗹

Agents work in a pipeline, where the output of one agent becomes the input for the next. Agent 1 retrieves documents → Agent 2 filters and ranks → Agent 3 synthesizes the final answer.

𝘞𝘩𝘦𝘯 𝘵𝘰 𝘶𝘴𝘦: When your workflow has clear stages where you need specialized expertise at each step.

5️⃣ 𝗦𝗵𝗮𝗿𝗲𝗱 𝗗𝗮𝘁𝗮𝗯𝗮𝘀𝗲 𝘄𝗶𝘁𝗵 𝗗𝗶𝗳𝗳𝗲𝗿𝗲𝗻𝘁 𝗧𝗼𝗼𝗹𝘀

All agents access the same underlying database (like a vector store), but each has different specialized tools for 𝘸𝘩𝘢𝘵 they do with that data. One agent might have tools for semantic search, another for data transformation.

𝘞𝘩𝘦𝘯 𝘵𝘰 𝘶𝘴𝘦: When you have a centralized knowledge base but need different types of operations performed on it.

6️⃣ 𝗠𝗲𝗺𝗼𝗿𝘆 𝗧𝗿𝗮𝗻𝘀𝗳𝗼𝗿𝗺𝗮𝘁𝗶𝗼𝗻

Agents modify data in place within the database. This allows them to not just retrieve but actively maintain and update the knowledge base.

𝘞𝘩𝘦𝘯 𝘵𝘰 𝘶𝘴𝘦: When your data needs continuous enrichment, cleanup, or transformation as part of the agentic workflow.

The reality is that most production systems use 𝗵𝘆𝗯𝗿𝗶𝗱 𝗮𝗽𝗽𝗿𝗼𝗮𝗰𝗵𝗲𝘀 combining multiple patterns. You might have a hierarchical coordinator that routes to sequential pipelines, with human-in-the-loop gates at critical decision points, all working with a shared database.
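A hybrid of this kind can be sketched by treating each agent as a function from text to text and composing them. The helper names (`sequential`, `with_human_gate`, `coordinator`) and the stub agents are hypothetical; real agents would call models and tools, and the approval step would wait on an actual person.

```python
from typing import Callable, Dict

Agent = Callable[[str], str]


def sequential(*stages: Agent) -> Agent:
    """Pipeline pattern: each stage's output becomes the next stage's input."""
    def run(payload: str) -> str:
        for stage in stages:
            payload = stage(payload)
        return payload
    return run


def with_human_gate(agent: Agent, approve: Callable[[str], bool]) -> Agent:
    """Human-in-the-loop pattern: the proposal only goes through if approved."""
    def run(payload: str) -> str:
        proposal = agent(payload)
        return proposal if approve(proposal) else "REJECTED: " + proposal
    return run


def coordinator(routes: Dict[str, Agent], classify: Callable[[str], str]) -> Agent:
    """Hierarchical pattern: a top-level agent picks the specialist."""
    def run(query: str) -> str:
        return routes[classify(query)](query)
    return run


# Stub specialists standing in for real retrieval, ranking, and synthesis agents.
research = sequential(
    lambda q: q + " -> docs",
    lambda d: d + " -> ranked",
    lambda r: r + " -> answer",
)
# A reviewer who rejects everything, to show the gate taking effect.
payments = with_human_gate(lambda q: "refund $500", approve=lambda proposal: False)

system = coordinator(
    {"research": research, "payments": payments},
    classify=lambda q: "payments" if "refund" in q.lower() else "research",
)

print(system("What is RAG?"))
print(system("Please refund my order"))
```

Because every pattern has the same `Agent` shape, the pieces nest freely: a gated agent can sit inside a pipeline, and a pipeline can be one of the coordinator's routes.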

Learn more about building multi-agent systems in our ebook: weaviate.io/ebooks/agentic…

Or check out @weaviate_io Agent Skills to start building: weaviate.io/blog/weaviate-…
