Kole Sam

6.7K posts

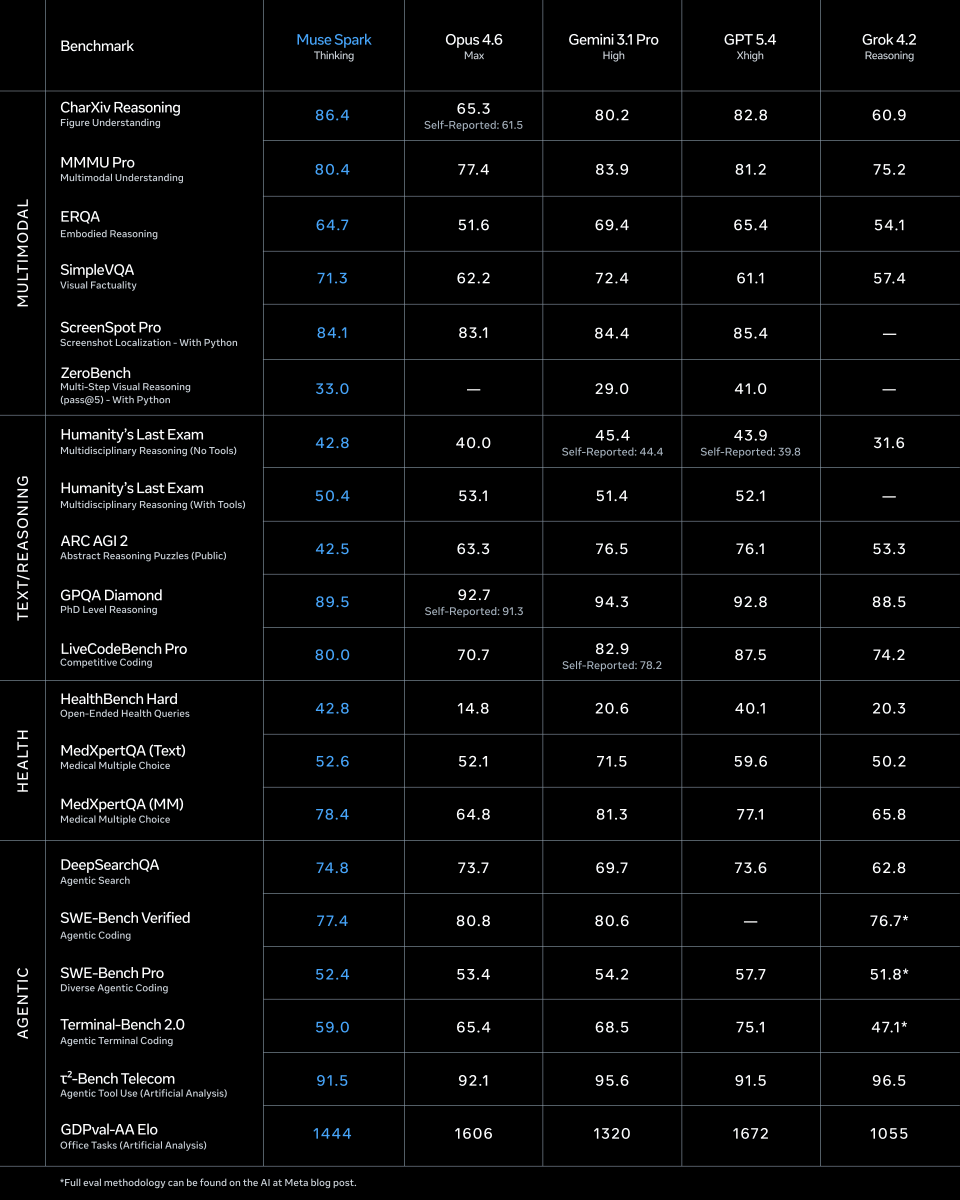

1/ today we're releasing muse spark, the first model from MSL. nine months ago we rebuilt our ai stack from scratch. new infrastructure, new architecture, new data pipelines. muse spark is the result of that work, and now it powers meta ai. 🧵

“Caveman Claude” is the most underrated AI tip right now. What is it? Humans tend to add fluff to what really needs to be said: 90% of the meaning we wanna convey can be communicated in about 20% of the words we actually say. The meaning of the prompt doesn't change, but it uses WAY fewer tokens.

Introducing Gemini 3.1 Flash Live, our new realtime model to build voice and vision agents!! We have spent more than a year improving the model + infra + experience. The results? A step function improvement in quality, reliability, and latency.

We’re saying goodbye to the Sora app. To everyone who created with Sora, shared it, and built community around it: thank you. What you made with Sora mattered, and we know this news is disappointing. We’ll share more soon, including timelines for the app and API and details on preserving your work. – The Sora Team

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

🚨 JUST IN: First Lady Melania Trump just WALKED OUT with an American-built humanoid robot at the White House for a Fostering the Future AI education event. Classiest first lady ever 🇺🇸

Claude is helping me organize my whole music career and other businesses in days ... and it's moving my business forward at a high rate! Some tech youngbull I met on LinkedIn gave me an incredible template! Who else can help me with Claude?

We're shipping a new feature in Claude Cowork as a research preview that I'm excited about: Dispatch! One persistent conversation with Claude that runs on your computer. Message it from your phone. Come back to finished work. To try it out, download Claude Desktop, then pair your phone.

We’re introducing GPT-5.4 mini and nano, our most capable small models yet. GPT-5.4 mini is more than 2x faster than GPT-5 mini. Optimized for coding, computer use, multimodal understanding, and subagents. For lighter-weight tasks, GPT-5.4 nano is our smallest and cheapest version of GPT-5.4. openai.com/index/introduc…

"Every software company in the world needs to have an @openclaw strategy" - Jensen at @NVIDIAAI GTC. Framing OpenClaw as one of the most important open source releases ever, they have announced NemoClaw, a reference platform for enterprise-grade secure OpenClaw, with OpenShell, network boundaries, and security baked in.