Dr Vincent Sativa

@PhantomByteAI

We write articles and build cool stuff for individuals & small businesses. AI tools, bots, lead gen systems, and scripts. Code from the Shadows. 👻

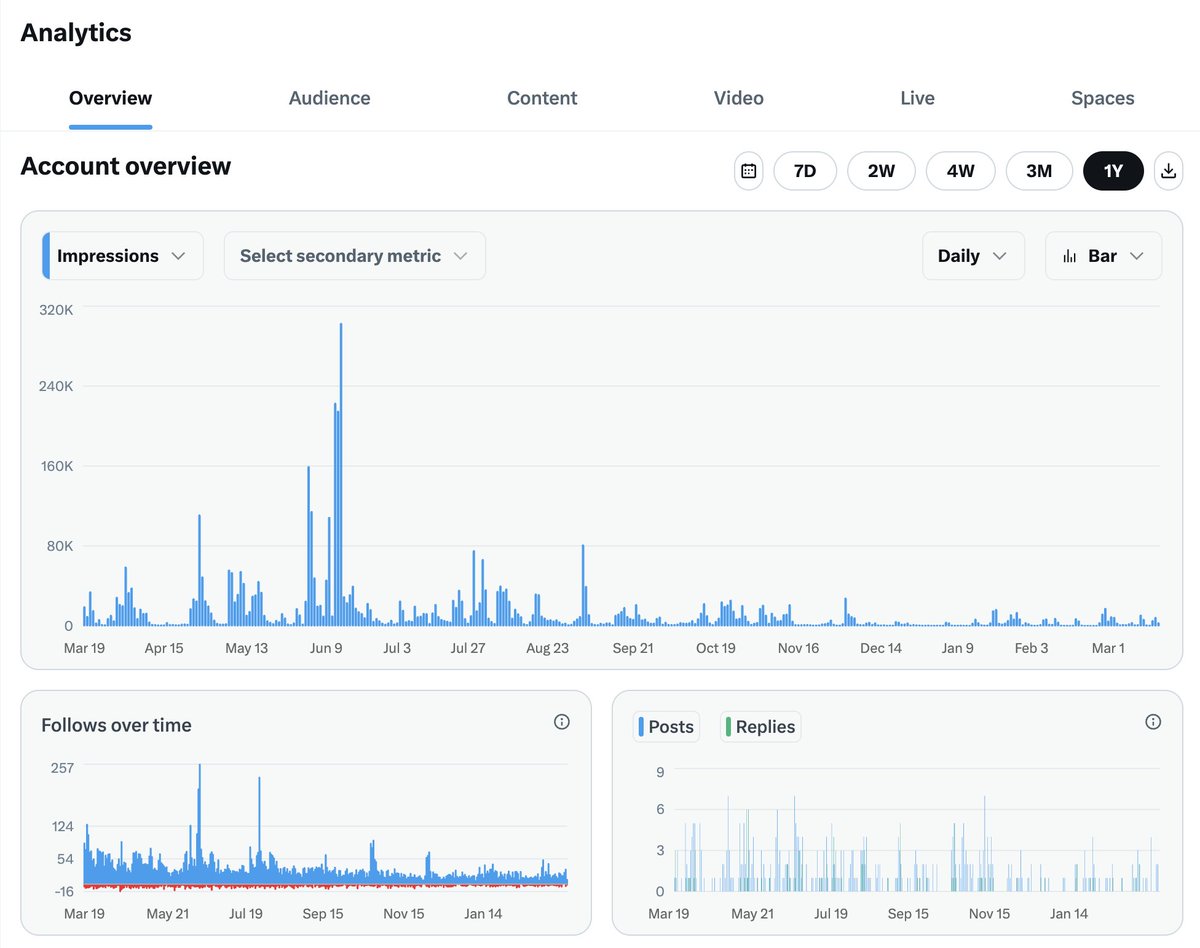

IF YOU'RE ON OPENCLAW, DO THIS NOW:

I just sped up my OpenClaw by 95% with a single prompt.

Over the past week my claw has been unbelievably slow. Turns out the output of EVERY cron job gets loaded into context. That's months of cron outputs sent with every message.

Do this prompt now:

"Check how many session files are in ~/.openclaw/agents/main/sessions/ and how big sessions.json is. If there are thousands of old cron session files bloating it, delete all the old .jsonl files except the main session, then rebuild sessions.json to only reference sessions that still exist on disk."

This will delete all the session data around your cron outputs. If you run a ton of cron jobs, this is a tremendous amount of bloat that does not need to be loaded into context and is MAJORLY slowing down your OpenClaw.

If for some reason you want to keep some of this cron session data in memory, then don't have your OpenClaw delete ALL of them. But for me, all the outputs automatically save to a Convex database anyway, so there was no reason to keep it all in context.

Instantly sped up my OpenClaw from unusable to lightning quick.
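If you'd rather look before you let the agent delete anything, here's a rough Python sketch of the first half of that prompt: count the .jsonl files, check how big sessions.json is, and list what would be removed. The directory comes from the prompt; the function names and the `main.jsonl` keep-name are made up for illustration, so swap in your real main session file. It doesn't rebuild sessions.json, since the layout of that file isn't something covered here.

```python
from pathlib import Path

# Directory taken from the prompt; everything else here is illustrative.
SESSIONS_DIR = Path.home() / ".openclaw" / "agents" / "main" / "sessions"


def audit_sessions(sessions_dir: Path) -> dict:
    """Count .jsonl session files and report the size of sessions.json."""
    jsonl_files = sorted(sessions_dir.glob("*.jsonl"))
    index = sessions_dir / "sessions.json"
    return {
        "jsonl_count": len(jsonl_files),
        "jsonl_bytes": sum(f.stat().st_size for f in jsonl_files),
        "index_bytes": index.stat().st_size if index.exists() else 0,
    }


def prune_sessions(sessions_dir: Path, keep: set[str], dry_run: bool = True) -> list[Path]:
    """Return the .jsonl files not named in `keep`; delete them only when dry_run is False."""
    targets = [f for f in sorted(sessions_dir.glob("*.jsonl")) if f.name not in keep]
    if not dry_run:
        for f in targets:
            f.unlink()
    return targets


if __name__ == "__main__":
    if SESSIONS_DIR.is_dir():
        print(audit_sessions(SESSIONS_DIR))
        # Hypothetical file name: replace with your actual main session file.
        for f in prune_sessions(SESSIONS_DIR, keep={"main.jsonl"}, dry_run=True):
            print("would delete:", f.name)
    else:
        print("no sessions directory at", SESSIONS_DIR)
```

It defaults to a dry run, so nothing is deleted until you pass `dry_run=False`. Back up the folder first if you're not sure which file is your main session.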