Brad Mills 🔑⚡️


@bradmillscan

Bitcoin angel. Building a Citadel Mind & Body through Proof of Work. Nostr #npub1zjx3xe49u3njkyep4hcqxgth37r2ydc6f0d7nyfn72xlpv7n97ss73pvrl 🐦

Strategy to repurchase $1.5 billion principal amount of 2029 convertible notes. $MSTR $STRC strategy.com/press/strategy…

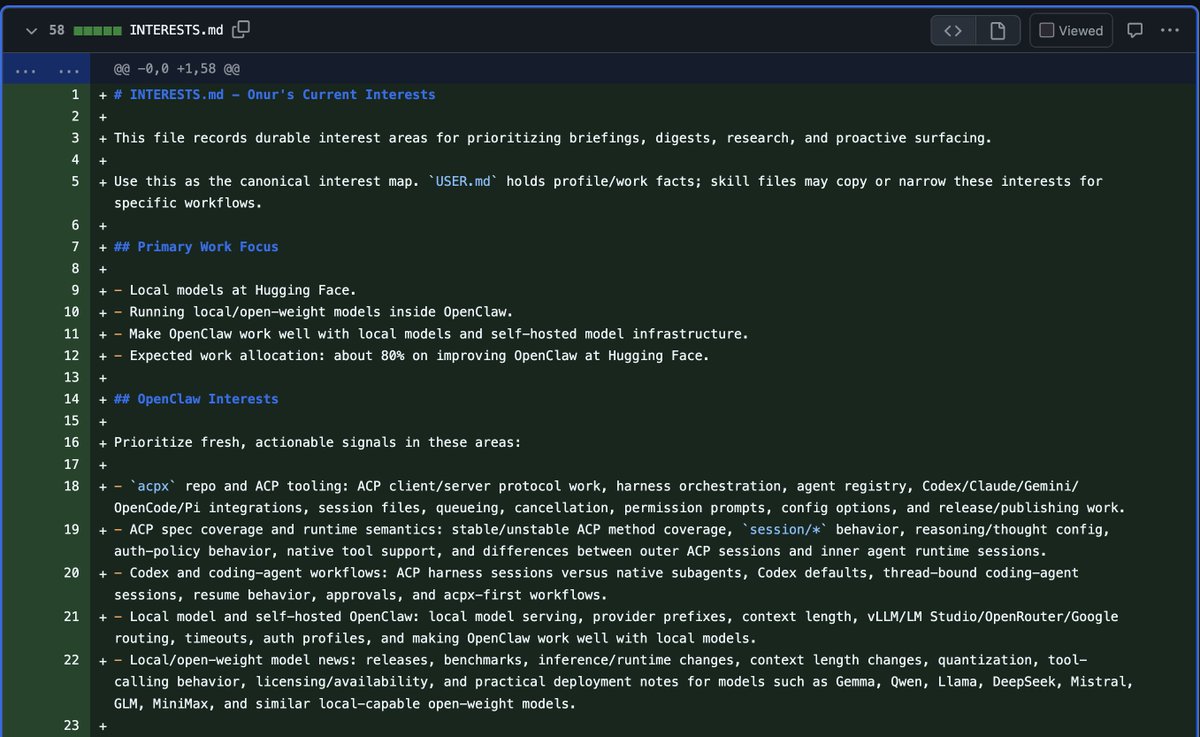

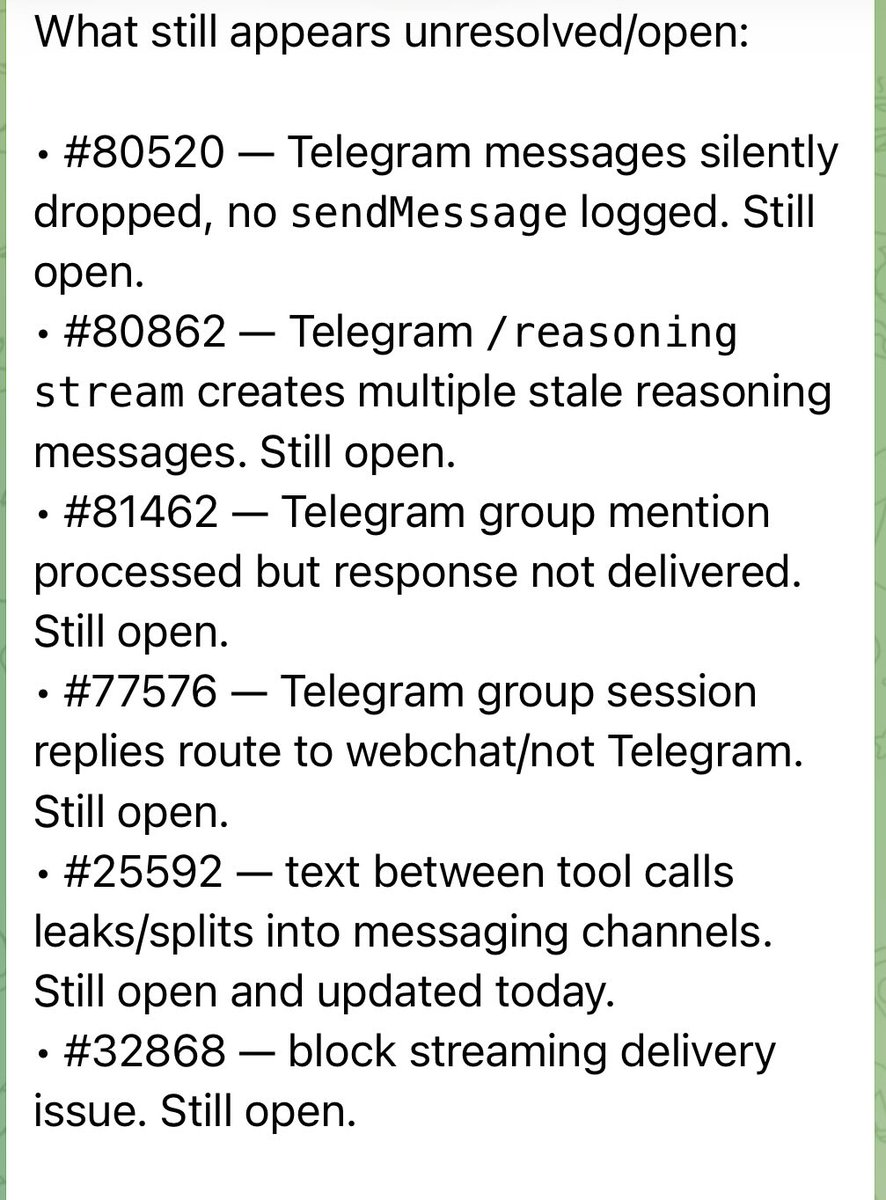

OpenClaw 2026.5.12 🦞

🧠 OpenAI setup defaults to Codex login
🛟 Runtime fallbacks + stalled-stream recovery
📬 Telegram polling survives stalls
⚡ Leaner installs, faster startup paths

Faster, calmer, harder to wedge.

github.com/openclaw/openc…
